Clear Sky Science

Scientific Reports: Multi-relational dual-attention graph transformer for fine-grained sentiment analysis

Why tiny clues in reviews matter

Online reviews are full of mixed feelings: a restaurant might have “great food but slow service,” or a phone could have a “beautiful screen yet terrible battery life.” Companies and researchers want computers to understand this kind of detailed opinion, not just whether a whole review is positive or negative. This paper introduces a new artificial intelligence model that zooms in on specific parts of a sentence—like “service” or “battery life”—and figures out exactly how people feel about each one, even when the clues are scattered and subtle.

Looking past one-size-fits-all sentiment

Traditional sentiment analysis treats a sentence or review as a single blob of text and decides whether it is positive or negative overall. That works for simple comments, but it fails when people praise one aspect and criticize another in the same breath. The field of Aspect-Based Sentiment Analysis tackles this by asking: what is the sentiment toward each specific target, such as “service,” “environment,” or “staff” in a restaurant review? Earlier methods relied on hand-crafted rules or simple machine-learning models that counted words; later work moved to neural networks that read text in sequence, word by word from left to right. These sequential models improved accuracy but still missed long-distance links and subtle signals, especially when the words that matter are far apart or joined by contrast words like “but” and “although.”

Turning sentences into connected maps

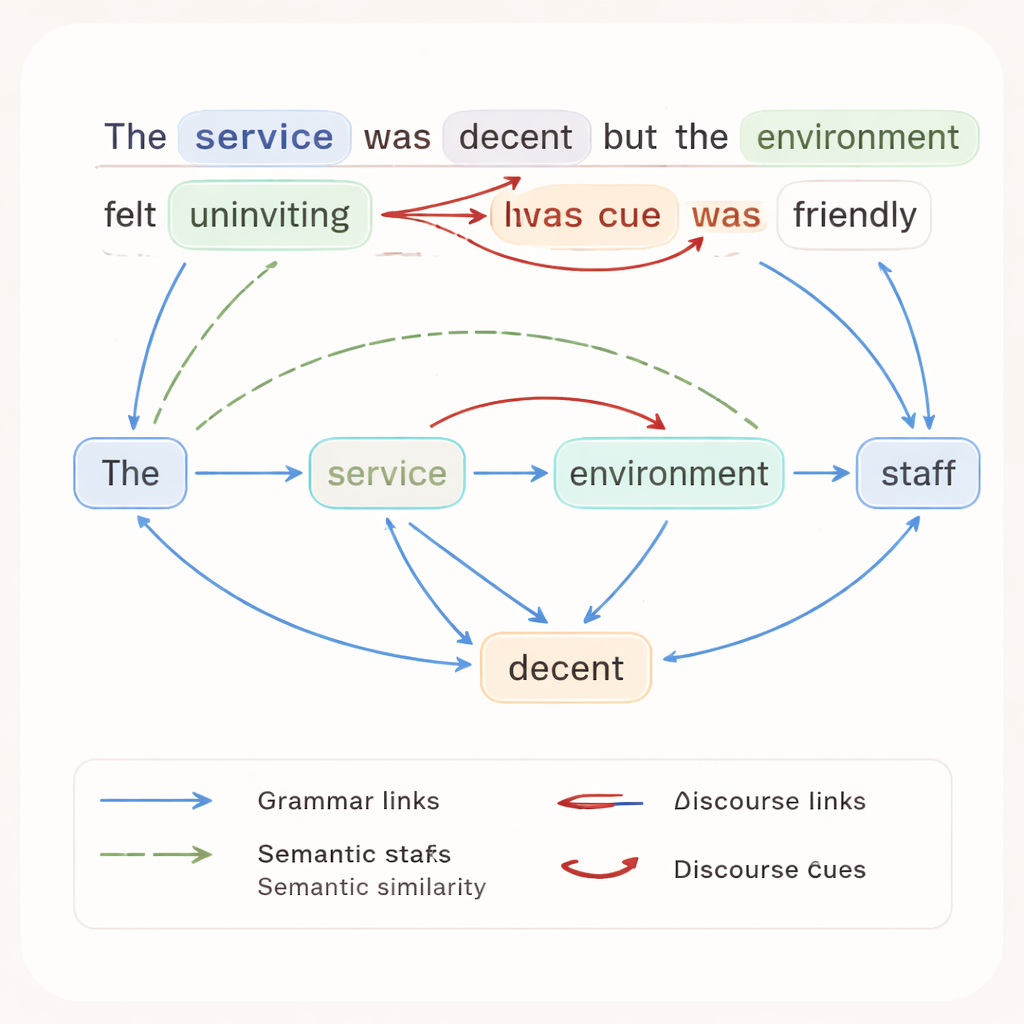

The authors argue that to really understand fine-grained opinions, a computer should see a sentence as a network rather than a straight line. In their approach, each word becomes a node in a graph, and different types of relationships become edges. One set of edges captures grammar, such as which word is the subject or object. Another set links words that are similar in meaning, even if they are not next to each other. A third set marks discourse cues—words like “but,” “however,” or “although” that often signal a change in sentiment. In a sentence like “The service was decent but the environment felt uninviting although the staff was friendly,” this graph shows how praise and criticism are woven together around different aspects.
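The graph construction described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the authors' code: the dependency arcs are a simplified parse written by hand rather than the output of a real parser, the “similar meaning” pairs are hand-picked stand-ins for an embedding-similarity test, and the rule that links each discourse marker to every other token is a hypothetical choice. The names `build_graph`, `DISCOURSE_MARKERS`, `ARCS`, and `SIMILAR` are illustrative.

```python
from collections import defaultdict

# Hypothetical contrast-marker lexicon (the three words named in the text).
DISCOURSE_MARKERS = {"but", "however", "although"}


def build_graph(tokens, dependency_arcs, similar_pairs):
    """Return {relation: set of undirected (i, j) edges} over token positions."""
    graph = defaultdict(set)

    def add(relation, i, j):
        if i != j:  # no self-loops
            graph[relation].add((min(i, j), max(i, j)))

    # Syntactic edges from (head, dependent) arcs of a dependency parse.
    for head, dep in dependency_arcs:
        add("syntax", head, dep)
    # Semantic edges between words judged similar in meaning.
    for i, j in similar_pairs:
        add("semantic", i, j)
    # Discourse edges (assumption): each contrast marker touches every other token.
    for i, tok in enumerate(tokens):
        if tok.lower() in DISCOURSE_MARKERS:
            for j in range(len(tokens)):
                add("discourse", i, j)
    return dict(graph)


TOKENS = ("The service was decent but the environment felt uninviting "
          "although the staff was friendly").split()

# Simplified, hand-written dependency arcs (head, dependent); illustrative only.
ARCS = [(1, 0), (3, 1), (3, 2), (8, 4), (6, 5), (8, 6), (8, 7), (3, 8),
        (13, 9), (11, 10), (13, 11), (13, 12), (8, 13)]

# Hand-picked "similar meaning" pairs: service~staff, decent~friendly.
SIMILAR = [(1, 11), (3, 13)]

graph = build_graph(TOKENS, ARCS, SIMILAR)
for relation, edges in sorted(graph.items()):
    print(relation, len(edges))
```

Running it prints `discourse 25`, `semantic 2`, and `syntax 13`: the two markers each reach 13 other tokens but share one edge, and the 13 arcs form a tree over the 14 words. Keeping one edge set per relation is what lets a later attention layer treat grammar, meaning, and contrast links differently.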

A dual spotlight on context and targets

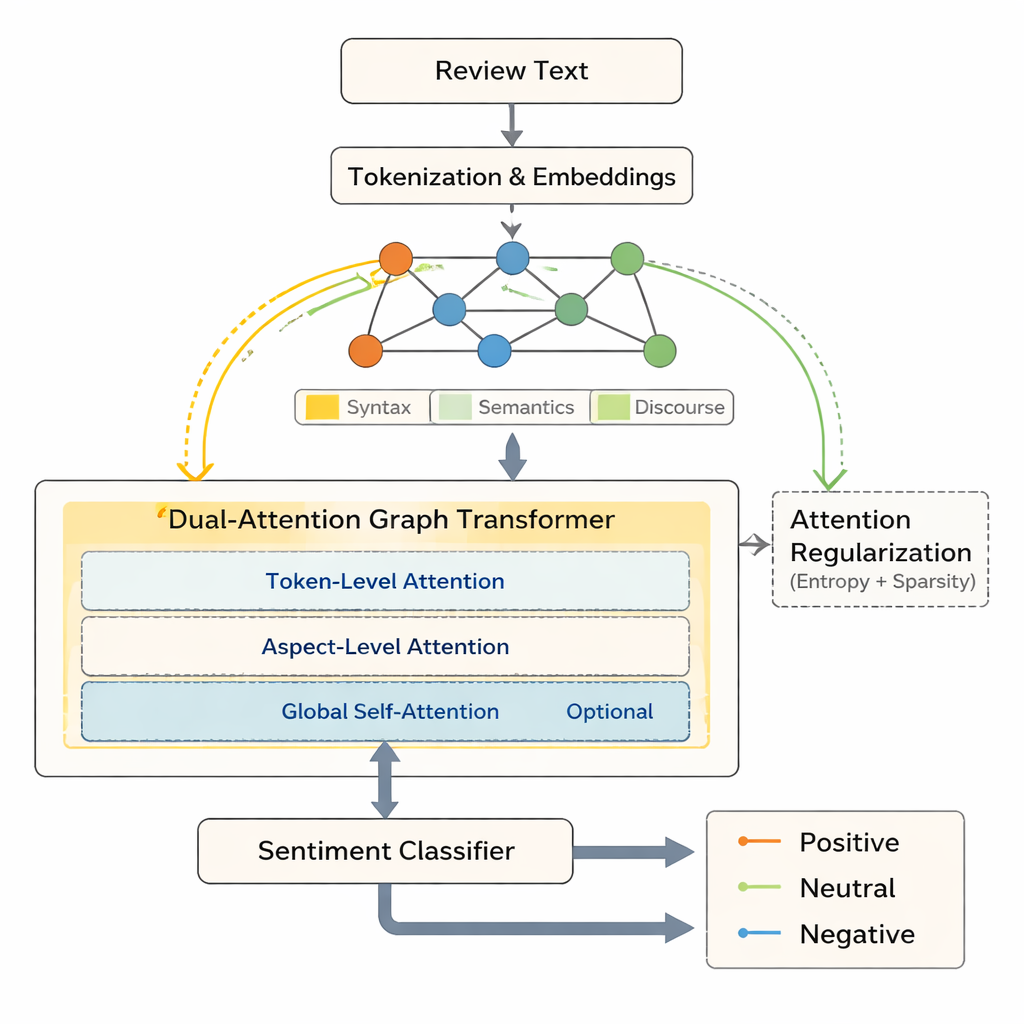

Building on this graph view, the paper presents the Multi-Relational Dual-Attention Graph Transformer (MRDAGT). This model uses a Transformer-style attention mechanism adapted to graphs. One attention head looks at how the words in the sentence relate to one another, gathering local context around each word. A second attention head focuses specifically on the aspect under consideration (say, “service” or “environment”) and raises the influence of words that shape opinions about that target, such as “decent” or “uninviting.” When a single sentence contains several aspects, an optional global attention layer helps the model weigh their interactions. In effect, the system shines two coordinated spotlights: one on the general sentence structure, and one directly on the aspect whose sentiment it is trying to judge.
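The two spotlights can be illustrated with plain scaled dot-product attention. The sketch below is a simplification under stated assumptions: it omits the learned query, key, and value projections, multiple heads, relation-specific weights, and the optional global layer. The two-dimensional word vectors and neighbor lists are hand-made toy values (hypothetical), chosen so that “decent” leans toward “service” and “uninviting” toward “environment.” The function names are illustrative, not taken from the paper.

```python
import math


def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def weighted_sum(weights, vectors):
    return [sum(w * vec[d] for w, vec in zip(weights, vectors))
            for d in range(len(vectors[0]))]


def context_attention(h, neighbors, i):
    """Local head: token i attends over itself and its graph neighbors."""
    idx = sorted(neighbors[i] | {i})
    scale = math.sqrt(len(h[i]))
    weights = softmax([dot(h[i], h[j]) / scale for j in idx])
    return weighted_sum(weights, [h[j] for j in idx])


def aspect_attention(h, aspect_idx):
    """Aspect head: the averaged aspect vector attends over every token."""
    aspect = weighted_sum([1 / len(aspect_idx)] * len(aspect_idx),
                          [h[j] for j in aspect_idx])
    scale = math.sqrt(len(aspect))
    weights = softmax([dot(aspect, hj) / scale for hj in h])
    return weights, weighted_sum(weights, h)


def dual_attention(h, neighbors, aspect_idx):
    """Join the aspect words' local context with the aspect-focused summary."""
    local = [context_attention(h, neighbors, i) for i in aspect_idx]
    local_mean = weighted_sum([1 / len(local)] * len(local), local)
    weights, summary = aspect_attention(h, aspect_idx)
    return weights, local_mean + summary  # list concatenation


TOKENS = ["service", "decent", "but", "environment", "uninviting"]
H = [[1.0, 0.0], [0.8, 0.1], [0.0, 0.0], [0.0, 1.0], [0.1, 0.8]]  # toy vectors
NEIGHBORS = {0: {1}, 1: {0, 2}, 2: {1, 4}, 3: {4}, 4: {2, 3}}     # toy graph

for aspect in (0, 3):
    weights, feature = dual_attention(H, NEIGHBORS, [aspect])
    print(TOKENS[aspect], [round(w, 2) for w in weights], len(feature))
```

Only the aspect head changes when the target changes, so the same sentence yields different weights per aspect: in this toy setup “decent” outweighs “uninviting” when the aspect is “service,” and the reverse holds for “environment.”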

Making machine attention more selective and explainable

A key concern with modern AI is that attention weights—the numbers that indicate which words the model focused on—can be spread too thin, making decisions hard to interpret. MRDAGT addresses this with two regularizing forces. An entropy penalty discourages overly flat attention, nudging the model to concentrate more sharply on a few important words. At the same time, an L1 sparsity term pushes many attention links toward zero, trimming away weak, noisy connections. Together, these forces create “focused sparsity”: the model tends to place clear, high weights on truly relevant word–aspect pairs while ignoring distractions. Experiments on three benchmark datasets—formal laptop reviews, complex multi-sentence reviews, and informal Twitter posts—show that MRDAGT consistently beats strong existing systems by about one to two percentage points in accuracy, while also producing cleaner, more interpretable attention maps.
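The two regularizers can be written as extra terms added to the classification loss. The sketch below assumes a simple additive form, total = task loss + λ_H × mean row entropy + λ_1 × ‖g‖₁, with hypothetical weights `lambda_ent` and `lambda_l1`; the summary does not give the paper's exact formula or values. One detail matters: each softmax row already sums to one, which makes an L1 penalty on it constant. This sketch therefore applies the L1 term to a separate vector of non-negative edge gates `g`, which is an assumption about where the sparsity acts.

```python
import math


def entropy(probs):
    """Shannon entropy (nats) of one attention distribution; 0*log 0 is taken as 0."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def cross_entropy(probs, label):
    """Task loss: negative log-probability of the gold sentiment class."""
    return -math.log(probs[label])


def regularized_loss(task_loss, attention_rows, edge_gates,
                     lambda_ent=0.1, lambda_l1=0.01):
    """Task loss + entropy penalty (sharper focus) + L1 penalty (fewer links).

    lambda_ent and lambda_l1 are hypothetical defaults, not values from the paper.
    """
    mean_entropy = sum(entropy(row) for row in attention_rows) / len(attention_rows)
    l1 = sum(abs(g) for g in edge_gates)
    return task_loss + lambda_ent * mean_entropy + lambda_l1 * l1


flat = [0.25, 0.25, 0.25, 0.25]    # attention spread evenly over four words
peaked = [0.97, 0.01, 0.01, 0.01]  # attention concentrated on one word
gates = [0.9, 0.05, 0.0, 0.3]      # hypothetical non-negative edge gates

# Assumed class order: negative, neutral, positive; gold label is positive.
task = cross_entropy([0.1, 0.2, 0.7], label=2)
print(round(entropy(flat), 3), round(entropy(peaked), 3))
print(round(regularized_loss(task, [flat], gates), 4),
      round(regularized_loss(task, [peaked], gates), 4))
```

With the same task loss and gates, the peaked row produces the smaller total, so minimizing this objective rewards attention that concentrates on a few words and gates that stay near zero unless an edge is useful. In a real model these terms would be computed on tensors so that gradients flow through them.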

What this means for real-world opinion mining

For non-specialists, the takeaway is that this model offers a more precise, trustworthy way to mine opinions from messy, real-world text. Instead of just saying a review is “mostly positive,” MRDAGT can separately report that customers like a device’s speed but dislike its battery, or that diners appreciate a café’s staff while complaining about noise. Because its attention patterns line up with human intuition—focusing on contrast words, sentiment adjectives, and aspect terms—it is easier for analysts to see why the model made a particular judgment. The authors suggest that this approach can support better product design decisions, sharper social media monitoring, and future extensions to many languages and even multi-modal data like audio and images, all while keeping the reasoning process relatively transparent.

Citation: Anilkumar, A.P., Kim, SK. & Yoon, YC. Scientific reports multi relational dual attention graph transformer for fine grained sentiment analysis. Sci Rep 16, 7236 (2026). https://doi.org/10.1038/s41598-026-36490-6

Keywords: aspect-based sentiment analysis, graph neural networks, transformer attention, opinion mining, natural language processing