Clear Sky Science

A collaborative multi-attention network for real-time small object detection in UAV imagery

Why spotting tiny details from the sky matters

As drones become common tools for traffic monitoring, disaster response, and security, they must reliably spot very small objects, such as cars, bikes, or people, seen from high above. In these aerial views, targets are only a few pixels wide and are easily lost in shadows, glare, and cluttered backgrounds. This paper presents a new computer vision system, called the Collaborative Multi-Attention Network (CMA-Net), designed to detect such small objects in drone images quickly and accurately enough for real-time use.

Challenges of seeing small things from high up

Detecting small objects in drone imagery is harder than in ordinary street photos. Because drones fly high and view scenes from many angles, vehicles and people appear tiny and blurred, and lighting can change rapidly. Traditional two-step detectors can be very accurate but are often too slow for real-time use on flying platforms with limited computing power and communication bandwidth. Faster one-step methods run in real time but tend to miss small targets because their details are gradually washed out as images are processed layer by layer. The authors argue that better small-object detection requires smarter ways of combining information across scales and focusing computational attention on the most informative parts of an image.

Building a smarter feature ladder

CMA-Net starts from a widely used image-processing backbone, ResNet-50, and then adds an Efficient Bi-directional Feature Pyramid Network (E-BiFPN). This structure builds a kind of ladder of feature maps at different sizes, letting the system mix fine details from early layers with more abstract context from deeper layers. Unlike earlier designs, E-BiFPN trims unnecessary high-level layers and adds a special lightweight processing block that uses partial convolutions to cut down on computation. A weighted fusion scheme then learns how much to trust shallow versus deep features at each scale, so that fragile information about tiny cars or pedestrians is boosted while noise from the background is reduced.
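The weighted fusion idea can be illustrated with a small sketch. It assumes a "fast normalized fusion" rule in the style of the original BiFPN, in which each input gets a non-negative learnable weight and the weights are rescaled to sum to roughly one. This summary does not give the paper's exact formula, so the function name `fuse_weighted`, the toy feature maps, and the weight values are all hypothetical.

```python
def fuse_weighted(feature_maps, raw_weights, eps=1e-4):
    """Fuse same-sized 2D feature maps using normalized, non-negative weights.

    Assumption: this mirrors BiFPN-style fast normalized fusion, not
    necessarily the exact rule used inside E-BiFPN.
    """
    # Clamp each weight at zero so no input can be subtracted from the sum.
    positive = [max(0.0, w) for w in raw_weights]
    total = sum(positive) + eps  # eps avoids division by zero
    norm = [w / total for w in positive]

    rows, cols = len(feature_maps[0]), len(feature_maps[0][0])
    fused = [
        [sum(n * fm[r][c] for n, fm in zip(norm, feature_maps)) for c in range(cols)]
        for r in range(rows)
    ]
    return fused, norm


# Hypothetical inputs: a shallow map with fine detail and a deep map with
# smooth context, assumed to be already resized to the same resolution.
shallow = [[1.0, 0.0], [0.0, 1.0]]
deep = [[0.5, 0.5], [0.5, 0.5]]

# In a real network these raw weights would be learned; here they are fixed
# so that the shallow map is trusted about three times as much as the deep one.
fused, weights = fuse_weighted([shallow, deep], raw_weights=[3.0, 1.0])
print(weights)
print(fused)
```

With these toy values the shallow map dominates the result, which is the behaviour the article describes for fragile small-object detail.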

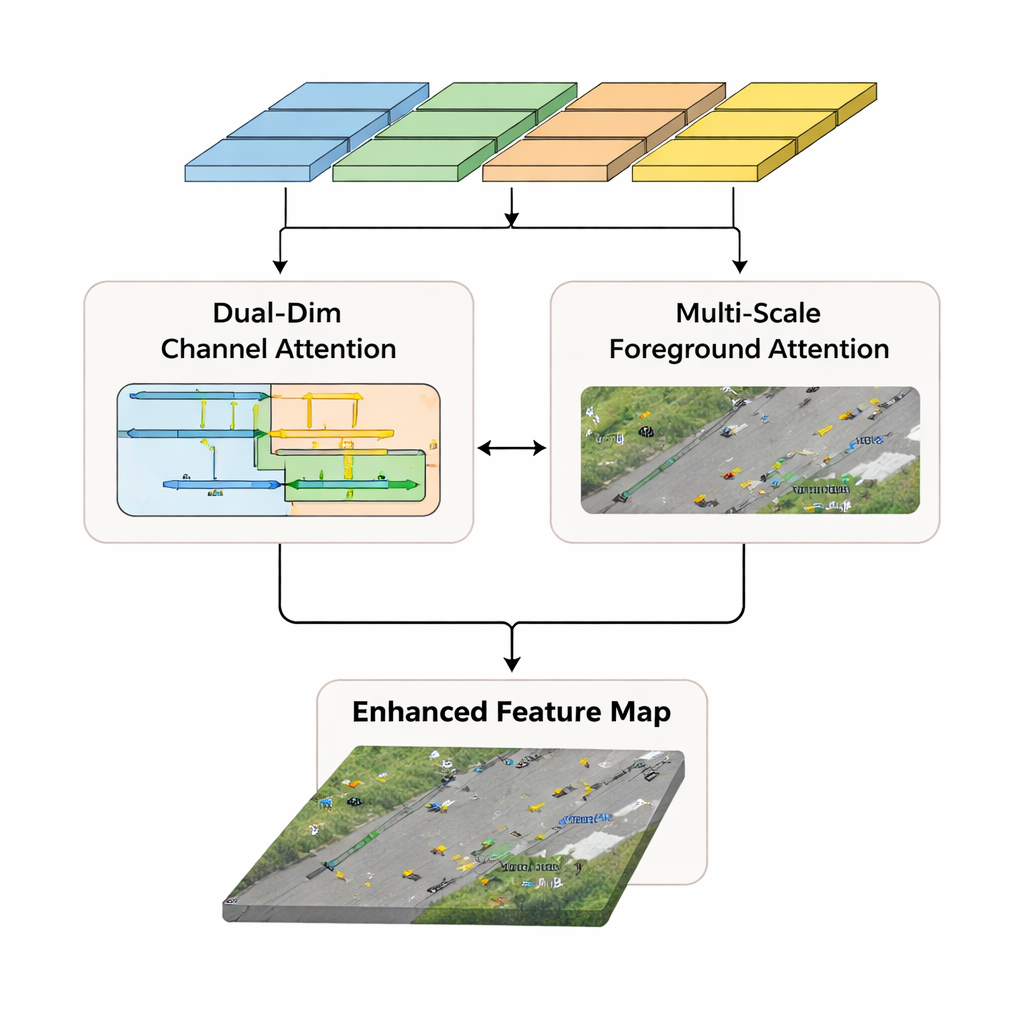

Teaching the network where to look

Beyond rearranging features, CMA-Net uses attention mechanisms that mimic how humans focus on relevant parts of a scene. A Dual-Dimensional Channel Attention (DDCA) module analyzes features separately along the width and height of the image, rather than compressing everything into a single global summary. This design helps the network capture long-range patterns in both horizontal and vertical directions, preserving location cues that are crucial when small objects blend into complex surroundings. In parallel, a Multi-Scale Foreground Attention (MSFA) module links large, easily recognized objects in deeper layers with smaller ones in shallower layers. By sampling and fusing information from three scales, MSFA learns to highlight foreground regions where vehicles are likely to be and to suppress confusing background textures.
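The difference between a single global summary and direction-aware summaries can be sketched in a few lines. The example below is a heavily simplified stand-in for DDCA, not the module itself: it works on one channel, uses plain averages along each axis, and applies a sigmoid gate with no learned layers. The function name `directional_attention` and the toy feature map are hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def directional_attention(fmap):
    """Gate a single-channel 2D map with per-row and per-column descriptors.

    Assumption: a real attention module would pass the descriptors through
    learned layers; here they go straight into a sigmoid.
    """
    rows, cols = len(fmap), len(fmap[0])
    # Averaging along the width leaves one descriptor per row.
    row_desc = [sum(row) / cols for row in fmap]
    # Averaging along the height leaves one descriptor per column.
    col_desc = [sum(fmap[r][c] for r in range(rows)) / rows for c in range(cols)]

    row_gate = [sigmoid(v) for v in row_desc]
    col_gate = [sigmoid(v) for v in col_desc]

    # Each position is scaled by the gates of its own row and column,
    # so the location of a strong response is preserved.
    gated = [
        [fmap[r][c] * row_gate[r] * col_gate[c] for c in range(cols)]
        for r in range(rows)
    ]
    return gated, row_gate, col_gate


# Hypothetical 3x4 map with one small bright target at row 1, column 2.
fmap = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 4.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
gated, row_gate, col_gate = directional_attention(fmap)
print(row_gate)
print(col_gate)
```

A global average of this map would be a single number with no record of where the target sits, whereas the row and column gates both peak at the target's coordinates.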

From enhanced features to fast decisions

The outputs of the DDCA and MSFA branches are merged into rich, small-object-friendly feature maps that are passed to an "anchor-free" detection head. Instead of relying on a dense grid of pre-set boxes, this head directly predicts both the category and position of objects, simplifying computations and making training more flexible. The authors evaluated CMA-Net on two demanding public drone datasets, UAVDT and Stanford Drone, which include crowded roads, varied weather, and day–night conditions. CMA-Net achieved accuracy scores of 67.2% and 62.0% on these datasets, respectively, while running at 64 frames per second. That speed is enough to process video in real time, and the reported accuracy exceeds that of many popular detectors, including some members of the YOLO family and more complex transformer-based models.
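To make "anchor-free" concrete, the sketch below decodes one prediction the way point-based detectors such as FCOS do: a feature-map location predicts its distances to the four sides of a box, plus one score per class. This summary does not say how CMA-Net's head encodes boxes, so the encoding, the `stride` value, and the function name `decode_point_prediction` are illustrative assumptions.

```python
def decode_point_prediction(cx, cy, distances, class_scores, stride=8):
    """Turn one feature-map cell's outputs into a box, a label, and a score.

    Assumption: FCOS-style encoding (left, top, right, bottom distances in
    pixels); the actual CMA-Net head may differ.
    """
    left, top, right, bottom = distances
    # Map the cell index back to image coordinates at the cell centre.
    px = cx * stride + stride / 2
    py = cy * stride + stride / 2
    box = (px - left, py - top, px + right, py + bottom)

    # The predicted category is simply the highest-scoring class.
    label = max(range(len(class_scores)), key=class_scores.__getitem__)
    return box, label, class_scores[label]


# Hypothetical outputs for the cell at column 10, row 5 of a stride-8 map.
box, label, score = decode_point_prediction(
    cx=10, cy=5, distances=(6.0, 4.0, 6.0, 4.0), class_scores=[0.1, 0.85, 0.05]
)
print(box, label, score)
```

No pre-set box shapes appear anywhere in this decoding step, which is the simplification the article attributes to the anchor-free head.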

What this means for real-world drone use

For non-specialists, the key takeaway is that CMA-Net significantly improves a drone’s ability to notice small, hard-to-see objects without slowing it down. By carefully fusing information across multiple scales and guiding the network’s attention both across image channels and between foreground and background, the method keeps tiny vehicles and people from being overlooked. This combination of accuracy and speed makes the approach promising for practical applications such as intelligent traffic monitoring, crowd observation, and emergency response, where missing a small object or reacting too slowly could have serious consequences.

Citation: Yang, J., Yue, X. & Wu, L. A collaborative multi-attention network for real-time small object detection in UAV imagery. Sci Rep 16, 5852 (2026). https://doi.org/10.1038/s41598-026-36440-2

Keywords: drone vision, small object detection, real-time surveillance, attention networks, traffic monitoring