Clear Sky Science

DEENet: an edge-enhanced CNN–Transformer dual-encoder model for steel surface defect detection

Why tiny flaws in steel matter

From cars and bridges to household appliances, modern life quietly leans on steel. Yet the reliability of all these products can be undermined by flaws so small they are hard to spot even under a microscope. This study introduces DEENet, a new computer-vision system that can automatically find subtle surface defects on steel strips more accurately and efficiently than existing tools, helping factories catch problems early, improve safety, and cut waste.

The challenge of seeing small defects

Steel surfaces pick up many kinds of flaws during production: scaly patches, pits, hairline cracks, inclusions of foreign material, and scratches. Traditional inspection relies on human workers or simple image filters, which are slow, inconsistent, and easily confused by noisy factory backgrounds. Modern “one-stage” detection algorithms such as the YOLO family locate and classify defects in a single pass over the image, but they still miss very small or low-contrast defects and often blur the edges of damaged regions. When the borders between healthy and faulty steel are fuzzy, detectors misjudge size and location, leading to missed defects or false alarms.

Blending two ways of seeing

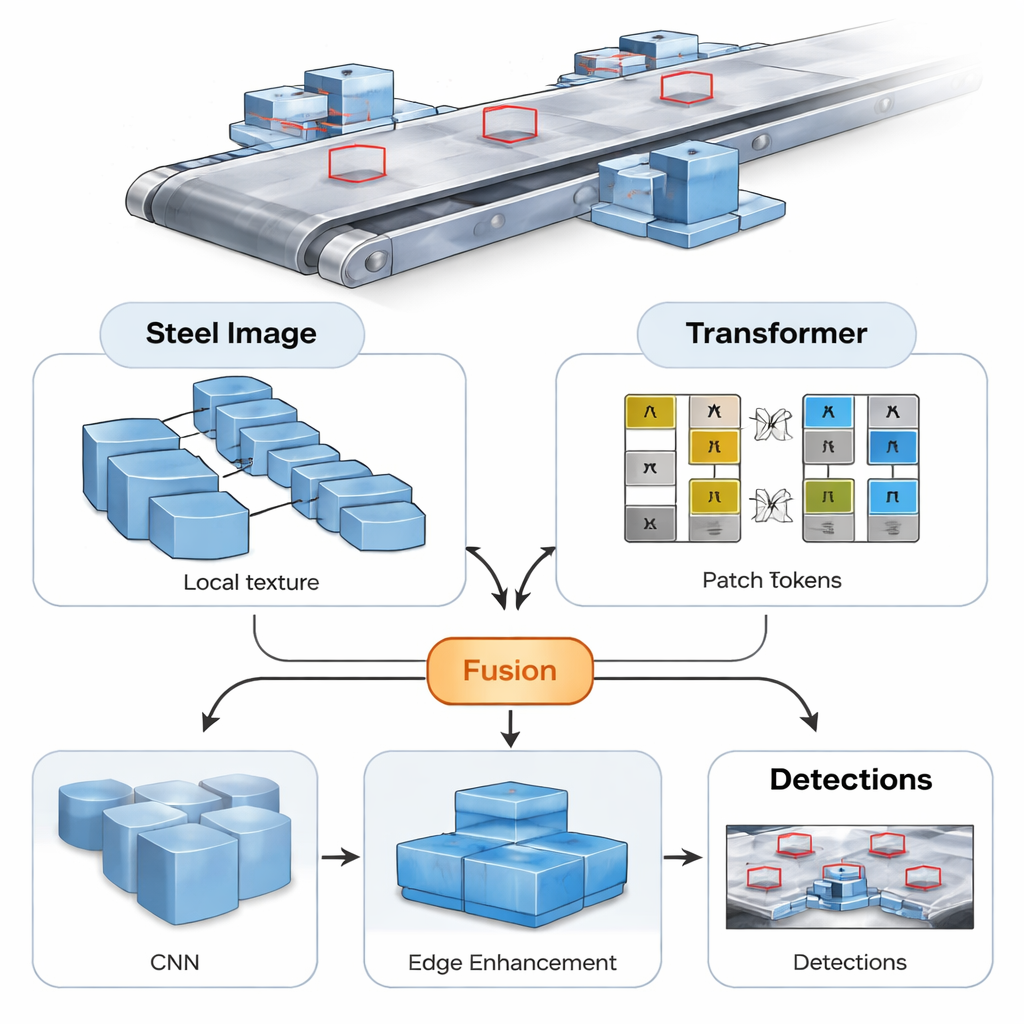

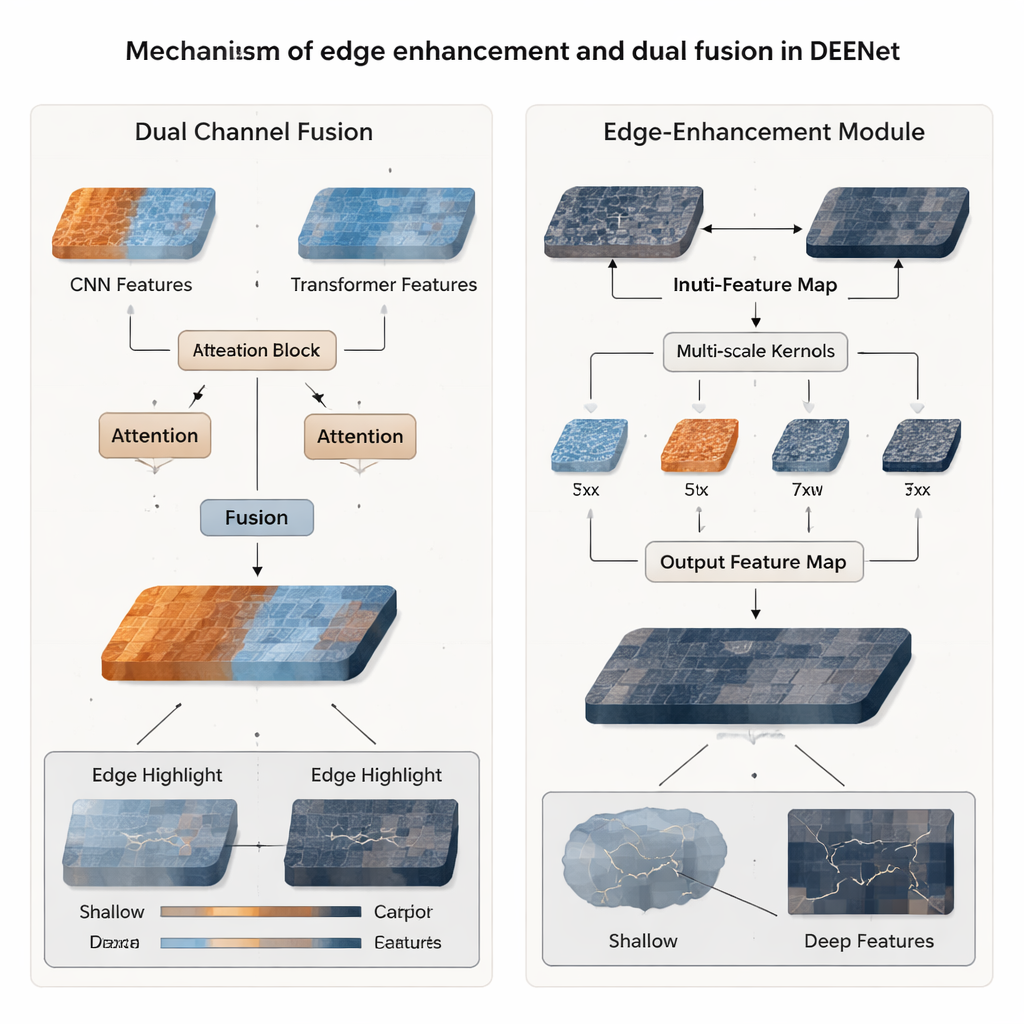

DEENet tackles this problem by combining two complementary ways of looking at an image. One branch is a classic convolutional neural network (CNN), which is good at picking up fine local textures, such as tiny pits or thin scratches. The other branch is a Transformer-based network, which breaks the image into patches and excels at capturing the broader context—how patterns relate across the entire strip of steel. In DEENet, these two branches act as twin “eyes”: one focused on detail, the other on the big picture. A custom Dual Channel Fusion module then blends their outputs, so that every region of the image is described both by its local texture and by its role in the overall scene. This cross-talk makes the system more sensitive to tiny, crowded defects that older models tend to overlook.
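
As a rough illustration of the blending idea, the sketch below fuses two same-shaped feature maps channel by channel. It is not the paper's Dual Channel Fusion module, whose internals this summary does not describe. The softmax gating rule and the names `global_average` and `fuse_branches` are assumptions made purely for illustration.

```python
import math


def global_average(feature_map):
    """Average each channel over all spatial positions.

    feature_map is a list of channels; each channel is a 2-D list (rows x cols).
    """
    return [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in feature_map
    ]


def fuse_branches(cnn_features, transformer_features):
    """Blend two same-shaped feature maps with per-channel softmax weights.

    Hypothetical gating rule (an assumption, not the published design):
    the branch whose channel has the larger mean response gets the larger weight.
    """
    cnn_summary = global_average(cnn_features)
    transformer_summary = global_average(transformer_features)

    fused = []
    for cnn_ch, trans_ch, s_cnn, s_trans in zip(
        cnn_features, transformer_features, cnn_summary, transformer_summary
    ):
        # Two-way softmax, shifted by the maximum for numerical stability.
        shift = max(s_cnn, s_trans)
        e_cnn = math.exp(s_cnn - shift)
        e_trans = math.exp(s_trans - shift)
        w_cnn = e_cnn / (e_cnn + e_trans)
        w_trans = 1.0 - w_cnn

        # Weighted sum at every spatial position of this channel.
        fused.append([
            [w_cnn * a + w_trans * b for a, b in zip(row_cnn, row_trans)]
            for row_cnn, row_trans in zip(cnn_ch, trans_ch)
        ])
    return fused


if __name__ == "__main__":
    local_detail = [[[1.0, 3.0]]]    # one channel, one row, two columns
    global_context = [[[3.0, 1.0]]]
    print(fuse_branches(local_detail, global_context))
```

In this toy rule, every fused value is a convex combination of the two branch values at the same position, so neither view is discarded outright. A trained network would learn its weights from data rather than from channel means.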

Sharpening the outline of damage

Even with rich features, detectors can still struggle to draw crisp boundaries around defects, especially when they fade gradually into the background. To address this, the authors design an edge-enhancement module, called C2f_EEM, which focuses specifically on changes in intensity at the borders between damaged and undamaged areas. It passes features through several filters of different sizes to capture structures from thin cracks to broader blotches, then uses a kind of before-and-after comparison to emphasize sharp transitions. This process highlights the “high-frequency” content where edges live, making cracks and pits stand out more clearly, and it does so with lightweight computations suitable for real-time use on production lines.
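
The “before-and-after comparison” can be pictured with a classical image-processing analogue: smooth the image at several scales, subtract each smoothed copy from the original, and add the differences back. The sketch below does this on a plain 2-D grid of intensities. It is an assumption-laden stand-in, not the C2f_EEM module itself: the box filters, the window sizes, and the names `box_blur` and `enhance_edges` are all hypothetical.

```python
def box_blur(image, size):
    """Mean filter with an odd window `size`; border pixels average the neighbours that exist."""
    height, width = len(image), len(image[0])
    radius = size // 2
    blurred = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            window = [
                image[j][i]
                for j in range(max(0, y - radius), min(height, y + radius + 1))
                for i in range(max(0, x - radius), min(width, x + radius + 1))
            ]
            blurred[y][x] = sum(window) / len(window)
    return blurred


def enhance_edges(image, sizes=(3, 5), strength=1.0):
    """Add back the averaged high-frequency residual (original minus blurred) over several scales."""
    height, width = len(image), len(image[0])
    enhanced = [[float(value) for value in row] for row in image]
    for size in sizes:
        blurred = box_blur(image, size)
        for y in range(height):
            for x in range(width):
                # The residual is near zero in flat regions and large at sharp transitions.
                residual = image[y][x] - blurred[y][x]
                enhanced[y][x] += strength * residual / len(sizes)
    return enhanced


if __name__ == "__main__":
    # A vertical step edge: dark steel on the left, a brighter defect on the right.
    step = [[0.0, 0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(3)]
    for row in enhance_edges(step):
        print([round(value, 3) for value in row])
```

Flat regions pass through unchanged because their residual is zero, while the values on either side of the step are pushed further apart, which is the sense in which the edge becomes easier to see.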

Putting the system to the test

The researchers evaluate DEENet on a widely used benchmark of steel-strip defects that includes six common flaw types, each with hundreds of sample images. Compared with standard YOLO-based detectors and newer Transformer-style models, DEENet achieves a higher mean Average Precision—a summary measure of how often detections are both correct and well placed—reaching 81.4%. Gains are especially strong for the hardest category, crazing, which looks like a fine web of cracks and typically has very low contrast. DEENet not only finds more of these tricky defects but also draws tighter boxes around them, while keeping the overall computation low enough for practical deployment. Additional tests on another industrial dataset and on images with added noise and lighting changes show that the model remains accurate even when conditions worsen.
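
To make the metric concrete, the sketch below computes Average Precision for one defect class from scored boxes and then averages across classes. It is a simplified version under stated assumptions: a single image, an overlap (IoU) threshold of 0.5, greedy matching of each detection to the best still-unmatched ground-truth box, and all-point interpolation. The paper's exact evaluation protocol is not given in this summary, and the function names are illustrative.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(detections, ground_truth, iou_threshold=0.5):
    """AP for one class. detections: list of (score, box); ground_truth: list of boxes."""
    if not ground_truth:
        return 0.0

    # Visit detections from most to least confident and mark each as hit or miss.
    matched, hits = set(), []
    for _, box in sorted(detections, key=lambda d: d[0], reverse=True):
        best_index, best_overlap = None, iou_threshold
        for index, gt_box in enumerate(ground_truth):
            overlap = iou(box, gt_box)
            if index not in matched and overlap >= best_overlap:
                best_index, best_overlap = index, overlap
        if best_index is not None:
            matched.add(best_index)
        hits.append(best_index is not None)

    # Precision and recall after each detection in the ranked list.
    precisions, recalls, true_positives = [], [], 0
    for rank, hit in enumerate(hits, start=1):
        true_positives += int(hit)
        precisions.append(true_positives / rank)
        recalls.append(true_positives / len(ground_truth))

    # Interpolate: precision at a rank is the best precision at that rank or later.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])

    # Area under the interpolated precision-recall curve.
    ap, previous_recall = 0.0, 0.0
    for precision, recall in zip(precisions, recalls):
        ap += precision * (recall - previous_recall)
        previous_recall = recall
    return ap


def mean_average_precision(ap_per_class):
    """Mean of per-class AP values, given as a dict of class name -> AP."""
    return sum(ap_per_class.values()) / len(ap_per_class)


if __name__ == "__main__":
    truth = [(0, 0, 10, 10), (20, 20, 30, 30)]
    found = [(0.9, (0, 0, 10, 10)), (0.8, (50, 50, 60, 60)), (0.7, (20, 20, 30, 30))]
    print(average_precision(found, truth))
```

In the toy example, two of three detections land on real defects and one is a false alarm ranked in the middle, so the score falls below 1 even though every defect is eventually found. A detection also counts as a miss if its box overlaps the true defect too loosely, which is why tighter boxes raise the score.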

What this means for everyday products

In simple terms, the study shows that giving a machine-vision system two complementary “views” of the same steel surface, and teaching it to sharpen edges, can make defect detection both smarter and more reliable. DEENet’s improved ability to spot small, faint flaws and outline them precisely could help steelmakers catch problems earlier, reduce scrap, and deliver more consistent materials for everything from skyscrapers to smartphones. While the authors note that further work is needed to shrink the model for low-power devices and to test it in more varied factories, their results mark a step toward safer, more efficient, and more automated quality control in heavy industry.

Citation: Pan, W., Zhong, R., Huang, J. et al. DEENet: an edge-enhanced CNN–Transformer dual-encoder model for steel surface defect detection. Sci Rep 16, 6692 (2026). https://doi.org/10.1038/s41598-026-36390-9

Keywords: steel defects, computer vision, deep learning, quality inspection, industrial automation