Clear Sky Science

A deep sentiment model combining ALBERT-driven context and EHO-optimized architecture

Why Smarter Sentiment Reading Matters

Every day, millions of people share opinions about products, services, politics, and events on the internet. Turning this flood of text into reliable insight is vital for companies, governments, and researchers. Yet our online language is messy: sarcastic jokes, slang, typos, and rare emotions can easily confuse computers. This paper introduces a new sentiment analysis system that aims to read these emotions more accurately, while using less computing power than many current artificial intelligence models.

From Simple Word Counts to Context-Aware Reading

Early sentiment analysis tools treated text like a bag of disconnected words, counting how often terms like “good” or “terrible” appeared. This approach ignored word order and subtle context, such as “not bad” meaning something closer to “pretty good.” Deep learning methods improved on this by processing text as sequences, but they often required vast labeled datasets and heavy computation. Transformer models like BERT pushed accuracy even further, yet their large size makes them expensive to run in real-world settings such as customer service platforms or social media monitoring systems. The authors of this paper respond to this challenge by combining several lighter but powerful components into one streamlined system.
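A minimal sketch of the word-counting approach described above shows why it misreads "not bad". The tiny lexicon and the function name are made up for illustration and do not come from the paper.

```python
from collections import Counter

# Hypothetical toy lexicon: +1 for positive words, -1 for negative ones.
LEXICON = {"good": 1, "great": 1, "bad": -1, "terrible": -1}


def bag_of_words_score(text: str) -> int:
    """Sum lexicon scores over word counts, ignoring word order entirely."""
    counts = Counter(text.lower().split())
    return sum(LEXICON.get(word, 0) * n for word, n in counts.items())


# "not" is absent from the lexicon, so the negation is lost.
print(bag_of_words_score("the food was not bad"))  # -1
# Shuffling the words changes nothing, because order is never consulted.
print(bag_of_words_score("bad not was food the"))  # -1
```

Both calls return the same negative score, even though a human reader would take the first sentence as mildly positive.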

A Leaner Brain for Understanding Text

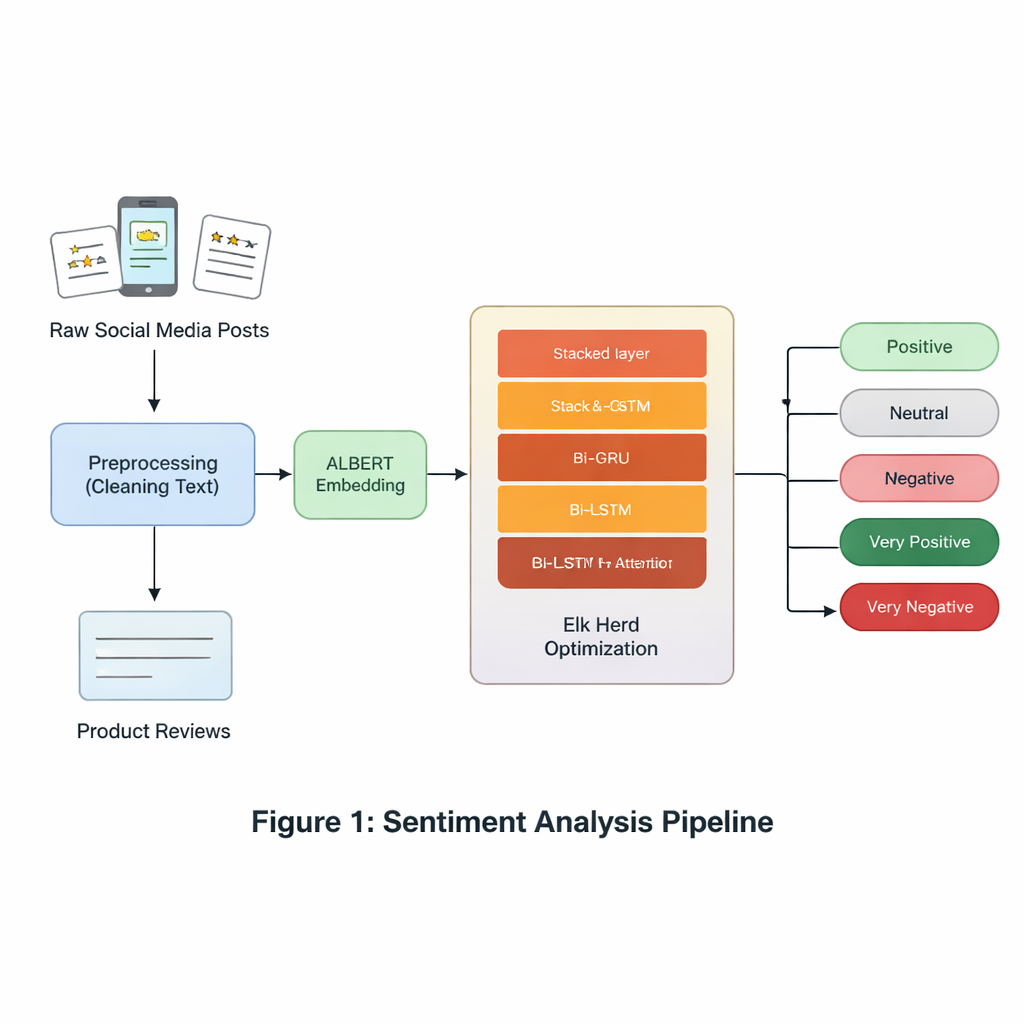

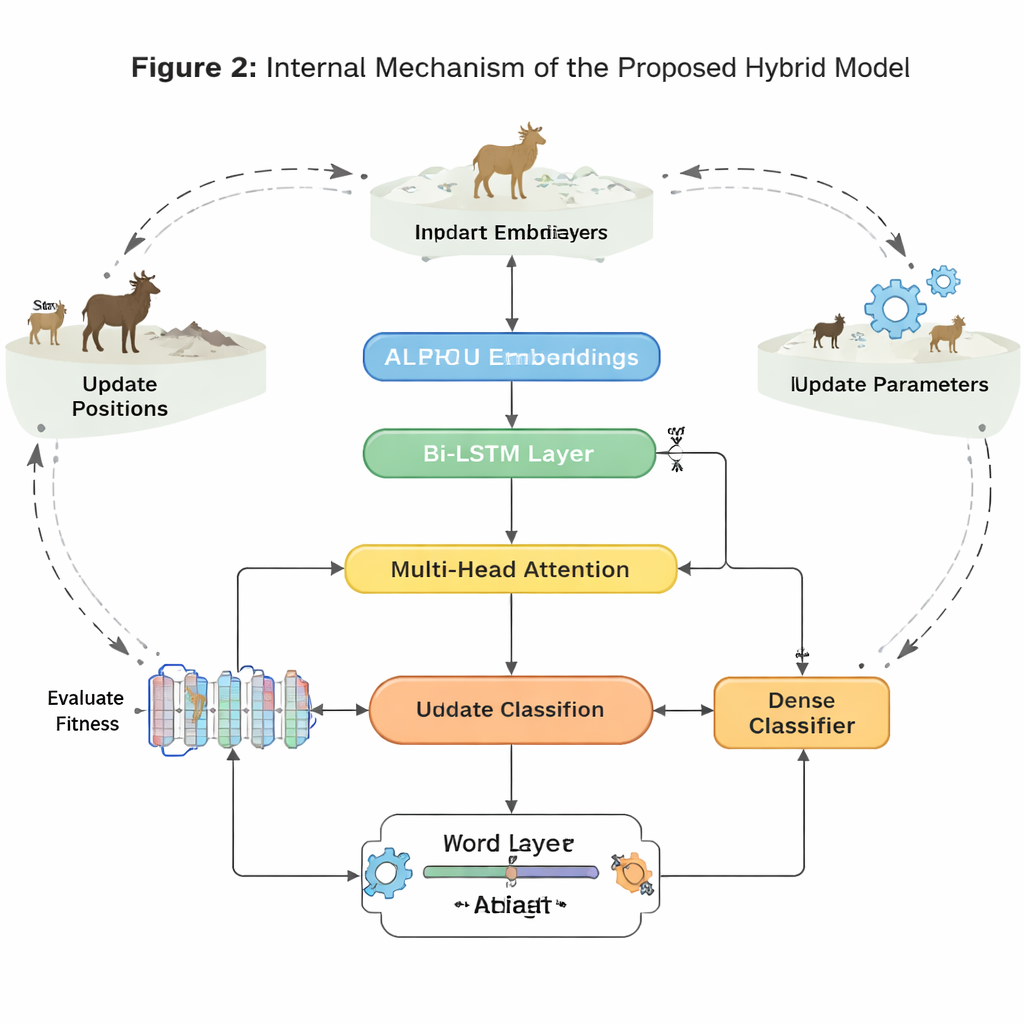

At the heart of the model is ALBERT, a compact cousin of the BERT language model. ALBERT turns each word in a sentence into a context-aware numerical representation, capturing how meanings shift depending on neighboring words. Unlike larger models, ALBERT reduces memory use by sharing parameters between layers and by factorizing its word-embedding table into two smaller matrices. This makes it easier to run on standard hardware without sacrificing much accuracy. These ALBERT-based word representations become the input to a sequence of specialized layers that focus on how sentiments unfold across a sentence.
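The memory savings can be illustrated with some back-of-the-envelope arithmetic. The sizes below (a 30,000-word vocabulary, 128-dimensional embeddings, 768-dimensional hidden states, 12 layers) are assumed for illustration and may differ from the configuration used in the paper; the per-layer parameter count is a round hypothetical figure.

```python
def full_embedding_params(vocab_size: int, hidden_size: int) -> int:
    """One large table mapping each vocabulary entry straight to the hidden size."""
    return vocab_size * hidden_size


def factorized_embedding_params(vocab_size: int, embed_size: int, hidden_size: int) -> int:
    """A small embedding table followed by a projection up to the hidden size."""
    return vocab_size * embed_size + embed_size * hidden_size


def encoder_params(per_layer: int, num_layers: int, shared: bool) -> int:
    """With cross-layer sharing, one set of layer weights is reused at every depth."""
    return per_layer if shared else per_layer * num_layers


# Assumed sizes, for illustration only.
V, E, H, LAYERS = 30_000, 128, 768, 12
PER_LAYER = 7_000_000  # hypothetical round number

print(full_embedding_params(V, H))              # 23040000
print(factorized_embedding_params(V, E, H))     # 3938304
print(encoder_params(PER_LAYER, LAYERS, False)) # 84000000
print(encoder_params(PER_LAYER, LAYERS, True))  # 7000000
```

Under these assumptions the embedding table shrinks to roughly a sixth of its size, and the stacked layers cost the same as a single layer in stored weights. Sharing saves memory, not computation: every layer is still executed at run time.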

Letting Two Memory Systems Work Together

To follow how meaning changes from word to word, the system uses two types of recurrent networks: GRUs (Gated Recurrent Units) and LSTMs (Long Short-Term Memory units), each run in both forward and backward directions. GRUs are efficient at tracking short phrases with fewer parameters, while LSTMs are better at remembering information over longer stretches of text. By stacking a bidirectional GRU layer on top of a bidirectional LSTM layer and adding an attention mechanism, the model can highlight the most sentiment-heavy parts of each sentence—such as the phrase “except the battery life” in an otherwise positive review. This hybrid design aims to capture both quick shifts in tone and longer-range context that might flip the overall sentiment.
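The attention step can be sketched in a few lines. This is a simplified illustration that assumes dot-product scoring against a single query vector; the article does not specify the exact attention formula the authors use, and the two-dimensional "hidden states" below are invented stand-ins for the recurrent layers' outputs.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(hidden_states, query):
    """Score each position against the query, then take the weighted average."""
    scores = [sum(h * q for h, q in zip(state, query)) for state in hidden_states]
    weights = softmax(scores)
    dim = len(hidden_states[0])
    pooled = [
        sum(w * state[d] for w, state in zip(weights, hidden_states))
        for d in range(dim)
    ]
    return weights, pooled


# Invented toy values: one vector per token of a short review fragment.
tokens = ["great", "screen", "except", "battery"]
hidden_states = [[0.2, 0.1], [0.1, 0.0], [0.9, 1.2], [1.0, 1.5]]
query = [1.0, 1.0]  # hypothetical query vector; learned during training in practice

weights, pooled = attention_pool(hidden_states, query)
for token, weight in zip(tokens, weights):
    print(f"{token:8s} {weight:.3f}")
```

In this toy setup the largest weights land on "except" and "battery", so the pooled sentence vector is dominated by the part of the review that carries the complaint.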

Nature-Inspired Tuning for Tough Cases

Beyond architecture, the authors tackle a key real-world obstacle: sentiment datasets are often imbalanced and noisy. Emotions like disgust or surprise, and neutral statements, appear less often than clearly positive or negative ones, causing many models to ignore them. To counter this, the paper uses Elk Herd Optimization, a nature-inspired search strategy modeled on how elk move, compete, and form groups. After the neural network produces internal sentiment vectors, this optimization step fine-tunes how these vectors represent each class, especially the rare ones, by iteratively improving a “fitness” score. This process helps the model avoid settling for mediocre solutions (local optima) and improves its ability to distinguish subtle or underrepresented emotions.
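The general idea of iteratively improving a fitness score with a population of candidates can be sketched as follows. This is a generic population-based search written for illustration, not the Elk Herd Optimization update rules, which the article does not spell out; the function name, parameters, and toy fitness function are all hypothetical.

```python
import random


def population_search(fitness, dim, pop_size=20, generations=50, step=0.3, seed=0):
    """Generic population search: candidates drift toward the best one, with noise."""
    rng = random.Random(seed)
    population = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(pop_size)]
    best = max(population, key=fitness)
    history = [fitness(best)]
    for _ in range(generations):
        next_population = []
        for candidate in population:
            # Move part of the way toward the current best, plus a random nudge.
            trial = [
                c + rng.random() * (b - c) + rng.gauss(0, step)
                for c, b in zip(candidate, best)
            ]
            # Keep the trial only if it scores higher than the candidate it replaces.
            next_population.append(trial if fitness(trial) > fitness(candidate) else candidate)
        population = next_population
        generation_best = max(population, key=fitness)
        if fitness(generation_best) > fitness(best):
            best = generation_best
        history.append(fitness(best))
    return best, history


def toy_fitness(vector):
    """Toy stand-in for a real objective: highest when every coordinate equals 0.5."""
    return -sum((x - 0.5) ** 2 for x in vector)


best, history = population_search(toy_fitness, dim=3)
print(history[0], history[-1])
```

In a real system the fitness function would score how well the tuned sentiment vectors separate the classes, for example with a measure that gives rare classes as much weight as common ones.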

Putting the Model to the Test

The authors evaluate their system on six widely used datasets, including Twitter posts, restaurant and laptop reviews, and a five-level movie review benchmark that distinguishes very positive and very negative opinions from more moderate ones. Across these varied sources, the new approach consistently outperforms several advanced graph-based and transformer-based competitors in both accuracy and F1 score, a metric that balances precision (how many of the model's predictions for a class are correct) against recall (how many true cases of that class it finds). Gains are especially strong on the five-way movie review task and on underrepresented sentiment classes, showing that the method can handle both fine-grained emotion and lopsided data. An ablation study, where components are removed one at a time, confirms that ALBERT, the combined GRU–LSTM design, attention, and elk-inspired optimization each contribute to the overall performance.
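A small worked example shows why F1 is reported alongside accuracy on lopsided data. The labels below are invented, and averaging F1 equally across classes (macro-F1) is an assumption made here for illustration; the article does not say which averaging the authors use.

```python
def f1_for_class(y_true, y_pred, label):
    """F1 for one class: the harmonic mean of precision and recall."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == label and p == label for t, p in pairs)
    fp = sum(t != label and p == label for t, p in pairs)
    fn = sum(t == label and p != label for t, p in pairs)
    if tp == 0:
        return 0.0  # no correct predictions for this class
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(y_true, y_pred):
    """Average the per-class F1 scores, giving every class equal weight."""
    labels = sorted(set(y_true))
    return sum(f1_for_class(y_true, y_pred, label) for label in labels) / len(labels)


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Invented data: 8 positive reviews, 2 neutral ones.
y_true = ["pos"] * 8 + ["neu"] * 2
# A lazy model that always answers "pos".
y_pred = ["pos"] * 10

print(accuracy(y_true, y_pred))            # 0.8
print(round(macro_f1(y_true, y_pred), 3))  # 0.444
```

The lazy model looks respectable at 80% accuracy, yet its macro-F1 is only about 0.44 because it never identifies a single neutral review.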

What This Means for Everyday Applications

For non-specialists, the key takeaway is that this research offers a more efficient and reliable way to interpret large volumes of online opinion. By blending a compact language model with complementary memory layers and a biologically inspired tuning step, the system reads between the lines more accurately, especially when sentiments are subtle or data are skewed. This makes it promising for real-world uses such as tracking customer satisfaction, monitoring public health attitudes, or gauging reactions to policies and events, where both precision and computational cost matter.

Citation: Oqaibi, H., Sharma, S. A deep sentiment model combining ALBERT-driven context and EHO-optimized architecture. Sci Rep 16, 5784 (2026). https://doi.org/10.1038/s41598-026-36389-2

Keywords: sentiment analysis, ALBERT, deep learning, text classification, metaheuristic optimization