Clear Sky Science

A cognitive internet of things resource allocation method based on multi-agent reinforcement learning algorithm

Why your car’s data needs to stay “fresh”

Modern cars constantly share information about their position, speed, and surroundings with other vehicles and roadside equipment. For safety features and future self-driving functions to work well, this information must be not only accurate but also fresh: a braking alert that is one second late can be useless. This paper explores how to keep such data as up to date as possible over busy wireless networks, using a new kind of learning-based control method that lets cars decide, on their own, how and when to transmit.

Smart roads that share the airwaves

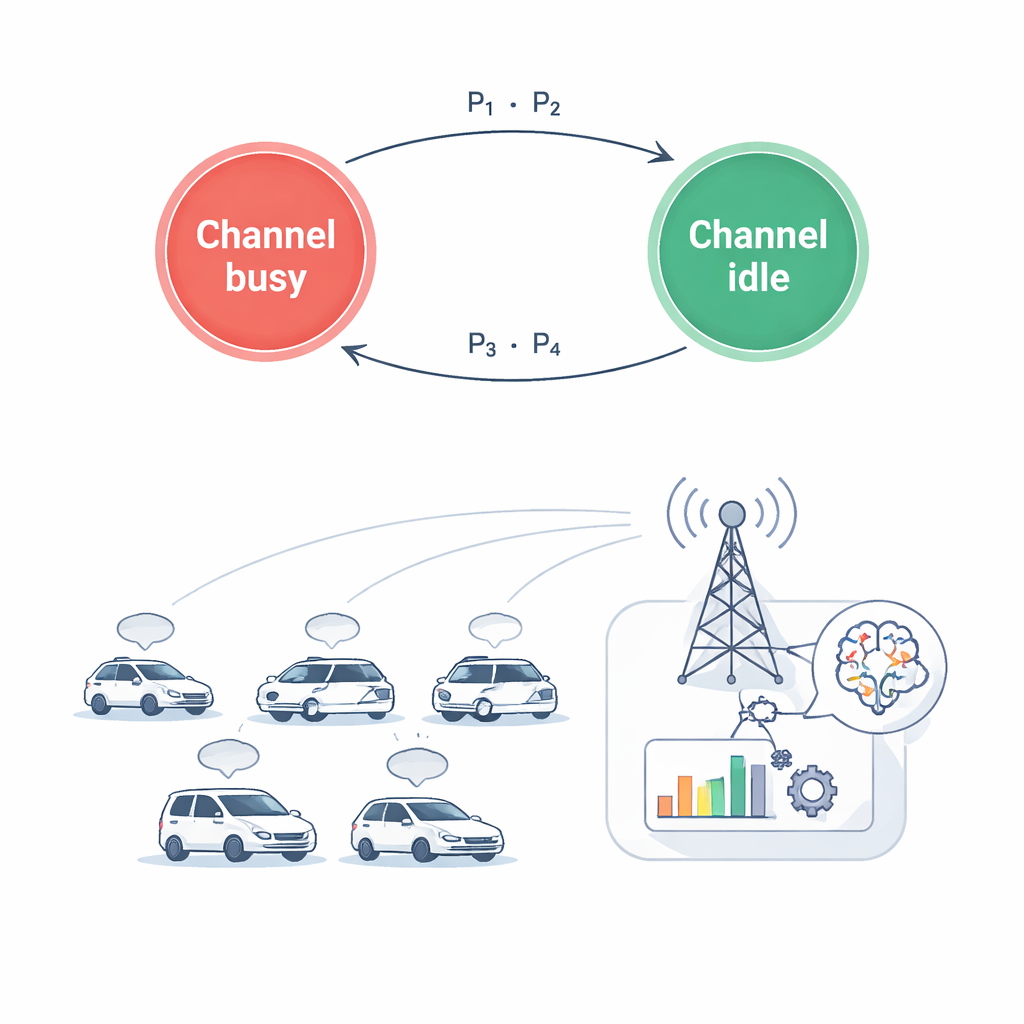

The study looks at a future road network where thousands of connected cars share limited radio spectrum with existing users such as mobile phone customers. This setting, called a cognitive Internet of Things, assumes the cars are “polite guests”: they can borrow frequencies only when doing so does not disturb primary users. At the same time, vehicles must talk to each other and to base stations quickly enough to support collision warnings, traffic coordination, and entertainment services. Balancing these demands is difficult because cars move fast, signals fade as they weave through city blocks, and the available channels change from one moment to the next.

Measuring freshness, not just speed

Traditional network design often focuses on boosting data rate or reducing average delay. However, for safety-critical car messages, what really matters is how old the most recent status update is when it reaches a receiver. The authors use a metric called the Age of Information, which grows as time passes after the last successful update and is reset when a new message arrives. In their model, each pair of vehicles repeatedly sends chunks of data. If the wireless link is strong and the chosen power level is high enough, the current chunk is cleared quickly and the age drops; if the connection is poor or power is limited, leftover data carries over and the age keeps climbing. The goal is to choose radio channels and power levels so that this age stays as low as possible, while still saving energy and protecting primary users from interference.
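The way the age rises and resets can be illustrated with a minimal sketch. It is not the authors' exact model: the Shannon-capacity rate formula, the function name `step_age`, and every default value (bandwidth, noise power, slot length, chunk size) are assumptions chosen only for illustration.

```python
import math


def step_age(age, remaining_bits, power_w, channel_gain, *,
             bandwidth_hz=1e6, noise_w=1e-9, slot_s=1e-3, payload_bits=4000):
    """Advance one time slot for a single vehicle pair.

    Returns the new (age, remaining_bits). All defaults are hypothetical.
    """
    # Assumed link model: Shannon capacity from the signal-to-noise ratio.
    snr = power_w * channel_gain / noise_w
    delivered_bits = bandwidth_hz * math.log2(1.0 + snr) * slot_s

    remaining_bits -= delivered_bits
    if remaining_bits <= 0:
        # Chunk cleared: the receiver now holds a fresh update, so the age
        # resets to one slot and the next chunk is queued.
        return 1, payload_bits
    # Leftover data carries over and the information keeps getting older.
    return age + 1, remaining_bits


# Strong link: the chunk clears within the slot and the age drops back to 1.
print(step_age(5, 4000, power_w=0.1, channel_gain=1e-6))
# Weak link: most of the chunk is left over and the age climbs to 6.
print(step_age(5, 4000, power_w=0.1, channel_gain=1e-9))
```

Under these assumed numbers, raising the power or picking a better channel is what lets a chunk clear within a single slot, which is the lever the allocation method controls.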

Teaching cars to cooperate by trial and error

Because the wireless environment changes rapidly and each car only sees local information, the authors frame the problem as a learning task rather than a fixed formula. Each car acts as an intelligent agent that repeatedly observes its situation: which channels appear busy, how strong its radio links are, how much data remains to be sent, and how old its last update is. Based on this partial view, it picks an action that combines a discrete choice (which channel to use, or whether to stay silent) with a continuous choice (how much power to transmit with). After acting, the system measures how fresh the information is, how much power was used, and whether any primary users were disturbed. This feedback is turned into a reward signal that guides the agents, over many simulated episodes, toward better joint decisions.
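A reward of this kind might be sketched as a weighted penalty on the three measured quantities. The function name `team_reward`, the weights, and the choice of a single reward shared by all agents are assumptions for illustration; the summary does not state the paper's actual reward formula.

```python
def team_reward(ages, powers_w, disturbed_primary_users,
                w_age=1.0, w_power=0.5, w_interference=10.0):
    """Shared reward for one time step (hypothetical weights).

    ages: current Age of Information of each vehicle pair, in slots
    powers_w: transmit power chosen by each agent, in watts (0 if silent)
    disturbed_primary_users: number of primary users that saw harmful interference
    """
    mean_age = sum(ages) / len(ages)
    total_power = sum(powers_w)
    cost = (w_age * mean_age
            + w_power * total_power
            + w_interference * disturbed_primary_users)
    # Higher reward means fresher data, less power, fewer disturbances.
    return -cost


# Same ages and powers, but disturbing one primary user is penalized heavily.
print(team_reward([2, 4], [0.1, 0.3], disturbed_primary_users=0))
print(team_reward([2, 4], [0.1, 0.3], disturbed_primary_users=1))
```

Making the interference weight much larger than the others is one simple way to encode the "polite guest" rule as a soft constraint; a real design would need to tune these weights.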

A tailored learning algorithm for mixed decisions

To train these agents, the authors develop an improved multi-agent version of a popular method called Proximal Policy Optimization. Their variant, IMAPPO, uses a central training module that sees the global state and evaluates how good the combined actions of all cars are, while each individual car learns a private decision rule it can apply on its own in real time. A key innovation is an upgraded decision network that can naturally handle both the on/off choice of channels and the smooth range of possible power levels. In simulations of grid-like city roads, with cars and base stations placed at realistic positions and radio effects such as fading and interference included, the proposed method is compared to several state-of-the-art learning algorithms and a random baseline.
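The two ingredients named above, a mixed discrete-and-continuous decision and the Proximal Policy Optimization update, can be sketched in plain Python. This is not the IMAPPO network itself: the softmax-plus-Gaussian parameterization, the sigmoid squashing of power, and the names `sample_hybrid_action` and `ppo_clipped_term` are illustrative assumptions. Only the clipped surrogate term follows the standard PPO definition.

```python
import math
import random


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def gaussian_log_pdf(x, mean, std):
    return -0.5 * ((x - mean) / std) ** 2 - math.log(std * math.sqrt(2 * math.pi))


def sample_hybrid_action(channel_logits, power_mean, power_std, p_max, rng):
    """Sample (channel, power_w, log_prob) from an assumed hybrid policy head.

    channel_logits has one entry per channel plus a final "stay silent" entry.
    """
    probs = softmax(channel_logits)
    idx = rng.choices(range(len(probs)), weights=probs)[0]
    log_prob = math.log(probs[idx])
    if idx == len(probs) - 1:
        # Silent: no power is transmitted, so only the discrete choice counts.
        return None, 0.0, log_prob
    raw = rng.gauss(power_mean, power_std)
    # Log-probability of the pre-squash sample; the squashing term is the same
    # under old and new policies, so it cancels in the PPO ratio.
    log_prob += gaussian_log_pdf(raw, power_mean, power_std)
    power_w = p_max / (1.0 + math.exp(-raw))  # sigmoid maps raw into (0, p_max)
    return idx, power_w, log_prob


def ppo_clipped_term(new_log_prob, old_log_prob, advantage, clip_eps=0.2):
    """Standard PPO clipped surrogate for one sampled action."""
    ratio = math.exp(new_log_prob - old_log_prob)
    clipped = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
    return min(ratio * advantage, clipped * advantage)


rng = random.Random(0)
channel, power_w, log_prob = sample_hybrid_action([0.0, 0.0, 0.0], 0.0, 1.0, 0.2, rng)
print(channel, power_w, log_prob)
print(ppo_clipped_term(0.5, 0.0, advantage=1.0))  # gain is capped at 1 + clip_eps
```

In a centralized-training setup like the one described, the advantage passed to `ppo_clipped_term` would come from the central module that sees the global state, while each car would only need its own sampling step at run time.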

Fresher data with less energy

The results show that the new method can keep information noticeably fresher while also consuming less power. Across different numbers of vehicles and different amounts of data to send, IMAPPO reduces the average Age of Information by up to roughly half compared with simple random access, and outperforms other advanced learning methods by meaningful margins. At the same time, it lowers the overall power used by the cars, helping preserve battery life and limit interference to other spectrum users. In practical terms, smarter, learning-based control of who talks when and how loudly on the wireless “roadway” could make connected and autonomous vehicles safer, more efficient, and more respectful of the crowded airwaves they must share.

Citation: Wang, R., Shen, Y., Wang, D. et al. A cognitive internet of things resource allocation method based on multi-agent reinforcement learning algorithm. Sci Rep 16, 7756 (2026). https://doi.org/10.1038/s41598-026-36380-x

Keywords: connected vehicles, wireless spectrum sharing, age of information, reinforcement learning, internet of things