Clear Sky Science · en

Multi-level screening method for network security alarms based on DBSCAN algorithm and rete rule inference

Why smarter alarms matter

Every modern organization now depends on networks that never sleep, from hospitals and banks to cloud providers and city infrastructure. These networks are watched by security tools that pour out thousands of alerts a day—far more than human analysts can realistically examine. Buried in that flood are a few alarms that signal real break-ins or serious weaknesses. This paper presents a new way to separate those crucial signals from the noise, cutting false alarms while catching more genuine attacks and doing so with very little computing power.

From messy logs to clean, usable data

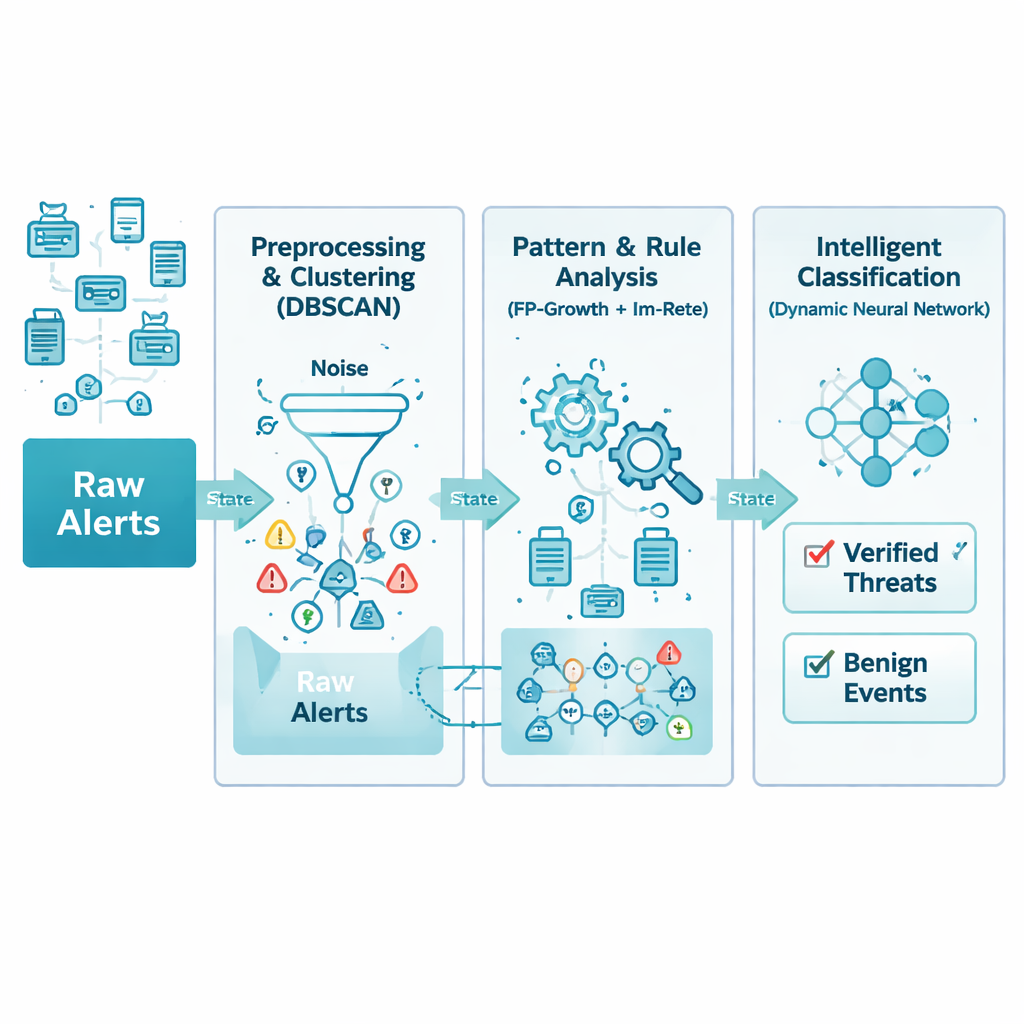

Network alarms arrive from many different devices and vendors, each with its own format and level of detail. The authors first tackle this chaos with a careful cleaning and standardization step. All incoming alerts are translated into a common structure, and duplicates, entries with missing fields, and obvious errors are cleaned up. For example, repeated warnings from several devices about the same attack within a few seconds are merged into a single, richer record. The result is a streamlined database of alerts that preserves what matters most (what happened, when it happened, and which systems were involved) while removing clutter that would only slow later analysis.
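To make the cleaning step concrete, here is a minimal Python sketch of standardizing alerts and merging near-simultaneous duplicates. It is an illustration only, not the authors' code: the field names in `FIELD_ALIASES`, the `Alert` record, the 5-second window, and the choice to drop incomplete alerts rather than repair them are all assumptions.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical vendor field names mapped onto one common schema.
FIELD_ALIASES = {
    "timestamp": ("timestamp", "ts", "time"),
    "signature": ("signature", "sig", "alert_type"),
    "target": ("target", "dst", "dest_ip"),
    "device": ("device", "sensor"),
}

@dataclass
class Alert:
    timestamp: float                               # when it happened (seconds)
    signature: str                                 # what happened
    target: str                                    # which system was involved
    devices: set = field(default_factory=set)      # who reported it

def normalize(raw: dict) -> Optional[Alert]:
    """Translate one raw alert into the common structure, or return None."""
    def pick(name):
        for key in FIELD_ALIASES[name]:
            if raw.get(key) not in (None, ""):
                return raw[key]
        return None

    ts, sig, tgt, dev = (pick(n) for n in ("timestamp", "signature", "target", "device"))
    if ts is None or sig is None or tgt is None:
        return None                                # assumption: drop incomplete alerts
    try:
        ts = float(ts)
    except (TypeError, ValueError):
        return None                                # unparseable time counts as an obvious error
    return Alert(ts, str(sig).strip().lower(), str(tgt), {dev} if dev else set())

def merge_duplicates(alerts, window=5.0):
    """Merge alerts with the same signature and target that arrive within
    `window` seconds of the first alert in the merged record."""
    merged, latest = [], {}
    for a in sorted(alerts, key=lambda x: x.timestamp):
        key = (a.signature, a.target)
        prev = latest.get(key)
        if prev is not None and a.timestamp - prev.timestamp <= window:
            prev.devices |= a.devices              # richer record: remember every reporter
        else:
            rec = Alert(a.timestamp, a.signature, a.target, set(a.devices))
            merged.append(rec)
            latest[key] = rec
    return merged
```

In this sketch, two reports of the same port scan from a firewall and an intrusion sensor two seconds apart collapse into one record that lists both devices, while a repeat of the same scan minutes later stays separate.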

Letting patterns in time reveal real trouble

Even cleaned data can be overwhelming, so the next layer looks for natural groupings in time. The method relies on density-based clustering (the DBSCAN algorithm named in the paper's title), which looks for periods when related alarms crowd close together and treats isolated or random alerts as noise. Because the clusters emerge from the data, there is no need to guess in advance how many types of incidents exist. The system also uses overlapping sliding time windows, so that fast-moving attacks are not accidentally split across different batches. Carefully tuned, this step keeps the most informative bursts of activity while discarding up to a third of the misleading background noise in the raw streams.
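The idea can be sketched in a few lines of Python. The code below is a simplified, one-dimensional DBSCAN over alert timestamps, run inside overlapping windows; it is an illustration under assumed settings, not the paper's implementation. The function names and the values of `window`, `step`, `eps`, and `min_pts` are hypothetical.

```python
from bisect import bisect_left, bisect_right

def dbscan_1d(times, eps, min_pts):
    """Label sorted timestamps: cluster id >= 0, or -1 for noise."""
    n = len(times)
    # A point is "core" if at least min_pts points (itself included) lie within eps.
    is_core = [
        bisect_right(times, t + eps) - bisect_left(times, t - eps) >= min_pts
        for t in times
    ]
    labels = [-1] * n
    cluster, prev_core = -1, None
    for i in range(n):
        if not is_core[i]:
            continue
        # In one dimension, consecutive core points share a cluster
        # exactly when the gap between them is at most eps.
        if prev_core is None or times[i] - times[prev_core] > eps:
            cluster += 1
        labels[i] = cluster
        prev_core = i
    core_idx = [i for i in range(n) if is_core[i]]
    core_times = [times[i] for i in core_idx]
    for i in range(n):
        if is_core[i]:
            continue
        # Border points join the nearest core point within eps; others stay noise.
        j = bisect_left(core_times, times[i])
        near = [c for c in (j - 1, j)
                if 0 <= c < len(core_idx) and abs(core_times[c] - times[i]) <= eps]
        if near:
            best = min(near, key=lambda c: abs(core_times[c] - times[i]))
            labels[i] = labels[core_idx[best]]
    return labels

def screen_by_time(times, window=60.0, step=30.0, eps=5.0, min_pts=3):
    """Keep timestamps that fall in a dense burst in at least one window.
    Choosing step < window makes consecutive windows overlap."""
    times = sorted(times)
    if not times:
        return []
    keep, start = set(), times[0]
    while start <= times[-1]:
        lo = bisect_left(times, start)
        hi = bisect_left(times, start + window)
        labels = dbscan_1d(times[lo:hi], eps, min_pts)
        keep.update(lo + k for k, lab in enumerate(labels) if lab != -1)
        start += step
    return [times[i] for i in sorted(keep)]
```

With alerts at 58, 59, 61, and 62 seconds, non-overlapping one-minute windows (`step=60.0`) cut the burst in half at the 60-second mark and each half looks like noise. With a 30-second step, the window starting at 30 seconds sees all four alerts together and keeps them.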

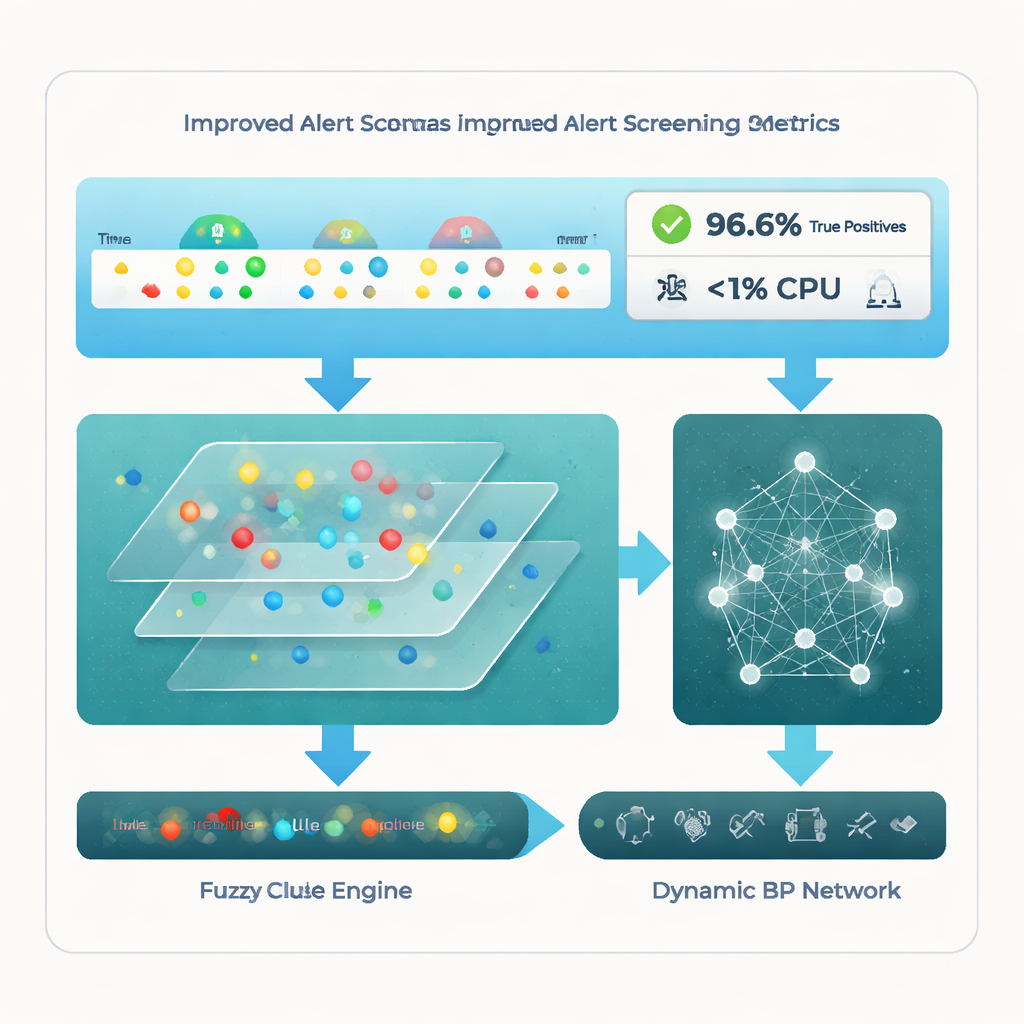

Teaching rules to cope with missing pieces

Real-world networks are imperfect: packets get dropped, devices misbehave, and some alarms never arrive. Traditional rule engines expect complete information and simply fail if one piece is missing. Here, the authors redesign the classic Rete rule system so that each condition in a rule has a weight, reflecting how important it is. Instead of demanding that every detail match perfectly, the engine checks whether enough of the important pieces line up over time. This “fuzzy” approach lets the system still recognize an attack pattern even if, say, an early probe or a minor sensor alert was never recorded. At the same time, rarely used or long-idle rule branches are pruned away to keep memory use low.
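A small Python sketch shows how weighted conditions let a rule fire on partial evidence. This is not a Rete network and not the authors' engine; it only illustrates the scoring idea. The `Condition` and `Rule` classes, the weights, the 0.75 threshold, and the idle-time pruning policy are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class Condition:
    name: str
    weight: float                      # how important this piece of evidence is
    test: Callable[[dict], bool]       # predicate applied to one alert

@dataclass
class Rule:
    name: str
    conditions: List[Condition]
    threshold: float                   # fraction of total weight needed to fire
    last_fired: float = 0.0            # timestamp of the most recent match

def match_score(rule: Rule, alerts: List[dict]) -> float:
    """Fraction of the rule's total weight covered by the observed alerts."""
    total = sum(c.weight for c in rule.conditions)
    if total == 0:
        return 0.0
    matched = sum(c.weight for c in rule.conditions
                  if any(c.test(a) for a in alerts))
    return matched / total

def evaluate(rule: Rule, alerts: List[dict], now: float) -> bool:
    """Fire when enough weighted evidence is present, even if some is missing."""
    fired = match_score(rule, alerts) >= rule.threshold
    if fired:
        rule.last_fired = now
    return fired

def prune_idle(rules: List[Rule], now: float, max_idle: float) -> List[Rule]:
    """Drop rules that have not fired for longer than max_idle seconds."""
    return [r for r in rules if now - r.last_fired <= max_idle]

# Hypothetical attack pattern: probe, repeated login failures, then a success.
brute_force = Rule(
    name="brute_force_login",
    conditions=[
        Condition("probe", 1.0, lambda a: a["type"] == "port_scan"),
        Condition("failures", 2.0, lambda a: a["type"] == "login_failed"),
        Condition("success", 2.0, lambda a: a["type"] == "login_ok"),
    ],
    threshold=0.75,
)
```

Here the early probe carries only one fifth of the total weight, so the rule still fires when that alert was never recorded (score 0.8). If the successful login is missing instead, the score drops to 0.6 and the rule stays silent.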

A neural network that reshapes itself

After patterns and rules have transformed the alerts into more meaningful features, a final stage uses a neural network to decide which events are true threats and which are benign. Unlike many machine-learning models that are fixed once designed, this network can grow or shrink its hidden layers as it trains. It starts small, adds units when doing so clearly improves performance, and trims back parts that are not pulling their weight. This adaptive design helps the model fit both simple and complex datasets without guesswork, reducing the risk of overfitting and shortening training time while keeping accuracy high.
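The grow-and-trim behavior can be pictured as a sizing decision made between training rounds. The Python sketch below covers only that decision, not the network or its training, and it is not the authors' algorithm. The function names, the 2% gain threshold, the 5% importance cutoff, and the notion of a per-unit importance score (for instance, the summed absolute outgoing weights of each hidden unit) are assumptions.

```python
def should_grow(loss_before, loss_after, min_gain=0.02):
    """True if a trial with extra hidden units cut validation loss
    by at least min_gain, measured relative to the loss before."""
    if loss_before <= 0:
        return False
    return (loss_before - loss_after) / loss_before >= min_gain

def units_to_keep(importances, min_share=0.05):
    """Indices of hidden units whose share of total importance
    is at least min_share; the rest are candidates for trimming."""
    total = sum(importances)
    if total == 0:
        return list(range(len(importances)))
    return [i for i, v in enumerate(importances) if v / total >= min_share]

def next_width(width, loss_before, loss_after_growth, importances,
               grow_by=4, min_width=2, max_width=256):
    """Pick the hidden-layer width for the next training round.
    `importances` holds one score per current hidden unit."""
    if should_grow(loss_before, loss_after_growth) and width + grow_by <= max_width:
        return width + grow_by                  # growth clearly helped: keep the new units
    kept = len(units_to_keep(importances))
    return max(min_width, kept)                 # otherwise trim units that contribute little
```

For example, if adding four units lowers validation loss from 0.50 to 0.40, an 8-unit layer grows to 12. If the trial barely changes the loss and half of the units carry almost no importance, the layer shrinks to 4.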

What the tests show in practice

The team evaluates their framework on well-known public intrusion datasets and on a large real-world collection of company alerts. Compared with four recent advanced methods (including pure clustering, specialized IoT alert systems, and tuned neural networks), the new multi-level pipeline stands out. It reaches a true positive rate of around 96.6%, meaning it correctly flags almost all real attack roots, while keeping the share of noisy or irrelevant alerts to about 18.7%. Just as striking, it does this with less than 1% CPU usage, far below that of competing approaches. Statistical tests confirm that these gains are not due to chance but to the way the method combines clustering, rule reasoning, and adaptive learning.

What this means for everyday security teams

For security analysts who drown daily in alarms, this work points toward tools that are both more accurate and easier on limited hardware. By cleaning data, grouping it intelligently over time, tolerating missing pieces, and using a self-adjusting neural network, the framework helps highlight the relatively small set of alerts that truly deserve attention. That means faster response to real attacks, fewer wasted hours chasing false leads, and better use of existing equipment. As networks grow in size and complexity, such multi-level screening could be a key ingredient in keeping digital infrastructure safe without overwhelming the people who defend it.

Citation: Ni, L., Zhang, S., Huang, K. et al. Multi-level screening method for network security alarms based on DBSCAN algorithm and rete rule inference. Sci Rep 16, 5632 (2026). https://doi.org/10.1038/s41598-026-36369-6

Keywords: network security, intrusion detection, alert filtering, machine learning, cyber attack detection