Clear Sky Science

Exploiting topic analysis models to explore psychological dimensions in social media data

Why Our Online Words Matter

Millions of people talk about their feelings on social media every day, often more openly than they would in person. Hidden inside this sea of casual comments are valuable clues about mental health, including signs of depression or self-harm. This study asks a simple question with big implications: can modern artificial intelligence sort through noisy online chatter, find meaningful themes, and help professionals better understand psychological risks—without reading every post one by one?

Turning Chaos into Themes

The researchers focused on a large collection of Reddit posts from the eRisk initiative. It includes posts from users who stated that they had been diagnosed with depression, along with posts from a control group of users with no known diagnosis. Their goal was not to diagnose individuals but to see whether topic analysis (techniques that group texts by shared themes) could reveal patterns relevant to mental health. Social media language is messy, full of slang, typos, and sudden topic shifts, so it offers a realistic but very challenging test for these methods.

Three Ways to Discover What People Talk About

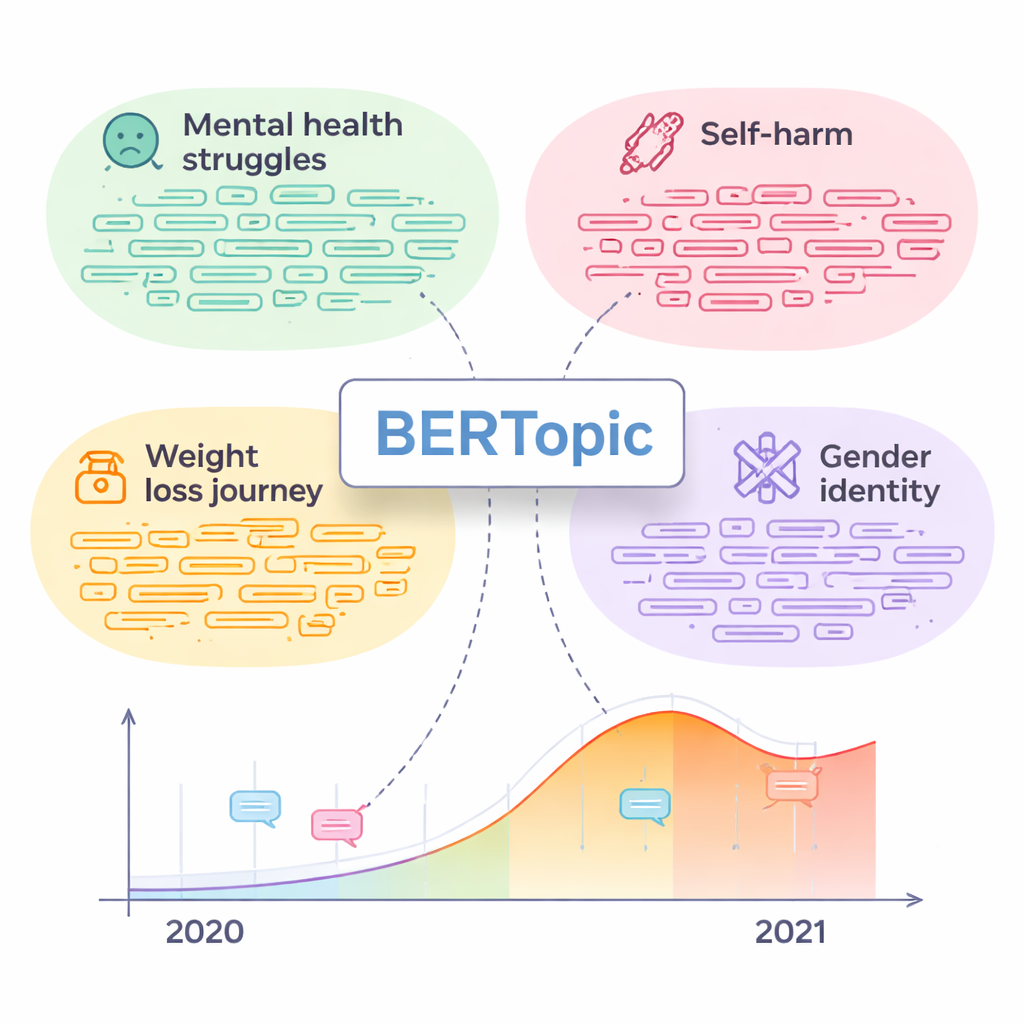

The study compared three different families of topic models. The first, Latent Dirichlet Allocation (LDA), is a classic probabilistic method. It treats each document as a mixture of topics and infers those topics from which words tend to appear together across documents. The second, BERTopic, uses powerful modern language models to turn each post into a rich numerical representation (an embedding). It then clusters similar posts and extracts key words for each group. The third, TopClus, also relies on neural networks and combines attention mechanisms and clustering in a shared mathematical space. All three were run with standard settings to produce 50 topics each, reflecting how many researchers would use them out of the box.
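To make the LDA description more concrete, here is a minimal pure-Python sketch of LDA fitted by collapsed Gibbs sampling on a toy corpus. It is not the study's setup. The corpus, the hyperparameters `alpha` and `beta`, the iteration count, and the function name `lda_gibbs` are illustrative assumptions. Real analyses, such as the 50-topic runs described above, would normally use an established library.

```python
import random
from collections import defaultdict


def lda_gibbs(docs, n_topics, alpha=0.1, beta=0.01, iters=200, seed=0):
    """Fit LDA by collapsed Gibbs sampling (illustrative sketch, not optimized).

    docs: list of token lists. Returns (top words per topic, doc-topic counts).
    """
    rng = random.Random(seed)
    vocab_size = len({w for doc in docs for w in doc})
    ndk = [[0] * n_topics for _ in docs]               # tokens of topic k in doc d
    nkw = [defaultdict(int) for _ in range(n_topics)]  # count of word w in topic k
    nk = [0] * n_topics                                # total tokens in topic k
    z = []                                             # topic assignment per token

    # Start by assigning every token to a random topic.
    for d, doc in enumerate(docs):
        z.append([])
        for w in doc:
            k = rng.randrange(n_topics)
            z[d].append(k)
            ndk[d][k] += 1
            nkw[k][w] += 1
            nk[k] += 1

    topics = range(n_topics)
    for _ in range(iters):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                # Remove this token's current assignment from the counts.
                k = z[d][i]
                ndk[d][k] -= 1
                nkw[k][w] -= 1
                nk[k] -= 1
                # p(z = t | rest) is proportional to
                # (n_dt + alpha) * (n_tw + beta) / (n_t + V * beta)
                weights = [
                    (ndk[d][t] + alpha) * (nkw[t][w] + beta) / (nk[t] + vocab_size * beta)
                    for t in topics
                ]
                k = rng.choices(topics, weights=weights)[0]
                z[d][i] = k
                ndk[d][k] += 1
                nkw[k][w] += 1
                nk[k] += 1

    # Top three words per topic, ignoring words with zero count.
    top_words = [
        [w for w, c in sorted(nkw[k].items(), key=lambda x: -x[1]) if c > 0][:3]
        for k in topics
    ]
    return top_words, ndk


# Hypothetical toy corpus with two obvious themes.
docs = [
    "sad tired sleep sad tired".split(),
    "tired sleep sad anxious".split(),
    "game team score game win".split(),
    "team win score game".split(),
]
top_words, doc_topics = lda_gibbs(docs, n_topics=2)
print(top_words)
```

The sampler keeps only count tables and resamples one token at a time. That is why LDA groups words by co-occurrence alone and does not model word meaning the way the embedding-based methods do.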

Asking Humans, Not Just Formulas

To judge which topics were truly meaningful, the team did not rely on automatic scores alone. Six trained annotators examined 150 topics, each represented by its top words and a handful of central posts. For every topic, they rated how coherent the word list was, how coherent the example posts were, and whether the words and posts matched each other. They also tried to give each topic a short, intuitive name when possible. This human-centered approach revealed a key finding: numerical “coherence” metrics, which are widely used to evaluate topic models, often disagreed with human judgment, especially on messy social media text.
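One way to check whether an automatic score agrees with human raters is to correlate the two across topics. The sketch below assumes the UMass coherence measure, which is one common automatic metric. The summary does not say which metrics the study used. The sketch also assumes Spearman rank correlation against hypothetical mean annotator ratings. All documents, topics, and ratings are invented toy data.

```python
import math


def doc_freq(doc_sets, *words):
    """Number of documents that contain all the given words."""
    return sum(1 for d in doc_sets if all(w in d for w in words))


def umass_coherence(top_words, docs):
    """UMass coherence: sum over pairs l < m of log((D(w_m, w_l) + 1) / D(w_l)).

    Assumes top_words is ordered by importance and every word occurs in docs.
    """
    doc_sets = [set(d) for d in docs]
    score = 0.0
    for m in range(1, len(top_words)):
        for l in range(m):
            joint = doc_freq(doc_sets, top_words[m], top_words[l])
            score += math.log((joint + 1) / doc_freq(doc_sets, top_words[l]))
    return score


def average_ranks(values):
    """1-based ranks, where tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(a, b):
    """Spearman's rho, computed as the Pearson correlation of the average ranks."""
    ra, rb = average_ranks(a), average_ranks(b)
    ma, mb = sum(ra) / len(ra), sum(rb) / len(rb)
    cov = sum((x - ma) * (y - mb) for x, y in zip(ra, rb))
    sa = math.sqrt(sum((x - ma) ** 2 for x in ra))
    sb = math.sqrt(sum((y - mb) ** 2 for y in rb))
    return cov / (sa * sb)


docs = [["sad", "tired", "sleep"], ["sad", "tired"], ["game", "win"], ["game", "sleep"]]
topics = [["sad", "tired"], ["game", "win"], ["sad", "game"]]
human = [4.5, 3.0, 1.5]  # hypothetical mean annotator ratings on a 1-5 scale
auto = [umass_coherence(t, docs) for t in topics]
print(auto, spearman(auto, human))
```

In this toy example the two rankings happen to agree. The study's point is that on real social media topics such correlations can be weak, which is why human ratings were needed.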

The Clear Winner and What It Revealed

Across all human ratings, BERTopic clearly produced the most understandable and specific topics. Annotators were able to name its topics far more often than those of the other models, and their agreement with one another reached a moderate level. LDA, in contrast, often grouped together unrelated words and posts that seemed almost random to the reviewers.

Once the best topics were selected, the researchers examined what people were actually talking about. Some themes, such as “Mental health struggles” and “Self-harm,” were strongly linked to users with depression and contained many posts expressing distress. Others were less obviously clinical, such as “Weight loss journey,” “Gender identity,” “Sexual dreams,” and “Social drinking etiquette.” Even so, these topics contained a high share of posts from users with depression and many signs of emotional pain. A simple time-based analysis showed that activity in some of these sensitive topics rose sharply during the COVID-19 pandemic, mirroring broader reports of worsening mental health.
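Two of the analyses described here reduce to simple counting once every post has a topic label. The first is the share of a topic's posts written by users with depression, and the second is a topic's activity over time. The sketch below assumes a hypothetical record layout and uses made-up data. Each record holds a topic label, whether the author is in the depression group, and the posting month. The topic names `topic_a` and `topic_b` are placeholders. This is not the study's code.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Post:
    topic: str       # topic assigned by the topic model (hypothetical labels)
    depressed: bool  # True if the author belongs to the depression group
    month: str       # posting month as "YYYY-MM"


def depressed_share(posts):
    """Fraction of each topic's posts written by users in the depression group."""
    total, dep = Counter(), Counter()
    for p in posts:
        total[p.topic] += 1
        dep[p.topic] += int(p.depressed)
    return {t: dep[t] / total[t] for t in total}


def monthly_counts(posts, topic):
    """Number of posts per month for one topic, in chronological order."""
    counts = Counter(p.month for p in posts if p.topic == topic)
    return dict(sorted(counts.items()))


# Made-up example records.
posts = [
    Post("topic_a", True, "2019-11"),
    Post("topic_a", True, "2020-04"),
    Post("topic_a", False, "2020-04"),
    Post("topic_b", True, "2020-05"),
    Post("topic_b", False, "2019-12"),
]
print(depressed_share(posts))
print(monthly_counts(posts, "topic_a"))
```

Sorting the "YYYY-MM" strings gives chronological order without date parsing. That is enough to spot a jump in a topic's activity around a period such as the start of the pandemic.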

From Online Patterns to Real-World Help

To better understand how serious some of these posts might be, the authors used a separate language model to roughly map the content onto items from a well-known depression questionnaire (the Beck Depression Inventory). This exploratory step suggested that certain topics, especially around mental health struggles, self-harm, body image, and gender identity, often contain language associated with moderate to severe depressive symptoms. The authors stress that such automated readings are not clinical diagnoses, but they can help highlight where expert attention is most urgently needed.
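The Beck Depression Inventory has 21 items, each scored from 0 to 3, so totals range from 0 to 63. A total is usually read against severity bands. In the sketch below, the per-item scores stand in for a language model's estimates for one post and are hypothetical. The cutoffs are the commonly cited BDI-II bands: 0 to 13 minimal, 14 to 19 mild, 20 to 28 moderate, and 29 to 63 severe. The summary does not say which version or cutoffs the study used, and other versions of the inventory use different bands.

```python
# Commonly cited BDI-II cutoffs (an assumption; other versions use different bands).
BDI_II_BANDS = [(13, "minimal"), (19, "mild"), (28, "moderate"), (63, "severe")]


def bdi_severity(item_scores, bands=BDI_II_BANDS):
    """Sum 21 item scores (each 0-3) and map the total to a severity band."""
    if len(item_scores) != 21 or any(s not in (0, 1, 2, 3) for s in item_scores):
        raise ValueError("expected 21 item scores, each an integer from 0 to 3")
    total = sum(item_scores)
    for upper, label in bands:
        if total <= upper:
            return total, label
    raise ValueError("total exceeds the highest band")  # unreachable for valid input


# Hypothetical per-item estimates for one post, e.g. produced by a language model.
estimates = [2] * 10 + [1] * 11
print(bdi_severity(estimates))
```

Aggregating such labels over a topic's posts, for example by computing the share rated moderate or severe, is one plausible way to get a topic-level picture. Like the study's own step, it would remain a rough screening signal and not a diagnosis.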

What This Means for Mental Health and Technology

In plain terms, the study shows that today’s most advanced topic models, especially BERTopic, can turn chaotic social media conversations into clear themes that align with real psychological concerns. It also shows that blindly trusting automatic quality scores is risky; human review remains essential when the goal is to support mental health decisions. In the future, similar tools could help clinicians, public agencies, and researchers monitor broad trends, spot emerging risks, and design better prevention efforts—while still leaving final judgment and care to human professionals.

Citation: Couto, M., Parapar, J. & Losada, D.E. Exploiting topic analysis models to explore psychological dimensions in social media data. Sci Rep 16, 6047 (2026). https://doi.org/10.1038/s41598-026-36339-y

Keywords: social media and depression, topic modeling, mental health patterns, online self-harm signals, language models in psychology