Clear Sky Science

Hint recognition in Chinese and Russian diplomatic discourse using large language models

Reading Between the Lines

When diplomats speak in public, what they do not say can matter as much as the words they choose. This study explores whether modern artificial intelligence can pick up the subtle hints and veiled messages in Chinese and Russian foreign ministry press conferences—signals that human listeners often miss, yet that can sway international relations.

Why Hints Matter in World Affairs

Diplomatic language is designed to be careful and polite. Governments need to defend their interests without openly provoking rivals or alarming the public. As a result, officials often rely on hints—phrases that sound neutral on the surface but quietly criticize, warn, or signal a political stance. Misreading such hints has, in the past, contributed to crises and mistrust between states. Understanding these indirect messages is especially difficult across languages and cultures, where shared background knowledge cannot be taken for granted.

From Classic Theory to Smart Machines

For decades, linguists and philosophers have studied how speakers imply more than they literally say. Early theories focused mainly on the speaker’s intentions and assumed that a rational listener could reconstruct the hidden meaning. Later work in “cognitive pragmatics” stressed that understanding hints also depends on the listener’s mental processes, cultural background, and the surrounding context. Building on these ideas, the authors describe hints as layered: the visible wording (verbal–semantic level), the culturally shaped ways of thinking behind it (linguistic–cognitive level), and the speaker’s motives and strategies, such as criticism, warning, or saving face (motivational–pragmatic level).

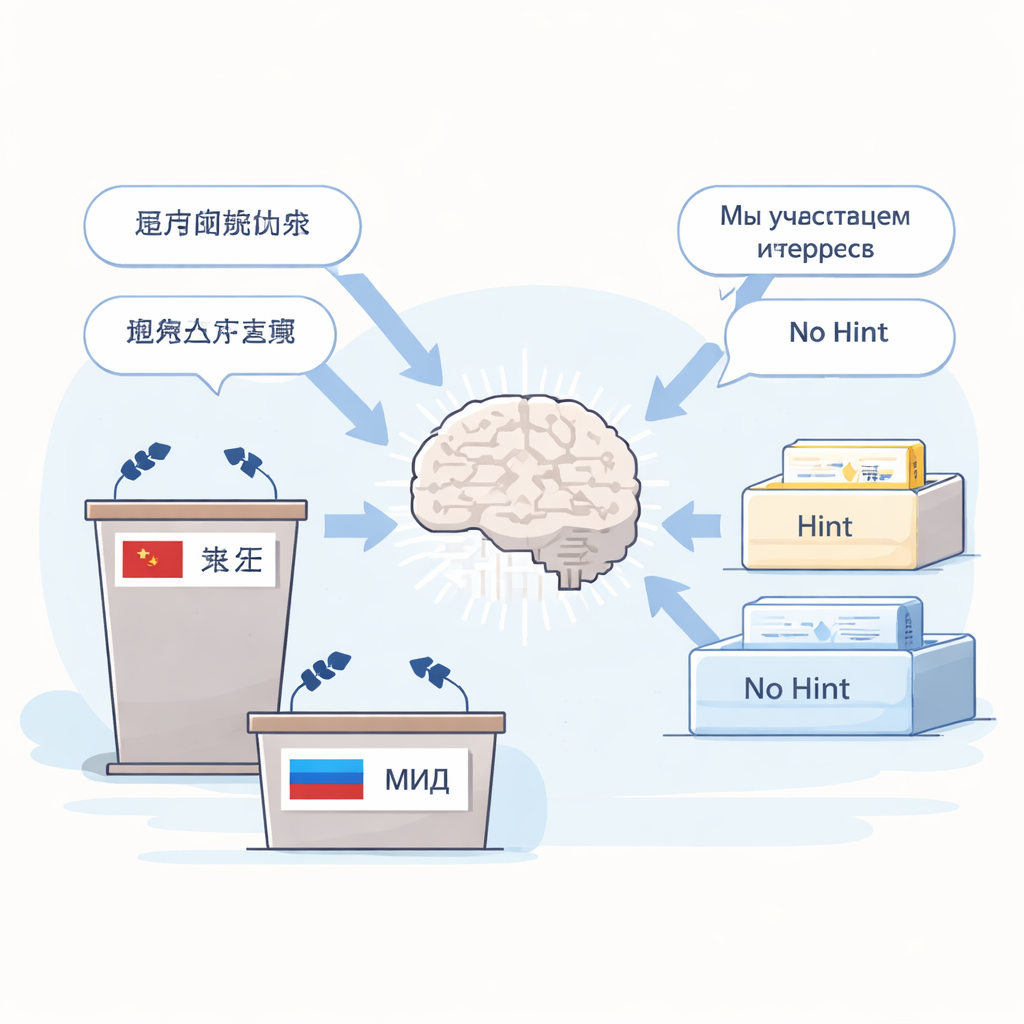

How the AI System Was Built

The researchers collected nearly 1,400 question–answer segments from official press conferences of the Chinese and Russian foreign ministries held in 2024. Expert linguists manually annotated 498 instances where spokespersons were hinting rather than speaking plainly. They grouped these into three types: “fixed hints” with stable, repeated phrasing (for example, stock diplomatic formulas), “cultural hints” whose meaning relies on shared cultural knowledge and metaphors, and “contextual hints” that can only be recognized by looking closely at the immediate situation and motives. These examples were used to build an external knowledge base and to design a set of reasoning rules for a large language model.
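One way to picture an entry in such a knowledge base is as a small record that stores the sentence, its language, its hint type, and the meaning the annotators read into it. The sketch below is only an illustration: the field names, the `HintRecord` class, and the placeholder entries are assumptions made here, not the authors' actual schema or data.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class HintType(Enum):
    """The three hint categories described in the article."""
    FIXED = "fixed"            # stable, repeated phrasing
    CULTURAL = "cultural"      # relies on shared cultural knowledge
    CONTEXTUAL = "contextual"  # depends on the immediate situation


@dataclass(frozen=True)
class HintRecord:
    """Hypothetical layout for one annotated hint (not the study's schema)."""
    language: str         # e.g. "zh" or "ru"
    sentence: str         # the spokesperson's wording
    hint_type: HintType   # category assigned by the annotators
    implied_meaning: str  # what the sentence is taken to signal


def count_by_type(records):
    """Tally how many records fall into each hint category."""
    return Counter(record.hint_type for record in records)


# Placeholder entries, invented for illustration only.
records = [
    HintRecord("ru", "<stock diplomatic formula>", HintType.FIXED, "<criticism>"),
    HintRecord("zh", "<idiom or metaphor>", HintType.CULTURAL, "<warning>"),
    HintRecord("zh", "<situation-dependent remark>", HintType.CONTEXTUAL, "<stance>"),
]

for hint_type, total in count_by_type(records).items():
    print(hint_type.value, total)
```

Keeping the hint type as an enumeration rather than free text makes it harder for inconsistent labels to slip into the knowledge base.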

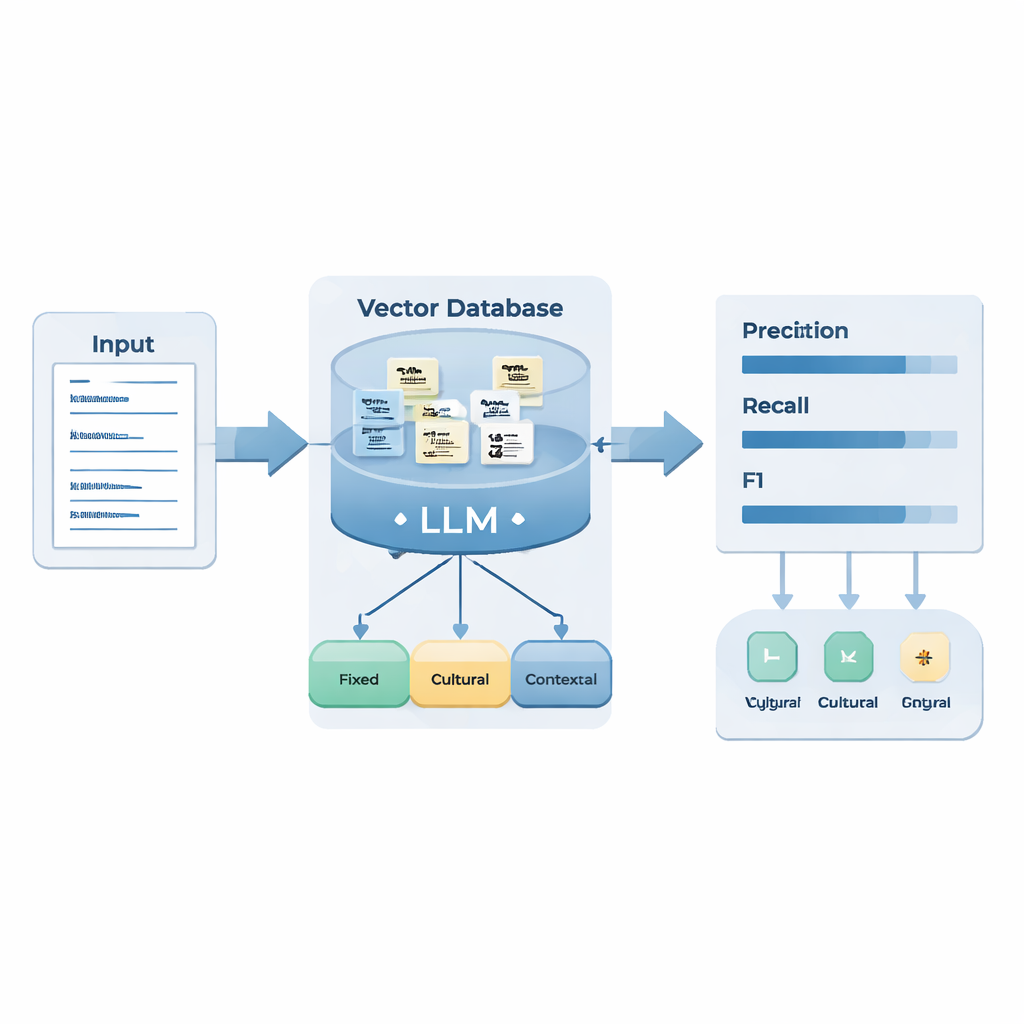

Teaching the Model to Think in Steps

The team combined two AI techniques. Retrieval-Augmented Generation (RAG) lets the model pull in relevant examples from the custom hint database whenever it processes a new press-conference answer. Chain-of-Thought (CoT) prompting then forces the model to reason step by step: identify the language, split the answer into sentences, check for known hint patterns, decide whether a sentence expresses a particular motive (such as criticism or warning) through a recognized strategy (such as factual statement, contrast, or irony), and finally label it as a fixed, cultural, or contextual hint, or as “no hint.” The system also performs a self-check to ensure that the implied meaning is truly different from the literal wording.
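The retrieve-then-reason idea can be sketched in a few lines. This is a toy version under stated assumptions: retrieval here uses simple word overlap (Jaccard similarity) as a stand-in for whatever retriever the authors used, the knowledge-base entries are invented, and the function names (`split_sentences`, `retrieve`, `build_cot_prompt`) are hypothetical. The code only assembles the prompt text; it does not call any language model.

```python
import re

# Invented knowledge-base entries, for illustration only.
KNOWLEDGE_BASE = [
    {"text": "we have taken note of the relevant reports", "hint_type": "fixed"},
    {"text": "certain countries should reflect on their own actions", "hint_type": "contextual"},
    {"text": "he who ties the bell must untie it", "hint_type": "cultural"},
]


def split_sentences(text):
    """Naive splitter on Latin and Chinese sentence-ending punctuation."""
    parts = re.findall(r"[^.!?。！？]+[.!?。！？]?", text)
    return [part.strip() for part in parts if part.strip()]


def tokens(text):
    """Lowercased word set; unsegmented Chinese text would need a real tokenizer."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(sentence, kb, k=2):
    """Return up to k entries ranked by word overlap (a stand-in retriever)."""
    query = tokens(sentence)
    scored = []
    for entry in kb:
        entry_tokens = tokens(entry["text"])
        union = query | entry_tokens
        score = len(query & entry_tokens) / len(union) if union else 0.0
        if score > 0:
            scored.append((score, entry))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [entry for _, entry in scored[:k]]


def build_cot_prompt(answer, kb):
    """Assemble retrieved examples plus the step-by-step instructions."""
    examples = []
    for sentence in split_sentences(answer):
        for entry in retrieve(sentence, kb):
            line = f'- "{entry["text"]}" ({entry["hint_type"]} hint)'
            if line not in examples:
                examples.append(line)
    steps = [
        "Identify the language of the answer.",
        "Split the answer into sentences.",
        "Check each sentence against the known hint examples.",
        "Decide whether it expresses a motive through a recognized strategy.",
        "Label it as fixed, cultural, contextual, or no hint.",
        "Self-check: does the implied meaning differ from the literal wording?",
    ]
    numbered = [f"Step {i}: {step}" for i, step in enumerate(steps, 1)]
    return "\n".join(
        ["Known hint examples:", *(examples or ["- none retrieved"]), "",
         *numbered, "", "Answer to analyse:", answer]
    )


print(build_cot_prompt("Certain countries should reflect on what they did.", KNOWLEDGE_BASE))
```

Writing the steps out as numbered instructions mirrors the order described in the article; a production system would likely replace the overlap score with embedding-based search.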

How Well Did It Work?

To test the system, the authors used new 2025 press-conference data in both languages. Overall, the enhanced model did a credible job at spotting hidden messages: it caught most genuine hints (high recall) and achieved a respectable balance between catching hints and overcalling them (an F1 score of 0.83 for Russian and 0.76 for Chinese). It was especially strong on fixed hints in both languages, supporting the idea that stable patterns are easiest for machines to learn. However, it struggled more with Chinese cultural and contextual hints than with Russian ones. The authors link this gap to differences in style: Russian diplomatic speech often uses vivid metaphors and sharp contrasts that signal criticism or warning quite clearly, while Chinese discourse relies more on neutral formulas, idioms, and context-dependent politeness, which are harder for the model to distinguish from literal statements.
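For readers unfamiliar with the F1 score, it is the harmonic mean of precision (how many flagged hints were real) and recall (how many real hints were flagged). The short sketch below shows the standard calculation. The counts in the example are hypothetical and chosen only to illustrate a high-recall, lower-precision case; they are not the study's figures.

```python
def precision_recall_f1(true_pos, false_pos, false_neg):
    """Standard precision, recall, and F1 from raw counts."""
    flagged = true_pos + false_pos   # everything the model called a hint
    actual = true_pos + false_neg    # everything that really was a hint
    precision = true_pos / flagged if flagged else 0.0
    recall = true_pos / actual if actual else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Hypothetical counts: 9 hints caught, 3 false alarms, 1 hint missed.
p, r, f1 = precision_recall_f1(true_pos=9, false_pos=3, false_neg=1)
print(f"precision={p:.2f} recall={r:.2f} F1={f1:.2f}")
```

Because the harmonic mean is pulled toward the lower of the two values, a model that catches nearly every hint but raises many false alarms still ends up with a modest F1.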

What the Errors Reveal—and How to Improve

Looking closely at the mistakes, the authors found three recurring problems. Sometimes the model “over-read” the text, inventing hidden meanings where none existed. Sometimes it detected a hint but assigned the wrong type, blurring the line between fixed and contextual cases. And sometimes it simply treated plain wording as a hint because certain sensitive words or familiar patterns were present. To address these weaknesses, the paper suggests four measures: adding many clear “no-hint” diplomatic phrases as negative examples; forcing the system to anchor its inferences more tightly to the actual question and surrounding context; matching sentences against the knowledge base more than once, using rewritten versions of each sentence; and inserting a pre-filter and self-evaluation step that asks whether the meaning is already explicit or truly implicit.
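Two of these suggestions, the negative examples and the repeated matching with rewrites, can be illustrated with a small pre-filter. Everything in this sketch is an assumption made for illustration: the phrases, the single synonym rewrite, and the `prefilter` function are invented, and a pattern match is treated only as a candidate that would still go on to the model's self-evaluation step rather than as a final label.

```python
import re

# Invented examples; a real system would draw these from annotated data.
NEGATIVE_EXAMPLES = [
    "Thank you for your question.",
    "The meeting will be held next week.",
]
HINT_PATTERNS = {"certain countries": "fixed"}


def normalize(text):
    """Lowercase, drop punctuation, and collapse whitespace."""
    no_punct = re.sub(r"[^\w\s]", "", text.lower())
    return re.sub(r"\s+", " ", no_punct).strip()


def swap_synonyms(text):
    """Hypothetical rewrite: map a paraphrase onto a known pattern."""
    return text.replace("some states", "certain countries")


# Each rewrite gives the matcher another pass over the same sentence.
REWRITES = [lambda text: text, swap_synonyms]


def prefilter(sentence):
    """Return (status, hint_type): 'no hint', 'candidate', or 'undecided'."""
    norm = normalize(sentence)
    if norm in {normalize(example) for example in NEGATIVE_EXAMPLES}:
        return "no hint", None
    for rewrite in REWRITES:
        candidate = rewrite(norm)
        for pattern, hint_type in HINT_PATTERNS.items():
            if pattern in candidate:
                # Only a candidate: the model must still confirm it is implicit.
                return "candidate", hint_type
    return "undecided", None


print(prefilter("Thank you for your question."))
print(prefilter("Some states should look in the mirror."))
```

Checking the negative examples first means routine courtesies are set aside before any pattern matching runs, which targets the over-reading problem described above.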

Why This Matters for the Rest of Us

For non-specialists, the key takeaway is that large language models can already help analysts sift through large volumes of official statements and flag places where governments may be speaking between the lines. At the same time, the study highlights how strongly diplomacy depends on culture, history, and style—factors that remain challenging even for advanced AI. By merging linguistic theory with modern AI tools, this work points toward more reliable systems for tracking subtle signals in global politics, while making clear that human judgment and cross-cultural expertise are still essential for interpreting what is left unsaid.

Citation: Guo, Y., Wang, X. Hint recognition in Chinese and Russian diplomatic discourse using large language models. Sci Rep 16, 5751 (2026). https://doi.org/10.1038/s41598-026-36338-z

Keywords: diplomatic language, implicit meaning, large language models, cross-lingual analysis, retrieval-augmented generation