Clear Sky Science

Scaling digital models

Why Shrinking Machines Matters

Before new construction machines ever touch dirt, engineers now test their virtual cousins. These digital stand‑ins, called digital models, help predict how real equipment will behave, saving money and improving safety. But each size of machine (full‑scale, mid‑size, or tabletop) usually needs its own expensive round of sensors and testing to make its digital model trustworthy. This paper shows a way to calibrate just one real machine and then “shrink” or “grow” that knowledge so it applies to machines of different sizes, without repeating all the experiments.

From Real Machines to Their Virtual Twins

Digital models try to mimic the true physics of a machine: how heavy parts move, how hydraulic cylinders push, how soil resists a digging bucket. When these models are tuned with real measurements from sensors on the machine, they can become digital twins, updated as the machine works. For construction vehicles like wheel loaders, such models are especially useful because the industry struggles with low productivity in repetitive tasks. Previous work has shown that while physics‑based simulations can accurately track motion when a loader is just driving, they often fail badly when the bucket is digging into soil. In those moments, forces become complex and hard to predict. Careful experiments with load pins, pressure sensors, and motion trackers can fix this, but repeating that process for every size of loader in a product family quickly becomes too costly.

Why Simple Scaling Breaks Down

Engineers have a long tradition of using scale models: wind tunnels for aircraft, miniature bridges, and reduced‑size ships. The usual tool behind this is dimensional analysis, which rewrites the physics in terms of dimensionless numbers, ratios that should take the same values at any scale if the systems are perfectly similar. In practice, real product lines rarely obey these perfect “similitude” rules. Different loaders may have different proportions, different hydraulic layouts, or slightly altered materials. Those mismatches, called distorted scaling factors, change the relationships among the key dimensionless numbers. Traditional formulas and simple regression tools cannot reliably capture these distortions, especially when the underlying behavior is highly nonlinear. As a result, classic scaling laws can give large errors when applied directly to modern industrial machines.
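To make the textbook idea concrete, here is a minimal Python sketch of scaling under perfect similitude. The dimensionless group used, Pi = F / (rho g L^3), is a hypothetical choice for a weight-dominated load and is not taken from the paper. The function names and all numbers are placeholders for illustration.

```python
import math

# Hypothetical dimensionless group for a weight-dominated load:
#   Pi = F / (rho * g * L^3)
# Chosen for illustration only; it is not one of the paper's groups.
def pi_group(force_n, density_kg_m3, gravity_m_s2, length_m):
    return force_n / (density_kg_m3 * gravity_m_s2 * length_m ** 3)

def textbook_scaled_force(force_ref_n, length_ref_m, length_new_m):
    """Perfect similitude with the same density and gravity on both machines:
    equal Pi implies F_new = F_ref * (L_new / L_ref)**3."""
    return force_ref_n * (length_new_m / length_ref_m) ** 3

# Placeholder values, not measurements from any real loader.
RHO, G = 1600.0, 9.81          # soil density [kg/m^3], gravity [m/s^2]
force_ref = 50_000.0           # joint force on the reference machine [N]
length_ref, length_small = 2.0, 0.2  # characteristic lengths [m]

force_small = textbook_scaled_force(force_ref, length_ref, length_small)

# Under perfect similitude the dimensionless group matches at both scales.
pi_ref = pi_group(force_ref, RHO, G, length_ref)
pi_small = pi_group(force_small, RHO, G, length_small)
print(force_small, math.isclose(pi_ref, pi_small))
```

When the two machines are not geometrically similar, the groups no longer match, and a cube-law prediction of this kind can be far off. That mismatch is the distortion the article describes.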

Letting Data Learn the Distortions

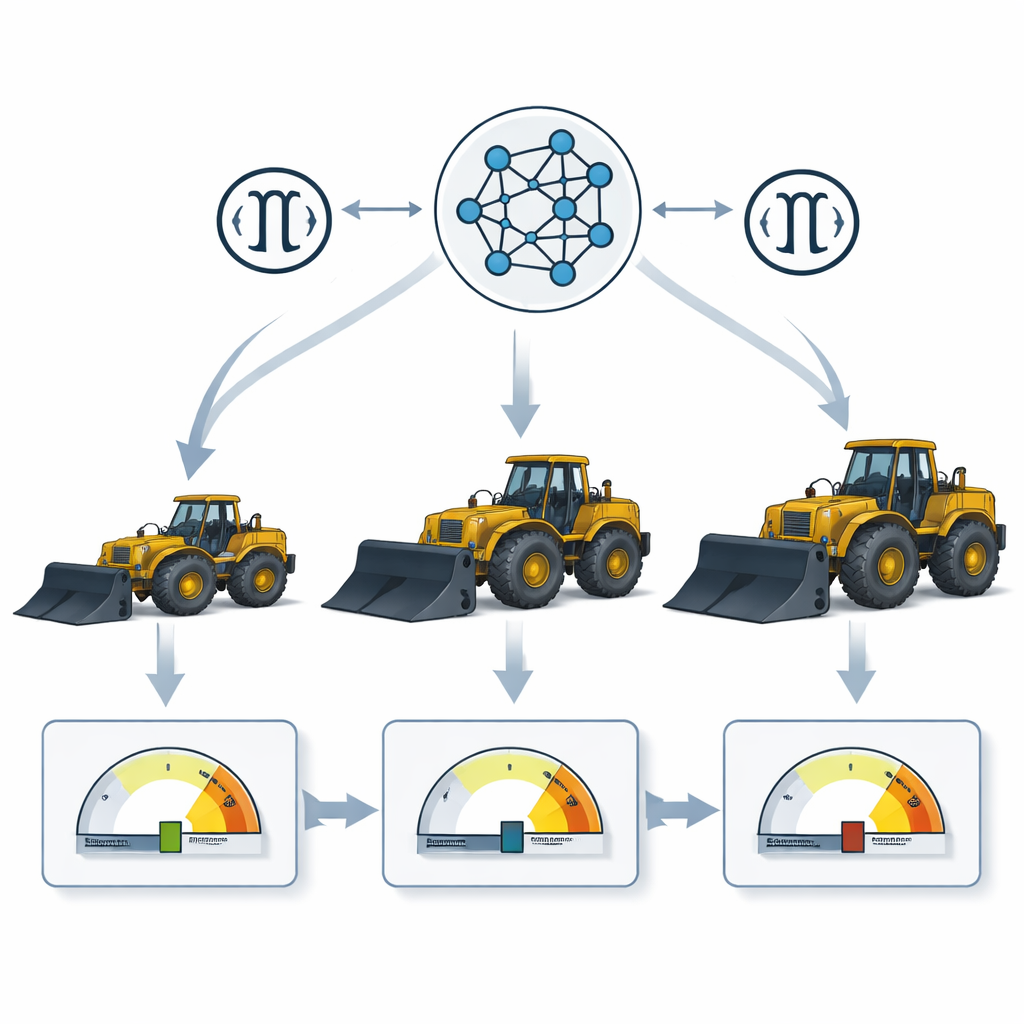

The authors propose a new framework that lets machine learning learn how scaling really behaves when the neat textbook assumptions fail. First, they use dimensional analysis to reduce a complex loader mechanism to a small set of influential variables, such as joint forces, bucket weight, hydraulic pressures, and accelerations. These are combined into dimensionless groups that describe the system’s behavior more compactly. Next, they introduce “distortion terms,” which measure how each of these groups differs between a reference machine (say, a medium‑size loader) and another machine (a larger or smaller one). A neural network is then trained to map these distortions to a single prediction factor that tells how much to adjust a key quantity—here, the force in a critical bucket joint—when moving from one scale to another. Instead of hand‑crafting a new model for every loader, the network discovers this mapping directly from simulated and measured data.
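The data flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions. The distortion term is assumed to be the ratio of each dimensionless group between the two machines, and the prediction factor is assumed to multiply the reference force. The network weights are random placeholders rather than trained values. None of the names, layer sizes, or numbers come from the paper.

```python
import math
import random

def distortion_terms(pi_ref, pi_other):
    """Assumed definition: ratio of each dimensionless group, other / reference."""
    return [other / ref for ref, other in zip(pi_ref, pi_other)]

def init_layer(n_in, n_out, rng):
    """Random placeholder weights; a real model would learn these from data."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def dense(x, layer, activation):
    """One fully connected layer: activation(W @ x + b)."""
    weights, biases = layer
    return [activation(sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, biases)]

def softplus(z):
    return math.log1p(math.exp(z))

def predict_factor(distortions, hidden, output):
    """Feed-forward pass: distortions -> tanh hidden layer -> positive factor."""
    h = dense(distortions, hidden, math.tanh)
    return dense(h, output, softplus)[0]  # softplus keeps the factor positive

rng = random.Random(0)
hidden = init_layer(3, 8, rng)   # 3 distortion terms in, 8 hidden units (arbitrary)
output = init_layer(8, 1, rng)   # one prediction factor out

# Placeholder dimensionless groups for a reference and a smaller machine.
pi_ref = [0.82, 1.40, 0.15]
pi_small = [0.90, 1.10, 0.21]

d = distortion_terms(pi_ref, pi_small)
factor = predict_factor(d, hidden, output)

force_ref = 50_000.0                      # placeholder reference joint force [N]
force_small_estimate = factor * force_ref  # assumed multiplicative correction
print(d, factor, force_small_estimate)
```

With untrained weights the printed factor is meaningless. The sketch only shows where the distortion terms enter and where the learned factor is applied.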

Testing the Idea with Three Wheel Loaders

To test the method, the team started from a detailed digital model of an industrial wheel loader that had already been carefully calibrated with sensors. They paired it with a larger commercial loader and a tiny 11‑kilogram desktop model. The medium and large machines provided the training data, generated by realistic simulations of digging motions, while the miniature loader was held back as an unseen test case. Several machine‑learning setups were tried, including a standard feed‑forward neural network and more complex recurrent networks that track time histories. The best performer was the simpler feed‑forward network, which predicted the scaling factor for joint forces with near‑perfect statistical accuracy on the training scales. When applied to the miniature loader, whose data it had never seen, the method cut the average error in estimated joint forces to about 4 percent, compared with more than 40 percent when using textbook scaling alone.
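An average error of this kind could be computed as a mean absolute percentage error over the force samples. The article does not specify the exact metric used in the paper, so the sketch below is one plausible reading. The function name and the sample values are synthetic placeholders, not results from the study.

```python
def mean_abs_percent_error(reference, predicted):
    """Mean of |predicted - reference| / |reference|, expressed in percent."""
    if len(reference) != len(predicted) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    total = sum(abs(p - r) / abs(r) for r, p in zip(reference, predicted))
    return 100.0 * total / len(reference)

# Synthetic joint-force samples [N], for illustration only.
reference_forces = [100.0, 200.0, 400.0]
predicted_forces = [110.0, 190.0, 400.0]

error_percent = mean_abs_percent_error(reference_forces, predicted_forces)
print(error_percent)
```

Running the same function on the textbook-scaled forces and on the network-corrected forces would give the two numbers being compared.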

What This Means for Future Machines

For a non‑specialist, the takeaway is that companies may soon be able to calibrate one well‑instrumented “hero” machine and then reliably translate that knowledge to an entire family of larger and smaller machines. By combining the discipline of dimensional analysis with the flexibility of neural networks, this approach turns messy real‑world differences into learnable patterns. That could dramatically reduce the number of sensors, tests, and engineering hours needed to build accurate digital twins across a product line. Beyond wheel loaders, the same strategy could help design and test many other complex systems—from cranes and robots to energy devices—whenever building and instrumenting every version at full size would be too slow or too expensive.

Citation: Karanfil, D., Ravani, B. Scaling digital models. Sci Rep 16, 5962 (2026). https://doi.org/10.1038/s41598-026-36310-x

Keywords: digital twin, machine learning, dimensional analysis, construction equipment, model scaling