Clear Sky Science · en

Deep learning with Fourier features for regressive flow field reconstruction from sparse sensor measurements

Why guessing the wind matters

Imagine trying to understand how air flows around an airplane wing, a wind turbine, or even a city block, when you can afford to place only a handful of sensors. Engineers face this problem constantly: full simulations or dense measurements of a flow field are expensive, but decisions about safety, efficiency, and climate often depend on knowing the whole picture. This paper presents FLRNet, a deep‑learning method that infers an entire flow pattern from just a few sensor readings. In the authors' tests it is more accurate and more robust than the baseline methods it is compared against, across a wide range of flow conditions.

From a few readings to a full picture

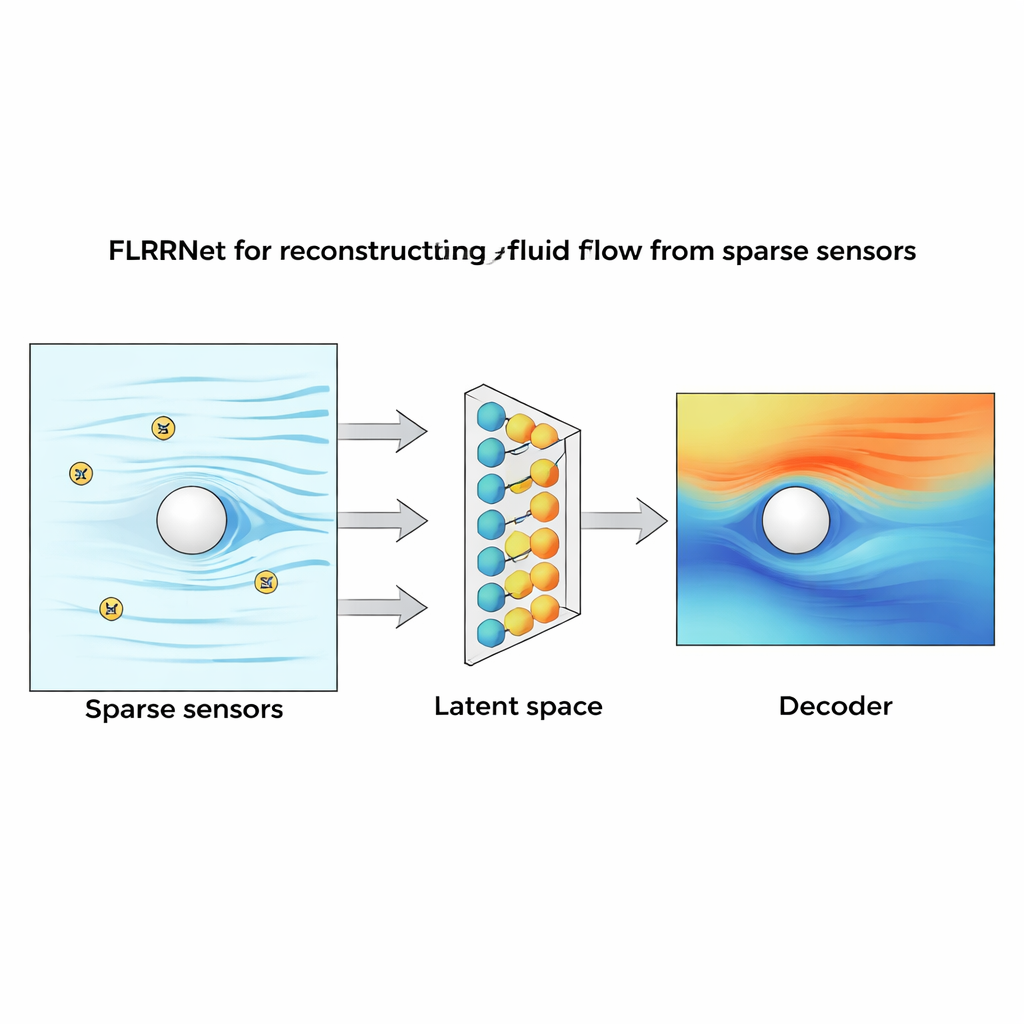

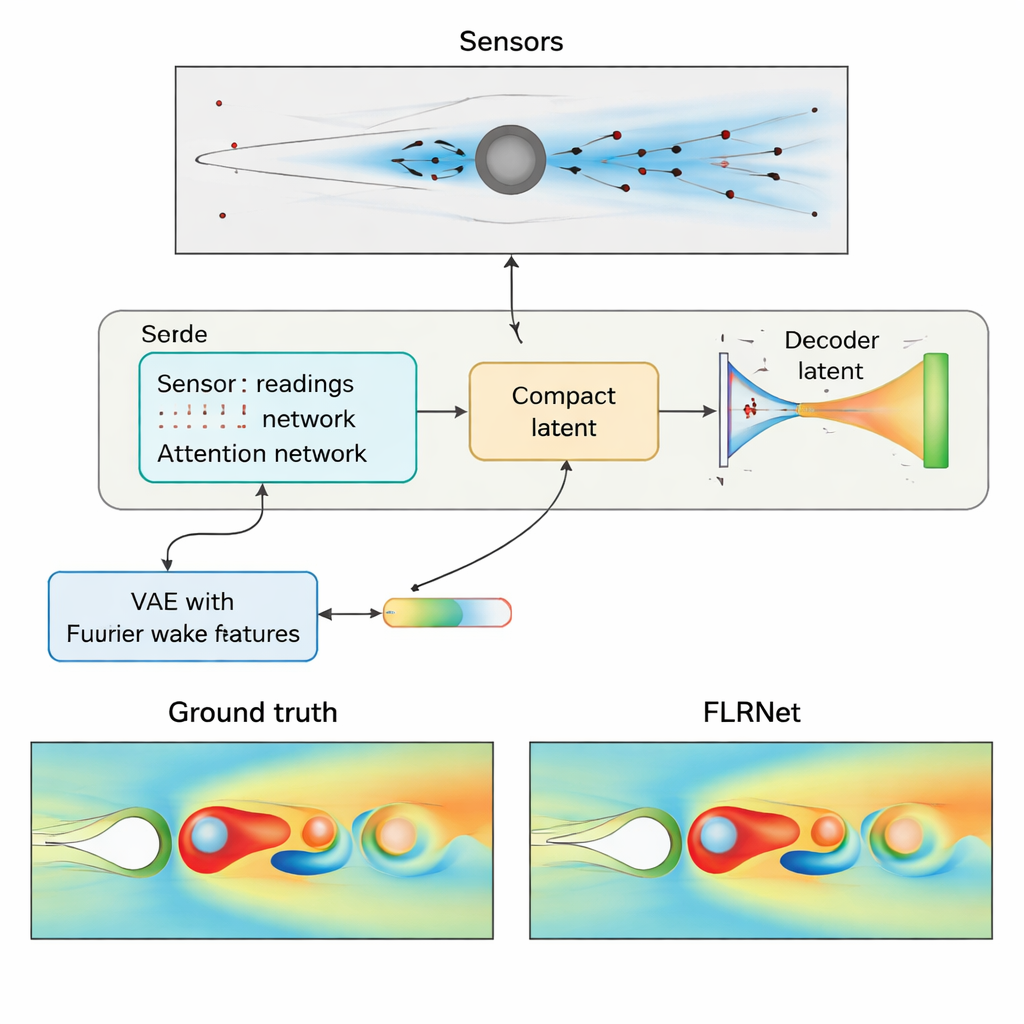

In a typical flow experiment or simulation, the underlying fluid field contains millions of values in space and time, while sensors may record only a few dozen numbers. Directly inverting this mapping from “few” to “many” is mathematically ill‑posed: many different flow states could produce the same sparse readings. Earlier approaches either solved a new optimization problem every time data arrived, or trained machine‑learning models that worked only for a narrow range of conditions and often produced overly smooth, blurry reconstructions. The authors reframe the task: instead of jumping straight from sensor data to the full flow, they first learn a compact internal description, a kind of “flow fingerprint”, and then learn how sensor readings relate to that fingerprint.
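To make the ill‑posedness concrete, here is a minimal sketch in Python. It is not taken from the paper: the field size, the number of sensors, and the names `measure`, `field_a`, and `field_b` are illustrative assumptions. It builds two clearly different "flow states" that nevertheless give identical readings at every sensor.

```python
import numpy as np

rng = np.random.default_rng(0)

n_points = 1000  # size of a flattened toy field (assumed; real fields are far larger)
sensor_idx = rng.choice(n_points, size=8, replace=False)  # 8 hypothetical sensor locations


def measure(field):
    """Return the sparse sensor readings taken from a flattened field."""
    return field[sensor_idx]


# State A: an arbitrary smooth field.
x = np.linspace(0.0, 1.0, n_points)
field_a = np.sin(2.0 * np.pi * x)

# State B: state A plus a perturbation that is exactly zero at every sensor.
perturbation = rng.normal(size=n_points)
perturbation[sensor_idx] = 0.0
field_b = field_a + perturbation

# The sensors cannot tell the two states apart, although the fields differ a lot.
same_readings = np.allclose(measure(field_a), measure(field_b))
field_gap = np.linalg.norm(field_a - field_b)
print(same_readings, field_gap)
```

Any reconstruction method therefore needs extra information about which fields are plausible. In the approach described here, that information comes from the learned compact representation.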

Teaching a network to dream in flows

To build this fingerprint, FLRNet uses a variational autoencoder (VAE), a type of neural network that learns to compress complex data into a low‑dimensional latent space and then reconstruct it. The encoder converts a detailed flow snapshot into a short numerical code; the decoder learns to expand that code back into a full flow field. Crucially, the authors enhance this VAE with two ideas borrowed from modern image processing. First, they feed in Fourier features derived from the spatial coordinates, which help the network represent fine, high‑frequency structures such as sharp vortices that standard networks tend to wash out. Second, they add a “perceptual loss” term, which compares flows not just pixel by pixel but through features extracted by a pre‑trained vision network, nudging the reconstructions to preserve visually and physically important patterns.
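The two ingredients can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the authors' implementation: the random frequency matrix `freqs`, its scale, the loss weights `beta` and `lam`, and the function names are all hypothetical. The `gradient_features` function is only a simple stand‑in for the pre‑trained vision network mentioned above.

```python
import numpy as np


def fourier_features(coords, freqs):
    """Map coordinates (N, d) to sin/cos features of shape (N, 2 * m)."""
    proj = 2.0 * np.pi * coords @ freqs  # (N, m) projected phases
    return np.concatenate([np.sin(proj), np.cos(proj)], axis=-1)


def kl_to_standard_normal(mu, logvar):
    """KL divergence between N(mu, exp(logvar)) and N(0, I), summed over dims."""
    return 0.5 * np.sum(np.exp(logvar) + mu**2 - 1.0 - logvar)


def gradient_features(field):
    """Stand-in feature extractor (assumption): spatial gradients of the field."""
    return np.stack(np.gradient(field))


def vae_loss(target, recon, mu, logvar, feature_fn, beta=1e-3, lam=1e-1):
    """Pixel-wise error + weighted feature-space error + weighted KL term."""
    pixel = np.mean((target - recon) ** 2)
    perceptual = np.mean((feature_fn(target) - feature_fn(recon)) ** 2)
    return pixel + lam * perceptual + beta * kl_to_standard_normal(mu, logvar)


rng = np.random.default_rng(0)

# Coordinates of a 32 x 64 grid on the unit square (grid size is an assumption).
ys, xs = np.meshgrid(np.linspace(0, 1, 32), np.linspace(0, 1, 64), indexing="ij")
coords = np.stack([xs.ravel(), ys.ravel()], axis=-1)  # (2048, 2)

freqs = rng.normal(scale=10.0, size=(2, 16))  # 16 random frequencies (assumed)
features = fourier_features(coords, freqs)    # (2048, 32)

# Sanity check: a perfect reconstruction with a standard-normal code costs nothing.
target = np.sin(2.0 * np.pi * 3.0 * xs)
loss_perfect = vae_loss(target, target, np.zeros(8), np.zeros(8), gradient_features)
print(features.shape, loss_perfect)
```

A larger frequency scale gives the network access to more rapidly oscillating inputs, which is one common way to help it represent sharp structures. The scale actually used in the paper is not given in this summary.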

Listening carefully to sparse sensors

Once this compact flow language is learned, a second network learns to translate from sensor readings into the latent code. Here the authors use an attention‑based design, similar in spirit to those used in modern language models. The sensor measurements are embedded and passed through a series of attention blocks that allow the network to weigh which sensors matter most for a given flow state. A global attention pooling step distills all sensor information into a single vector, which is then mapped to the latent variables that the decoder can interpret. During use, only this sensor network and the decoder are needed, so FLRNet can rapidly turn new measurements into full flow reconstructions.
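The sensor‑to‑latent pathway can be sketched as follows. This is a rough, single‑head illustration with random, untrained weights, not the architecture from the paper. The token layout (reading plus sensor position), the dimensions, and every parameter name in `params` are assumptions made for the example.

```python
import numpy as np


def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(tokens, wq, wk, wv):
    """One single-head attention block with a residual connection."""
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv
    scores = q @ k.T / np.sqrt(k.shape[-1])  # (n_sensors, n_sensors)
    return tokens + softmax(scores) @ v


def attention_pool(tokens, query):
    """Collapse all sensor tokens into one vector using learned weights."""
    weights = softmax(tokens @ query / np.sqrt(tokens.shape[-1]))  # (n_sensors,)
    return weights @ tokens, weights


def sensors_to_latent(readings, positions, params):
    """Embed sensors, apply attention blocks, pool, and map to a latent code."""
    inputs = np.concatenate([readings[:, None], positions], axis=-1)  # (n, 3)
    tokens = np.tanh(inputs @ params["embed"])                        # (n, d)
    for wq, wk, wv in params["blocks"]:
        tokens = self_attention(tokens, wq, wk, wv)
    pooled, weights = attention_pool(tokens, params["query"])
    return pooled @ params["to_latent"], weights


rng = np.random.default_rng(0)
n_sensors, d_model, d_latent = 16, 32, 8  # illustrative sizes (assumed)

params = {
    "embed": rng.normal(scale=0.5, size=(3, d_model)),
    "blocks": [tuple(rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(3))
               for _ in range(2)],
    "query": rng.normal(size=d_model),
    "to_latent": rng.normal(scale=0.1, size=(d_model, d_latent)),
}

readings = rng.normal(size=n_sensors)        # fake sensor values
positions = rng.uniform(size=(n_sensors, 2))  # fake sensor coordinates
latent, weights = sensors_to_latent(readings, positions, params)
print(latent.shape, weights.sum())
```

At inference time the resulting `latent` vector would be handed to the trained decoder. The pooling weights indicate how much each sensor contributes to the code for a given set of readings.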

Putting the method to the test

To evaluate FLRNet, the authors choose a classic benchmark: air flowing past a circular cylinder in a rectangular channel. By varying the Reynolds number from 10 to 10,000, they generate flow regimes that span steady, smooth patterns, unsteady vortex shedding, and fully chaotic wakes. They then place 8, 16, or 32 virtual sensors in one of three layouts (randomly in the domain, concentrated around the cylinder, or near the outer walls) and ask FLRNet and several alternatives to reconstruct the full velocity field. Compared with a multilayer perceptron and a method based on proper orthogonal decomposition, FLRNet consistently achieves lower errors, sharper structures, and better preservation of vortex patterns, especially in complex, high‑Reynolds‑number flows and when sensors are very sparse.
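The three sensor layouts can be illustrated with a short sketch. The channel size, cylinder position and radius, ring distance, and wall offset below are invented for the example and are not the values used in the study. The function name `place_sensors` is likewise hypothetical.

```python
import numpy as np

# Assumed geometry, for illustration only.
LX, LY = 4.0, 1.0          # channel length and height
CX, CY, R = 1.0, 0.5, 0.1  # cylinder centre and radius


def outside_cylinder(points):
    """True for points that lie in the fluid, not inside the cylinder."""
    return np.hypot(points[:, 0] - CX, points[:, 1] - CY) > R


def place_sensors(n, layout, rng):
    """Return an (n, 2) array of sensor coordinates for the chosen layout."""
    if layout == "random":
        pts = np.empty((0, 2))
        while len(pts) < n:  # rejection sampling: discard points inside the cylinder
            cand = rng.uniform([0.0, 0.0], [LX, LY], size=(4 * n, 2))
            pts = np.vstack([pts, cand[outside_cylinder(cand)]])
        return pts[:n]
    if layout == "cylinder":
        theta = np.linspace(0.0, 2.0 * np.pi, n, endpoint=False)
        ring = 1.5 * R  # ring of sensors just off the cylinder surface
        return np.column_stack([CX + ring * np.cos(theta), CY + ring * np.sin(theta)])
    if layout == "walls":
        xs = np.linspace(0.0, LX, n // 2 + 2)[1:-1]  # n // 2 interior stations (n even)
        bottom = np.column_stack([xs, np.full_like(xs, 0.05 * LY)])
        top = np.column_stack([xs, np.full_like(xs, 0.95 * LY)])
        return np.vstack([bottom, top])
    raise ValueError(f"unknown layout: {layout}")


rng = np.random.default_rng(0)
layouts = {name: place_sensors(16, name, rng) for name in ("random", "cylinder", "walls")}
for name, pts in layouts.items():
    print(name, pts.shape)
```

Readings for a given layout would then be obtained by sampling the simulated velocity field at these coordinates, for instance by taking the nearest grid point.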

Sharper details, less noise, more realism

Beyond simple error scores, the authors probe how each method distributes its mistakes across spatial scales. Using Fourier analysis, they show that traditional models tend to lose high‑frequency content, smoothing away small‑scale features. FLRNet, thanks to its Fourier features and perceptual loss, recovers more of the fine‑scale energy while keeping overall errors low. It also proves more robust when artificial noise is added to the sensor readings: as noise levels grow, FLRNet’s reconstructions degrade more gracefully than those of the baselines. Importantly, its performance remains strong across all tested flow regimes, rather than being tuned to one particular Reynolds number.
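A scale‑by‑scale comparison of this kind can be sketched with a radially binned energy spectrum. The example below is a toy illustration rather than the paper's analysis. The test field, the 64 x 64 periodic grid, and the 3 x 3 box blur (a stand‑in for an overly smooth reconstruction) are all assumptions.

```python
import numpy as np


def radial_energy_spectrum(field):
    """Sum the 2D Fourier power of a field into integer wavenumber bins."""
    ny, nx = field.shape
    power = np.abs(np.fft.fft2(field)) ** 2 / (nx * ny) ** 2
    ky = np.fft.fftfreq(ny) * ny  # integer wavenumbers along y
    kx = np.fft.fftfreq(nx) * nx  # integer wavenumbers along x
    k = np.sqrt(ky[:, None] ** 2 + kx[None, :] ** 2)
    bins = np.rint(k).astype(int)
    return np.bincount(bins.ravel(), weights=power.ravel())


def box_blur(field):
    """Periodic 3 x 3 mean filter, mimicking an oversmoothed reconstruction."""
    out = np.zeros_like(field)
    for dy in (-1, 0, 1):
        for dx in (-1, 0, 1):
            out += np.roll(field, (dy, dx), axis=(0, 1))
    return out / 9.0


n = 64
y, x = np.meshgrid(np.arange(n) / n, np.arange(n) / n, indexing="ij")

# Toy "truth": one large-scale wave (k = 2) plus one small-scale wave (k = 20).
truth = np.sin(2.0 * np.pi * 2 * x) + 0.3 * np.sin(2.0 * np.pi * 20 * y)
blurry = box_blur(truth)

spec_true = radial_energy_spectrum(truth)
spec_blur = radial_energy_spectrum(blurry)

# Fraction of energy retained at each of the two scales after smoothing.
kept_large = spec_blur[2] / spec_true[2]
kept_small = spec_blur[20] / spec_true[20]
print(kept_large, kept_small)
```

In this toy case the smoothed field keeps nearly all of its large‑scale energy and loses most of its small‑scale energy. That loss of high‑wavenumber energy is the signature of the blurring described above.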

What this means in plain terms

The study demonstrates that it is possible to reconstruct rich, detailed flow fields from surprisingly few measurements by first learning a compact internal representation of how flows behave, and then learning how sensors map into that representation. FLRNet’s design allows it to capture both broad structures and small‑scale swirls, handle noisy data, and generalize across very different flow conditions. For engineers and scientists, this means faster, more reliable flow estimates from limited instrumentation, with potential applications ranging from aerospace and energy systems to environmental monitoring and materials research.

Citation: Nguyen, P.C.H., Choi, J.B. & Luu, QT. Deep learning with fourier features for regressive flow field reconstruction from sparse sensor measurements. Sci Rep 16, 5980 (2026). https://doi.org/10.1038/s41598-026-36301-y

Keywords: flow reconstruction, deep learning, fluid dynamics, sparse sensors, Fourier features