Clear Sky Science

Integrative multimodal hybrid data fusion for mortality prediction

Why smarter ICU predictions matter

When someone’s kidneys suddenly fail in the intensive care unit, doctors must quickly decide who is most at risk of dying and needs the most aggressive care. Today, those decisions rely on experience and on scores built from a limited slice of patient data. This study asks a simple question with big consequences: if we let artificial intelligence look at many kinds of hospital data at once—heart signals, lab tests, and doctors’ notes—can it warn us more accurately when a patient with acute kidney injury is in real danger?

Seeing the patient from many angles

Acute kidney injury (AKI) is common and deadly, affecting roughly one in ten people over a lifetime and contributing to tens of thousands of deaths each year. Clinicians already review many information streams—vital signs, blood tests, heart rhythm strips, and long narrative notes—to judge who is getting better or worse. Most computer tools, however, only use one of these streams at a time, such as lab values or a single scoring system. That is like trying to understand a complex movie by listening to only the dialogue or watching without sound. The researchers set out to build an AI system that could, in effect, watch the whole movie by combining three major types of information collected in modern ICUs.

Turning messy hospital data into a common language

The team drew on large, publicly available hospital databases from a U.S. teaching hospital. Structured records from the MIMIC-IV dataset provided millions of entries on vital signs, lab test results, procedures, diagnoses, and demographics. Electrocardiogram (ECG) data added detailed snapshots of heart electrical activity. Text from doctors’ notes supplied rich descriptions of symptoms, treatments, and clinical impressions. Each type of data needed heavy cleaning: removing noise and outliers in lab and monitoring data, filtering and normalizing raw ECG signals, and stripping headers and identifiers from notes before feeding them into a language model similar to those used in modern chatbots. For the tabular values, the authors distilled tens of thousands of possible measurements down to 500 especially informative features, grouped into clinically familiar themes such as kidney function, liver enzymes, blood pressure, breathing status, and neurological scores.
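
To make the cleaning steps concrete, here is a minimal sketch in plain Python. It is not the authors' pipeline: the clipping bounds, the moving-average smoother, the function names, and the sample values are all assumptions chosen for illustration. It shows three ideas mentioned above: clamping implausible lab values, smoothing a noisy signal, and rescaling it to a common scale.

```python
from statistics import mean, pstdev

def clip_outliers(values, lower, upper):
    """Clamp each value into [lower, upper]; the bounds are illustrative."""
    return [min(max(v, lower), upper) for v in values]

def moving_average(signal, window=3):
    """Smooth a signal with a trailing moving average (a crude noise filter)."""
    smoothed = []
    for i in range(len(signal)):
        start = max(0, i - window + 1)
        chunk = signal[start:i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed

def zscore(signal):
    """Rescale a signal to zero mean and unit standard deviation."""
    mu = mean(signal)
    sigma = pstdev(signal)
    if sigma == 0:
        return [0.0 for _ in signal]
    return [(x - mu) / sigma for x in signal]

# Hypothetical creatinine readings (mg/dL) with one implausible entry.
creatinine = [1.1, 1.4, 2.0, 95.0, 2.3]
cleaned_labs = clip_outliers(creatinine, lower=0.1, upper=20.0)

# Hypothetical raw ECG samples in arbitrary units.
ecg = [0.0, 0.2, 1.5, 0.1, -0.3, 0.0, 0.2, 1.6]
normalized_ecg = zscore(moving_average(ecg))
```

Real ECG processing would normally use a proper band-pass filter rather than a moving average; the simpler smoother is used here only to keep the example dependency-free.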

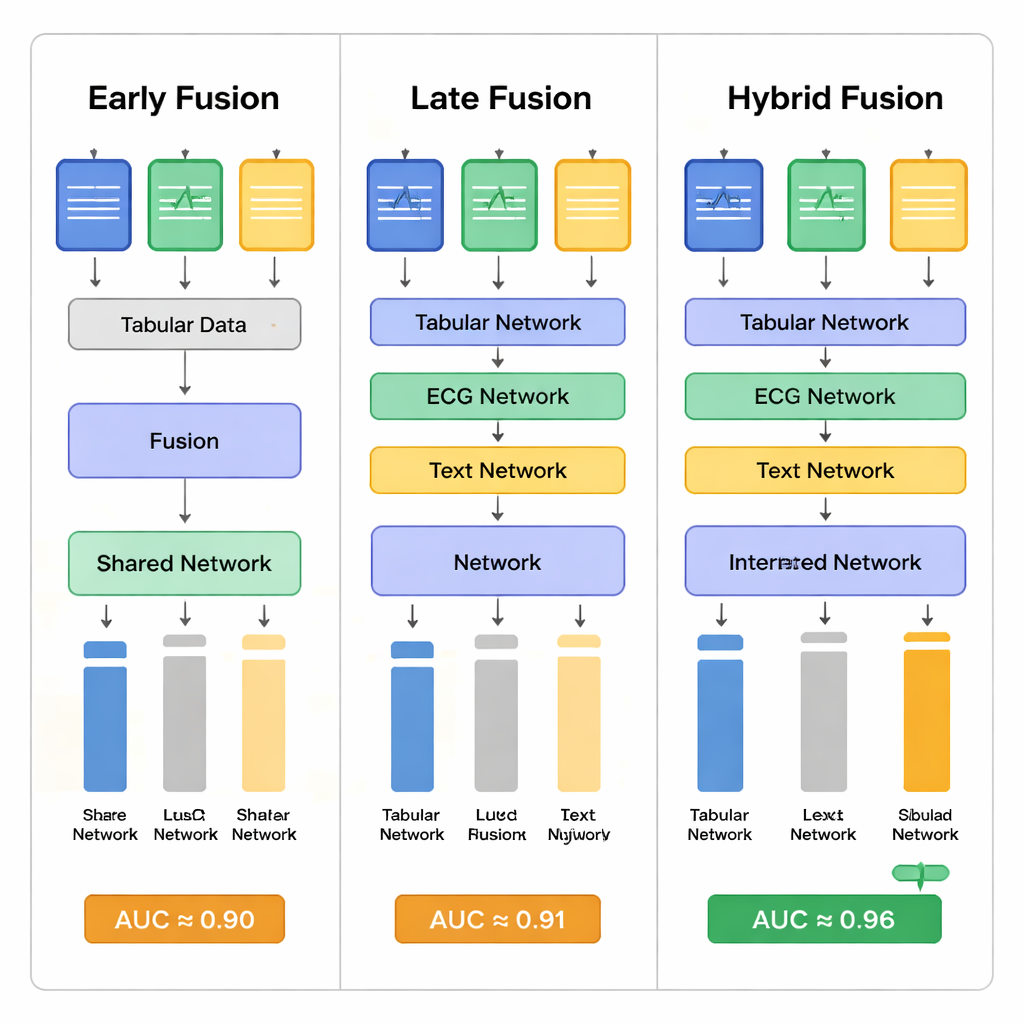

Blending multiple data streams with AI

At the heart of the work is how these very different inputs are fused. The researchers compared three strategies. In “early fusion,” they converted all inputs into numeric vectors, then combined them right away and passed them through a deep neural network inspired by image-recognition models. In “late fusion,” each data type first went through its own specialized network—one tuned to tables, one to ECG, one to text—and only then were the outputs merged. In their “hybrid” approach, the tabular and ECG pathways were merged earlier, while the textual notes were added at a later stage. Attention mechanisms—software components that learn to focus on the most informative parts of each input—helped the networks decide which signals from each modality mattered most for predicting survival.
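
The difference between the three strategies is easiest to see in a toy example. The sketch below is an assumption-laden illustration, not the study's architecture: the tiny feature vectors, the hand-picked weights, and the function names are all made up, and simple weighted sums stand in for trained neural networks. Attention is left out to keep the contrast between the fusion points clear.

```python
import math

def linear_score(features, weights):
    """Weighted sum of features; stands in for a trained network."""
    return sum(f * w for f, w in zip(features, weights))

def sigmoid(x):
    """Squash any real number into a risk-like value between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))

# Hypothetical per-patient feature vectors, already converted to numbers.
tabular = [0.8, -0.2]
ecg = [0.5, 0.1]
text = [-0.4, 0.9]

def early_fusion(tabular, ecg, text):
    """Concatenate every input first, then apply one shared model."""
    joined = tabular + ecg + text
    weights = [0.6, 0.1, 0.4, 0.2, 0.3, 0.5]  # made-up weights
    return sigmoid(linear_score(joined, weights))

def late_fusion(tabular, ecg, text):
    """Score each modality with its own model, then merge the outputs."""
    outputs = [
        sigmoid(linear_score(tabular, [0.6, 0.1])),
        sigmoid(linear_score(ecg, [0.4, 0.2])),
        sigmoid(linear_score(text, [0.3, 0.5])),
    ]
    return sum(outputs) / len(outputs)

def hybrid_fusion(tabular, ecg, text):
    """Merge tabular and ECG early; add the text branch at a later stage."""
    # Early stage: one joint hidden value for tabular + ECG.
    hidden = math.tanh(linear_score(tabular + ecg, [0.6, 0.1, 0.4, 0.2]))
    # Later stage: the text branch joins just before the final output.
    text_branch = linear_score(text, [0.3, 0.5])
    return sigmoid(1.2 * hidden + text_branch)

risks = {
    "early": early_fusion(tabular, ecg, text),
    "late": late_fusion(tabular, ecg, text),
    "hybrid": hybrid_fusion(tabular, ecg, text),
}
```

All three functions take the same inputs and return a single risk estimate. What changes is the point at which information from the different sources is allowed to interact.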

How well did the model predict death risk?

The authors first tested simpler models that used just one type of data at a time. These single-source models did reasonably well, but each missed important cases: text-based models, for example, often failed to catch patients who would later die, while ECG-based models varied widely depending on how they were trained. When all three data sources were combined, performance clearly improved. The best hybrid fusion model reached an area under the receiver operating characteristic curve (AUC) of about 0.96 and an accuracy above 93% in predicting whether AKI patients in the ICU would die during their hospital stay. That exceeds the results of most previous studies in the field, which typically reported AUC values below 0.90. Statistical tests showed that the hybrid strategy offered the most stable and balanced results, reducing both missed deaths and unnecessary alarms compared with the other fusion methods.
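
For readers unfamiliar with these metrics, AUC can be read as the chance that a randomly chosen patient who died received a higher risk score than a randomly chosen patient who survived. The sketch below computes AUC and accuracy from scratch on six invented patients; the labels, scores, and the 0.5 decision threshold are assumptions for illustration and have nothing to do with the study's data.

```python
def roc_auc(labels, scores):
    """AUC as the share of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative label")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

def accuracy(labels, scores, threshold=0.5):
    """Fraction of patients classified correctly at the given threshold."""
    predictions = [1 if s >= threshold else 0 for s in scores]
    correct = sum(1 for y, p in zip(labels, predictions) if y == p)
    return correct / len(labels)

# Hypothetical outcomes: 1 = died in hospital, 0 = survived.
labels = [1, 0, 1, 0, 0, 1]
# Hypothetical model risk scores for the same six patients.
scores = [0.9, 0.2, 0.6, 0.7, 0.1, 0.8]

auc_value = roc_auc(labels, scores)
acc_value = accuracy(labels, scores)
```

In this toy case, eight of the nine died-versus-survived pairs are ranked correctly, so the AUC is 8/9 (about 0.89), and five of six patients are classified correctly. The single error is a survivor scored at 0.7, the kind of "unnecessary alarm" the paragraph above refers to.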

Promises, caveats, and what it means for patients

To a non-specialist, the core message is straightforward: AI tools that look at many aspects of a patient’s condition at once can foresee danger more reliably than tools that focus on a single data stream. For AKI patients in intensive care, that could translate into earlier warnings, more carefully targeted treatments, and better use of limited ICU resources. Yet the authors stress that their study is based on data from just one hospital system and on complex “black box” models that are hard for clinicians to interpret. They call for future work on making such tools explain their reasoning, handling missing data when not all tests are available, and checking that the algorithms treat different patient groups fairly. Even with these caveats, the study illustrates how weaving together numbers, waveforms, and words can give computers a more human-like, holistic view of critically ill patients—and potentially help save lives.

Citation: Abuhamad, H., Zainudin, S. & Abu Bakar, A. Integrative multimodal hybrid data fusion for mortality prediction. Sci Rep 16, 5803 (2026). https://doi.org/10.1038/s41598-026-36296-6

Keywords: acute kidney injury, intensive care, multimodal machine learning, mortality prediction, clinical decision support