Uncertainty and inconsistency of COVID-19 non-pharmaceutical intervention effects with multiple competitive statistical models

Why this study matters now

The COVID-19 pandemic reshaped daily life through school closures, curfews, mask mandates, and many other rules. Governments argued that these non-pharmaceutical interventions, or NPIs, were necessary to slow the virus. But how strong was the evidence that each measure really worked, and how certain were scientists about their estimates? This study revisits Germany’s official analysis of COVID-19 policies and shows that much of the supposed precision about what helped and by how much was an illusion.

Looking again at Germany’s pandemic playbook

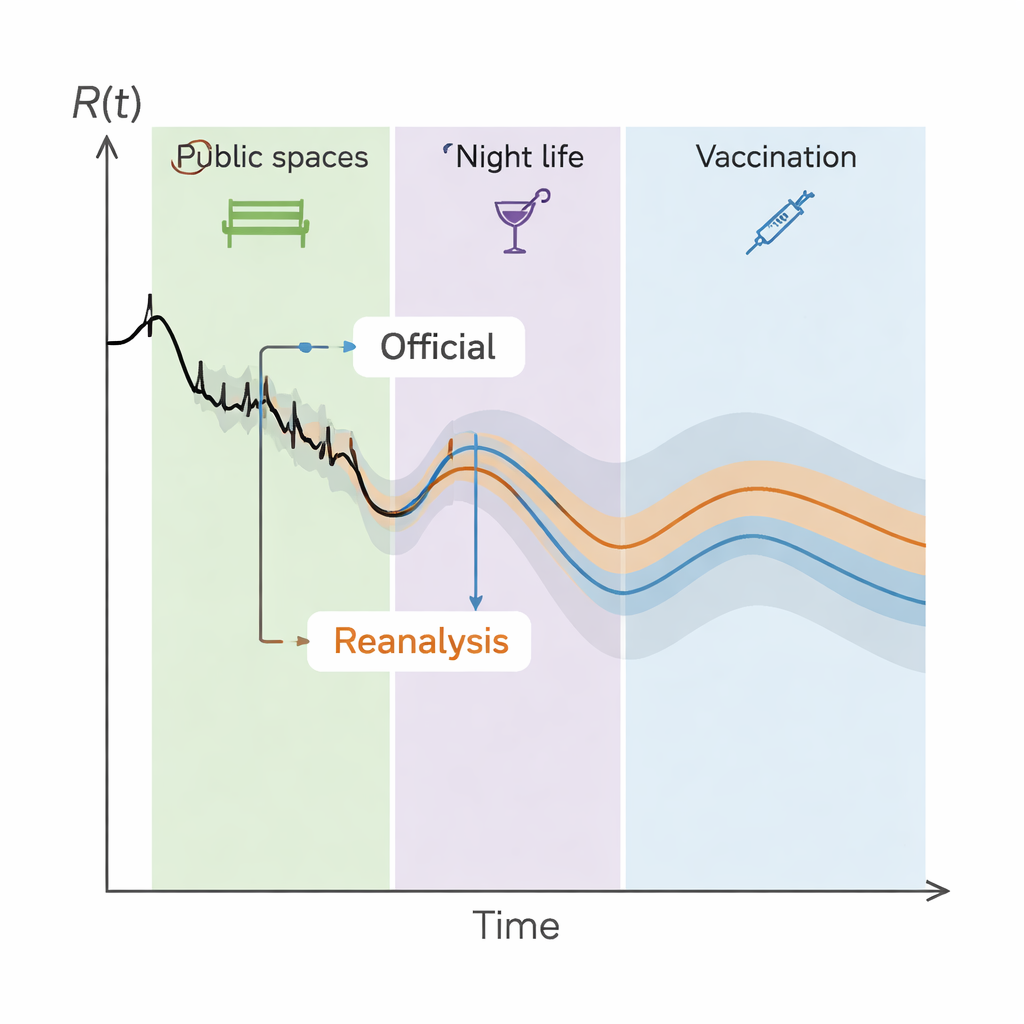

Germany’s health ministry commissioned a major analysis, called the StopptCOVID study, to estimate how different interventions affected the virus’s spread in each federal state. The original work used a statistical model that linked a time-varying reproduction number, R(t) – how many new infections each case causes on average – to more than 50 policy and context variables, including vaccination and season of the year. The model produced neat numbers for how much closing public spaces, restricting nightlife, or requiring masks reduced R(t), and these numbers were reported with seemingly tight confidence intervals, suggesting considerable certainty.

What the reanalysis set out to test

The new research team treated the German report as something that needed an independent audit. They kept the same basic input data and epidemiological assumptions but used nine different, widely accepted statistical approaches to probe how robust the original results really were. Their focus was deliberately narrow: instead of debating which biological model of the epidemic was best, they asked how much the answers changed when one took statistical uncertainties seriously, especially for time series that track many regions over long periods and include dozens of overlapping policies.

Hidden statistical pitfalls in the original study

Two problems turned out to be crucial. First, the official model assumed that the unexplained part of the data, the residuals, was independent from day to day. In fact, when plotted over time for each state, the residuals clearly moved in runs, showing strong autocorrelation. Yesterday’s errors were tied to today’s, which violates a basic regression assumption and makes error bars from standard formulas far too narrow. Second, many interventions were introduced or tightened at almost the same time across the country. This created severe multicollinearity: the activation patterns of different NPIs were so similar that the model had trouble telling them apart. Under these conditions, estimates for individual policy effects can swing widely or even flip sign if the model is tweaked, again undermining any impression of precision.
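To make the two pitfalls concrete, here is a minimal Python sketch of how each can be diagnosed. It is not the code or data of the study: the residual series, the three policy indicators, and the function names are invented for illustration, and the lag-1 autocorrelation and variance inflation factor (VIF) are standard textbook diagnostics rather than the specific methods the authors used.

```python
import numpy as np

def lag1_autocorrelation(residuals):
    """Correlation between each residual and the one from the previous day."""
    r = np.asarray(residuals, dtype=float)
    r = r - r.mean()
    return float(np.sum(r[1:] * r[:-1]) / np.sum(r * r))

def variance_inflation_factor(X, column):
    """VIF = 1 / (1 - R^2), where R^2 comes from regressing one
    explanatory variable on all the others (plus an intercept)."""
    X = np.asarray(X, dtype=float)
    y = X[:, column]
    others = np.delete(X, column, axis=1)
    design = np.column_stack([np.ones(len(y)), others])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    fitted = design @ coef
    ss_res = np.sum((y - fitted) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    r_squared = 1.0 - ss_res / ss_tot
    return float(1.0 / (1.0 - r_squared))

rng = np.random.default_rng(0)
n_days = 400  # hypothetical length of the observation period

# Pitfall 1: synthetic residuals that move in runs (AR(1), coefficient 0.8).
noise = rng.normal(size=n_days)
ar_residuals = np.zeros(n_days)
for t in range(1, n_days):
    ar_residuals[t] = 0.8 * ar_residuals[t - 1] + noise[t]

# Pitfall 2: two made-up policies switched on three days apart,
# plus a third indicator that is unrelated to both.
days = np.arange(n_days)
policy_a = (days >= 100).astype(float)
policy_b = (days >= 103).astype(float)
policy_c = rng.integers(0, 2, size=n_days).astype(float)
policies = np.column_stack([policy_a, policy_b, policy_c])

print("lag-1 autocorrelation, independent noise:", lag1_autocorrelation(noise))
print("lag-1 autocorrelation, run-like residuals:", lag1_autocorrelation(ar_residuals))
print("VIF of policy_a (nearly the same as policy_b):", variance_inflation_factor(policies, 0))
print("VIF of policy_c (unrelated):", variance_inflation_factor(policies, 2))
```

A lag-1 autocorrelation near zero is what the standard error formulas assume; a value well above zero signals run-like residuals. A VIF close to 1 means a variable carries its own information, while a large VIF (a common rule of thumb is above 10) means its effect is hard to separate from that of the others.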

What remains solid, and what does not

Across the set of competing models, the researchers found that the official confidence intervals should have been much wider. When autocorrelation and collinearity are handled more rigorously, most NPIs cannot be linked confidently to changes in R(t). That does not mean the measures had no effect; it means the available data and methods cannot reliably disentangle them. Some associations are more robust: vaccination stands out as clearly reducing transmission, and there is strong, consistent evidence that COVID-19 followed a seasonal pattern. Restrictions on public spaces, nightlife, and certain service sectors, as well as the strictest rules in child care, also emerge as candidates for real effects, but even there the exact size of the benefit is highly uncertain and may be partly confounded with early, broad measures like general physical distancing.
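A rough calculation illustrates why accounting for autocorrelation can turn an apparently clear effect into an uncertain one. The sketch below uses the standard large-sample approximation for errors that follow a first-order autoregressive, AR(1), process: a naive standard error is too small by a factor of about sqrt((1 + ρ) / (1 − ρ)), where ρ is the lag-1 autocorrelation. This is only an approximation and not the procedure used in the reanalysis; the effect estimate, standard error, and ρ are hypothetical numbers chosen for illustration, and the function names are invented.

```python
import math

def ci_widening_factor(rho):
    """Approximate factor by which a naive standard error is too small
    when errors follow an AR(1) process with lag-1 autocorrelation rho."""
    if not -1.0 < rho < 1.0:
        raise ValueError("rho must lie strictly between -1 and 1")
    return math.sqrt((1.0 + rho) / (1.0 - rho))

def widened_interval(estimate, naive_se, rho, z=1.96):
    """Approximate 95% interval after inflating the naive standard error."""
    adjusted_se = naive_se * ci_widening_factor(rho)
    return estimate - z * adjusted_se, estimate + z * adjusted_se

# Hypothetical example: a policy appears to lower R(t) by 0.10,
# with a naive standard error of 0.02 (all numbers are made up).
estimate, naive_se = -0.10, 0.02

naive_low, naive_high = widened_interval(estimate, naive_se, rho=0.0)
adj_low, adj_high = widened_interval(estimate, naive_se, rho=0.8)

print("naive interval:   ", naive_low, naive_high)
print("adjusted interval:", adj_low, adj_high)
```

With ρ = 0 the interval is unchanged and excludes zero. With ρ = 0.8 the standard error triples, and the interval now includes zero, so the same estimate can no longer be distinguished from no effect.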

Lessons for future pandemic decisions

For a non-specialist, the take-home message is that tidy tables ranking policies by effectiveness can be misleading when based on complex, noisy data. The authors argue that Germany’s approach – and much of the global time-series literature on COVID-19 policies – underestimated uncertainty and therefore overstated how precisely we can judge individual interventions. They call for future pandemic plans to build evaluation into the design of measures: allowing adequate observation periods, collecting better-quality data, using modern time-series methods, and subjecting influential models to independent verification. Without such care, governments risk making or defending sweeping policies on a fragile statistical foundation, and the public may be given more confidence in those numbers than they deserve.

Citation: Müller, B., Padberg, I., Lorke, M. et al. Uncertainty and inconsistency of COVID-19 non-pharmaceutical intervention effects with multiple competitive statistical models. Sci Rep 16, 5767 (2026). https://doi.org/10.1038/s41598-026-36265-z

Keywords: COVID-19 interventions, pandemic policy evaluation, statistical uncertainty, Germany, non-pharmaceutical measures