Clear Sky Science

A hybrid learning framework integrating chaotic Niche alpha evolution for student academic performance prediction

Why predicting grades early matters

Schools increasingly sit on a gold mine of information about their students—from attendance records and homework scores to survey responses about home life and study habits. This paper explores how to turn that raw data into early warnings about who might struggle or excel in a course. The authors present a new computer framework that more accurately predicts final grades for secondary school students, opening the door to earlier, more tailored support instead of last‑minute rescue efforts.

From report cards to rich data trails

Modern classrooms generate far more than a couple of exam scores. The dataset used in this study includes 480 students and 32 different pieces of information for each one: age, family background, commute time, access to the internet, time spent studying, absences, and three separate course grades over the school year. Together, these details trace a learning journey—how effort, circumstances, and prior results build up to a final mark. Yet this richness also makes prediction harder: the data are noisy, uneven, and highly varied from one student to another.

A smarter way to read learning over time

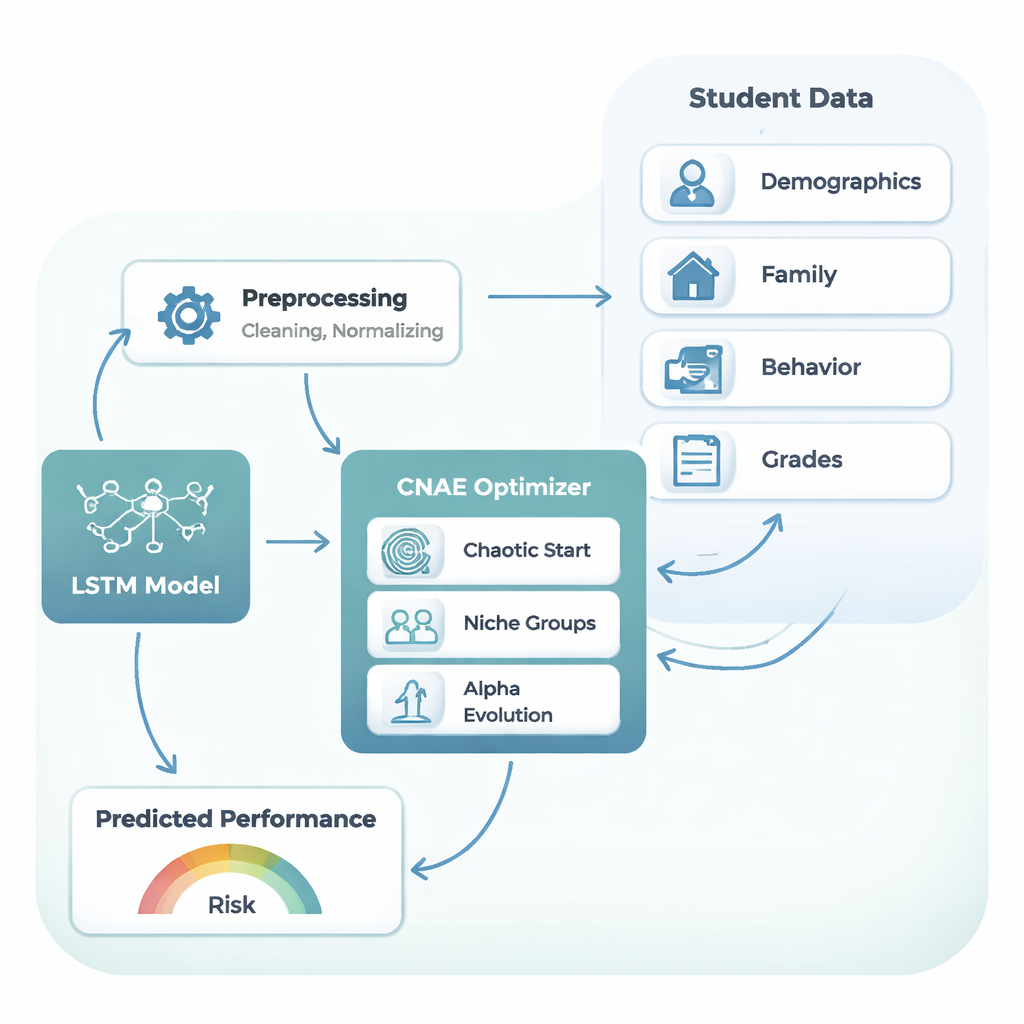

To follow these learning journeys, the authors rely on a type of neural network called Long Short-Term Memory, or LSTM. Instead of treating each piece of information as a disconnected fact, an LSTM is designed to remember useful signals from earlier in a sequence—much like a teacher who recalls a student’s steady improvement or gradual disengagement rather than looking only at the last quiz. In this study, the LSTM takes in the blend of background factors, behavior, and earlier grades and outputs a prediction of the final exam score on a 0–20 scale. However, LSTMs are fussy: their performance depends heavily on design choices such as how many layers they have, how many units per layer, how fast they learn, how much regularization they use, and how many student records they see at a time during training.
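One way to picture the design choices listed above is as a point in a five-dimensional search space. The sketch below is only an illustration: the numeric ranges, the name `LSTMConfig`, the `decode` helper, and the choice of dropout as the regularization setting are all assumptions, since this summary names the five settings but not their bounds.

```python
from dataclasses import dataclass

# Hypothetical bounds (assumptions): the summary names these five settings
# but does not give the ranges that were actually searched.
BOUNDS = {
    "num_layers": (1, 3),
    "hidden_units": (16, 256),
    "learning_rate": (1e-4, 1e-2),
    "dropout": (0.0, 0.5),  # regularization, assumed here to be a dropout rate
    "batch_size": (8, 64),
}


@dataclass(frozen=True)
class LSTMConfig:
    num_layers: int
    hidden_units: int
    learning_rate: float
    dropout: float
    batch_size: int


def decode(position):
    """Map a point in the unit hypercube [0, 1]^5 to a concrete configuration."""
    if len(position) != len(BOUNDS):
        raise ValueError(f"expected {len(BOUNDS)} coordinates, got {len(position)}")
    values = {}
    for x, (name, (low, high)) in zip(position, BOUNDS.items()):
        x = min(1.0, max(0.0, x))  # clamp so stray values stay inside the bounds
        if name == "learning_rate":
            # Learning rates are usually searched on a logarithmic scale.
            values[name] = low * (high / low) ** x
        else:
            values[name] = low + x * (high - low)
    return LSTMConfig(
        num_layers=round(values["num_layers"]),
        hidden_units=round(values["hidden_units"]),
        learning_rate=values["learning_rate"],
        dropout=values["dropout"],
        batch_size=round(values["batch_size"]),
    )
```

Encoding every candidate as a vector of numbers between 0 and 1 lets a search procedure treat all five settings uniformly, whether they are whole numbers (layers, units, batch size) or continuous values (learning rate, regularization).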

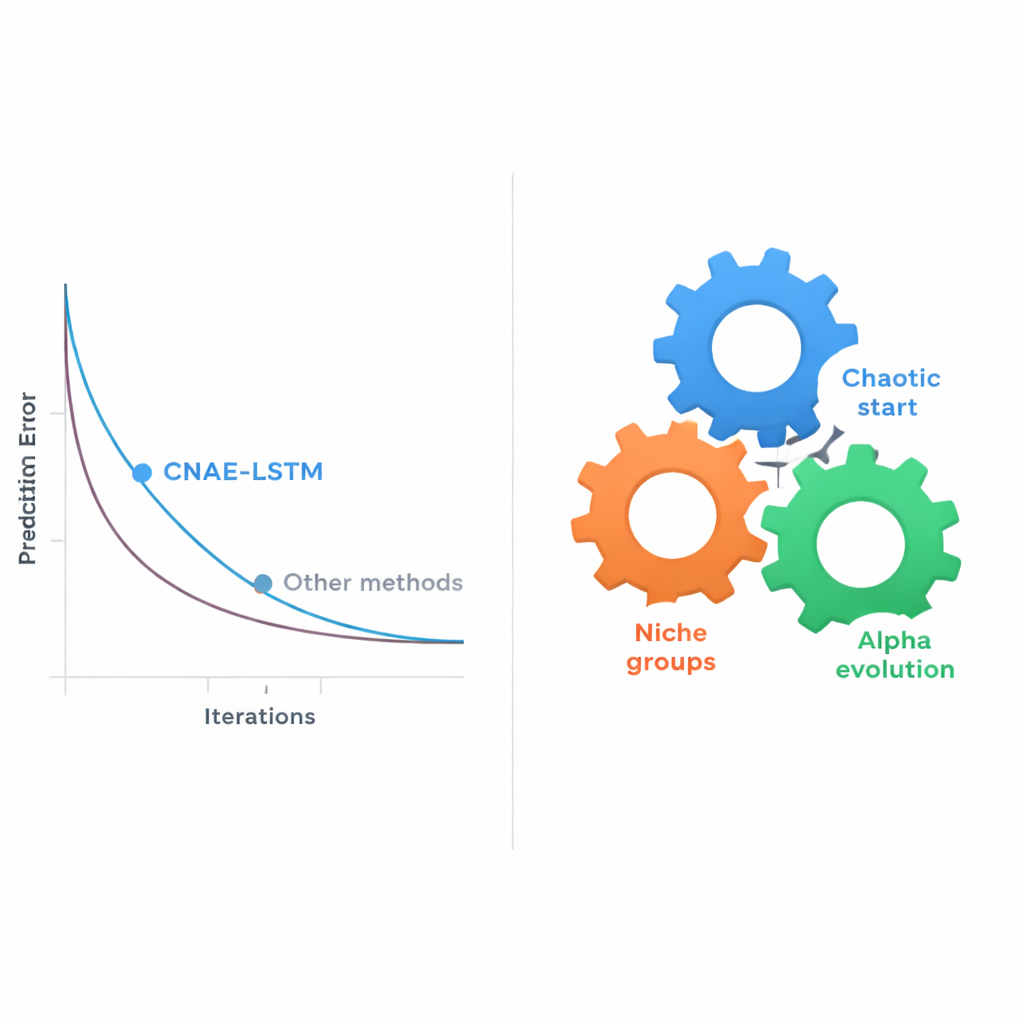

Letting evolution search for the best model

Choosing those design settings by hand, or even by a simple trial-and-error grid, quickly becomes impractical because the number of combinations explodes. The heart of this paper is a new automatic search strategy called Chaotic Niche Alpha Evolution (CNAE), which the authors pair with the LSTM to form the CNAE‑LSTM framework. CNAE starts by generating a wide variety of candidate LSTM designs using a mathematical process inspired by chaos, so that the initial options are spread widely across the search space. It then groups similar candidates into “niches,” keeping only the strongest candidate from each niche and mutating it slightly to probe nearby possibilities. Finally, an “alpha evolution” step nudges the search toward the most promising regions while gradually shifting from broad exploration to fine‑tuning. Each candidate LSTM is judged by how well it predicts grades on a held‑out validation set, and the best designs survive to shape the next generation.
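The three stages described above can be sketched in a few dozen lines. This is a rough illustration under stated assumptions, not the authors' algorithm: the logistic map is one common chaotic generator and may not be the one used in the paper, the greedy distance-based niching is a simplified stand-in, and the final "pull toward the best candidate with a shrinking step" is a generic substitute for the paper's alpha evolution operator, whose exact form this summary does not give. The fitness function is a toy stand-in for training an LSTM and measuring its validation error, and every function name here is hypothetical.

```python
import math
import random


def logistic_map_population(pop_size, dim, seed=0.37, r=4.0):
    """Fill a population of points in [0, 1]^dim by iterating the logistic map."""
    x = seed
    population = []
    for _ in range(pop_size):
        point = []
        for _ in range(dim):
            x = r * x * (1.0 - x)  # chaotic for r = 4, stays inside [0, 1]
            point.append(x)
        population.append(point)
    return population


def niche_select(population, fitness, radius):
    """Visit candidates best-first; keep one only if no kept candidate is within radius."""
    ranked = sorted(population, key=fitness)  # lower fitness is better
    survivors = []
    for candidate in ranked:
        if all(math.dist(candidate, kept) > radius for kept in survivors):
            survivors.append(candidate)
    return survivors


def mutate(point, scale, rng):
    """Add small Gaussian noise to each coordinate and clamp to [0, 1]."""
    return [min(1.0, max(0.0, x + rng.gauss(0.0, scale))) for x in point]


def search(fitness, dim=5, pop_size=20, generations=30, radius=0.2, rng_seed=0):
    """Chaotic start, niche selection, then a shrinking pull toward the best point."""
    rng = random.Random(rng_seed)
    population = logistic_map_population(pop_size, dim)
    best = min(population, key=fitness)
    for generation in range(generations):
        explore = 1.0 - generation / generations  # decays from 1 toward 0
        survivors = niche_select(population, fitness, radius)
        population = list(survivors)
        while len(population) < pop_size:
            parent = rng.choice(survivors)
            pull = 0.5 * (1.0 - explore)  # stronger attraction late in the run
            child = [p + pull * (b - p) for p, b in zip(parent, best)]
            population.append(mutate(child, 0.3 * explore + 0.01, rng))
        best = min(population + [best], key=fitness)
    return best


def toy_validation_error(point):
    """Toy stand-in for 'train an LSTM with these settings and score it'."""
    target = [0.3] * len(point)
    return math.dist(point, target)


best_point = search(toy_validation_error)
```

In a real run, each call to the fitness function would train a network, so the number of evaluations (here `pop_size` times `generations`, at most) dominates the cost. Keeping one survivor per niche is what stops the population from collapsing onto a single design too early.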

What the experiments show

The researchers tested their approach on the real secondary‑school dataset, comparing CNAE‑LSTM with a range of alternatives: a support vector machine (a classic machine‑learning method), two deep‑learning models (a convolutional network and a Transformer), a standard hand‑tuned LSTM, and several LSTMs whose settings were chosen by well‑known evolutionary search methods or by grid and random search. Performance was measured by how close the predicted grades came to the true ones and by how much of the variation in scores the model could explain. CNAE‑LSTM came out on top across all measures: it had the lowest average prediction error and the highest ability to account for differences among students, cutting prediction error by more than 10 percent relative to the strongest existing evolutionary baseline. Repeating the experiments 30 times showed that CNAE‑LSTM was not only more accurate but also more stable: its results varied less from run to run.
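The two kinds of measure described above are commonly computed as an average error (such as mean absolute error or root mean squared error) and the coefficient of determination, R². This summary does not name the exact metrics, so the sketch below assumes these standard ones. The grade values in the usage note are invented for illustration and are not results from the study.

```python
import math
import statistics


def mae(y_true, y_pred):
    """Mean absolute error: average size of the miss, in grade points."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean squared error: like MAE, but penalizes large misses more."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r2(y_true, y_pred):
    """Share of the variation in true grades that the predictions explain."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def relative_improvement(baseline_error, new_error):
    """Fractional error reduction; 0.10 means a 10 percent lower error."""
    return (baseline_error - new_error) / baseline_error


def run_stability(errors_per_run):
    """Mean and sample standard deviation of an error metric over repeated runs."""
    return statistics.mean(errors_per_run), statistics.stdev(errors_per_run)
```

For example, with invented true grades `[10, 12, 14]` and predictions `[11, 12, 13]` on the 0 to 20 scale, `r2` gives 0.75: the predictions account for three quarters of the spread in the true grades. A smaller standard deviation from `run_stability` across repeated runs is what "more stable" means in practice.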

Why this matters for students and schools

For a lay reader, the bottom line is straightforward: by letting an evolutionary search procedure design the predictive model, schools can obtain more reliable forecasts of how students will finish a course long before the final exam. The CNAE‑LSTM framework turns messy, real‑world educational data into a clearer picture of who is on track and who may need extra help, while using computing resources efficiently enough to be practical. Although the current study focuses on a single secondary‑school dataset, the same approach could be adapted to other subjects and school levels. If paired with thoughtful, humane interventions, such forecasting tools could help educators move from reacting to failure to preventing it.

Citation: Chen, H., Zhou, Y. & Cao, Q. A hybrid learning framework integrating chaotic Niche alpha evolution for student academic performance prediction. Sci Rep 16, 5302 (2026). https://doi.org/10.1038/s41598-026-36263-1

Keywords: student performance prediction, educational data mining, LSTM, evolutionary optimization, early warning systems