Clear Sky Science

Mutual information-based hierarchical NBV decision for active semantic visual SLAM under dynamic environments

Robots That Can Think Ahead

As robots move out of factories and into homes, hospitals, and offices, they must navigate spaces filled with people and other moving objects. This paper presents a new way for a robot to "think ahead" about where to look and how to move so that it can build a reliable map of its surroundings, even when those surroundings refuse to sit still. The work matters for anyone interested in safer service robots, smarter delivery bots, or future home assistants that must share space with humans rather than roam empty corridors.

Why Moving People Confuse Robots

To get around on their own, many robots use a technique called visual SLAM, in which a camera helps them build a map and estimate their position at the same time. This works well in still environments but quickly breaks down when people are walking by, blocking the view, or carrying objects. One common fix is to use "semantic" vision so the robot can recognize people, cars, and chairs and simply ignore them when building the map. However, this creates a new problem for active robots that choose their own paths: if they throw away too many visual clues, they may lose track of where they are altogether. The camera’s limited field of view makes this even harder, because a single person passing close by can hide most of the useful scenery from the robot’s eyes.
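The trade-off described above can be illustrated with a minimal sketch. It assumes landmarks are given as pixel coordinates and detected movers as axis-aligned boxes; the function names and the `min_features` threshold are hypothetical and are not taken from the paper.

```python
def filter_static_keypoints(keypoints, dynamic_boxes):
    """Drop every keypoint that falls inside a box around a potentially moving object.

    keypoints     : list of (x, y) pixel positions
    dynamic_boxes : list of (x0, y0, x1, y1) boxes from an object detector
    """
    return [
        (x, y)
        for x, y in keypoints
        if not any(x0 <= x <= x1 and y0 <= y <= y1
                   for x0, y0, x1, y1 in dynamic_boxes)
    ]


def tracking_at_risk(kept, min_features=30):
    """Flag frames where too few landmarks survive the filtering.

    min_features is an illustrative threshold, not a value from the paper.
    """
    return len(kept) < min_features
```

When a person walks close to the camera, their box covers most of the image, few keypoints survive, and a check like `tracking_at_risk` would fire. That is the failure mode an active robot has to steer away from.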

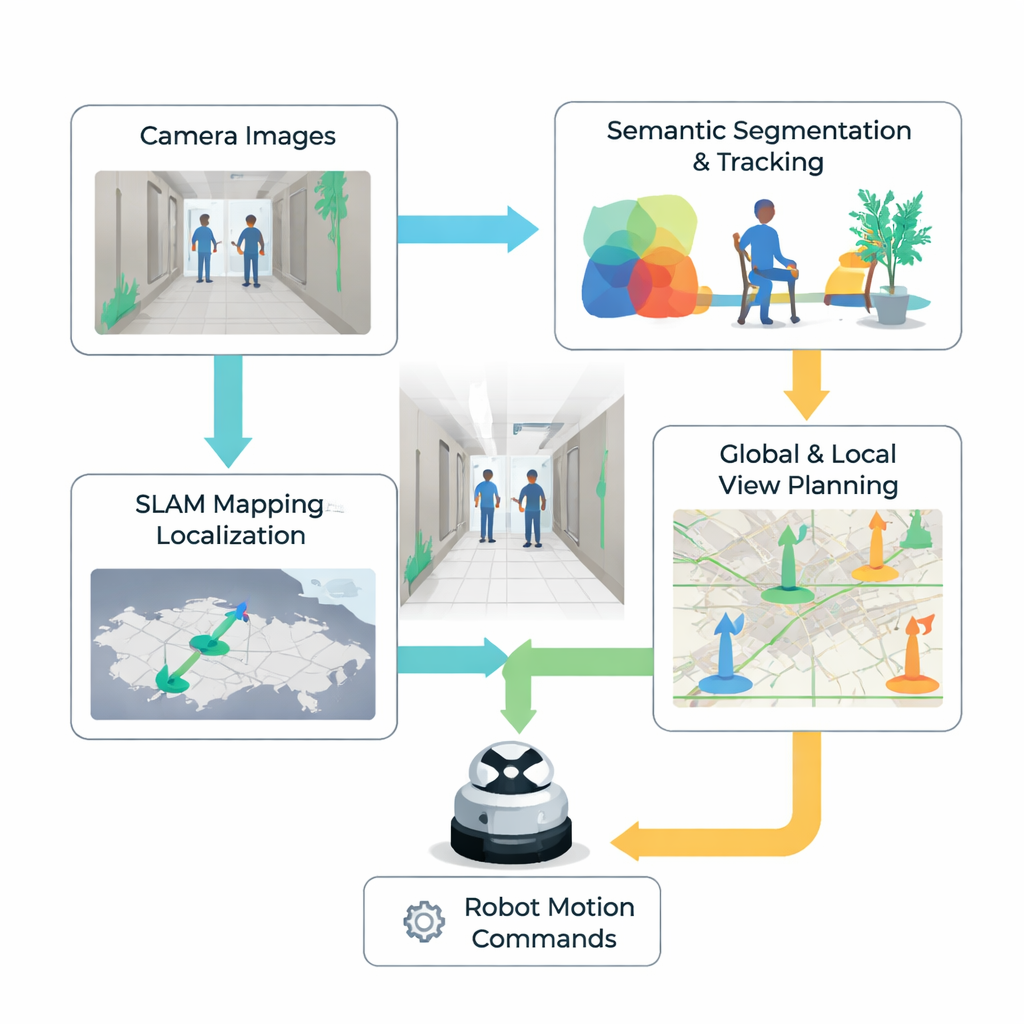

A Two-Level Strategy for Choosing Where to Look Next

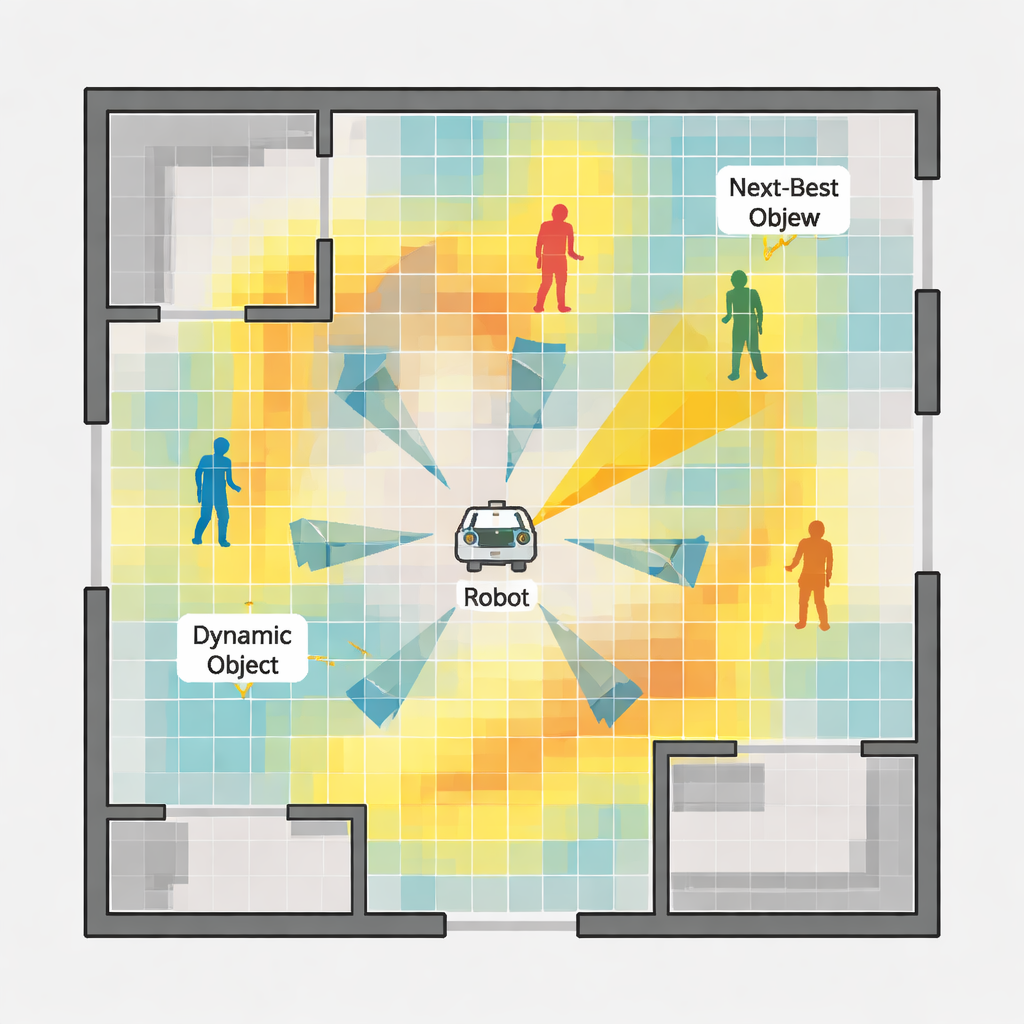

The authors propose a hierarchical decision system that helps a robot decide its next viewpoints in a more informed way. At the higher level, the robot maintains a bird’s-eye grid map of free, occupied, and unknown areas. It evaluates possible distant viewpoints by estimating how much each one would reduce uncertainty in this map, a concept borrowed from information theory. The robot prefers spots that reveal large unexplored regions while also considering how far it must travel and how much it needs to turn its camera. Once a promising area is chosen, a lower-level process takes over to fine-tune exactly how the robot should move and face within that neighborhood so that it can actually see enough useful detail with its narrow camera view.
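As a rough illustration of the higher-level decision, the sketch below scores candidate viewpoints on a grid map. It is a simplification under stated assumptions: the information gain is approximated by the total entropy of cells within a circular sensor range (no ray casting, no field-of-view limit), and the weights `w_dist` and `w_turn` are hypothetical. The paper's actual mutual-information formulation may differ.

```python
import numpy as np


def cell_entropy(p):
    """Binary entropy in bits of occupancy probabilities; 0.5 means unknown."""
    p = np.clip(p, 1e-6, 1 - 1e-6)
    return -(p * np.log2(p) + (1 - p) * np.log2(1 - p))


def score_viewpoint(grid, robot, candidate, sensor_range=3.0,
                    w_dist=0.5, w_turn=0.2):
    """Hypothetical utility: map uncertainty in range minus motion costs.

    grid      : 2D array of occupancy probabilities (row = y, column = x)
    robot     : (x, y, heading in radians), in grid-cell units
    candidate : (x, y, heading in radians), in grid-cell units
    """
    ys, xs = np.indices(grid.shape)
    cx, cy, ch = candidate
    in_range = (xs - cx) ** 2 + (ys - cy) ** 2 <= sensor_range ** 2
    # Optimistic proxy for information gain: assumes every cell in range
    # would be fully resolved by an observation from the candidate.
    gain = cell_entropy(grid)[in_range].sum()

    rx, ry, rh = robot
    dist = np.hypot(cx - rx, cy - ry)
    # Wrap the heading difference into [-pi, pi) before taking its magnitude.
    turn = abs((ch - rh + np.pi) % (2 * np.pi) - np.pi)
    return gain - w_dist * dist - w_turn * turn


def best_viewpoint(grid, robot, candidates, **kwargs):
    """Return the highest-scoring candidate and the list of all scores."""
    scores = [score_viewpoint(grid, robot, c, **kwargs) for c in candidates]
    return candidates[int(np.argmax(scores))], scores
```

With a map whose left half is known to be free and whose right half is unknown, a candidate deep in the unknown half outscores a nearby candidate in the explored half as long as the travel penalty stays modest. This mirrors the preference for viewpoints that reveal large unexplored regions.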

Seeing What Is Stable and Dodging What Is Not

At the heart of the local decision process is a "feature probability map" built from each camera image. First, the system detects visual landmarks—corners and patterns in the scene—that are likely to remain stable over time and are helpful for tracking motion. It then uses a modern object detector to find potentially moving objects, such as people, and tracks them across frames. By analyzing how these objects move, the system estimates not just where they are now, but where they are likely to be in the near future. These two sources of information are fused into a heatmap over the image: bright regions indicate a high chance of seeing reliable landmarks, while darker regions mark places that are either feature-poor or likely to be covered by moving objects. The robot uses this map to judge which small motion—turning left, right, or moving forward—will give it the clearest and most stable view next.
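The fusion step can be sketched roughly as follows. This is a toy version under several assumptions: the heatmap is a coarse grid of landmark counts, movers are predicted with a constant-velocity model, cells under a current or predicted box are zeroed out, and the three actions are scored by the left, middle, and right thirds of the image. All names, the `horizon`, and the `cell` size are hypothetical and not the paper's implementation.

```python
import numpy as np


def feature_probability_map(shape, keypoints, tracks, horizon=1.0, cell=20):
    """Coarse heatmap: landmark density, suppressed where movers are or will be.

    shape     : (height, width) of the image in pixels
    keypoints : iterable of (x, y) landmark positions in pixels
    tracks    : iterable of (x0, y0, x1, y1, vx, vy), a box plus its
                velocity in pixels per second
    """
    h, w = shape
    rows, cols = h // cell, w // cell
    density = np.zeros((rows, cols))
    for x, y in keypoints:
        r, c = int(y // cell), int(x // cell)
        if 0 <= r < rows and 0 <= c < cols:
            density[r, c] += 1.0
    if density.max() > 0:
        density /= density.max()  # normalize to [0, 1]

    blocked = np.zeros((rows, cols), dtype=bool)
    for x0, y0, x1, y1, vx, vy in tracks:
        # Mark both the current box and its constant-velocity prediction.
        for dt in (0.0, horizon):
            c0 = max(int((x0 + vx * dt) // cell), 0)
            c1 = min(int((x1 + vx * dt) // cell), cols - 1)
            r0 = max(int((y0 + vy * dt) // cell), 0)
            r1 = min(int((y1 + vy * dt) // cell), rows - 1)
            if c0 <= c1 and r0 <= r1:
                blocked[r0:r1 + 1, c0:c1 + 1] = True
    return np.where(blocked, 0.0, density)


def choose_action(fmap):
    """Pick the motion whose image third holds the most usable landmarks."""
    names = ("turn_left", "forward", "turn_right")
    thirds = np.array_split(fmap, 3, axis=1)
    scores = {n: float(part.sum()) for n, part in zip(names, thirds)}
    return max(scores, key=scores.get), scores
```

If a person currently on the right of the image is predicted to walk across the center, both regions are suppressed and the sketch favors turning left toward the landmarks that remain visible.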

Testing in Virtual Worlds and the Real One

The researchers tested their approach in two simulated indoor spaces of different sizes and complexity, each populated with wandering virtual pedestrians, and then on a physical robot driving through a real indoor environment. They compared their method with several established exploration strategies that aim mainly to cover space or shorten travel distance. In the simulations, the new system produced maps with less distortion and achieved better positioning accuracy while exploring in roughly the same or less time. It was also less likely to lose track of its position or to come uncomfortably close to moving people. In the real-world experiment, the method ran in real time on an off-the-shelf robot computer, confirming that it is practical for deployment outside the lab.

What This Means for Everyday Robots

In plain terms, this work teaches a robot to be choosy about where it looks and where it goes when people are around. By combining scene understanding, motion prediction, and a measure of information gain, the robot can steer itself toward views that are both informative and safe, rather than simply marching toward the nearest unexplored corner. This makes its internal map more reliable and its movements more predictable, which are key ingredients for robots that must share crowded spaces with humans. Some challenges remain—such as sudden large crowds blocking the camera—but the approach marks a step toward home and service robots that can gracefully handle the messy, dynamic nature of real life.

Citation: Yang, Z., Sang, A.W.Y., Muthugala, M.A.V.J. et al. Mutual information-based hierarchical NBV decision for active semantic visual SLAM under dynamic environments. Sci Rep 16, 5847 (2026). https://doi.org/10.1038/s41598-026-36259-x

Keywords: active SLAM, robot navigation, dynamic environments, semantic mapping, next-best view