Clear Sky Science

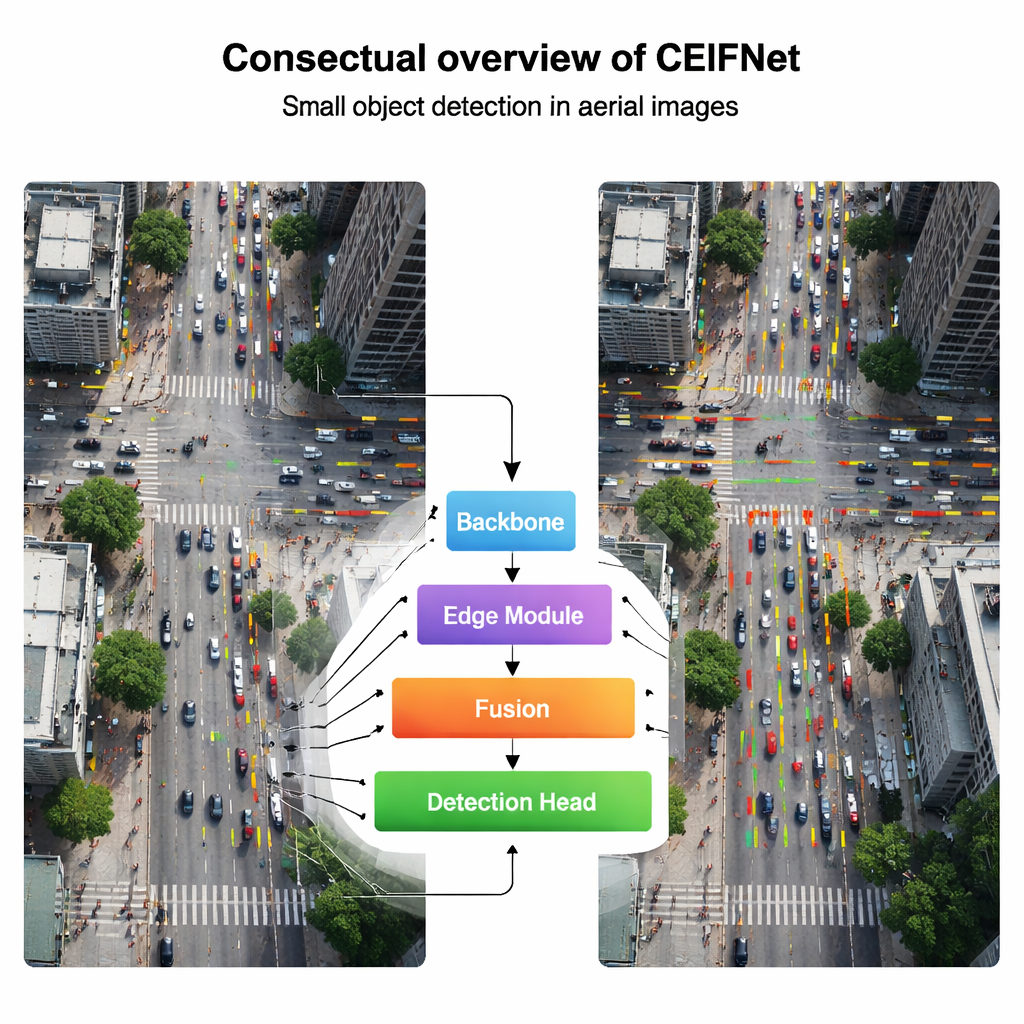

Cross-stage edge information fusion network for small object detection in aerial images

Why spotting tiny details from the sky matters

From traffic monitoring and disaster response to crop care, more and more of our world is watched from above by drones. Yet many of the things we care about most in these aerial images (people, cars, or animals) appear just a few pixels wide. This paper introduces a new computer vision system, CEIFNet, designed specifically to find these tiny objects more accurately and quickly, even when they are surrounded by cluttered city streets, fields, or nighttime noise.

Seeing small things in a big picture

Standard object-detection systems were built mainly for ground-level photos, where a car or a person usually fills a noticeable part of the frame. In drone images, however, the camera may be hundreds of meters up, so each target is minuscule and easily blurred or lost when the image is shrunk inside a neural network. The authors explain that popular single-stage detectors such as the YOLO family work well for everyday scenes but struggle when objects are both tiny and highly varied in size. Repeated downsampling, which helps the network take in the whole scene, tends to erase the faint signals from these small targets.
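To make the downsampling problem concrete, here is a small arithmetic sketch. The 12-pixel object width and the strides 4, 8, 16, and 32 are illustrative assumptions (values commonly seen in detectors of this kind), not figures taken from the paper.

```python
def footprint(object_px: float, stride: int) -> float:
    """Width of an object, in feature-map cells, after downsampling by `stride`."""
    return object_px / stride

# Hypothetical numbers: a 12-pixel-wide car and assumed detector strides.
STRIDES = (4, 8, 16, 32)
widths = {s: footprint(12, s) for s in STRIDES}

for s, w in widths.items():
    print(f"stride {s:>2}: {w:.2f} cells wide")
```

Once the footprint drops below one cell, the object shares its cell with background, which is one way to picture how its signal gets washed out.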

Blending close-up vision with big-picture context

To tackle this, CEIFNet combines two complementary ways of seeing. One path uses classic convolutional filters, which are good at capturing crisp local patterns like corners and textures. The other path uses a Transformer-style attention mechanism, which excels at relating distant parts of the image and understanding the scene as a whole. Inside the core building block, called a cross-stage transformer block, incoming image features are split: most channels go through a lightweight convolutional path, while a smaller portion passes through an attention path that reasons about long-range relationships. These are then recombined, giving the network both fine details and global awareness without exploding its computational cost.
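The split, process, and recombine idea can be sketched in a few lines of plain Python. This is only an illustration under strong assumptions: features are flattened to one spatial dimension, a fixed 3-tap filter stands in for learned convolutions, the attention has no learned projections, and the 25% share of channels sent to the attention path (`attn_ratio`) is a made-up value. None of these names or settings come from the paper.

```python
import math

def local_conv(channel, kernel=(0.25, 0.5, 0.25)):
    """3-tap filter with replicated borders: a stand-in for a learned convolution."""
    n = len(channel)
    out = []
    for i in range(n):
        left = channel[max(i - 1, 0)]
        right = channel[min(i + 1, n - 1)]
        out.append(kernel[0] * left + kernel[1] * channel[i] + kernel[2] * right)
    return out

def self_attention(channels):
    """Unparameterised self-attention over positions (Q = K = V = input tokens)."""
    d, n = len(channels), len(channels[0])
    tokens = [[channels[c][i] for c in range(d)] for i in range(n)]  # n tokens of size d
    out = [[0.0] * n for _ in range(d)]
    for i in range(n):
        scores = [sum(a * b for a, b in zip(tokens[i], tokens[j])) / math.sqrt(d)
                  for j in range(n)]
        top = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        for c in range(d):
            out[c][i] = sum(w * tokens[j][c] for j, w in enumerate(weights)) / total
    return out

def cross_stage_block(features, attn_ratio=0.25):
    """Split channels, run each group down its own path, then concatenate."""
    n_attn = max(1, int(len(features) * attn_ratio))
    conv_part, attn_part = features[:-n_attn], features[-n_attn:]
    conv_out = [local_conv(ch) for ch in conv_part]   # local detail, most channels
    attn_out = self_attention(attn_part)              # long-range context, few channels
    return conv_out + attn_out

# 8 channels, 6 positions of toy data
features = [[float((c + 1) * (i % 3)) for i in range(6)] for c in range(8)]
fused = cross_stage_block(features)
```

Because attention cost grows with the square of the number of positions, routing only a minority of channels through it is one plausible way to keep the block cheap while still mixing in global context.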

Using edges as a map for tiny targets

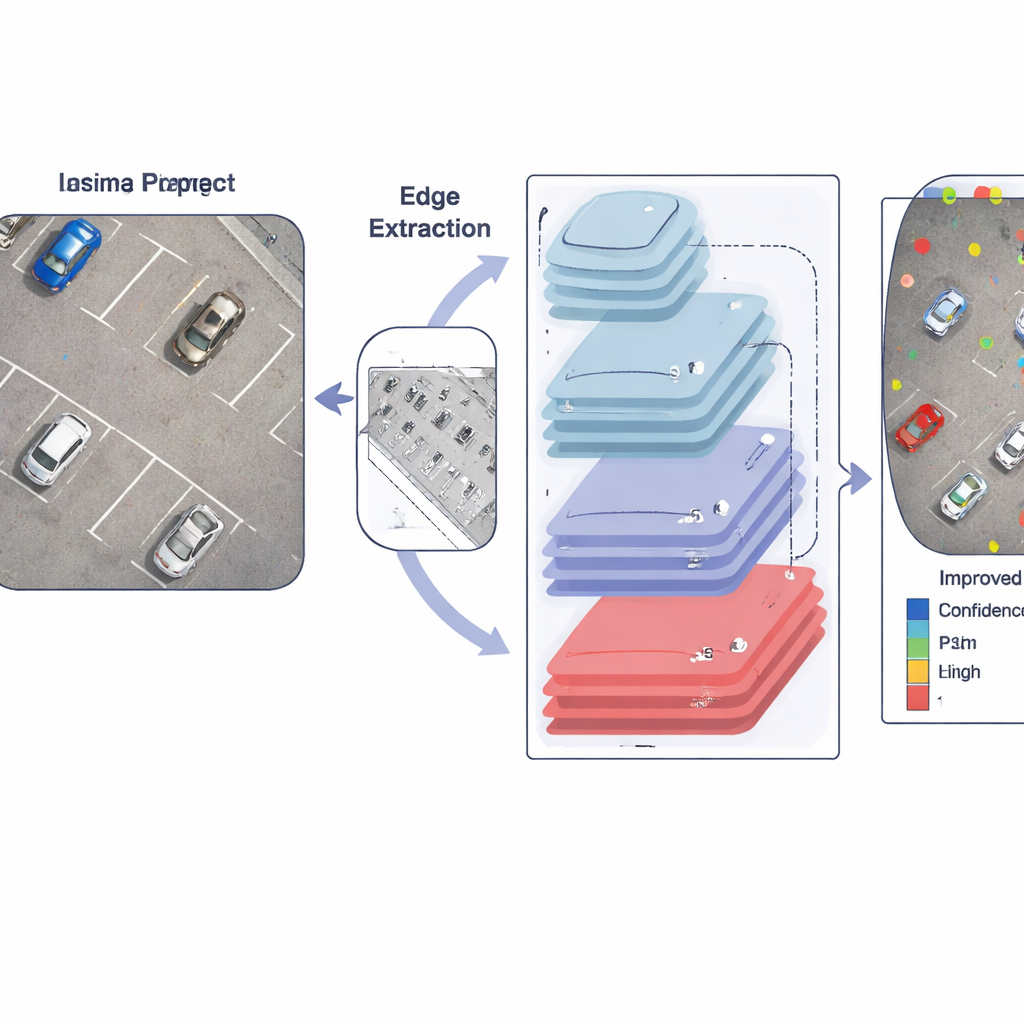

A key insight of the paper is that object boundaries—edges—are especially valuable when targets are only a few pixels across. Instead of relying solely on learned filters, the authors deliberately inject edge information into the network. A dedicated module first applies a Sobel operator, a simple yet robust edge detector, to highlight where brightness changes sharply, such as around the outlines of cars or people. These edge maps are then pooled into several sizes to match different feature scales and fused through a cross-channel module. As the image flows deeper into the network, these sharpened edge cues are repeatedly fed into later layers, helping the model keep track of where small objects begin and end despite the usual blurring and shrinking.
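As a rough sketch of the first two steps described above, the following plain-Python code computes a Sobel gradient magnitude and then pools it to several sizes. The border handling (replicated pixels), the use of max pooling, and the pooling factors 1, 2, and 4 are assumptions chosen for illustration; the cross-channel fusion step is not shown.

```python
def sobel_magnitude(img):
    """Gradient magnitude from the 3x3 Sobel kernels, with replicated borders."""
    h, w = len(img), len(img[0])

    def px(y, x):
        # Clamp coordinates so border pixels reuse their nearest neighbour.
        return img[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]

    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            gx = (px(y - 1, x + 1) + 2 * px(y, x + 1) + px(y + 1, x + 1)
                  - px(y - 1, x - 1) - 2 * px(y, x - 1) - px(y + 1, x - 1))
            gy = (px(y + 1, x - 1) + 2 * px(y + 1, x) + px(y + 1, x + 1)
                  - px(y - 1, x - 1) - 2 * px(y - 1, x) - px(y - 1, x + 1))
            out[y][x] = (gx * gx + gy * gy) ** 0.5
    return out

def max_pool(m, k):
    """Non-overlapping k x k max pooling (any ragged remainder is dropped)."""
    h, w = len(m) // k, len(m[0]) // k
    return [[max(m[y * k + dy][x * k + dx] for dy in range(k) for dx in range(k))
             for x in range(w)] for y in range(h)]

# Toy input: an 8x8 dark image containing a bright 2x2 "object".
img = [[0.0] * 8 for _ in range(8)]
for y in (3, 4):
    for x in (3, 4):
        img[y][x] = 1.0

edges = sobel_magnitude(img)
# One edge map per assumed feature scale: full, half, and quarter resolution.
pyramid = {k: max_pool(edges, k) for k in (1, 2, 4)}
```

Max pooling is used here because it keeps the strongest edge response in each window, so the outline of a tiny object still registers at the coarser scales; average pooling would be an equally reasonable reading of "pooled".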

Adapting to size, position, and scene complexity

At the output, CEIFNet uses a dynamic detection head that can adjust its behavior according to what it sees. Instead of using fixed filters, this final stage applies three forms of attention at once: it can prefer certain object sizes, focus on the most promising locations in the image, and emphasize the most informative feature channels. Together with a feature-pyramid structure that preserves an extra fine-grained layer, this makes the system more responsive to tiny, densely packed targets in realistic drone footage, from crowded intersections to busy parking lots and thermal infrared scenes at night.
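The three kinds of attention can be pictured as three sets of multiplicative weights: one per pyramid level, one per spatial position, and one per channel. The sketch below is a deliberately simplified stand-in, assuming each weight is just a sigmoid of an average activation; a real dynamic head would learn these weights, and the function and variable names here are hypothetical.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def mean(values):
    values = list(values)
    return sum(values) / len(values)

def dynamic_head(levels):
    """levels[l][c][p]: pyramid level l, channel c, spatial position p."""
    out = []
    for level in levels:
        n_pos = len(level[0])
        # Scale attention: one weight for the whole level.
        scale_w = sigmoid(mean(v for ch in level for v in ch))
        # Spatial attention: one weight per position, averaged over channels.
        spatial_w = [sigmoid(mean(ch[p] for ch in level)) for p in range(n_pos)]
        # Channel attention: one weight per channel, averaged over positions.
        channel_w = [sigmoid(mean(ch)) for ch in level]
        out.append([[v * scale_w * spatial_w[p] * channel_w[c]
                     for p, v in enumerate(ch)]
                    for c, ch in enumerate(level)])
    return out

# Two toy levels, each with 2 channels and 2 positions.
levels = [
    [[0.0, 4.0], [0.0, 2.0]],
    [[1.0, 1.0], [1.0, 1.0]],
]
reweighted = dynamic_head(levels)
```

All three weights are computed from the raw input in this sketch, whereas a learned head may apply them one after another; the point is only that the output depends on the content of the features rather than on fixed filters.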

Proving the gains in real drone scenarios

The researchers tested CEIFNet on two demanding drone datasets: VisDrone2019, consisting of daylight urban and suburban scenes, and HIT-UAV, a thermal infrared collection where many targets are faint and small. On both, the new system detected objects more accurately than a strong YOLO-based baseline and a range of other modern detectors, while still running fast enough for real-time use on a powerful graphics card. Careful ablation experiments showed that each piece—the hybrid block, the edge module, the extra fine layer, and the dynamic head—contributed to the overall boost.

What this means for everyday technology

For non-specialists, the takeaway is that CEIFNet offers a smarter way for drones to “notice the little things” in large, complex scenes. By preserving edge information, mixing local detail with global context, and adapting its attention dynamically, the network can spot small objects that other systems miss or misplace. This makes aerial monitoring more reliable for tasks like traffic safety, search and rescue, and precision farming, and it points toward future systems that can extract trustworthy information from ever-higher and wider views of our world.

Citation: Xiao, J., Li, C., Chen, H. et al. Cross-stage edge information fusion network for small object detection in aerial images. Sci Rep 16, 7639 (2026). https://doi.org/10.1038/s41598-026-36251-5

Keywords: aerial object detection, small objects, drone imaging, edge-based vision, deep learning