Clear Sky Science

Optimizing sepsis mortality prediction using hybrid federated learning and explainable AI framework

Why deadly infections still catch hospitals off guard

Sepsis is one of the most dangerous emergencies in modern medicine. A routine infection, whether in the urinary tract, the lungs, or even the skin, can suddenly trigger a body‑wide reaction that shuts down vital organs and can cause death within hours. Doctors know that acting early saves lives, yet spotting which patients are about to spiral into trouble remains difficult. This study explores how a new blend of privacy‑preserving artificial intelligence and "glass box" explanations could help hospitals flag high‑risk sepsis patients sooner, without exposing sensitive medical records.

From simple scoring charts to smart, data‑hungry tools

For years, many hospitals have relied on checklists and scoring systems such as SOFA and qSOFA. These tools tally a limited set of measurements, such as blood pressure and breathing rate, to give a rough sense of how sick a patient is. But they are often applied late, and they ignore the rich streams of information now stored in electronic health records and bedside monitors. As a result, they may miss the complex patterns that warn of sepsis‑related organ failure and death. Researchers have turned to machine learning, which can sift through thousands of data points per patient, but that shift brings two new problems: hospitals are reluctant to pool their raw data for fear of privacy breaches, and many advanced models act like opaque "black boxes" that clinicians struggle to trust.
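To show how coarse these bedside charts are, here is a minimal sketch of qSOFA, the simpler of the two scores, as defined in the Sepsis-3 consensus: one point each for a respiratory rate of 22 breaths per minute or more, a systolic blood pressure of 100 mmHg or less, and altered mentation (approximated here as a Glasgow Coma Scale below 15). The `Vitals` class and function names are hypothetical, chosen for illustration; this is not a clinical tool and not part of the study.

```python
from dataclasses import dataclass


@dataclass
class Vitals:
    """Hypothetical container for the three bedside inputs qSOFA uses."""
    respiratory_rate: float  # breaths per minute
    systolic_bp: float       # mmHg
    gcs: int                 # Glasgow Coma Scale, 3 to 15


def qsofa_score(v: Vitals) -> int:
    """Count how many of the three qSOFA criteria are met (0 to 3)."""
    score = 0
    if v.respiratory_rate >= 22:
        score += 1
    if v.systolic_bp <= 100:
        score += 1
    if v.gcs < 15:  # assumption: altered mentation approximated as GCS below 15
        score += 1
    return score


def qsofa_positive(v: Vitals) -> bool:
    """A score of 2 or more is the conventional flag for higher risk."""
    return qsofa_score(v) >= 2


if __name__ == "__main__":
    patient = Vitals(respiratory_rate=24, systolic_bp=95, gcs=15)
    print(qsofa_score(patient), qsofa_positive(patient))
```

Three yes/no thresholds are all the score sees, which is exactly the limitation the machine-learning approach tries to move beyond.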

A network of hospitals that learn without sharing secrets

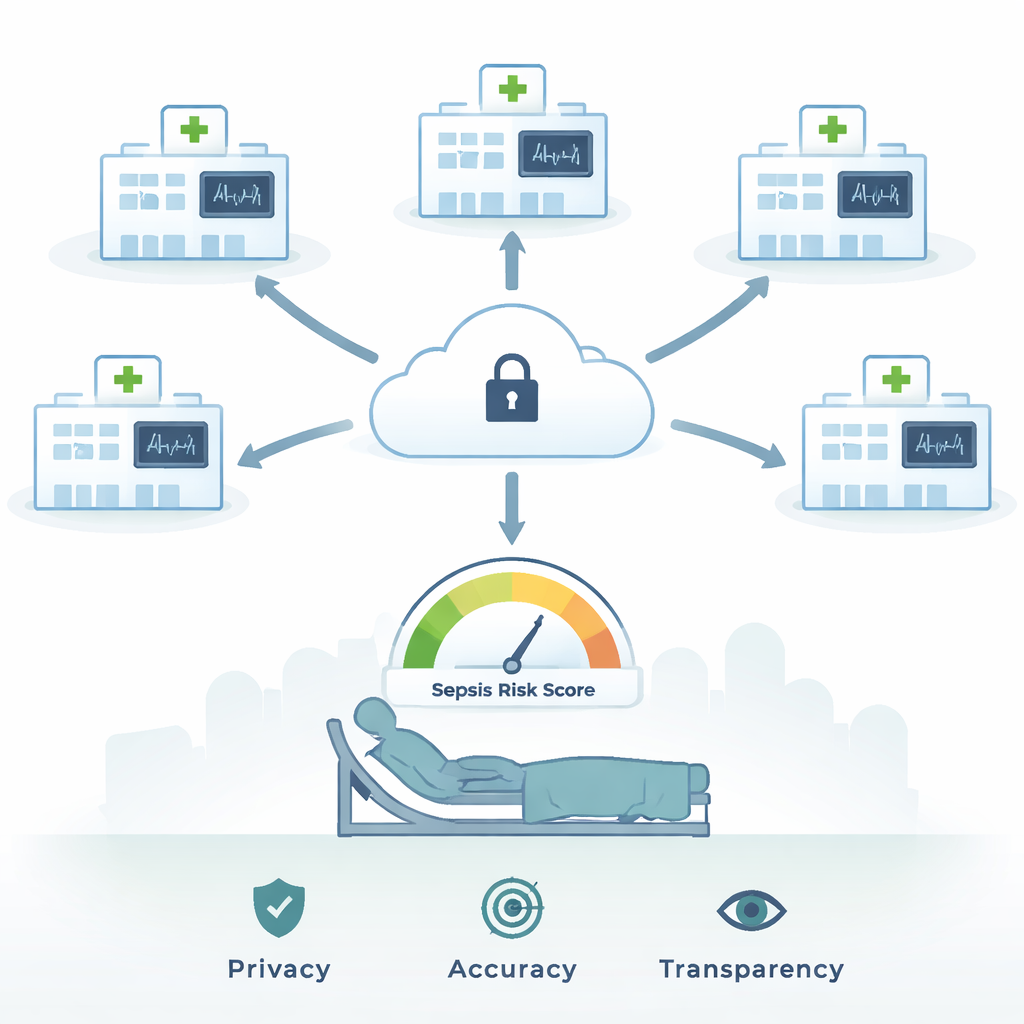

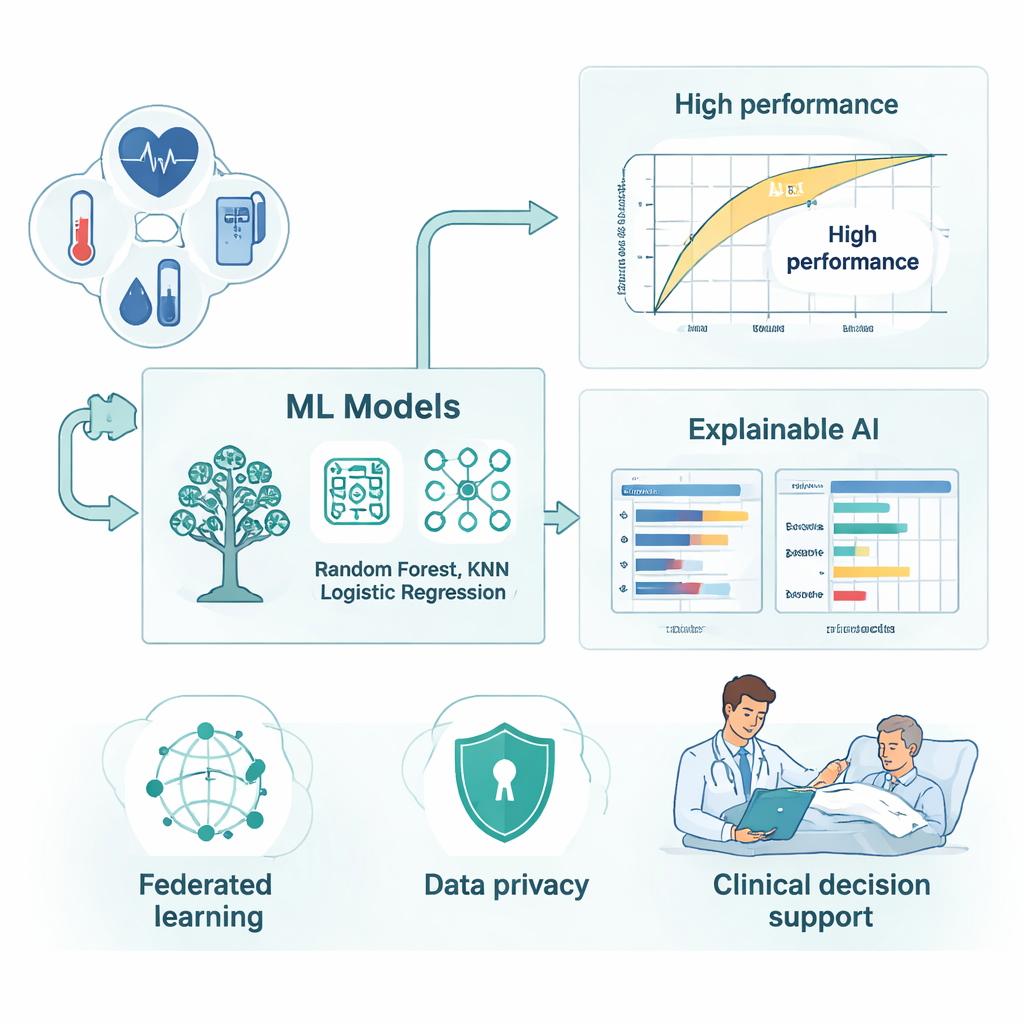

The authors propose a framework that tackles both privacy and trust at once. They use federated learning, a strategy in which each hospital trains the same set of prediction models on its own intensive care unit data—heart rate, blood pressure, oxygen levels, lab tests, and more—without ever sending patient records to a central server. Instead, only model updates are securely combined in the cloud to form a stronger global model. This way, the system learns from a large and diverse pool of patients while keeping their records inside each institution’s firewall. To avoid the model simply learning that "most patients survive," the team also re‑balanced the data so that fatal and non‑fatal sepsis cases were represented more evenly, using a technique that creates realistic synthetic examples of the rarer outcome.
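This summary does not say exactly how the hospitals' updates are combined or which rebalancing method is used. The sketch below assumes two common choices: federated averaging (FedAvg), where each site's model parameters are weighted by its number of patients, and a SMOTE-style oversampler that creates a synthetic non-survivor by interpolating between a real one and a nearby neighbour. All function names and numbers are hypothetical. Parameter averaging fits models with a numeric weight vector, such as logistic regression; tree ensembles like Random Forest cannot simply be averaged parameter by parameter and need a different aggregation scheme.

```python
import random
from typing import List, Sequence

Vector = List[float]


def federated_average(local_weights: Sequence[Vector], local_sizes: Sequence[int]) -> Vector:
    """FedAvg-style aggregation: weight each site's parameters by its share of patients."""
    total = sum(local_sizes)
    dim = len(local_weights[0])
    global_weights = [0.0] * dim
    for weights, n_patients in zip(local_weights, local_sizes):
        for i in range(dim):
            global_weights[i] += weights[i] * n_patients / total
    return global_weights


def smote_like_oversample(minority: Sequence[Vector], n_new: int, k: int = 3, seed: int = 0) -> List[Vector]:
    """Make synthetic minority rows by interpolating between a row and one of its k nearest minority neighbours."""
    rng = random.Random(seed)
    synthetic: List[Vector] = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Rank the other minority rows by squared Euclidean distance to the base row.
        neighbours = sorted(
            (row for row in minority if row is not base),
            key=lambda row: sum((a - b) ** 2 for a, b in zip(base, row)),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # random position on the segment from base to other
        synthetic.append([a + gap * (b - a) for a, b in zip(base, other)])
    return synthetic


if __name__ == "__main__":
    # Three hypothetical hospitals, each holding a 2-parameter logistic-regression model.
    site_weights = [[0.2, 1.0], [0.4, 0.8], [0.3, 1.2]]
    site_sizes = [100, 300, 100]
    print(federated_average(site_weights, site_sizes))

    # Hypothetical non-survivor rows (e.g. scaled heart rate and oxygen saturation).
    deaths = [[1.0, 2.0], [2.0, 3.0], [3.0, 1.0], [2.5, 2.5]]
    print(smote_like_oversample(deaths, n_new=3, k=2))
```

Only the short parameter vectors travel to the server; the patient rows stay inside each hospital. A common precaution is to oversample only the training rows, so synthetic patients never leak into the data used to measure performance.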

Opening the black box for doctors at the bedside

Inside this federated setup, the researchers trained several well‑known machine‑learning models, including Random Forest, LightGBM, XGBoost, K‑Nearest Neighbors, and Logistic Regression. They then wrapped these models in an "explainable AI" layer designed to show not just a risk score, but also the reasoning behind it. Tools such as SHAP and LIME break down each prediction into contributions from specific clinical features: how much a rising respiratory rate, a longer stay in intensive care, or a drop in oxygen saturation pushes a patient toward the high‑risk category. Partial dependence plots add a big‑picture view, revealing, for example, how the predicted risk climbs once breathing rate or length of stay passes certain thresholds. These explanations help clinicians see when the model's warning matches their own judgment, and when it may be reacting to hidden trends in the data that deserve a closer look.
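The idea behind SHAP and partial dependence can be sketched in a few lines. The example below computes exact Shapley values for one patient by trying every combination of features, with features "switched off" by replacing them with a single reference patient's values, and computes a partial dependence curve by averaging predictions while one feature is forced to each grid value. The `toy_risk` model, its coefficients, and both patients are invented for illustration and are not the study's model. LIME, which fits a small local surrogate model instead, is not shown, and the SHAP library itself uses faster approximations and model-specific algorithms (for example, for tree ensembles) rather than this brute-force enumeration.

```python
import math
from itertools import combinations
from math import factorial
from typing import Callable, List, Sequence

Model = Callable[[Sequence[float]], float]


def toy_risk(features: Sequence[float]) -> float:
    """Hypothetical risk model: respiratory rate, ICU length of stay (days), SpO2 (%)."""
    resp_rate, los_days, spo2 = features
    z = 0.15 * (resp_rate - 20) + 0.1 * los_days - 0.2 * (spo2 - 95)
    return 1 / (1 + math.exp(-z))


def shapley_values(model: Model, x: Sequence[float], baseline: Sequence[float]) -> List[float]:
    """Exact Shapley attributions; features outside a coalition take the baseline value."""
    n = len(x)

    def value(coalition: set) -> float:
        point = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(point)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size that exclude feature i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def partial_dependence(model: Model, data: Sequence[Sequence[float]], feature: int,
                       grid: Sequence[float]) -> List[float]:
    """Average prediction over the dataset with one feature forced to each grid value."""
    curve = []
    for g in grid:
        preds = [model([g if j == feature else v for j, v in enumerate(row)]) for row in data]
        curve.append(sum(preds) / len(preds))
    return curve


if __name__ == "__main__":
    patient = [28.0, 6.0, 89.0]    # fast breathing, long stay, low oxygen
    reference = [18.0, 2.0, 97.0]  # hypothetical "typical" patient
    print([round(p, 3) for p in shapley_values(toy_risk, patient, reference)])
    print(partial_dependence(toy_risk, [patient, reference], feature=0, grid=[16, 22, 28]))
```

The Shapley values always add up to the gap between this patient's predicted risk and the reference patient's, which is what lets a bedside display split one risk score into per-feature pushes up or down.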

Strong performance without sacrificing privacy

Using a large, publicly available sepsis dataset built from intensive care records, the team tested their approach in both traditional centralized training and the more realistic federated setting. Ensemble models—especially Random Forest and gradient‑boosting methods—stood out. In the centralized case, the best model correctly classified nearly all patients and achieved near‑perfect discrimination between survivors and non‑survivors. When the same models were trained in a simulated network of five virtual hospitals with differing patient mixes, performance dipped only slightly, yet remained extremely high. That small trade‑off bought significant gains in privacy and institutional independence: no raw patient data ever left the local servers, and the system still caught the vast majority of high‑risk cases.
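This summary reports results in words rather than numbers. As a hedged reading, "correctly classified" corresponds to accuracy, "catching high-risk cases" to sensitivity (recall), and "discrimination between survivors and non-survivors" to the area under the ROC curve (AUC); the exact metrics the authors report are not listed here. The sketch below computes all three, assuming label 1 means the patient died, label 0 means survival, and a 0.5 decision threshold. The labels and scores are made up and have no connection to the study's results.

```python
from typing import List, Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Share of patients whose predicted outcome matches the actual outcome."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def sensitivity(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Share of actual non-survivors (label 1) that the model flags as high risk."""
    preds_for_deaths = [p for t, p in zip(y_true, y_pred) if t == 1]
    return sum(preds_for_deaths) / len(preds_for_deaths)


def roc_auc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """Probability a random non-survivor scores higher than a random survivor (ties count half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def threshold(scores: Sequence[float], cutoff: float = 0.5) -> List[int]:
    """Turn risk scores into high-risk (1) or low-risk (0) flags."""
    return [1 if s >= cutoff else 0 for s in scores]


if __name__ == "__main__":
    y_true = [0, 0, 1, 1, 0, 1]
    scores = [0.1, 0.4, 0.35, 0.8, 0.2, 0.9]
    y_pred = threshold(scores)
    print(accuracy(y_true, y_pred), sensitivity(y_true, y_pred), roc_auc(y_true, scores))
```

AUC uses the raw risk scores and ignores the threshold, so a model can rank patients almost perfectly while still missing some deaths at a given cutoff; that is why sensitivity is worth reporting alongside it.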

What this means for patients and clinicians

For a non‑specialist, the takeaway is straightforward: by letting hospitals "learn together" without sharing their actual charts, and by forcing the computer to show its work, this framework brings powerful sepsis‑risk prediction closer to real‑world use. Doctors could receive early, explainable alerts that a patient’s infection is tipping toward organ failure, backed by clear pointers to the vital signs and lab results driving that warning. According to the study, such a system can remain accurate even under strict privacy rules and varied hospital conditions. If validated in live clinical environments, this hybrid of federated learning and explainable AI could become an important safety net in intensive care units, catching more sepsis patients before it is too late.

Citation: Fuzail, M.Z., Din, I.u., Ahmed, S. et al. Optimizing sepsis mortality prediction using hybrid federated learning and explainable AI framework. Sci Rep 16, 5218 (2026). https://doi.org/10.1038/s41598-026-36245-3

Keywords: sepsis, mortality prediction, federated learning, explainable AI, intensive care