Clear Sky Science

Deep atrous context convolution generative adversarial network with corner key point extracted feature for nuts classification

Smarter Sorting for Everyday Nuts

From snack mixes to nut butters, billions of nuts move through factories every year, and each one must be sorted by type and quality. Today this is often done by machines that still struggle when nuts look alike or when photos are taken under different lighting. This study introduces an artificial‑intelligence system called DAC‑GAN (deep atrous context convolution generative adversarial network) that can tell eight common nut types apart with near‑perfect accuracy, promising faster, cheaper and more reliable sorting for the food industry.

Why Recognizing Nuts Is Hard

At first glance, a cashew and a peanut seem easy to distinguish. But in real production lines, nuts can be tilted, broken, overlapping, or poorly lit. Traditional computer programs rely on simple hand‑crafted cues, such as color or average shape, which easily break down when conditions change. Deep learning has improved matters by letting computers learn patterns directly from images, but these methods usually demand very large, carefully balanced datasets. For nuts, only a few thousand labeled photos may be available, and some varieties can look confusingly similar, leading to mistakes and biased predictions.

Making More and Better Training Images

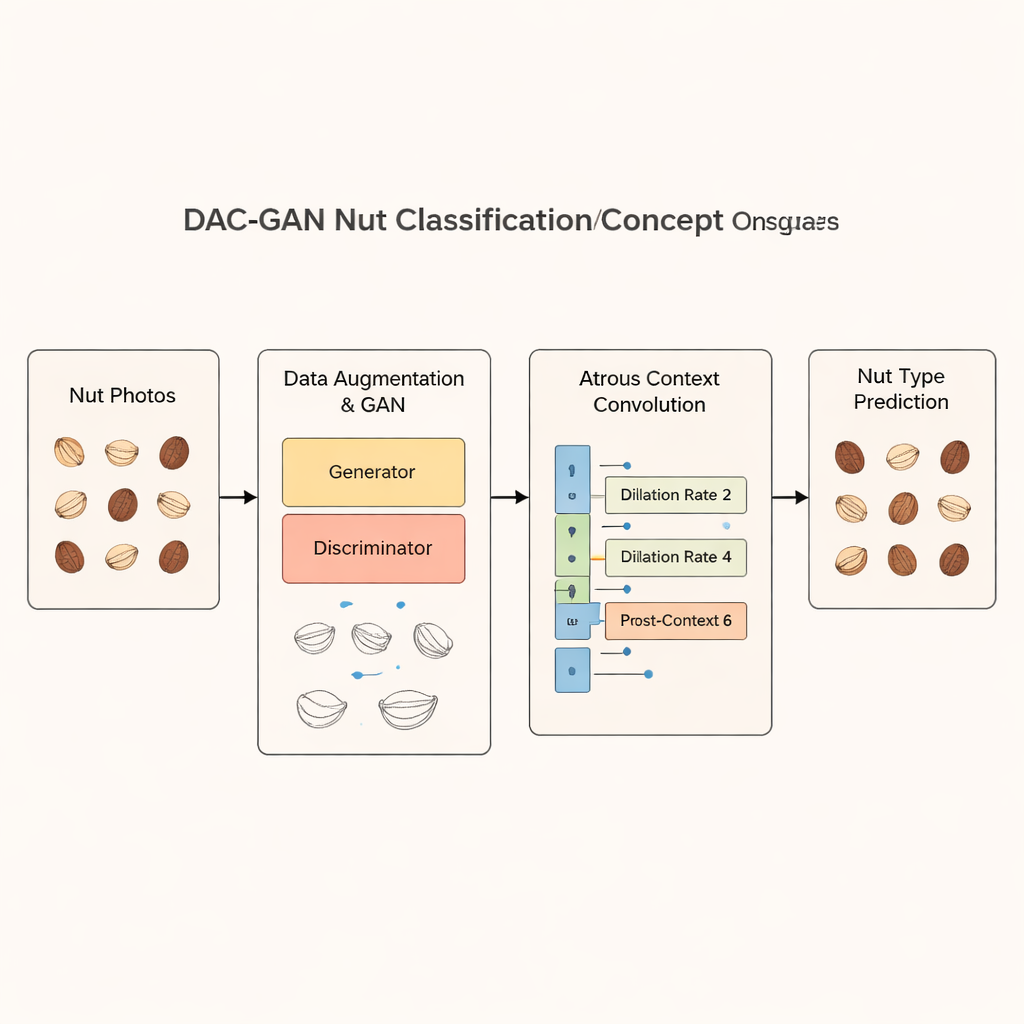

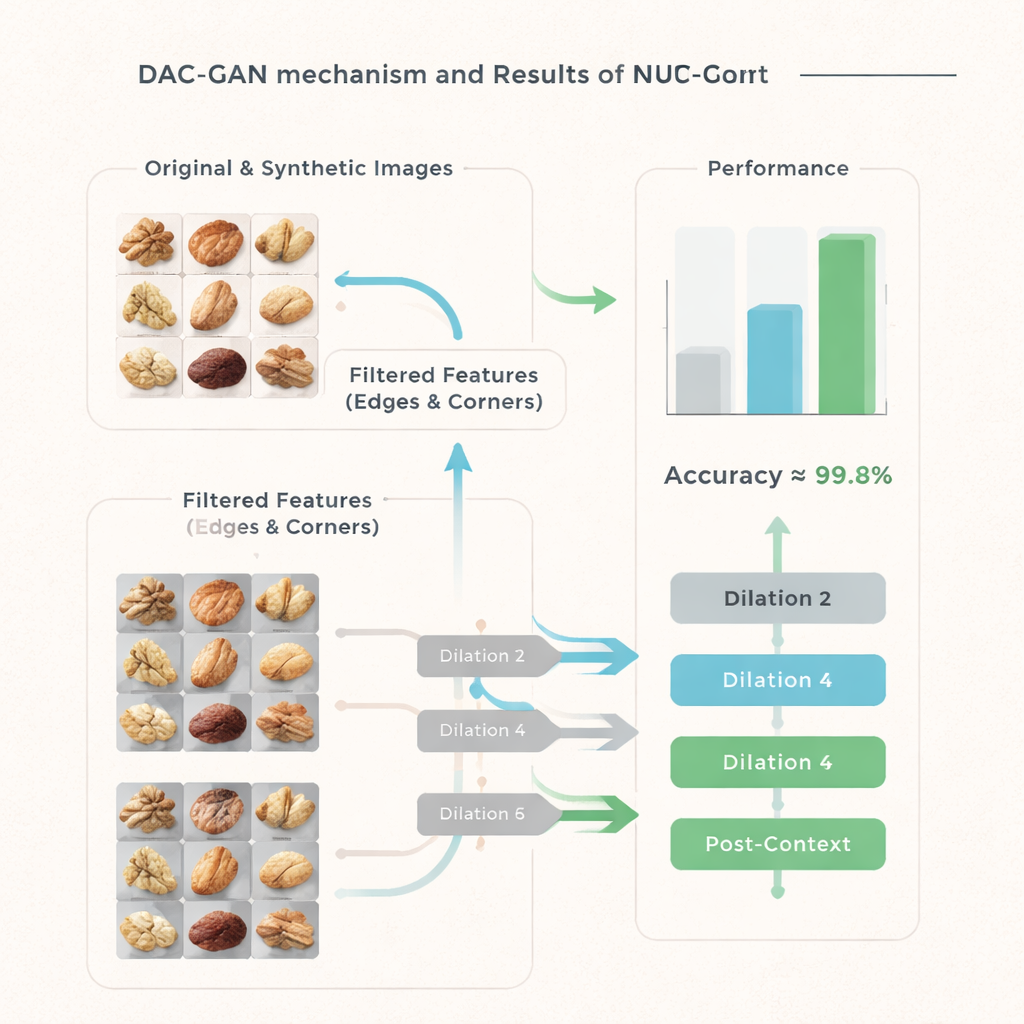

The researchers start with a public “Common Nut” image collection containing 4,000 photos split evenly across eight nut types (500 per type): Brazil nut, cashew, chestnut, peanut, pecan, pistachio, macadamia and walnut. To train a robust model, they need far more examples than this. DAC‑GAN tackles the problem with a generative adversarial network (GAN), a pair of neural networks trained against each other. One part, the generator, learns to create realistic nut images from random noise, while the other, the discriminator, learns to tell real images from fake ones. As the two compete, the generator becomes good enough to produce high‑quality, lifelike synthetic nut images. By combining these artificial images with standard flips and rotations, the team expands the dataset to more than 70,000 images while keeping each nut class perfectly balanced.
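
The adversarial competition between generator and discriminator is usually written as a pair of binary cross-entropy losses. The sketch below assumes the standard GAN objective with the common "non-saturating" generator loss. The summary does not say which loss DAC-GAN actually uses, and the function names are hypothetical. Given the discriminator's probabilities that each image is real, the sketch scores how well each player is doing.

```python
import math


def bce(p, target):
    """Binary cross-entropy for one predicted probability p and a 0/1 target."""
    eps = 1e-12  # clamp to avoid log(0)
    p = min(max(p, eps), 1.0 - eps)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def discriminator_loss(d_real, d_fake):
    """Discriminator is rewarded for D(real) -> 1 and D(fake) -> 0."""
    real_term = sum(bce(p, 1.0) for p in d_real) / len(d_real)
    fake_term = sum(bce(p, 0.0) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: generator is rewarded for D(fake) -> 1."""
    return sum(bce(p, 1.0) for p in d_fake) / len(d_fake)


# Early training: the discriminator easily spots the fakes.
print(discriminator_loss([0.9, 0.8], [0.1, 0.2]), generator_loss([0.1, 0.2]))
# Equilibrium: the discriminator is reduced to guessing (0.5 everywhere).
print(discriminator_loss([0.5, 0.5], [0.5, 0.5]), generator_loss([0.5, 0.5]))
```

Suppose the discriminator can no longer tell real from fake and outputs 0.5 everywhere. Its loss then settles at 2 ln 2 (about 1.386) and the generator's at ln 2 (about 0.693), the textbook equilibrium values.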

Teaching the Model to Focus on Nut Details

Simply adding more images is not enough; the model also has to focus on the right visual clues. DAC‑GAN introduces a filtration step that converts nut photos to grayscale and then extracts strong outlines, edges and distinctive corner points. These “corner key point features” capture where a nut’s shape bends or its surface texture changes, details that often distinguish one variety from another. Additional filters highlight overall kernel outlines and internal patterns. Instead of feeding raw photos into the classifier, the system works on these sharpened feature images, which emphasize geometry and texture while downplaying distracting background and color variations.
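
The summary does not name the corner detector. The sketch below therefore uses the classic Harris detector as an assumed stand-in, in three steps:

1. Convert the image to grayscale. The ITU-R BT.601 luma weights are also an assumption.
2. Compute Sobel gradients.
3. Score each pixel by R = det(M) - k * trace(M)^2, where M sums gradient products over a 3x3 window.

Corners score strongly positive, straight edges negative, and flat regions near zero. A local-maximum check then keeps one key point per corner. The sketch is written in pure Python on nested lists to stay dependency-free, and all function names are hypothetical.

```python
def to_gray(rgb):
    """RGB (nested lists of (r, g, b) tuples) -> grayscale via BT.601 luma weights."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row] for row in rgb]


def sobel(gray):
    """Horizontal and vertical Sobel gradients; border pixels are left at 0."""
    h, w = len(gray), len(gray[0])
    gx = [[0.0] * w for _ in range(h)]
    gy = [[0.0] * w for _ in range(h)]
    g = gray
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx[y][x] = (g[y - 1][x + 1] + 2 * g[y][x + 1] + g[y + 1][x + 1]
                        - g[y - 1][x - 1] - 2 * g[y][x - 1] - g[y + 1][x - 1])
            gy[y][x] = (g[y + 1][x - 1] + 2 * g[y + 1][x] + g[y + 1][x + 1]
                        - g[y - 1][x - 1] - 2 * g[y - 1][x] - g[y - 1][x + 1])
    return gx, gy


def harris(gray, k=0.04):
    """Harris response R = det(M) - k * trace(M)^2 over a 3x3 window."""
    h, w = len(gray), len(gray[0])
    gx, gy = sobel(gray)
    resp = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            sxx = syy = sxy = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    ix, iy = gx[y + dy][x + dx], gy[y + dy][x + dx]
                    sxx += ix * ix
                    syy += iy * iy
                    sxy += ix * iy
            resp[y][x] = (sxx * syy - sxy * sxy) - k * (sxx + syy) ** 2
    return resp


def corner_points(resp, rel_thresh=0.5):
    """Strict local maxima whose response is at least rel_thresh * the global peak."""
    peak = max(max(row) for row in resp)
    h, w = len(resp), len(resp[0])
    pts = []
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            v = resp[y][x]
            neighbours = [resp[y + dy][x + dx]
                          for dy in (-1, 0, 1) for dx in (-1, 0, 1) if dy or dx]
            if v > 0 and v >= rel_thresh * peak and v > max(neighbours):
                pts.append((y, x))
    return pts


# Synthetic 12x12 image: a white square (rows/cols 3..8) on a black background.
square = [[(1.0, 1.0, 1.0) if 3 <= y <= 8 and 3 <= x <= 8 else (0.0, 0.0, 0.0)
           for x in range(12)] for y in range(12)]
resp = harris(to_gray(square))
print(corner_points(resp))
```

On the synthetic square, the corner pixel at (3, 3) scores about 2015. A point in the middle of the left edge scores about -369, and the flat interior scores essentially 0. So only the four corners survive as key points. A production pipeline would more likely use an image library's built-in detector than this loop-based version.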

Seeing the Whole Nut at Multiple Scales

The heart of DAC‑GAN is a refined version of a technique called atrous, or dilated, convolution. Ordinary convolutional layers in deep networks see only small patches at a time. Atrous convolution spaces out the sampling points, leaving gaps between them, so the model takes in a broader view without downsampling the image or adding parameters. The authors add “pre‑context” and “post‑context” blocks around this core operation; these summarize the entire image and feed that summary back into the layer. By running three such convolutions with different dilation rates, the network learns to capture both the tiny grooves on a nut’s surface and its overall silhouette. It then combines these views into a rich, context‑aware representation before making a decision.
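
A 1-D sketch shows why dilation widens the view without shrinking the output. Images are 2-D, but the indexing works the same way along each axis. A k-tap kernel with dilation d spans d * (k - 1) + 1 input samples. Three taps at rates 1, 2 and 3 therefore cover 3, 5 and 7 samples, while zero padding keeps the output as long as the input. Several parts are hypothetical simplifications:

- the dilation rates 1, 2 and 3;
- fusing the branches by averaging;
- the `add_global_context` helper, which adds a scaled global mean to every position.

The paper's pre- and post-context blocks are learned layers whose exact form is not given here.

```python
def dilated_conv1d(signal, kernel, dilation):
    """'Same'-length 1-D atrous convolution (cross-correlation, as in deep learning).

    Taps sit `dilation` samples apart; zero padding keeps the output
    as long as the input, so no resolution is lost.
    """
    k = len(kernel)
    half = dilation * (k - 1) // 2  # centre offset (exact for odd k)
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for j, w in enumerate(kernel):
            idx = i - half + j * dilation
            if 0 <= idx < len(signal):  # implicit zero padding
                acc += w * signal[idx]
        out.append(acc)
    return out


def span(k, dilation):
    """Number of input samples covered by one k-tap dilated kernel."""
    return dilation * (k - 1) + 1


def add_global_context(x, weight=1.0):
    """Hypothetical stand-in for a context block: add a scaled global mean everywhere."""
    mean = sum(x) / len(x)
    return [v + weight * mean for v in x]


def multi_scale_block(signal, kernel=(1.0, 1.0, 1.0), rates=(1, 2, 3)):
    """Pre-context -> one dilated branch per rate -> average -> post-context (all assumed)."""
    x = add_global_context(signal)                              # "pre-context"
    branches = [dilated_conv1d(x, kernel, r) for r in rates]    # one branch per rate
    fused = [sum(vals) / len(vals) for vals in zip(*branches)]  # fuse the scales
    return add_global_context(fused)                            # "post-context"


spike = [0.0] * 4 + [1.0] + [0.0] * 4  # single spike at index 4 of 9 samples
for rate in (1, 2, 3):
    out = dilated_conv1d(spike, [1.0, 1.0, 1.0], rate)
    print(rate, span(3, rate), [i for i, v in enumerate(out) if v])
print(len(multi_scale_block(spike)))
```

A single spike shows the spacing directly. At rate 2, the spike reappears at positions 2, 4 and 6 of the 9-sample output. At rate 3, it reappears at positions 1, 4 and 7. The output stays 9 samples long in every case.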

How Well Does It Work?

The team puts DAC‑GAN through an extensive series of tests. They compare it with many well‑known neural networks, from classic models like VGG and ResNet to newer transformer‑based designs, both with and without synthetic data. Across accuracy, precision, recall and the combined F1‑score, DAC‑GAN consistently outperforms all alternatives by a large margin. On the held‑out test set of real nut images, it correctly identifies the nut type in 99.83% of cases. Even the most competitive rival models lag several percentage points behind, and statistical tests indicate that DAC‑GAN’s advantage is very unlikely to be due to chance.
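
All four reported metrics can be computed from a list of true and predicted labels. This sketch assumes macro averaging, where each nut class is weighted equally and F1 is averaged per class. Macro averaging is one common convention; the summary does not say which averaging the authors used. The function name is hypothetical.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy plus macro-averaged precision, recall and F1 (each class weighted equally)."""
    labels = sorted(set(y_true) | set(y_pred))
    pairs = list(zip(y_true, y_pred))
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    precisions, recalls, f1s = [], [], []
    for c in labels:
        tp = sum(t == c and p == c for t, p in pairs)  # correctly predicted as c
        fp = sum(t != c and p == c for t, p in pairs)  # wrongly predicted as c
        fn = sum(t == c and p != c for t, p in pairs)  # true c predicted as something else
        prec = tp / (tp + fp) if tp + fp else 0.0
        rec = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        precisions.append(prec)
        recalls.append(rec)
        f1s.append(f1)
    n = len(labels)
    return {
        "accuracy": accuracy,
        "precision": sum(precisions) / n,
        "recall": sum(recalls) / n,
        "f1": sum(f1s) / n,  # mean of per-class F1 scores
    }


y_true = ["cashew", "cashew", "peanut", "peanut"]
y_pred = ["cashew", "peanut", "peanut", "peanut"]
print(classification_metrics(y_true, y_pred))
```

Take two cashews and two peanuts, where one cashew is mistaken for a peanut. Accuracy is then 0.75, macro precision about 0.833, macro recall 0.75 and macro F1 about 0.733. When every class has the same number of test images, support-weighted averages equal these macro averages.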

What This Means for Food and Beyond

For non‑specialists, the takeaway is simple: by smartly inventing extra training images and teaching the network to pay attention to edges, corners and multi‑scale context, DAC‑GAN turns a visually subtle problem into one it can solve almost perfectly. In practical terms, this approach could lead to automated nut‑sorting machines that handle large volumes with very few errors, improving quality control while reducing manual labor. Because the method is general, it could also be adapted to other food products—or even industrial parts—that must be distinguished based on fine visual details under imperfect imaging conditions.

Citation: Devi, M.S., Jaiganesh, M., Priya, S. et al. Deep atrous context convolution generative adversarial network with corner key point extracted feature for nuts classification. Sci Rep 16, 6409 (2026). https://doi.org/10.1038/s41598-026-36238-2

Keywords: nut classification, deep learning, image augmentation, food sorting, computer vision