Clear Sky Science

Agriculture surrounding monitoring and object identification based on optimized you only look once and single shot multibox detector setups using combined vision and thermal images

Smarter Eyes for Safer Farm Machines

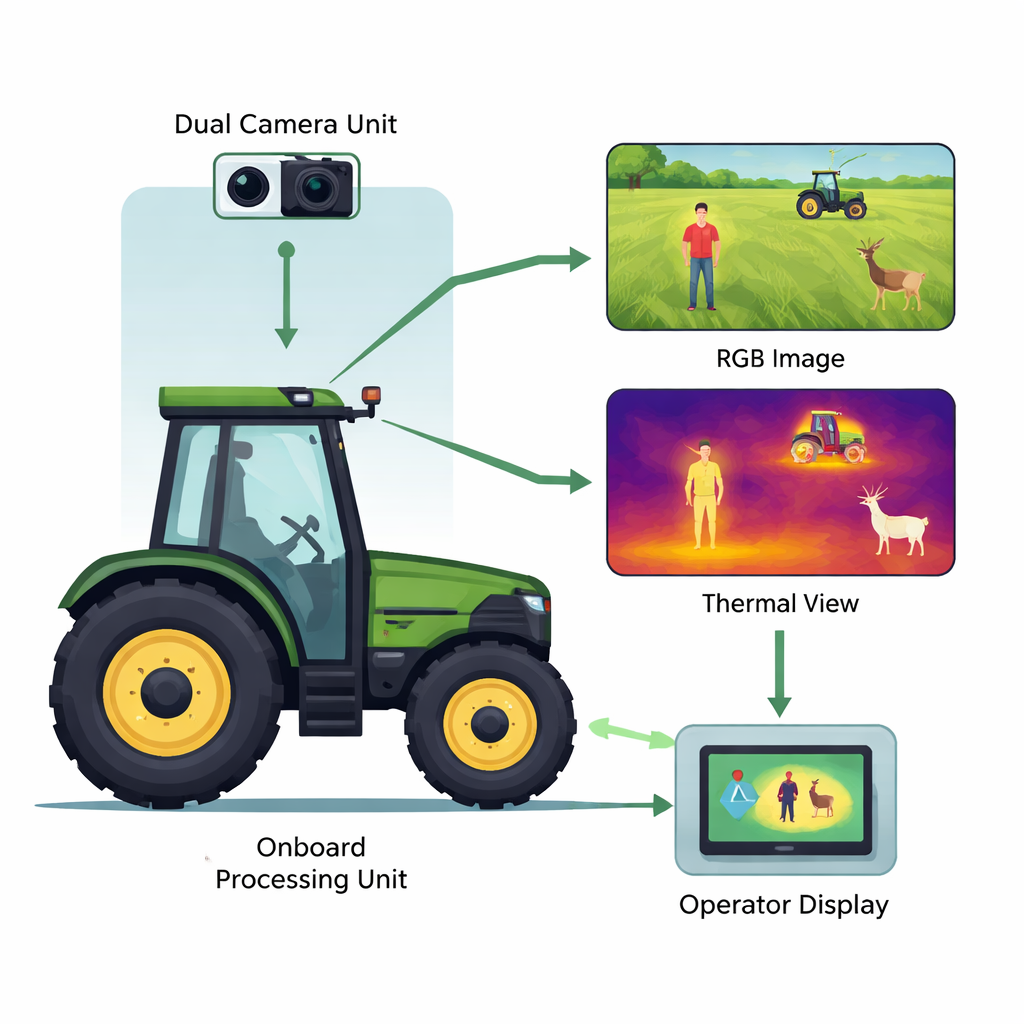

Modern tractors and harvesters are getting bigger, faster and more automated, which raises a simple but serious question: how do you make sure they don’t hit people, animals or other machines hidden in dust, fog or darkness? This paper describes a practical safety system that gives farm equipment a kind of “super vision” by combining normal video and heat-sensing cameras, then compares different artificial-intelligence setups to see which can spot hazards most accurately and quickly.

Why Farm Work Needs Better Vision

Farming now relies heavily on large, powerful machines that work long hours, often at night or in bad weather. A basic video camera can help an operator see around a tractor, but ordinary images fail when there is fog, rain, bright glare or darkness. Thermal cameras, which see heat rather than light, work well in those tough conditions and make warm bodies—people and animals—stand out from the background. The authors argue that combining both types of images is the best way to build an affordable warning system that can be retrofitted onto existing machinery and integrated with standard tractor control panels.

How the Dual and Unified Systems Work

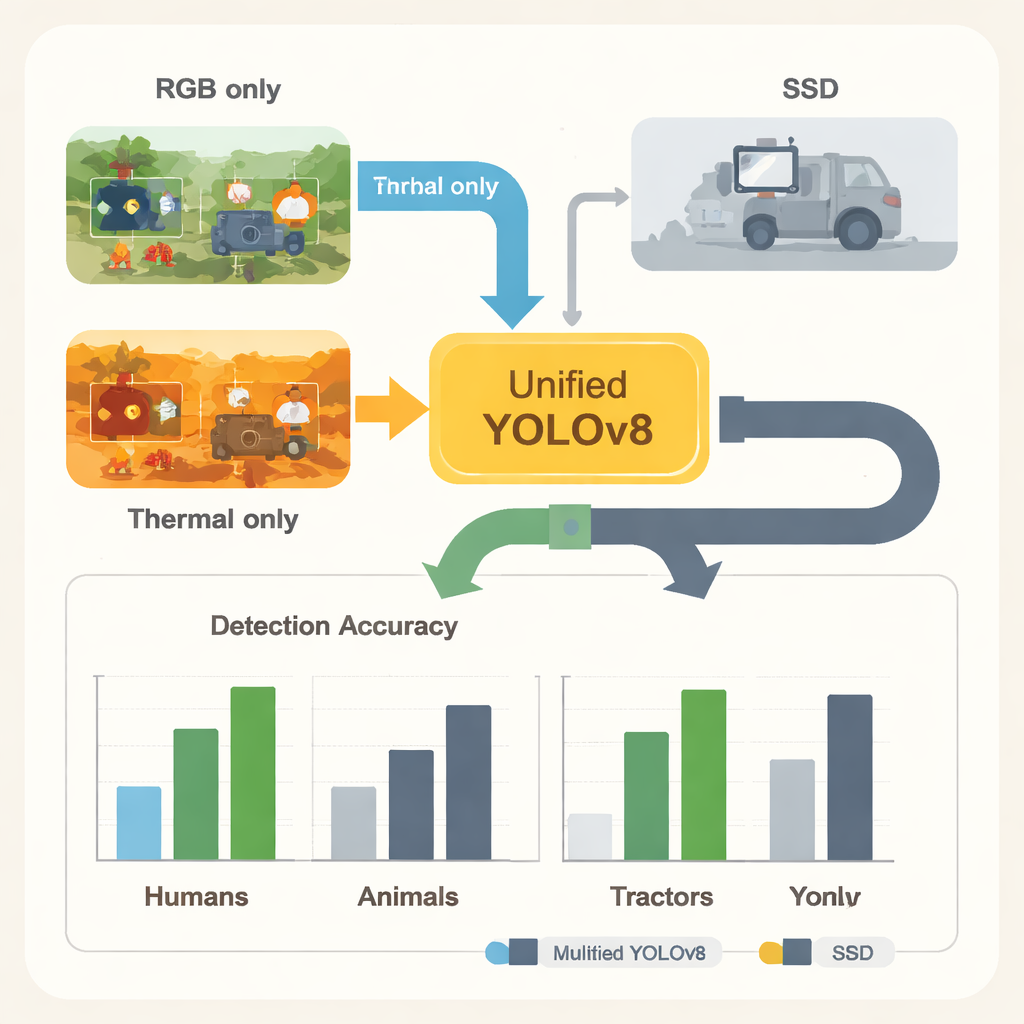

The team mounted a combined RGB (normal color) and thermal camera unit on a tractor roof and fed both image streams to a low-cost processing unit in the cab. They explored two main ways to use artificial intelligence to detect objects in these images. In the first, “dual-network” approach, one neural network was trained only on normal images and a second network only on thermal images; their results were then merged. In the second, “unified” approach, the two images were carefully aligned, stacked together and passed into a single network that learned from both at once. Both designs were implemented with YOLOv8, a family of fast object-detection models from the YOLO (“you only look once”) line, and with an alternative design called SSD (single shot multibox detector) that was tailored for small, embedded computers.
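The difference between the two approaches can be illustrated with a minimal Python sketch. It is not the authors' code: the summary does not say how the dual-network results were merged, so the greedy overlap-based merge below is an assumption, and the names `Detection`, `stack_rgb_thermal` and `merge_detections` are hypothetical. Images are represented as plain nested lists to keep the example self-contained.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    label: str
    score: float
    box: tuple  # (x1, y1, x2, y2) in pixels


def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def stack_rgb_thermal(rgb, thermal):
    """Unified input: append the thermal value as a 4th channel per pixel.

    rgb is an H x W grid of (r, g, b) tuples, thermal an H x W grid of
    scalars. The two images are assumed to be aligned already.
    """
    if len(rgb) != len(thermal) or any(
        len(r) != len(t) for r, t in zip(rgb, thermal)
    ):
        raise ValueError("RGB and thermal images must have the same size")
    return [
        [(*px, t) for px, t in zip(rgb_row, thermal_row)]
        for rgb_row, thermal_row in zip(rgb, thermal)
    ]


def merge_detections(rgb_dets, thermal_dets, iou_thr=0.5):
    """Dual-network output: pool both lists, drop overlapping duplicates.

    Assumed rule: when two boxes share a label and overlap by at least
    iou_thr, only the higher-scoring one is kept.
    """
    merged = []
    pooled = sorted(rgb_dets + thermal_dets, key=lambda d: d.score, reverse=True)
    for det in pooled:
        duplicate = any(
            det.label == kept.label and iou(det.box, kept.box) >= iou_thr
            for kept in merged
        )
        if not duplicate:
            merged.append(det)
    return merged
```

In the unified design the fusion happens before the network sees the data, so only one model runs per frame; in the dual design two models run and the fusion step operates on their outputs.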

Building and Training the Machine’s View of the Field

To teach these networks what to look for, the researchers assembled a large dataset from public image libraries and their own camera recordings. The images covered people, wild and domestic animals, tractors, harvesters, trucks, buses and other farm machines, in both visible and thermal views. Each object was surrounded by a hand-drawn box and given a label, and the images were then augmented—flipped, rotated or slightly blurred—to mimic the variety seen in real fields. The data were split into training, validation and test sets so that the networks could learn on one portion and be judged fairly on images they had never seen before. Special care was taken to measure not just raw accuracy, but also how many computing operations and how many frames per second each model needed, since any real tractor system must run quickly and reliably in the field.
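Two of the data-preparation steps described above, splitting the dataset and flipping images, can be sketched as follows. The split ratios (70/15/15) and the function names are assumptions for illustration; the summary does not state the proportions the authors used. The flip helper shows why augmentation must also transform the hand-drawn boxes, not just the pixels.

```python
import random


def split_dataset(items, train=0.7, val=0.15, seed=0):
    """Shuffle once, then cut into train / validation / test portions.

    The ratios are illustrative defaults, not values from the paper.
    Whatever remains after the train and validation cuts is the test set.
    """
    items = list(items)
    random.Random(seed).shuffle(items)  # fixed seed keeps the split reproducible
    n_train = round(len(items) * train)
    n_val = round(len(items) * val)
    return (
        items[:n_train],
        items[n_train:n_train + n_val],
        items[n_train + n_val:],
    )


def hflip_box(box, image_width):
    """Mirror an (x1, y1, x2, y2) box to match a horizontally flipped image."""
    x1, y1, x2, y2 = box
    return (image_width - x2, y1, image_width - x1, y2)
```

Keeping the three portions disjoint is what allows the test score to reflect performance on images the network has never seen.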

Which Digital Eyes Performed Best?

Across thousands of test images, all of the YOLOv8 setups detected most targets very well, especially large farm machines and warm-bodied animals. The unified model that ingested both RGB and thermal data in a single stream reached an overall score (mean average precision) of about 0.90, slightly ahead of the dual-network setup at 0.88. In other words, fusing both kinds of vision inside one network gave a small but real boost in performance without making the system more complex to operate. The biggest gains from thermal imaging appeared for people and animals in poor lighting, while normal images remained better for detailed shapes like tractors. When the team swapped YOLOv8 for their streamlined SSD model, performance dropped noticeably for most classes, even though SSD trained much faster. YOLOv8, especially its smallest “Nano” version, delivered higher accuracy while still achieving real-time speeds of around 27 frames per second on modest hardware.
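For readers unfamiliar with the overall score quoted above, the sketch below shows one common way to compute average precision for a single class and then average it across classes. It assumes each detection has already been marked as a correct or incorrect match against the labeled boxes; the summary does not say which overlap threshold or interpolation variant the authors used, so this is a generic illustration with hypothetical function names rather than their evaluation code.

```python
def average_precision(scored_hits, n_ground_truth):
    """Area under the precision-recall curve for one class.

    scored_hits: list of (confidence, is_true_positive) pairs.
    n_ground_truth: number of labeled objects of this class.
    Uses the all-point interpolation variant (an assumption here).
    """
    if n_ground_truth <= 0:
        raise ValueError("n_ground_truth must be positive")
    ranked = sorted(scored_hits, key=lambda h: h[0], reverse=True)
    true_positives = 0
    precisions, recalls = [], []
    for rank, (_, hit) in enumerate(ranked, start=1):
        true_positives += bool(hit)
        precisions.append(true_positives / rank)
        recalls.append(true_positives / n_ground_truth)
    # Replace each precision with the best precision at equal or higher recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_recall = 0.0, 0.0
    for precision, recall in zip(precisions, recalls):
        ap += (recall - prev_recall) * precision
        prev_recall = recall
    return ap


def mean_average_precision(per_class_ap):
    """Unweighted mean of the per-class average precisions."""
    return sum(per_class_ap.values()) / len(per_class_ap)
```

A score of 1.0 means every labeled object was found with no false alarms ranked above a correct detection, so values near 0.90 indicate that most, but not all, targets were detected cleanly.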

Turning AI Detections into Simple Warnings

Rather than overwhelming the driver with video feeds, the system converts detections into an easy dashboard view that follows a common tractor communication standard (ISOBUS). On a simple green panel, icons show whether a human, animal or vehicle is in front of the machine, along with distance, direction and how confident the system is. This pared-down interface can run on existing operator terminals and is designed for harsh farm conditions, with protected cameras, stabilized mounts and dust and temperature control planned for future versions.
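As a rough illustration of how a detection might be reduced to a panel entry, the sketch below maps a class label to one of the three icon groups and estimates direction from where the box sits in the image. Everything here is an assumption: the label-to-icon table, the 60-degree horizontal field of view, the linear bearing approximation and the `PanelWarning` type are hypothetical, and no ISOBUS messaging is shown. Distance is left out because the summary does not describe how the system estimates it.

```python
from dataclasses import dataclass

# Hypothetical mapping from detector class names to the three icon groups.
ICON_GROUPS = {
    "person": "human",
    "deer": "animal",
    "cow": "animal",
    "tractor": "vehicle",
    "truck": "vehicle",
}


@dataclass
class PanelWarning:
    icon: str
    bearing_deg: float   # negative = left of center, positive = right
    confidence_pct: int


def to_warning(label, score, box, image_width, hfov_deg=60.0):
    """Turn one detection into a compact record for the operator panel.

    The bearing is a linear approximation: the box center's horizontal
    offset from the image center, scaled by an assumed field of view.
    """
    x1, _, x2, _ = box
    center_x = (x1 + x2) / 2
    bearing = (center_x / image_width - 0.5) * hfov_deg
    return PanelWarning(
        icon=ICON_GROUPS.get(label, "unknown"),
        bearing_deg=round(bearing, 1),
        confidence_pct=round(score * 100),
    )
```

For example, `to_warning("cow", 0.87, (480, 100, 640, 300), 640)` describes an animal to the right of the machine's heading with 87 percent confidence.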

What This Means for Everyday Farming

For a non-specialist, the takeaway is that giving tractors “two kinds of eyes” and a well-chosen AI brain can substantially improve safety without requiring exotic hardware. A single, carefully tuned YOLOv8 network that blends normal and thermal views offers the best mix of accuracy, speed and simplicity among the tested options, clearly outperforming the SSD design. While the system still struggles somewhat to recognize humans in all situations—partly because there were fewer examples of them in the training data—the study shows that practical, camera-based warning systems for farm machinery are both feasible and close to field-ready. With more balanced data and refined fusion methods, future versions could help prevent accidents, protect wildlife and make large-scale farming safer for everyone in and around the field.

Citation: Tarasiuk, K., Mystkowski, A., Ostaszewski, M. et al. Agriculture surrounding monitoring and object identification based on optimized you only look once and single shot multibox detector setups using combined vision and thermal images. Sci Rep 16, 5129 (2026). https://doi.org/10.1038/s41598-026-36181-2

Keywords: agricultural safety, thermal imaging, computer vision, object detection, YOLOv8