Clear Sky Science

Metalens-style image synthesis for metalens imaging via image-to-image translation

Sharper Photos from Thinner Cameras

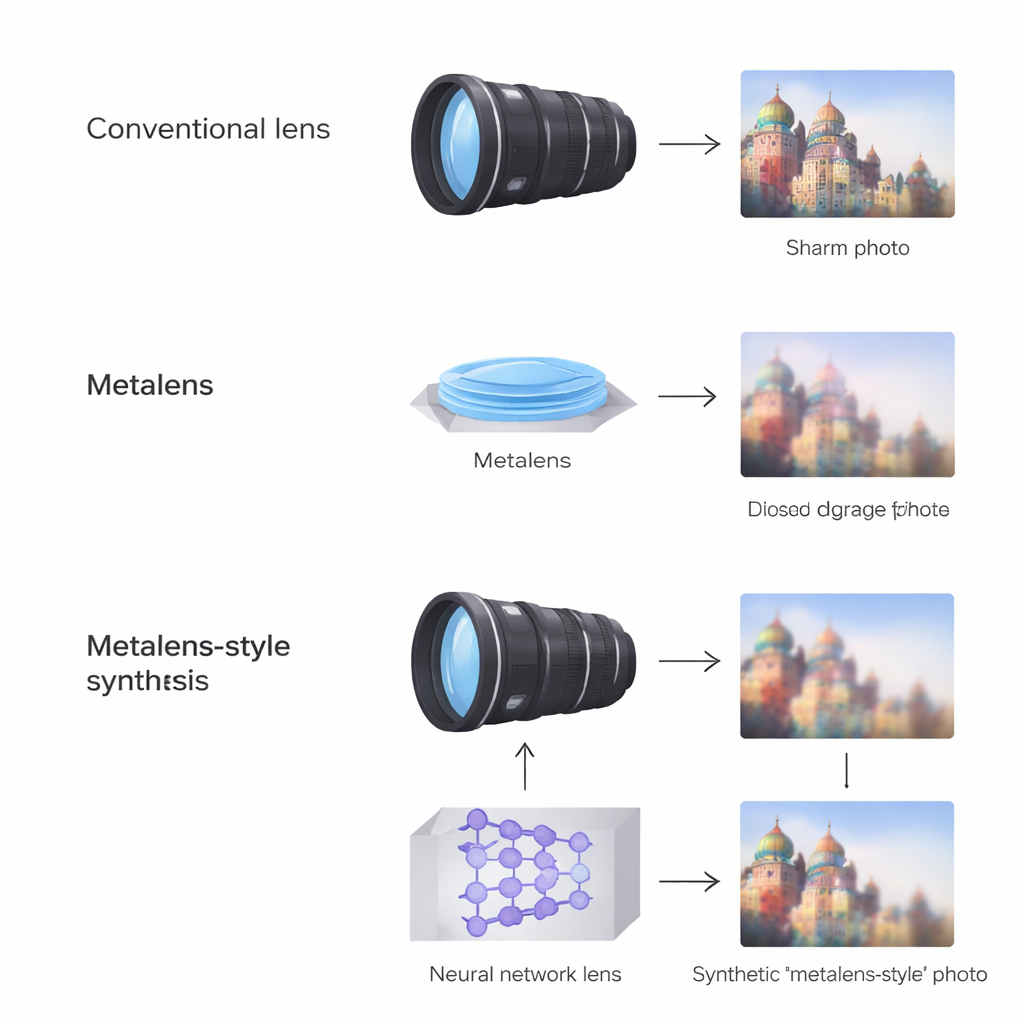

Today’s phones and wearables are packed with cameras, yet the glass lenses that make sharp photos possible still take up precious space. A new class of ultra-thin “metalenses” promises optics flat enough to shrink cameras to the thickness of a credit card. But these flat lenses introduce color fringes and blur that ruin everyday photos. This paper shows how artificial intelligence can learn to mimic those flaws on ordinary pictures, and how the resulting images can then teach cameras to fix metalens photos without hours spent taking calibration shots.

Why Flat Lenses Are So Hard to Tame

Traditional cameras rely on stacks of curved glass elements to bend light gently and correct unwanted blur and distortion. Metalenses, by contrast, are flat surfaces covered with tiny structures smaller than the wavelength of light that steer light in more exotic ways. This makes them incredibly thin and easy to fabricate on wafers, but also very finicky: sharpness and color can vary quickly across the frame, and small shifts in the color of the light, the viewing angle, or fabrication accuracy can cause streaks, halos, and smeared details. For manufacturers, the biggest obstacle is not building metalenses but collecting the thousands of example photos needed to train software to undo these defects for every new design.

Teaching a Network to Imitate a Flawed Lens

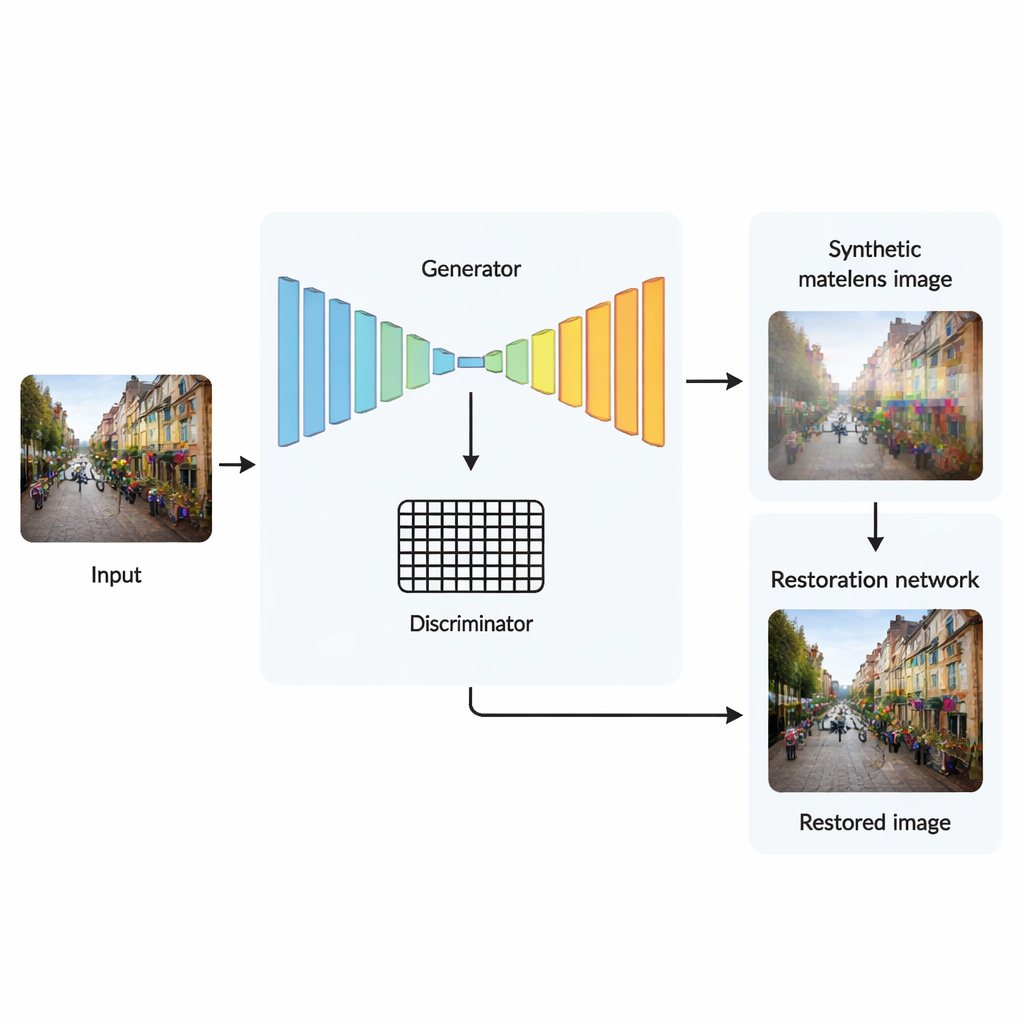

Instead of starting from bad metalens photos and trying to clean them up, the authors flip the problem. They begin with clean photos taken using a conventional lens and train a neural network to make those images look as if they had been captured through a specific metalens, complete with its signature color fringes, position-dependent blur, and warping near the edges. This network is based on a U-Net “image-to-image” translator that can copy fine details from input to output while adding realistic distortions. A companion discriminator network judges whether the output looks like a true metalens photo or a fake, nudging the generator toward believable imperfections. With only about 600 real metalens–conventional photo pairs for calibration, the trained system can then turn hundreds of ordinary photos into convincing metalens-style images within seconds.
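The generator-versus-discriminator setup described above is commonly trained with a two-part objective. The sketch below is a minimal illustration in plain Python, assuming a pix2pix-style loss (an adversarial term plus a weighted L1 fidelity term). The summary does not state the authors' actual loss, so the function names and the default weight of 100 are assumptions chosen for illustration only.

```python
import math


def bce_with_logit(logit: float, target: float) -> float:
    """Numerically stable binary cross-entropy for one discriminator logit."""
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))


def l1_distance(a, b) -> float:
    """Mean absolute difference between two equal-length lists of pixel values."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def generator_loss(fake_logits, fake_pixels, real_pixels, l1_weight=100.0) -> float:
    """Assumed generator objective: fool the discriminator and stay close to
    the real metalens capture. l1_weight is an illustrative value."""
    adversarial = sum(bce_with_logit(z, 1.0) for z in fake_logits) / len(fake_logits)
    return adversarial + l1_weight * l1_distance(fake_pixels, real_pixels)


def discriminator_loss(real_logits, fake_logits) -> float:
    """Assumed discriminator objective: label real captures 1, synthetic images 0."""
    real = sum(bce_with_logit(z, 1.0) for z in real_logits) / len(real_logits)
    fake = sum(bce_with_logit(z, 0.0) for z in fake_logits) / len(fake_logits)
    return 0.5 * (real + fake)


# Toy values: the discriminator is fairly sure the synthetic image is fake (logit -2),
# and the synthetic pixels differ slightly from the real metalens capture.
print(generator_loss([-2.0], [0.40, 0.55], [0.42, 0.50]))
print(discriminator_loss([1.5], [-2.0]))
```

In this formulation the L1 term keeps the output tied to the paired real capture, while the adversarial term rewards artifacts that the discriminator cannot tell apart from genuine ones.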

Checking How Real the Fake Images Are

To see whether these synthetic images really behave like metalens photos, the team compares their method against several advanced image-restoration and super-resolution models run in reverse: instead of cleaning up images, these competing models are asked to degrade clean photos into metalens-like ones. Judged by standard quality measures that capture both sharpness and human-perceived similarity, the authors' translator reproduces the true metalens artifacts most faithfully while avoiding unnatural textures. Visually, its outputs show vivid color fringes and realistic blur patterns that match real captures more closely than those of the other models, which tend to over-smooth or distort fine details.
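The summary does not name the quality measures used. As one common example of a pixel-level fidelity score, peak signal-to-noise ratio (PSNR) could be computed as sketched below. Treating PSNR as one of the metrics is an assumption, and the pixel values are made up for illustration. Perceptual similarity scores typically require a separate learned model and are not shown.

```python
import math


def psnr(reference, estimate, max_value=1.0) -> float:
    """Peak signal-to-noise ratio in dB between two equal-length pixel lists.

    Higher values mean the estimate is closer to the reference.
    """
    if len(reference) != len(estimate):
        raise ValueError("images must have the same number of pixels")
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Hypothetical flattened pixel values in [0, 1].
real_metalens = [0.20, 0.45, 0.70, 0.30]
synthetic_a = [0.22, 0.44, 0.69, 0.31]  # close imitation
synthetic_b = [0.35, 0.30, 0.55, 0.45]  # poor imitation

print(psnr(real_metalens, synthetic_a))
print(psnr(real_metalens, synthetic_b))
```

Here the reference is the real metalens capture rather than the clean photo, because the aim at this stage is to reproduce the artifacts, not remove them.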

Using Fake Data to Fix Real Photos

The real payoff comes when these synthetic metalens-style images are used to train a second neural network whose job is to restore metalens photos to pristine quality. This restorer sees only pairs of clean images and their AI-generated degraded versions, never real metalens data. Still, when tested on actual metalens photos it has never seen before, it recovers overall structure and color more faithfully than competing approaches trained on the same synthetic-only data. Some edge regions remain softer than ideal, revealing that the current training does not fully capture the strongest blur near the borders. Nonetheless, the results show that carefully constructed fake data can stand in for large, expensive real datasets when teaching cameras how to correct metalens quirks.
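The data flow described above (synthesize degraded images, train a restorer on them alone, then test on real captures) can be illustrated with a deliberately tiny toy. The sketch below is not the authors' method: the "translator" is a hypothetical linear dimming of pixel values, the "restorer" is a least-squares line rather than a neural network, and all numbers are invented. It only shows that a restorer fitted on synthetic pairs can still help on slightly different "real" data.

```python
import random


def synthesize_metalens_style(clean, gain=0.6, offset=0.1):
    """Hypothetical stand-in for the trained translator: a simple linear degradation."""
    return [gain * p + offset for p in clean]


def fit_linear_restorer(degraded, clean):
    """Least-squares fit of clean ~ a * degraded + b (toy stand-in for a network)."""
    n = len(degraded)
    mean_d = sum(degraded) / n
    mean_c = sum(clean) / n
    cov = sum((d - mean_d) * (c - mean_c) for d, c in zip(degraded, clean))
    var = sum((d - mean_d) ** 2 for d in degraded)
    a = cov / var
    return a, mean_c - a * mean_d


def mean_abs_error(xs, ys):
    return sum(abs(x - y) for x, y in zip(xs, ys)) / len(xs)


rng = random.Random(0)

# Training uses synthetic pairs only: clean pixels and their generated degraded versions.
clean_train = [rng.random() for _ in range(200)]
synthetic_train = synthesize_metalens_style(clean_train)
a, b = fit_linear_restorer(synthetic_train, clean_train)

# "Real" captures differ slightly from the synthetic model and include sensor noise.
clean_test = [rng.random() for _ in range(50)]
real_capture = [0.62 * p + 0.09 + rng.gauss(0, 0.01) for p in clean_test]
restored = [a * d + b for d in real_capture]

print("error before restoration:", mean_abs_error(real_capture, clean_test))
print("error after restoration: ", mean_abs_error(restored, clean_test))
```

The small mismatch between the synthetic and "real" degradation plays the role of the residual edge softness noted above: whatever the synthetic data fails to capture, the restorer cannot learn to undo.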

What This Means for Future Cameras

For a non-specialist, the key message is that camera makers may no longer need to choose between bulky lenses and poor image quality. By first learning to imitate the complex flaws of flat lenses and then using those imitations for training, the proposed approach cuts data-collection time roughly sixty-fold while still enabling software that cleans up metalens photos effectively. In practical terms, this kind of physics-aware image synthesis could help shrink multi-element camera modules down to a single flat lens plus a smart correction algorithm, paving the way for slimmer phones, lightweight wearables, and compact scientific instruments that still deliver crisp, conventional-looking images.

Citation: Kang, C., Suk, H., Seo, J. et al. Metalens-style image synthesis for metalens imaging via image-to-image translation. Sci Rep 16, 5819 (2026). https://doi.org/10.1038/s41598-026-36150-9

Keywords: metalens imaging, computational photography, deep learning, image restoration, data augmentation