Clear Sky Science

A multi-source physiological data-driven method for assessing the hazard perception level of operators

Why watching the watchers matters

Deep underground, modern coal mines increasingly rely on remote control rooms rather than people at the rock face. In these rooms, operators stare at walls of video screens, searching for the earliest signs of danger. If they miss a gas leak, a roof crack, or a spark from a conveyor belt, the result can be a deadly accident. This study asks a simple but vital question: can we tell, in real time, how sharp an operator’s “danger radar” is, by listening to their body’s hidden signals?

Reading the body’s quiet alarm bells

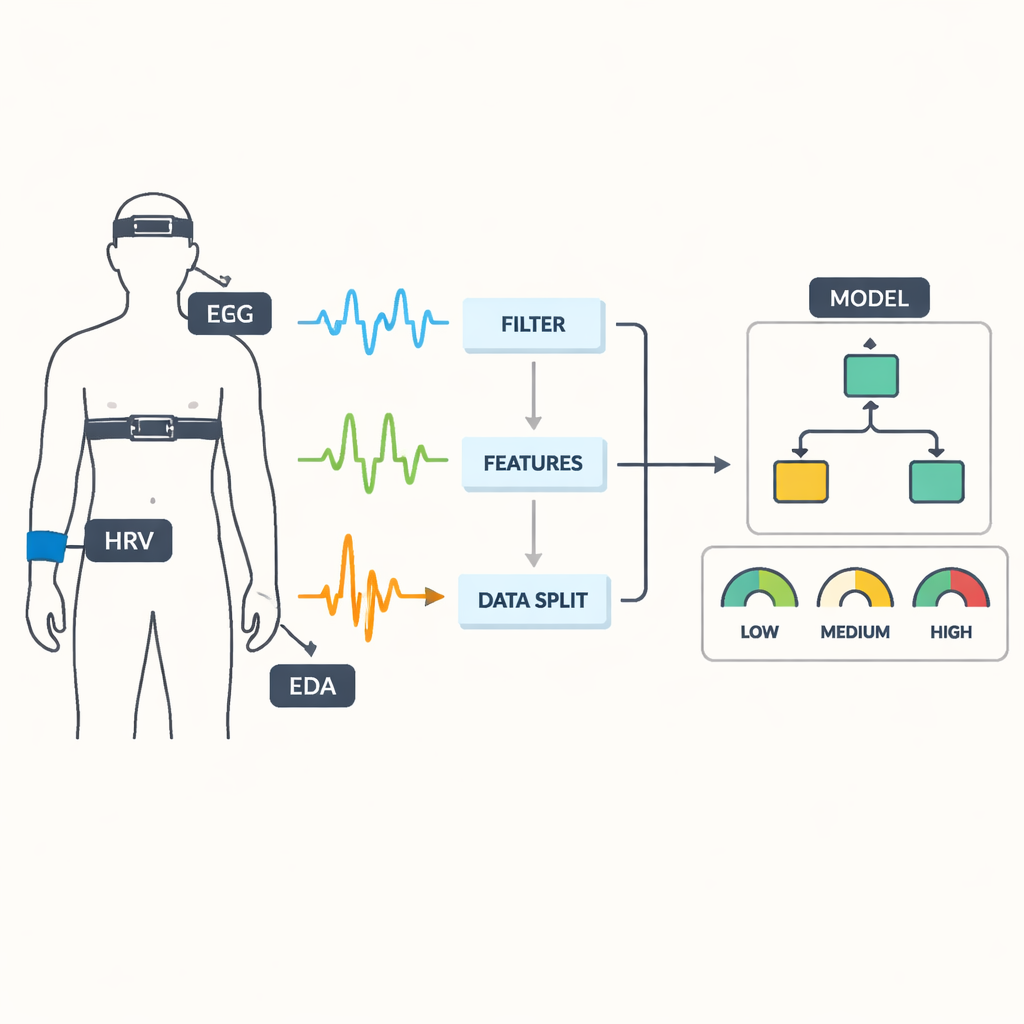

The researchers focused on three kinds of physiological signals that change when people notice and evaluate hazards. Electrical activity in the brain, recorded as EEG, reflects how intensely different regions of the cortex are working. Electrodermal activity (EDA) captures tiny changes in skin conductance linked to sweat gland activity, a classic sign of arousal and alertness. Heart rate variability (HRV) describes subtle fluctuations in the time between heartbeats, revealing how the body’s autonomic nervous system balances stress and recovery. Rather than relying on self-reports or simple reaction times alone, the team set out to merge these three streams into a richer picture of an operator’s hazard perception level.

Simulating a real control room

To keep the experiment realistic, 23 professional operators from intelligent coal mine safety monitoring centers were recruited. In the lab, the team recreated a multi-screen monitoring setup using specialized software. Participants viewed 286 real coal mine images on four screens at once, some depicting hazardous scenes—like workers without helmets, methane build-up, water in tunnels, or unstable roofs—and others showing safe conditions. For each image, operators had to quickly decide whether it was dangerous or safe using keyboard presses, then rate their own hazard awareness using a tailored questionnaire adapted to coal mine work.

Turning raw signals into a hazard score

While operators worked, the system continuously recorded EEG from eight locations on the scalp, skin conductance from the hand, and heart activity from a wearable device. The researchers carefully cleaned the data to remove noise such as eye blinks, then chopped the continuous recordings into short five-second windows. From each window they extracted dozens of features—for example, power in different brainwave bands, slow and fast components of skin conductance, and a range of heart variability measures. Separately, each operator’s overall hazard perception level was quantified by combining three things: questionnaire scores, average reaction time (with faster treated as better), and accuracy. Using statistical cutoffs, every data window was labeled as reflecting low, moderate, or high hazard perception. Machine learning and deep learning models were then trained to recognize these levels purely from physiology.
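The windowing and labeling steps described above can be sketched roughly as follows. This is a minimal illustration, not the authors' procedure: the summary does not give the sampling rate, the formula used to combine the three measures, or the exact statistical cutoffs. The equal-weight average of z-scores, the mean plus or minus half a standard deviation thresholds, and all function names here are assumptions made for the example.

```python
from statistics import mean, pstdev

WINDOW_SECONDS = 5  # window length stated in the article


def split_windows(samples, sample_rate_hz, window_seconds=WINDOW_SECONDS):
    """Chop a continuous recording into non-overlapping fixed-length windows."""
    size = int(sample_rate_hz * window_seconds)
    # A trailing partial window, if any, is dropped.
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, size)]


def z_scores(values):
    """Standardize values to zero mean and unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma if sigma else 0.0 for v in values]


def composite_scores(questionnaire, reaction_time_s, accuracy):
    """Assumed combination: equal-weight average of the three z-scored measures.

    Reaction time is negated so that faster responses raise the score.
    """
    zq = z_scores(questionnaire)
    zr = [-z for z in z_scores(reaction_time_s)]
    za = z_scores(accuracy)
    return [(q + r + a) / 3 for q, r, a in zip(zq, zr, za)]


def label_levels(scores, k=0.5):
    """Assumed cutoffs: mean +/- k standard deviations of the composite score."""
    mu, sigma = mean(scores), pstdev(scores)
    low_cut, high_cut = mu - k * sigma, mu + k * sigma
    return ["low" if s < low_cut else "high" if s > high_cut else "moderate"
            for s in scores]
```

In this sketch every five-second window from an operator would inherit that operator's label, which matches the description of labeling windows from an overall per-operator score.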

What the body reveals when danger perception rises

The analysis showed clear and meaningful patterns. As hazard perception increased, some brainwave bands in frontal regions—especially theta, alpha, and beta—grew stronger, indicating more focused cognitive processing. Certain skin conductance measures, reflecting how much and how unpredictably the skin was sweating, rose when operators were more tuned in to hazards, consistent with heightened sympathetic nervous system activation. Heart rate tended to be higher at greater hazard perception levels, while some long-term variability measures were less sensitive in these short tasks. These trends confirmed that the body’s signals genuinely track how effectively people are spotting danger on the screens.
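For readers curious what "power in a brainwave band" means in practice, here is a minimal sketch of one common way to estimate it for a single window, using a plain discrete Fourier transform. The band edges are the conventional textbook ranges, not values taken from the study, and the function name and normalization are illustrative assumptions. A real pipeline would more likely use an optimized FFT and a smoothed spectral estimate.

```python
import cmath
import math

# Conventional EEG band edges in Hz (assumed; the article does not list them).
BANDS = {"theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}


def band_powers(window, sample_rate_hz, bands=BANDS):
    """Sum normalized squared DFT magnitudes over the bins inside each band."""
    n = len(window)
    avg = sum(window) / n
    centered = [x - avg for x in window]  # remove the DC offset
    powers = {name: 0.0 for name in bands}
    for k in range(1, n // 2 + 1):
        freq = k * sample_rate_hz / n  # frequency of DFT bin k in Hz
        names = [name for name, (lo, hi) in bands.items() if lo <= freq < hi]
        if not names:
            continue  # skip bins outside every band of interest
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(centered))
        for name in names:
            powers[name] += abs(coeff) ** 2 / n ** 2
    return powers
```

Applied to a five-second window containing a pure 10 Hz oscillation, nearly all of the estimated power lands in the alpha band, which is the kind of per-band number that would be compared across hazard perception levels.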

Teaching machines to spot low awareness

The team compared 12 different algorithms, from classic decision trees and support vector machines to a modern gradient-boosting method called LightGBM and a one‑dimensional convolutional neural network. LightGBM stood out: using all three signal types together (EEG, EDA, and HRV), it classified hazard perception level with an impressive 99.89% accuracy, with very few false alarms or missed cases. The deep learning model also performed extremely well. Importantly, combining all three physiological sources beat any single signal or pair, showing that the brain, skin, and heart each contribute unique pieces of information about an operator’s state.
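The comparison of single signals, pairs, and all three sources together can be organized as in the sketch below. It assumes feature-level fusion, meaning the per-window feature lists from each modality are simply concatenated. The summary does not say how the signals were actually combined, so this is one plausible reading, and the helper names are hypothetical. Training and scoring a classifier such as LightGBM on each fused feature set is left out.

```python
from itertools import combinations

MODALITIES = ("EEG", "EDA", "HRV")


def modality_subsets(modalities=MODALITIES):
    """List every non-empty combination: singles, then pairs, then all three."""
    return [combo
            for r in range(1, len(modalities) + 1)
            for combo in combinations(modalities, r)]


def fuse_features(window_features, subset):
    """Concatenate one window's per-modality feature lists, in subset order."""
    fused = []
    for name in subset:
        fused.extend(window_features[name])
    return fused


if __name__ == "__main__":
    # Placeholder feature values for a single window, for illustration only.
    example = {"EEG": [0.1, 0.2], "EDA": [0.3], "HRV": [0.4, 0.5]}
    for subset in modality_subsets():
        print("+".join(subset), len(fuse_features(example, subset)))
```

With three modalities there are seven feature sets to evaluate, and the reported result corresponds to the last one, which uses EEG, EDA, and HRV together.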

From smarter mines to safer work

For a non-specialist, the bottom line is that this research demonstrates a practical way to “monitor the monitors.” By quietly tracking an operator’s brain waves, skin response, and heart rhythms, an intelligent system can infer when their ability to notice danger is slipping—perhaps due to fatigue, overload, or distraction—and trigger timely interventions, such as breaks, task reallocation, or extra support. While more testing in real mines is needed, the approach points toward future control rooms where safety systems protect not only machines and tunnels, but also the human attention that stands between early warning and catastrophe.

Citation: Qi, A., Liu, H., Li, J. et al. A multi-source physiological data-driven method for assessing the hazard perception level of operators. Sci Rep 16, 6595 (2026). https://doi.org/10.1038/s41598-026-36107-y

Keywords: coal mine safety, hazard perception, physiological monitoring, machine learning, operator fatigue