Clear Sky Science

Knowledge graph enhanced cross modal generative adversarial network for martial arts motion reconstruction and heritage preservation

Why High-Tech Kung Fu Matters

Traditional martial arts are more than spectacular kicks and punches – they are living carriers of philosophy, health practice, and cultural identity. Yet many of these skills exist only in the bodies and memories of aging masters, and ordinary video recordings cannot fully capture their depth. This paper explores how an advanced artificial intelligence system can "learn" martial arts in a rich, meaningful way, so that future generations might study not just how a movement looks, but why it is done that way.

The Problem with Filming Ancient Skills

For centuries, martial arts were passed down from teacher to student, often with little written record. Modern cameras and motion-capture suits help, but they still fall short. Video flattens three-dimensional, full-body actions into two dimensions, and even sophisticated sensors can miss subtle weight shifts, internal power flow, or the tactical purpose behind a move. Existing systems mainly log "what" the body does – joint angles and positions – while ignoring the cultural ideas and combat principles that give each technique its soul. As a result, archived motions may look correct to casual viewers but feel wrong to experienced practitioners.

A Digital Map of Martial Wisdom

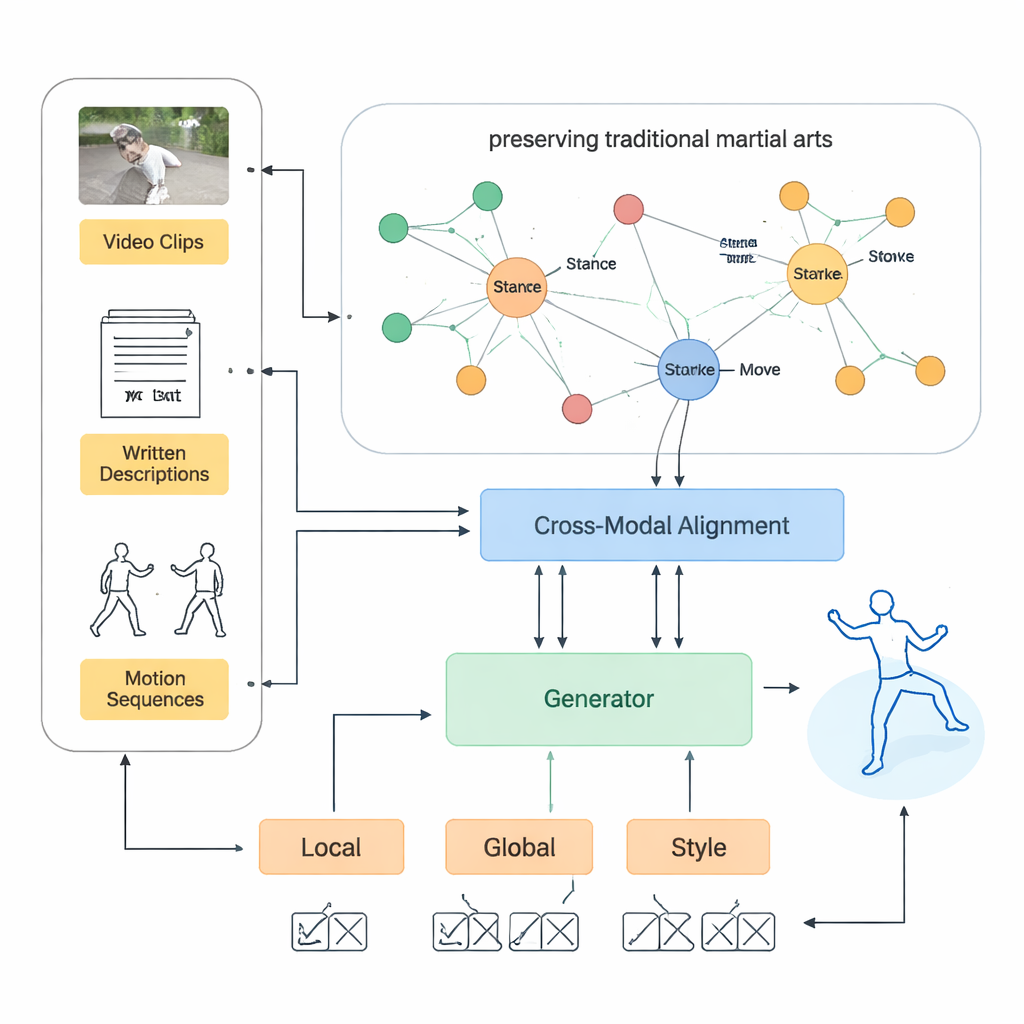

To tackle this, the authors first build a large martial arts knowledge graph – essentially a digital map of concepts and relationships. It includes individual techniques, body parts, force directions, training progressions, core ideas like “substantial and insubstantial” weight, and the contexts in which moves are used. Links express relations such as "this stance is the prerequisite for that strike" or "this movement embodies this principle." Using graph-learning methods, each item in this map is turned into a numerical representation that a computer can work with, while still preserving the structure of expert knowledge.
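As a rough illustration of the idea, a knowledge graph can be stored as (head, relation, tail) triples, and each item can be given a vector that is repeatedly averaged with its neighbours' vectors. This is only a minimal sketch: the technique names, relation names, and the neighbour-averaging scheme are assumptions chosen for illustration, and the summary does not say which graph-learning method the authors actually use.

```python
import random

# Hypothetical triples (head, relation, tail). The names are illustrative
# and are not taken from the authors' knowledge graph.
TRIPLES = [
    ("horse_stance", "prerequisite_for", "straight_punch"),
    ("straight_punch", "uses_body_part", "fist"),
    ("cloud_hands", "embodies", "substantial_insubstantial"),
    ("horse_stance", "embodies", "substantial_insubstantial"),
]


def build_neighbors(triples):
    """Map every item to the set of items it is directly linked to."""
    neighbors = {}
    for head, _relation, tail in triples:
        neighbors.setdefault(head, set()).add(tail)
        neighbors.setdefault(tail, set()).add(head)
    return neighbors


def embed(triples, dim=4, rounds=2, seed=0):
    """Give each item a vector, then average it with its neighbours' vectors.

    Assumption: plain neighbour averaging stands in for whatever
    graph-learning method the paper uses. Relation types are ignored here.
    """
    rng = random.Random(seed)
    neighbors = build_neighbors(triples)
    vectors = {
        node: [rng.uniform(-1.0, 1.0) for _ in range(dim)]
        for node in sorted(neighbors)
    }
    for _ in range(rounds):
        updated = {}
        for node, linked in neighbors.items():
            group = [vectors[node]] + [vectors[m] for m in sorted(linked)]
            # Column-wise mean over the item and its neighbours.
            updated[node] = [sum(column) / len(group) for column in zip(*group)]
        vectors = updated
    return vectors


if __name__ == "__main__":
    for name, vector in embed(TRIPLES).items():
        print(name, [round(x, 3) for x in vector])
```

After a few rounds, items that share links (here, the two techniques tied to the same principle) drift toward similar vectors, which is the sense in which the numbers preserve the structure of the graph.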

Teaching AI to Connect Words, Images, and Motion

Next, the team designs a system that can understand martial arts through several types of data at once: videos of performances, written explanations, and precise motion-capture data. Separate modules analyze each type – a video network studies the footage frame by frame, a language model reads technical and historical descriptions, and a graph-based model follows how joints move over time. A special alignment step, guided by the knowledge graph, forces these different views to agree on what a technique really is. This prevents the AI from picking up misleading patterns and helps it handle rarely seen moves by relating them to better-known ones through shared principles.
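One simple way to picture such an alignment step is as a penalty that shrinks when the video, text, and motion vectors for a technique all point in the same direction as that technique's knowledge-graph vector. The sketch below assumes a cosine-distance penalty and hypothetical function names; the summary does not describe the authors' actual alignment objective.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def alignment_loss(video_vec, text_vec, motion_vec, graph_vec):
    """Mean cosine distance of each modality vector from the graph vector.

    Assumption: the knowledge-graph vector of the technique acts as a shared
    anchor. The result is 0 when every view agrees with the anchor and grows
    as the views diverge from it.
    """
    views = (video_vec, text_vec, motion_vec)
    return sum(1.0 - cosine(view, graph_vec) for view in views) / len(views)


if __name__ == "__main__":
    anchor = [1.0, 0.0]
    print(alignment_loss([1.0, 0.0], [0.9, 0.1], [0.0, 1.0], anchor))
```

Minimizing a quantity of this kind during training would pull the three encoders toward a shared description of each technique, which is how a rarely seen move could borrow structure from better-known moves linked to the same principle.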

Generating Movements that Feel Authentic

On top of this foundation, the authors build a motion-generating engine based on generative adversarial networks. One part of the system proposes new motion sequences; three "critic" parts judge them from different angles: local posture accuracy, whole-body coordination, and stylistic faithfulness to the martial art. Throughout this process, the knowledge graph acts like a supervising master, nudging the AI away from poses that would break balance, violate a style’s rules, or ignore key phases of a technique. In tests on six major Chinese styles, the system cut joint-position errors by more than a quarter compared with strong modern baselines and achieved high scores for obeying encoded martial principles.
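The interplay between the three critics and the knowledge graph can be sketched as a single training signal for the generator: a reward for convincing each critic, plus a penalty for breaking an encoded rule. Everything specific below is an assumption for illustration, including the non-saturating log loss (a common GAN formulation), the phase-ordering rule, the phase names, and the `penalty_weight` value; the summary does not give the authors' loss functions.

```python
import math


def missing_phase_penalty(generated_phases, required_phases):
    """Count required phases that do not appear, in order, in the sequence.

    `phase in remaining` advances the iterator until it finds the phase, so
    a phase that appears too early is treated as missing.
    """
    remaining = iter(generated_phases)
    return sum(1 for phase in required_phases if phase not in remaining)


def generator_loss(critic_scores, penalty, penalty_weight=0.5, eps=1e-8):
    """Combine critic feedback with a knowledge-graph rule penalty.

    critic_scores maps a critic name to its probability (0 to 1) that the
    generated motion is real. Higher scores lower the loss, and each
    violated rule raises it by `penalty_weight`.
    """
    adversarial = -sum(
        math.log(score + eps) for score in critic_scores.values()
    ) / len(critic_scores)
    return adversarial + penalty_weight * penalty


if __name__ == "__main__":
    scores = {"local_posture": 0.8, "whole_body": 0.6, "style": 0.7}
    penalty = missing_phase_penalty(
        ["prepare", "strike"], ["prepare", "strike", "recover"]
    )
    print(generator_loss(scores, penalty))
```

Keeping the rule penalty separate from the critic scores means a motion that fools all three critics is still discouraged if it skips a required phase, which mirrors the "supervising master" role described above.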

Beyond Pretty Moves: Saving Living Traditions

For non-specialists, the takeaway is that this is not just about smoother computer animation. By baking expert rules and cultural meaning into the heart of an AI model, the method can reconstruct forms that are both physically sound and true to the character of each style – from the flowing circles of Baguazhang to the explosive lines of Xingyiquan. The authors argue that such knowledge-guided systems could power future teaching tools, museum exhibits, and digital archives that let people explore traditional arts interactively, even without a master present. With further work, the same approach could help preserve other fragile practices like classical dance or ritual performance, offering a new way for technology to support, rather than replace, human tradition.

Citation: Yue, X., Zhang, L. Knowledge graph enhanced cross modal generative adversarial network for martial arts motion reconstruction and heritage preservation. Sci Rep 16, 5925 (2026). https://doi.org/10.1038/s41598-026-36095-z

Keywords: martial arts preservation, human motion generation, knowledge graphs, cross-modal AI, generative adversarial networks