Clear Sky Science

Safety and efficacy of privacy-preserving models to create Lay summaries of brain MRI reports

Why Your Scan Report Can Feel So Confusing

More and more patients can read their own test results online, including detailed radiology reports from brain scans. But these documents are written for doctors, not for patients, and are packed with unfamiliar terms that can fuel worry instead of reassurance. This study asks whether modern artificial intelligence (AI) programs can safely turn real French‑language reports of emergency brain MRI scans, performed on patients with headache, into plain‑language summaries that patients can actually understand, without sending sensitive medical data to distant commercial servers.

Turning Doctor Language into Everyday Words

The researchers focused on “lay summaries”: short explanations that keep the medical facts but translate them into everyday language and directly link findings to the patient’s symptoms. They used three large language models (LLMs)—Llama 3.3, Athene V2, and Mistral Small—run entirely on computers within a French university hospital, so that no report ever left the secure hospital network. Each AI system received the same instruction: write a 4–6 sentence summary in French for a patient, covering all key points, explaining hard terms, and connecting the scan findings to the person’s headache.
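The instruction described above can be sketched as a small prompt-building function. This is a minimal illustration only: the function name `build_lay_summary_prompt`, the `symptom` parameter, and the exact English wording are assumptions made for this example, not the prompt the researchers used, and no model is called.

```python
def build_lay_summary_prompt(report_text: str, symptom: str = "headache") -> str:
    """Assemble a lay-summary instruction followed by the report text.

    Hypothetical wording: it mirrors the four requirements described in
    the article, not the study's actual prompt.
    """
    instructions = [
        "Write a summary of 4 to 6 sentences, in French, for the patient.",
        "Cover all key points of the report.",
        "Explain any difficult medical terms in everyday words.",
        f"Connect the scan findings to the patient's {symptom}.",
    ]
    # Keep the instructions and the report clearly separated.
    return "\n".join(instructions) + "\n\nReport:\n" + report_text.strip()
```

In a setup like the one described, the resulting string would be passed to a model running on hospital hardware, so the report text never leaves the local network.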

How Doctors Judged Accuracy and Safety

From nearly 600 brain MRI reports written in 2022 for emergency patients with headache, the team randomly selected 105. Three experienced neuroradiologists read each original report alongside three anonymous AI‑generated summaries (one from each model). They rated each summary for medical correctness, completeness, helpfulness for teaching patients, and whether the text was good enough to be shown directly in a patient’s online portal. On average, ratings were high: doctors judged the summaries to be largely accurate and comprehensive, and often suitable for clinical use. Still, about one in five summaries contained at least one problem, such as a wrong explanation of an abbreviation, a slightly off anatomical description, awkward phrasing, or an invented detail that was not in the original report.

What Non‑Doctors Actually Understood

To see whether these summaries truly helped lay readers, the researchers recruited 11 people working in medical informatics who regularly handle health data but are not trained doctors. This group rated 30 MRI reports, some in their original form and some with an added AI summary. They scored how well they felt they understood each report, how confident they were about explaining the results to friends or family, and how anxious they would feel if the report were their own. They also answered simple yes‑or‑no questions: is there anything abnormal in this report, and is there a finding that could reasonably explain the patient’s headache?

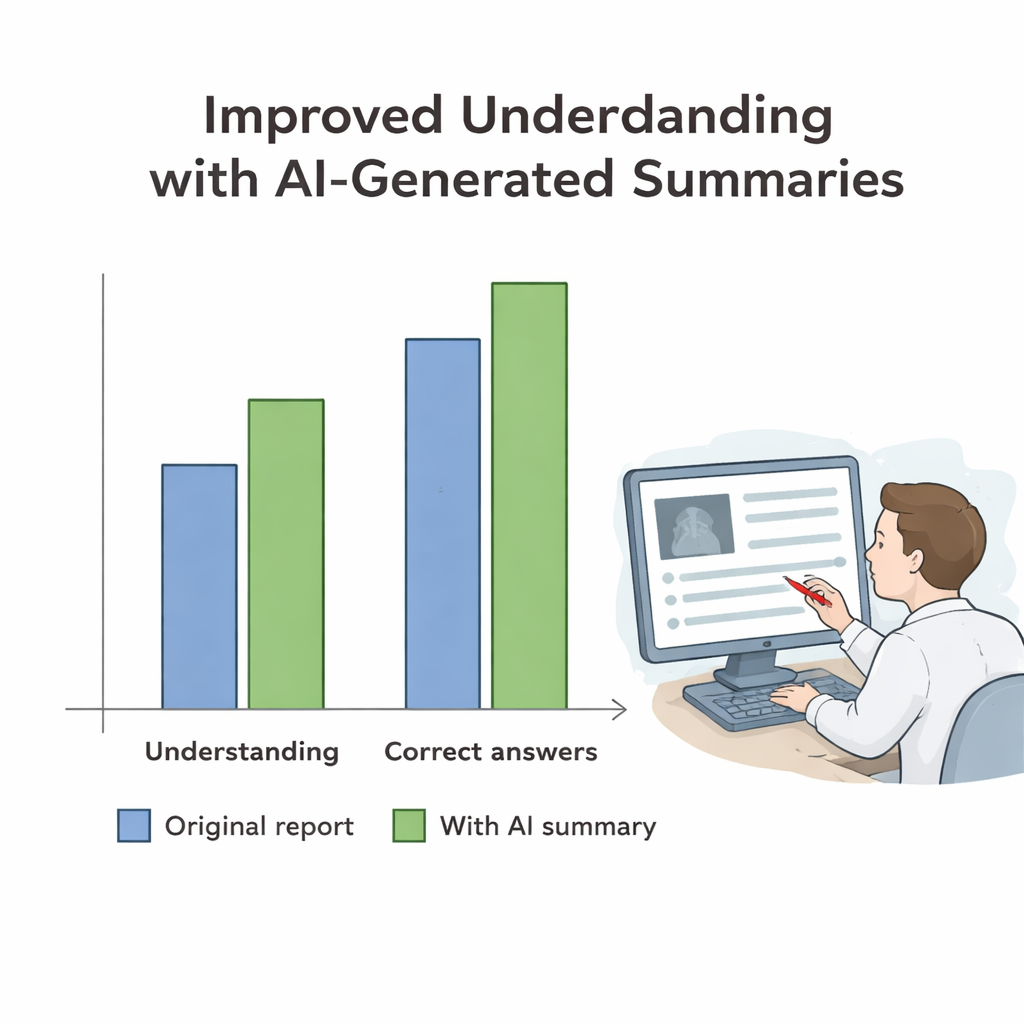

Clearer Reports, Modest but Real Understanding Gains

Adding AI‑generated summaries dramatically boosted how well participants felt they understood the reports, raising average self‑rated comprehension from “moderate” to “high” levels. Their confidence in being able to discuss the results with others also rose, while reported anxiety decreased slightly. When it came to objective understanding, the effect was more modest but still meaningful. Participants became better at spotting when a scan was abnormal and at recognizing findings that might actually cause the headache, with improvements concentrated in reports that contained real abnormalities. For normal scans, people were already close to perfect at recognizing that nothing serious had been found, so the summaries added little extra benefit.

Why Human Oversight Still Matters

Although these privacy‑preserving AI tools substantially improved perceived clarity and offered small but important gains in factual understanding, they were not flawless. Roughly 20% of summaries contained medical or language errors, often linked to tricky medical abbreviations or to English and Chinese words slipping into French sentences. Because even small mistakes can mislead patients, the authors argue that AI should be used in a “human‑in‑the‑loop” setup: the model drafts a patient‑friendly summary, and a radiologist quickly checks and corrects it before it reaches the patient. Used this way, the study suggests that on‑site AI could help hospitals offer clearer, more reassuring explanations of brain MRI results while keeping sensitive health data safely inside their walls.
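The "human‑in‑the‑loop" idea can be sketched as a simple gate: a draft cannot be released to the patient until a radiologist has signed off on it. This is an illustrative sketch under stated assumptions; the names `DraftSummary`, `review`, and `text_for_patient` are hypothetical and do not describe any system used in the study.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraftSummary:
    """A model-written draft plus its review state (hypothetical structure)."""
    report_id: str
    draft_text: str                      # text produced by the model
    approved_text: Optional[str] = None  # set only after radiologist review
    reviewer: Optional[str] = None


def review(draft: DraftSummary, reviewer: str,
           corrected_text: Optional[str] = None) -> DraftSummary:
    """Record a radiologist's sign-off, with an optional corrected version."""
    if corrected_text is not None:
        draft.approved_text = corrected_text
    else:
        draft.approved_text = draft.draft_text
    draft.reviewer = reviewer
    return draft


def text_for_patient(draft: DraftSummary) -> str:
    """Return the summary for the patient portal, refusing unreviewed drafts."""
    if draft.approved_text is None:
        raise PermissionError("Summary has not been reviewed by a radiologist yet.")
    return draft.approved_text
```

The design choice is that the unreviewed draft and the approved text are stored separately, so a summary can only reach the patient through the review step.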

Citation: Le Guellec, B., Bentegeac, R., Shorten, L. et al. Safety and efficacy of privacy-preserving models to create Lay summaries of brain MRI reports. Sci Rep 16, 6316 (2026). https://doi.org/10.1038/s41598-026-36081-5

Keywords: radiology reports, patient communication, brain MRI, large language models, medical privacy