Clear Sky Science

M6: multi-generator, multi-domain, multi-lingual and cultural, multi-genres, multi-instrument machine-generated music detection databases

Why the rise of AI-made music matters

Music streaming platforms are quietly filling up with songs written not by people, but by algorithms. These machine-generated tracks can be useful tools for therapy, film scores, or everyday playlists, yet they also blur the line between human creativity and automated production. This paper introduces M6, a large new music collection built to help researchers tell apart human-made and AI-made music, and to understand what makes them different. Its goal is to support fair credit for artists while still allowing AI to play a constructive role in music creation.

Building a big music test bed

The authors argue that progress in detecting AI-generated music has been held back by a lack of suitable data. Existing collections either focus on other tasks, such as matching music to text descriptions, or cover only a narrow slice of musical styles. M6 is designed to fill this gap. It combines thousands of tracks made by humans with thousands made by several different AI systems, packaged in a standard audio format. The dataset is intentionally broad: it spans many instruments, languages, cultures, genres, song lengths, and both vocal songs and instrumental background pieces. This rich variety lets scientists test whether detection methods work in realistic, ever-changing musical settings.

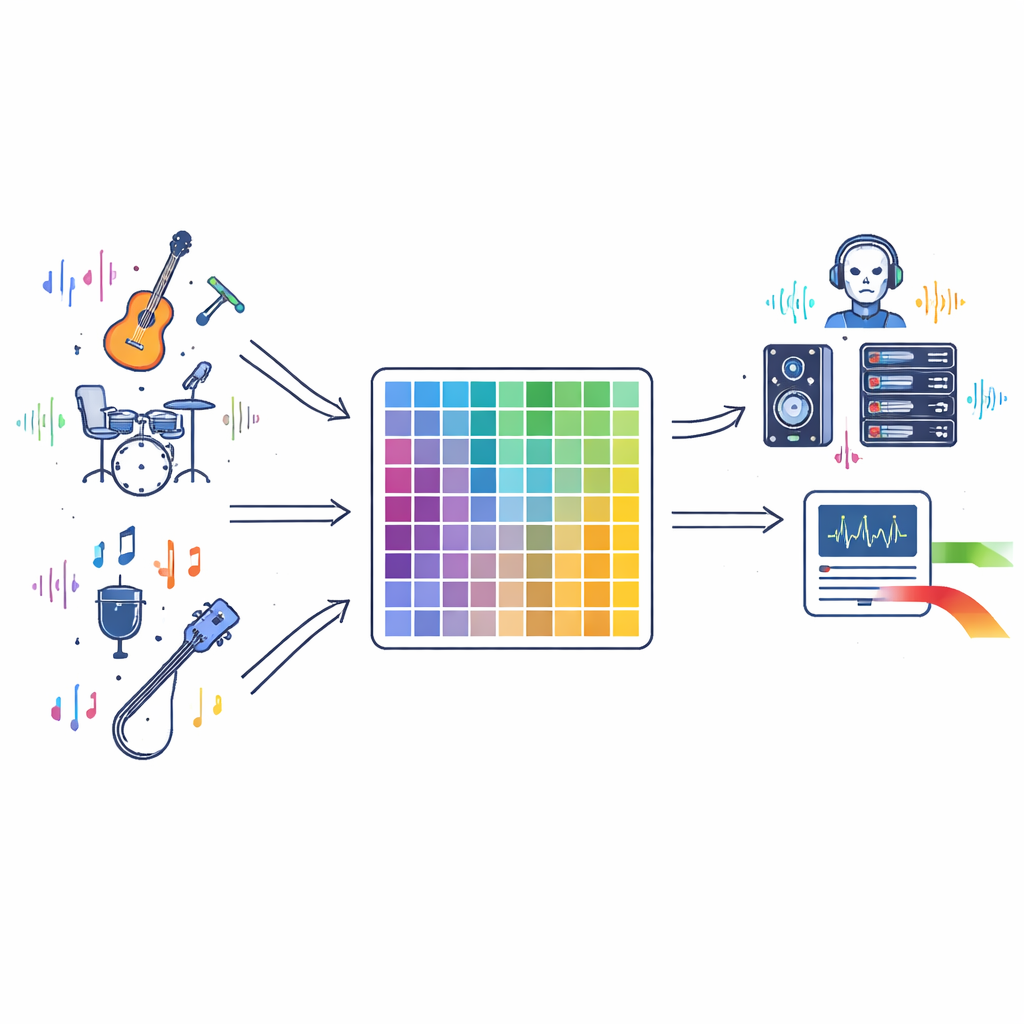

Gathering songs from people and machines

To assemble the human side of M6, the team drew on well-known open collections such as GTZAN (for music genres), the Free Music Archive, COSIAN (Japanese vocal music), and a dataset of individual instrument sounds. They also sampled additional songs from streaming platforms when licenses allowed, choosing clips that clearly showcase the main musical idea rather than just brief interludes. On the machine side, they generated new music using several state-of-the-art systems, including open research models and commercial tools. Carefully designed prompts, some produced by a language model, asked these systems to create music in particular styles, tempos, or with specific instruments, so that the AI tracks would mirror the diversity of human music.

Checking quality and spotting subtle differences

Because judgments of musical quality are subjective, the authors combined listener opinions with objective measurements. They computed simple numerical descriptors for each track, capturing aspects such as how complex the rhythms are, how wide the melodic range is, how bright the sound spectrum appears, and how much energy the signal carries. On average, AI-made pieces differed from human ones on these measures (for example, they often had slightly narrower pitch ranges or lower overall energy), but the differences were small enough that most listeners would not notice them without careful comparison. In listening tests with 50 volunteers, people correctly identified whether a clip was human-made or AI-made only about half the time, essentially no better than flipping a coin. This suggests that AI music has already reached a level of polish that can easily fool casual listeners.
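To make two of these descriptors concrete, here is a minimal sketch of how "brightness" and "energy" are commonly measured. It assumes the spectral centroid as the brightness measure and root-mean-square amplitude as the energy measure. The summary does not say which formulas the authors used, so both choices, the function names, and the synthetic test tones are illustrative assumptions, not the paper's method or data.

```python
import numpy as np


def rms_energy(signal: np.ndarray) -> float:
    """Root-mean-square amplitude, a simple proxy for signal energy."""
    return float(np.sqrt(np.mean(np.square(signal))))


def spectral_centroid(signal: np.ndarray, sample_rate: int) -> float:
    """Magnitude-weighted mean frequency in Hz, a common proxy for brightness."""
    magnitudes = np.abs(np.fft.rfft(signal))
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate)
    total = magnitudes.sum()
    if total == 0.0:
        return 0.0  # silent clip: no meaningful centroid
    return float((freqs * magnitudes).sum() / total)


# Synthetic stand-ins for audio clips (assumed for illustration, not M6 data).
sample_rate = 16_000
t = np.arange(sample_rate) / sample_rate  # one second of samples

# A single low tone, and the same tone with an equally loud high overtone.
dull = 0.5 * np.sin(2 * np.pi * 220 * t)
bright = dull + 0.5 * np.sin(2 * np.pi * 3520 * t)

for name, clip in [("dull", dull), ("bright", bright)]:
    print(
        f"{name}: energy={rms_energy(clip):.3f}, "
        f"centroid={spectral_centroid(clip, sample_rate):.0f} Hz"
    )
```

The clip with the added overtone has a centroid roughly midway between its two tones, and a higher energy. Comparing averages of such numbers across human and AI tracks is one plausible way to surface the small differences described above.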

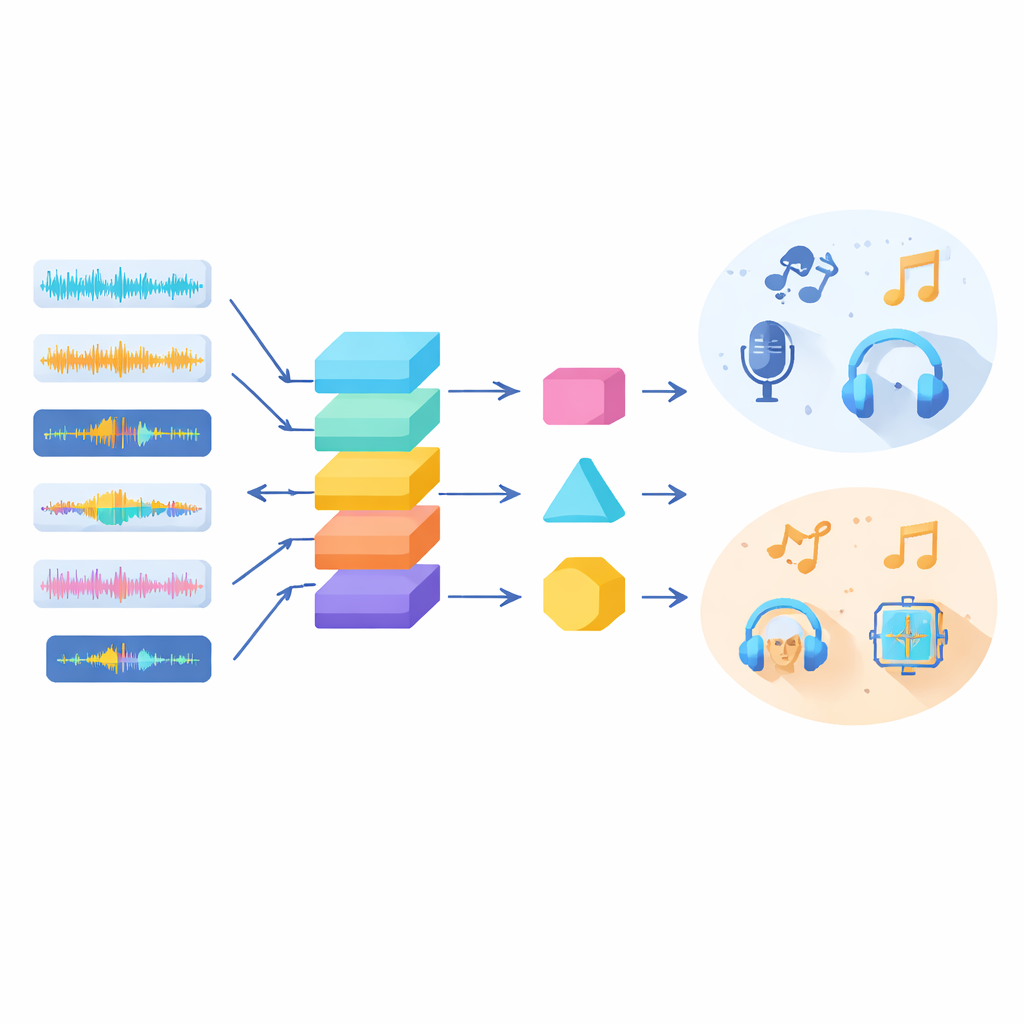

Putting detection methods to the test

With M6 in place, the researchers evaluated a variety of computer models to see how well they could detect AI-generated music. They tried classic machine learning, image-style neural networks that process spectrograms, transformer models, and modern audio systems originally built for detecting fake speech. When models were trained and tested on similar types of music, several achieved high accuracy, especially convolutional networks that focus on patterns in the time–frequency picture of the sound. However, performance dropped sharply when models faced "out-of-domain" music—styles, languages, or generators they had not seen before. The study also revealed that different AI music systems leave distinct acoustic "fingerprints," making some generators easier to spot than others and raising concerns that detectors might overfit to particular tools rather than to the deeper traits of machine-made music.
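The gap between in-domain and out-of-domain results depends on how the data is split. One common way to test for generator-specific overfitting is a leave-one-generator-out split, sketched below. This is an assumption about how such an evaluation could be set up, not the authors' protocol. The `Track` type, the generator names `gen_a` to `gen_c`, and the rule for splitting human tracks are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    track_id: str
    source: str  # "human" or a (hypothetical) generator name


def leave_one_generator_out(tracks, held_out, human_test_every=4):
    """Split tracks so the held-out generator appears only in the test set.

    Human tracks are split deterministically: every `human_test_every`-th
    human track goes to the test set, the rest go to training.
    """
    train, test = [], []
    human_seen = 0
    for track in tracks:
        if track.source == held_out:
            test.append(track)  # unseen generator: test only
        elif track.source == "human":
            target = test if human_seen % human_test_every == 0 else train
            target.append(track)
            human_seen += 1
        else:
            train.append(track)  # generators the detector may learn from
    return train, test


# Toy catalogue with placeholder identifiers (not real M6 entries).
tracks = [Track(f"human_{i}", "human") for i in range(8)]
for generator in ("gen_a", "gen_b", "gen_c"):
    tracks += [Track(f"{generator}_{i}", generator) for i in range(4)]

train, test = leave_one_generator_out(tracks, held_out="gen_c")
print(len(train), "training tracks;", len(test), "test tracks")
print("generators seen in training:", sorted({t.source for t in train}))
```

A detector that scores well on a random split but poorly on a split like this one is likely keying on a particular generator's fingerprint. The same idea extends to holding out a genre or a language instead of a generator.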

What this means for music and AI

The M6 project shows that while computers can often flag AI-generated music accurately in familiar settings, robust detection in the wild remains a major challenge. Human listeners already struggle to tell the difference, and current algorithms falter when music shifts in genre, language, length, or generation method. By releasing a large, diverse, and openly available dataset, the authors hope to spur the development of more reliable and transparent detection tools. In turn, such tools could help maintain trust in digital music platforms, support fair recognition of human artists, and guide future AI systems toward collaboration with musicians rather than silent replacement.

Citation: Li, Y., Li, H., Specia, L. et al. M6: multi-generator, multi-domain, multi-lingual and cultural, multi-genres, multi-instrument machine-generated music detection databases. Sci Rep 16, 9237 (2026). https://doi.org/10.1038/s41598-026-36044-w

Keywords: AI-generated music, deepfake audio, music detection, machine learning, digital creativity