Clear Sky Science · en

Health-FedNet: secure federated learning for chronic disease prediction on MIMIC-III with differential privacy and homomorphic encryption

Why Your Medical Data Needs New Kinds of Locks

Modern hospitals are turning to artificial intelligence to spot diseases earlier and tailor treatments, but this power comes with a catch: computers learn best from huge piles of patient records that are far too sensitive to simply pool in one place. This paper introduces Health-FedNet, a new way for hospitals to train powerful prediction tools for chronic illnesses such as diabetes and hypertension while keeping patient details locked safely inside each institution. It shows how we can get the benefits of big-data medicine without creating a single tempting target for hackers or breaking privacy laws.

How Hospitals Can Learn Together Without Sharing Charts

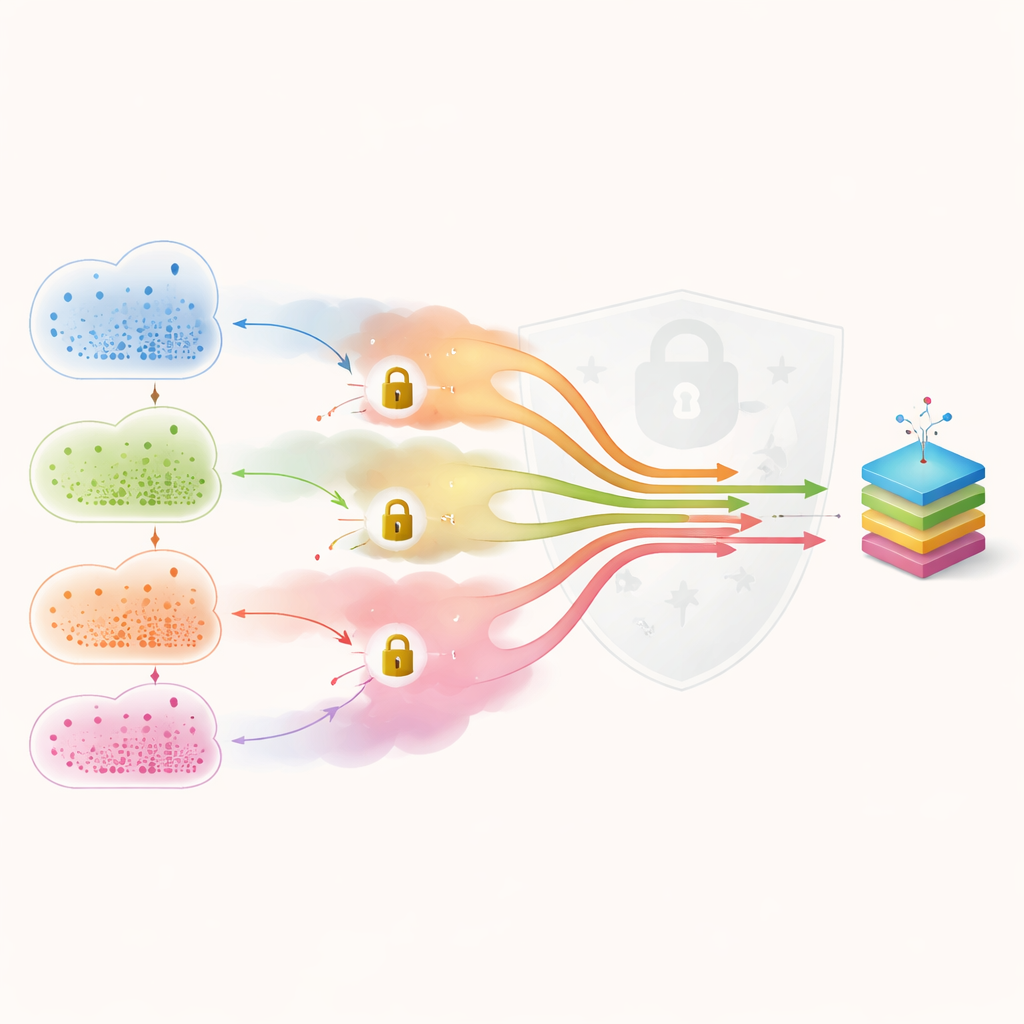

Health-FedNet builds on an approach called federated learning, where a shared prediction model is sent out to multiple hospitals, trained locally on each site’s data, and then sent back as an updated set of numbers. Instead of collecting raw medical records in a central warehouse, only these updates travel, so patient information never leaves the hospital’s walls. The central server then combines all the updates to produce an improved global model, and this cycle repeats until the predictions stabilize. In this work, the authors simulate a network of hospitals using the well-known MIMIC-III intensive care database, asking Health-FedNet to predict who will develop diabetes or high blood pressure.
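The training cycle described above follows the widely used federated averaging (FedAvg) pattern. The Python sketch below is a minimal illustration under stated assumptions, not the authors' code. Each hypothetical "hospital" holds a small synthetic dataset rather than MIMIC-III records. The shared model is a simple logistic regression standing in for whatever network Health-FedNet actually uses. The server averages the returned weights in proportion to local dataset size. Names such as `local_train` and `federated_round` are illustrative only.

```python
import math
import random

# Minimal FedAvg-style sketch (assumption): logistic regression on synthetic
# per-hospital data; not Health-FedNet's model or the MIMIC-III cohort.


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def local_train(weights, data, lr=0.5, epochs=5):
    """Hypothetical local step: a few epochs of batch gradient descent on one site's data."""
    w = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x))) - y
            for j, xj in enumerate(x):
                grad[j] += err * xj
        w = [wi - lr * g / len(data) for wi, g in zip(w, grad)]
    return w


def federated_round(global_w, hospitals):
    """Send the global model out, train locally, and average what comes back."""
    # Only (weights, record count) pairs leave each site, never the rows themselves.
    updates = [(local_train(global_w, data), len(data)) for data in hospitals]
    total = sum(n for _, n in updates)
    return [sum(w[j] * n for w, n in updates) / total for j in range(len(global_w))]


def make_site(n, rng):
    """Synthetic site: features [bias, risk score]; label is 1 when risk > 0."""
    rows = []
    for _ in range(n):
        risk = rng.uniform(-1.0, 1.0)
        rows.append(([1.0, risk], 1 if risk > 0 else 0))
    return rows


rng = random.Random(0)
hospitals = [make_site(n, rng) for n in (40, 80, 120)]
w = [0.0, 0.0]
for _ in range(20):  # fixed number of rounds instead of a convergence test
    w = federated_round(w, hospitals)
print("global weights:", w)
```

Weighting by record count is the plain FedAvg choice. Health-FedNet replaces it with an adaptive scheme, as described in the next section.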

Adding Noise and Locks to Hide Individual Patients

Simply exchanging model updates is not enough to guarantee privacy, because skilled attackers can sometimes work backwards from those updates to learn about individual patients. Health-FedNet counters this in two stages. First, each hospital deliberately adds a small amount of mathematical "noise" to its updates, so that an observer cannot reliably tell whether any one person's record was included. This technique, called differential privacy, lets the authors put a provable numerical bound, often called the privacy budget, on how much any single record can be exposed. Second, the noisy updates are protected with homomorphic encryption, a method that allows encrypted updates to be combined while they are still locked, so the central server never sees them in plain form. Together, these layers sharply reduce the chances that outsiders, or even the server itself, could reconstruct private details.
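The two privacy layers can be sketched in a few dozen lines of Python. This is a simplified illustration, not Health-FedNet's implementation. Each site's update is clipped, and Gaussian noise is added using the classic Gaussian-mechanism calibration. The noisy values are then encrypted with textbook Paillier, a well-known additively homomorphic scheme chosen here as an assumption, since the summary does not name the scheme. The key sizes are toy values with no real security. Note also that clipping a whole update bounds one hospital's contribution rather than one patient's. Record-level guarantees in practice usually rely on per-example clipping, as in DP-SGD.

```python
import math
import random

# Simplified sketch (assumptions throughout): Gaussian-mechanism noise plus
# toy Paillier encryption. Not Health-FedNet's code; key sizes are insecure.


def clip_and_noise(update, clip_norm, epsilon, delta, rng):
    """Clip the update to L2 norm clip_norm, then add Gaussian noise.

    Uses the classic calibration sigma = sqrt(2 ln(1.25/delta)) * clip_norm / epsilon,
    which is stated for 0 < epsilon < 1.
    """
    norm = math.sqrt(sum(u * u for u in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    sigma = math.sqrt(2 * math.log(1.25 / delta)) * clip_norm / epsilon
    return [u * scale + rng.gauss(0.0, sigma) for u in update]


def next_prime(k):
    """Smallest prime >= k by trial division (fine for toy sizes)."""
    def is_prime(m):
        return m >= 2 and all(m % d for d in range(2, math.isqrt(m) + 1))
    while not is_prime(k):
        k += 1
    return k


class ToyPaillier:
    """Textbook Paillier with generator g = n + 1."""

    def __init__(self, p, q, rng):
        self.n = p * q
        self.n2 = self.n * self.n
        self.lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
        self.mu = pow(self.lam, -1, self.n)  # modular inverse (Python 3.8+)
        self.rng = rng

    def encrypt(self, m):
        r = self.rng.randrange(1, self.n)
        while math.gcd(r, self.n) != 1:
            r = self.rng.randrange(1, self.n)
        # (n + 1)^m = 1 + m*n (mod n^2), so g^m needs no large exponentiation.
        return (1 + m * self.n) * pow(r, self.n, self.n2) % self.n2

    def decrypt(self, c):
        x = pow(c, self.lam, self.n2)
        return (x - 1) // self.n * self.mu % self.n

    def add(self, c1, c2):
        # Multiplying ciphertexts adds the underlying plaintexts (mod n).
        return c1 * c2 % self.n2


SCALE = 10_000  # fixed-point precision for encoding floats as integers


def encode(x, n):
    return round(x * SCALE) % n  # negatives wrap into the upper half of [0, n)


def decode(m, n):
    return (m - n if m > n // 2 else m) / SCALE


rng = random.Random(42)
key = ToyPaillier(next_prime(1_000_000), next_prime(1_000_100), rng)
site_updates = [[0.8, -0.3], [1.5, 0.4], [-0.2, 0.9]]  # made-up example values
noisy = [clip_and_noise(u, clip_norm=1.0, epsilon=0.5, delta=1e-5, rng=rng) for u in site_updates]
encrypted = [[key.encrypt(encode(v, key.n)) for v in u] for u in noisy]

# The aggregator combines ciphertexts coordinate by coordinate without decrypting.
aggregate = encrypted[0]
for enc in encrypted[1:]:
    aggregate = [key.add(a, c) for a, c in zip(aggregate, enc)]
summed = [decode(key.decrypt(c), key.n) for c in aggregate]
print("sum of noisy updates:", summed)
```

In this toy, a single key object both encrypts and decrypts. In a real deployment the secret key would be kept away from the aggregating server, for example shared among the hospitals, so that only the combined result is ever decrypted.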

Letting High-Quality Data Speak More Loudly

Real hospitals do not all look alike. Some treat more patients, some collect richer information, and some have noisier records. If every institution’s contribution is treated equally, the final model can be dragged down by poorer-quality data. Health-FedNet introduces an adaptive weighting scheme that scores each hospital based on how many records it holds and how well its local model performs. Those with more consistent, informative data are given a somewhat stronger voice when updates are combined, while still ensuring that no single site dominates. The authors show that this weighting helps the shared model learn more stably when disease rates and record quality differ from one institution to another—a realistic situation in everyday healthcare.
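The summary does not give the exact weighting formula, so the Python sketch below uses a hypothetical one. Each site's raw score is its record count multiplied by its local validation accuracy, and the scores are normalized to sum to one. Any site that would exceed a cap (`max_share`) is clipped to it, and the excess is redistributed among the others in proportion to their scores. This reflects the requirement that no single site dominates. `adaptive_weights`, `aggregate`, and the example figures are illustrative assumptions.

```python
# Hypothetical adaptive weighting (not the paper's formula): score each site by
# records * local validation accuracy, normalize, and cap any site at max_share.


def adaptive_weights(num_records, local_accuracy, max_share):
    k = len(num_records)
    if max_share * k < 1:
        raise ValueError("max_share * number of sites must be at least 1")
    scores = [n * a for n, a in zip(num_records, local_accuracy)]  # assumed positive
    capped = set()
    while True:
        free = [i for i in range(k) if i not in capped]
        free_mass = 1.0 - max_share * len(capped)
        free_total = sum(scores[i] for i in free)
        # Capped sites get exactly max_share; the rest split the remaining mass by score.
        weights = [
            max_share if i in capped else free_mass * scores[i] / free_total
            for i in range(k)
        ]
        over = {i for i in free if weights[i] > max_share}
        if not over:
            return weights
        capped |= over


def aggregate(updates, weights):
    """Weighted average of per-site update vectors."""
    return [sum(w * u[j] for w, u in zip(weights, updates)) for j in range(len(updates[0]))]


weights = adaptive_weights([5000, 1200, 300], [0.90, 0.85, 0.70], max_share=0.5)
print([round(w, 3) for w in weights])  # [0.5, 0.415, 0.085]
```

Without the cap, the large first site would have held about 79% of the weight. The cap limits it to half, while the two smaller sites keep their relative proportions.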

How Well the System Predicts and Protects

To test its practicality, the team compares Health-FedNet with both a standard centralized model that trains on pooled data and a more basic federated setup without the extra privacy tools. On the simulated hospital network, Health-FedNet predicts chronic disease with about 92% accuracy and an area-under-the-curve (AUC) score of 0.94, ahead of both alternatives in the reported experiments. At the same time, it sharply cuts the risk that an attacker could tell whether a specific person's record was used for training (a so-called membership inference attack) or reconstruct their medical details, reducing such leakage roughly three- to four-fold. Despite the added encryption and noise, the system also lowers communication overhead between hospitals and the central server, thanks to careful packing and weighting of updates, which makes it more practical for large networks.
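One common way to cut encryption overhead is to pack several quantized values into a single plaintext before encrypting, so that one ciphertext carries many model coordinates. The summary mentions packing without giving details, so the following Python sketch is an illustration built on assumptions. It packs non-negative integers into fixed-width bit slots and shows that adding two packed integers adds their slots individually, which is the property an additively homomorphic scheme preserves under encryption. `SLOT_BITS` and the figure of 2040 usable plaintext bits are hypothetical choices.

```python
# Hypothetical slot packing: quantized, non-negative values share one integer,
# each in its own SLOT_BITS-wide slot. Adding packed integers adds slot by slot
# as long as no slot overflows, which additive homomorphic encryption preserves.

SLOT_BITS = 20  # assumed slot width; must leave headroom for the sum over all sites


def pack(values):
    packed = 0
    for i, v in enumerate(values):
        if not 0 <= v < (1 << SLOT_BITS):
            raise ValueError(f"value {v} does not fit in a {SLOT_BITS}-bit slot")
        packed |= v << (SLOT_BITS * i)
    return packed


def unpack(packed, count):
    mask = (1 << SLOT_BITS) - 1
    return [(packed >> (SLOT_BITS * i)) & mask for i in range(count)]


def ciphertexts_needed(num_values, plaintext_bits):
    """Ciphertexts required when each plaintext holds as many slots as fit."""
    slots_per_ciphertext = plaintext_bits // SLOT_BITS
    return -(-num_values // slots_per_ciphertext)  # ceiling division


site_a = [3, 500, 70_000]
site_b = [10, 20, 30_000]
print(unpack(pack(site_a) + pack(site_b), 3))  # [13, 520, 100000]
# Assuming about 2040 usable plaintext bits per ciphertext (e.g. a 2048-bit Paillier modulus):
print(ciphertexts_needed(1_000_000, 2040))  # 9804, versus 1,000,000 unpacked
```

Real packing schemes must also reserve headroom bits for the sum across all hospitals and shift signed values by an offset before packing. Both steps are omitted here for brevity.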

What This Means for Future Digital Medicine

In plain terms, Health-FedNet shows that we do not have to choose between accurate medical predictions and strong privacy safeguards. By letting hospitals learn together from patterns in their data while keeping individual charts local, adding carefully calibrated noise, and keeping updates encrypted while they are transmitted and combined, the framework is designed to meet key requirements of regulations such as HIPAA and GDPR. The study suggests that similar designs could underpin future nationwide or even international health networks, where many institutions collaborate to forecast disease, spot outbreaks, and guide treatment, all without ever handing over raw patient records.

Citation: Shahid, M.I., Badar, H.M.S., Asghar, M.N. et al. Health-FedNet: secure federated learning for chronic disease prediction on MIMIC-III with differential privacy and homomorphic encryption. Sci Rep 16, 7968 (2026). https://doi.org/10.1038/s41598-026-36034-y

Keywords: federated learning, health data privacy, chronic disease prediction, medical AI security, hospital data sharing