Clear Sky Science

Towards the transformation of MATLAB models into FPGA-Based hardware accelerators

Turning Lab Algorithms into Real-World Devices

Many modern medical and engineering breakthroughs start life as software written by researchers, often in user-friendly tools like MATLAB. But when those same algorithms must run in real time, for example to spot dangerous heart rhythm problems in an electrocardiogram (ECG), they need the speed and efficiency of custom hardware. This article shows how to reliably move a complex heartbeat-classifying neural network from MATLAB onto a reconfigurable chip called an FPGA, without losing the accuracy that doctors and engineers depend on.

Why Rewriting Code for Chips Is So Hard

FPGAs (Field-Programmable Gate Arrays) are reconfigurable chips prized for their speed and low power use. Traditionally, programming them required hardware-centric languages like VHDL or Verilog, which are far removed from the high-level math and signal-processing code scientists write in MATLAB. New tools can automatically convert algorithms to hardware descriptions, but they often require manual tweaking and focus mainly on performance: how fast the chip runs, how many resources it uses. What has been missing is a clear way to prove that the hardware does exactly what the original software did, layer by layer, number by number.

A Step-by-Step Check for Every Network Layer

The author tackles this gap by proposing a verification-focused workflow for a one-dimensional convolutional neural network that classifies heartbeats from the well-known MIT-BIH Arrhythmia Database. The network, built in MATLAB, examines short snippets of ECG data and assigns them to one of five heartbeat types, including normal and several abnormal rhythms. Three versions of the network, with different depths, are trained on the same data. After training, the weights, biases, and the outputs of every layer on test signals are saved as "golden outputs"—a precise numerical record of how the MATLAB model behaves.

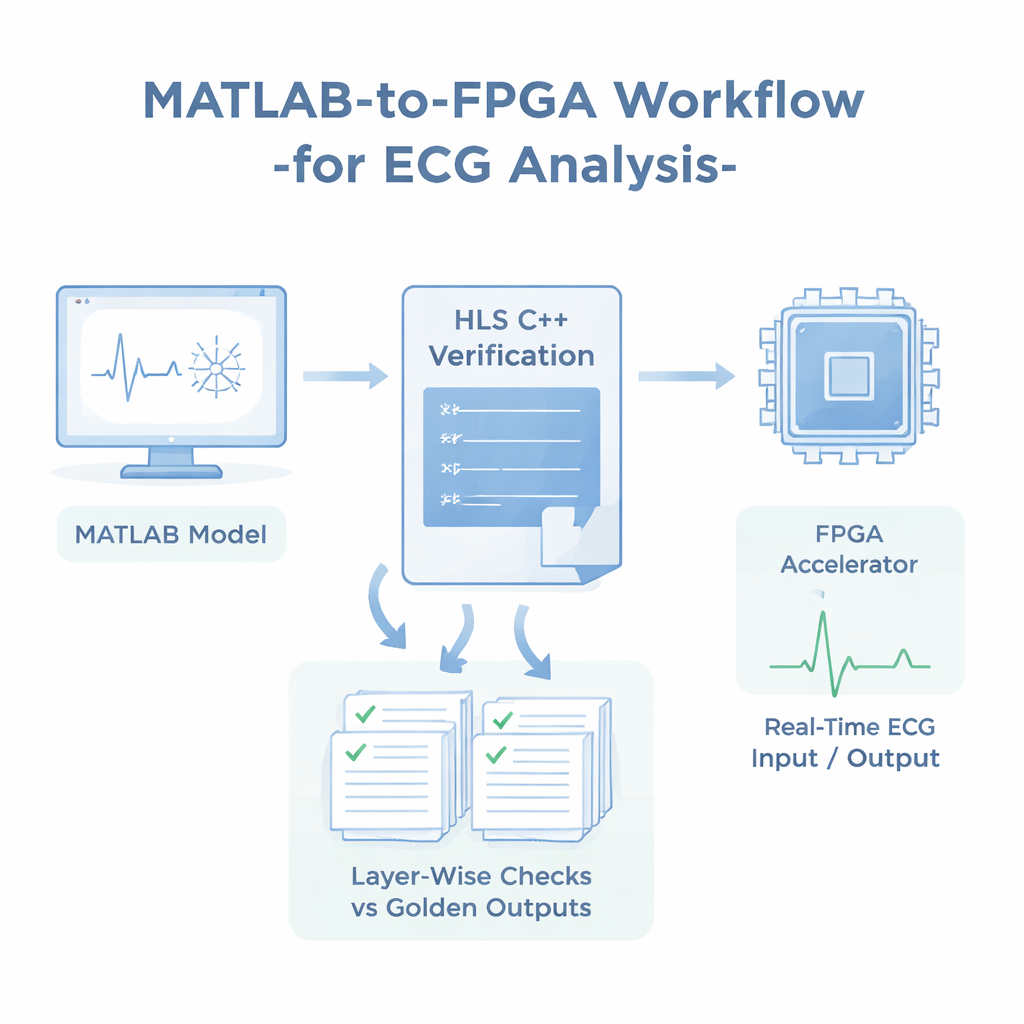

From MATLAB to C++ to FPGA, With Numbers Tracked

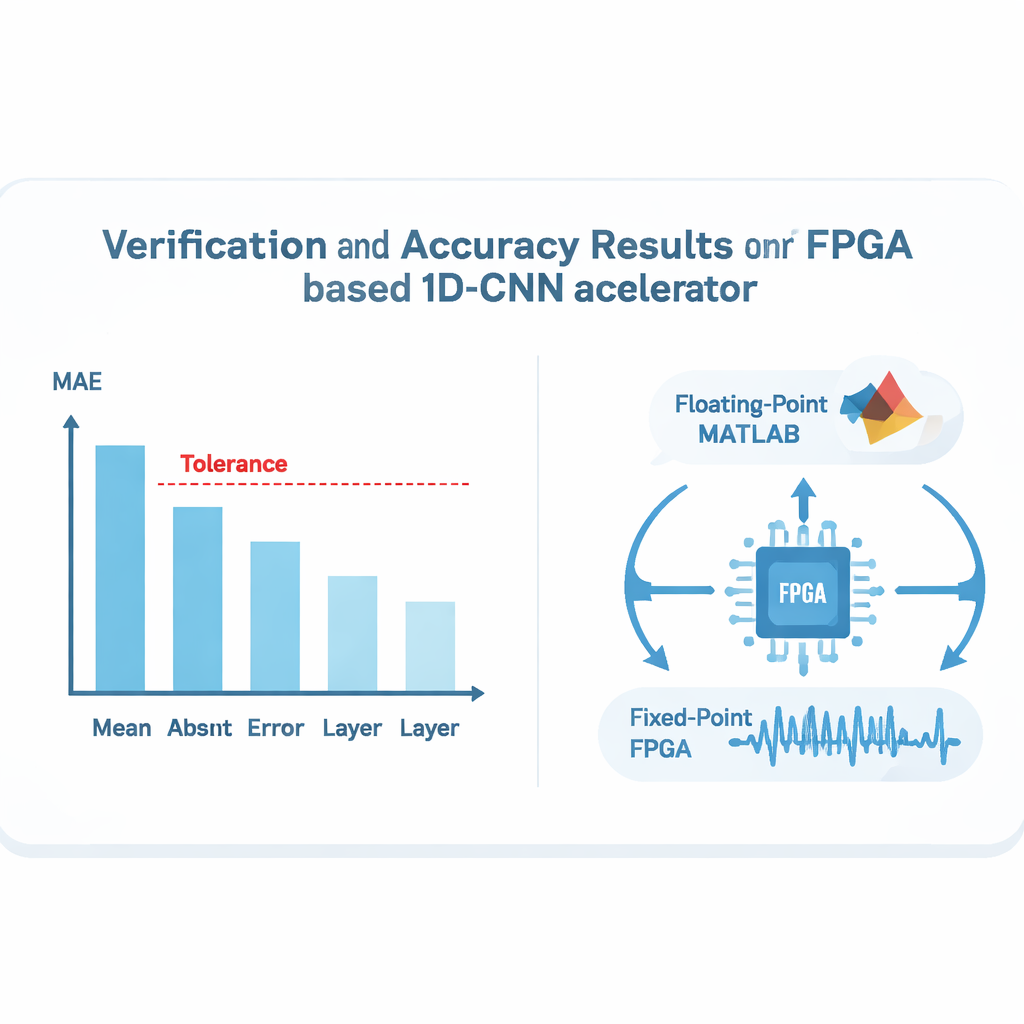

Instead of pressing an automatic "convert" button, the network is manually reimplemented in C++, following the same data layout and mathematical steps as in MATLAB. This C++ code is then used with a high-level synthesis tool to generate hardware that runs on a PYNQ-Z1 FPGA board. At each stage—MATLAB, C++, and FPGA—the layer outputs are compared against the stored golden outputs using a simple yardstick: mean absolute error, which measures the average numerical difference between two sets of results. The study sets a strict tolerance: all layers must stay below an error of 1.5×10⁻³. Across thousands of ECG samples, every tested layer meets this target, and the final classification accuracy remains almost unchanged: about 98.3–98.4% in MATLAB and C++, and only about 0.2 percentage points lower on the FPGA, a difference consistent with the expected effects of using shorter, fixed-point numbers in hardware.
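The layer-wise check described above can be sketched in a few lines of C++. This is a minimal illustration, not the author's code: the function names `mean_absolute_error` and `layer_matches_golden` are hypothetical, and the only value taken from the article is the 1.5×10⁻³ tolerance.

```cpp
#include <cmath>
#include <cstddef>
#include <stdexcept>
#include <vector>

// Tolerance reported in the article: every layer must stay below 1.5e-3.
constexpr double kMaeTolerance = 1.5e-3;

// Mean absolute error between one layer's output and its stored golden output.
// (Hypothetical helper; the article does not show the author's implementation.)
double mean_absolute_error(const std::vector<float>& actual,
                           const std::vector<float>& golden) {
    if (actual.size() != golden.size() || actual.empty()) {
        throw std::invalid_argument("outputs must be non-empty and equal in length");
    }
    double sum = 0.0;
    for (std::size_t i = 0; i < actual.size(); ++i) {
        sum += std::fabs(static_cast<double>(actual[i]) -
                         static_cast<double>(golden[i]));
    }
    return sum / static_cast<double>(actual.size());
}

// True when the layer output stays within the tolerance of the golden output.
bool layer_matches_golden(const std::vector<float>& actual,
                          const std::vector<float>& golden) {
    return mean_absolute_error(actual, golden) < kMaeTolerance;
}
```

In a workflow like the one described, a check of this kind would be run once per layer and per implementation stage, so that a mismatch points to the specific layer where the numbers first diverge.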

Making the Hardware Fast Without Breaking the Math

Once correctness is secured, the design is tuned for speed. The most demanding parts of the network—the convolutional layers—are mapped onto the FPGA’s programmable logic, using fixed-point arithmetic for efficiency, while lighter tasks such as the final softmax calculation run on the chip’s built-in processor with normal floating-point numbers. Techniques like loop pipelining, loop unrolling, and careful splitting of memory into parallel banks allow different pieces of the computation and data transfers to run side by side. An optimized version of the hardware processes a heartbeat window in about 1.7 milliseconds and fits comfortably within the FPGA’s resource and power limits, all while preserving the layer-wise numerical behavior of the original MATLAB model.
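To make the fixed-point trade-off concrete, the sketch below emulates a single-channel 1D convolution in both floating point and fixed point, using plain C++. Several things here are assumptions for illustration only: the 16-bit format with 12 fractional bits, the function names, and the Xilinx-style directives mentioned in the comments (the article names neither the number format nor the synthesis tool). The directives are left as comments so the code compiles with any standard compiler.

```cpp
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Assumed format for illustration: signed 16-bit values, 12 fractional bits.
constexpr int kFracBits = 12;
constexpr std::int32_t kScale = 1 << kFracBits;
using fixed_t = std::int16_t;

fixed_t to_fixed(float x) {
    return static_cast<fixed_t>(std::lround(x * kScale));
}

float to_float(fixed_t x) {
    return static_cast<float>(x) / kScale;
}

// Floating-point reference: valid (unpadded) 1D convolution, stride 1.
std::vector<float> conv1d_float(const std::vector<float>& x,
                                const std::vector<float>& w, float bias) {
    if (w.empty() || x.size() < w.size()) return {};
    std::vector<float> y(x.size() - w.size() + 1);
    for (std::size_t i = 0; i < y.size(); ++i) {
        float acc = bias;
        for (std::size_t k = 0; k < w.size(); ++k) acc += x[i + k] * w[k];
        y[i] = acc;
    }
    return y;
}

// Fixed-point version of the same loop nest, with a wider accumulator.
std::vector<fixed_t> conv1d_fixed(const std::vector<fixed_t>& x,
                                  const std::vector<fixed_t>& w, fixed_t bias) {
    if (w.empty() || x.size() < w.size()) return {};
    std::vector<fixed_t> y(x.size() - w.size() + 1);
    for (std::size_t i = 0; i < y.size(); ++i) {
        // An HLS tool might pipeline this loop, e.g. "#pragma HLS PIPELINE II=1"
        // (assumed Xilinx-style directive, not confirmed by the article).
        std::int32_t acc = static_cast<std::int32_t>(bias) * kScale;
        for (std::size_t k = 0; k < w.size(); ++k) {
            // The inner loop is a candidate for unrolling ("#pragma HLS UNROLL"),
            // with x and w split across memory banks so reads happen in parallel.
            acc += static_cast<std::int32_t>(x[i + k]) *
                   static_cast<std::int32_t>(w[k]);
        }
        // Rescale the product back to the storage format (truncates toward zero).
        y[i] = static_cast<fixed_t>(acc / kScale);
    }
    return y;
}
```

Converting the fixed-point result back with `to_float` and comparing it against `conv1d_float` shows the small quantization error that a layer-wise tolerance is meant to bound. Real designs also have to guard against accumulator overflow, which this sketch ignores by assuming small input magnitudes.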

What This Means for Future Smart Devices

For non-specialists, the key message is that it is now possible not only to accelerate a sophisticated MATLAB-based heart rhythm classifier on a low-power chip, but also to demonstrate that the chip’s inner workings closely match the trusted software model at every layer. Rather than chasing speed at the expense of reliability, this approach builds a clear audit trail from floating-point MATLAB code to fixed-point FPGA hardware. The same verification recipe (saving golden outputs, comparing layer-wise errors against a strict threshold, and checking final accuracy) can be applied to other one-dimensional neural networks in areas such as wearable health monitors, industrial sensors, and smart vehicles, helping ensure that when algorithms leave the lab and move into devices, their decisions remain dependable.

Citation: Bal, S. Towards the transformation of MATLAB models into FPGA-Based hardware accelerators. Sci Rep 16, 5027 (2026). https://doi.org/10.1038/s41598-026-36033-z

Keywords: FPGA accelerators, MATLAB neural networks, ECG arrhythmia classification, hardware verification, fixed-point deep learning