Clear Sky Science

A multi-branch network for cooperative spectrum sensing via attention-based and CNN feature fusion

Why Your Wi‑Fi Shares the Air

Every wireless gadget you own—your phone, laptop, smart speaker, and even your car—competes for the same invisible real estate: radio waves. Governments tightly license much of this spectrum, yet many licensed channels sit idle at any moment. This paper explores a smarter way for unlicensed devices to detect when those channels are truly free, even in very noisy conditions and when several licensed users are transmitting at once. That step is crucial for future networks that promise faster, more reliable connections without demanding more spectrum.

Finding Hidden Gaps in a Crowded Airwave

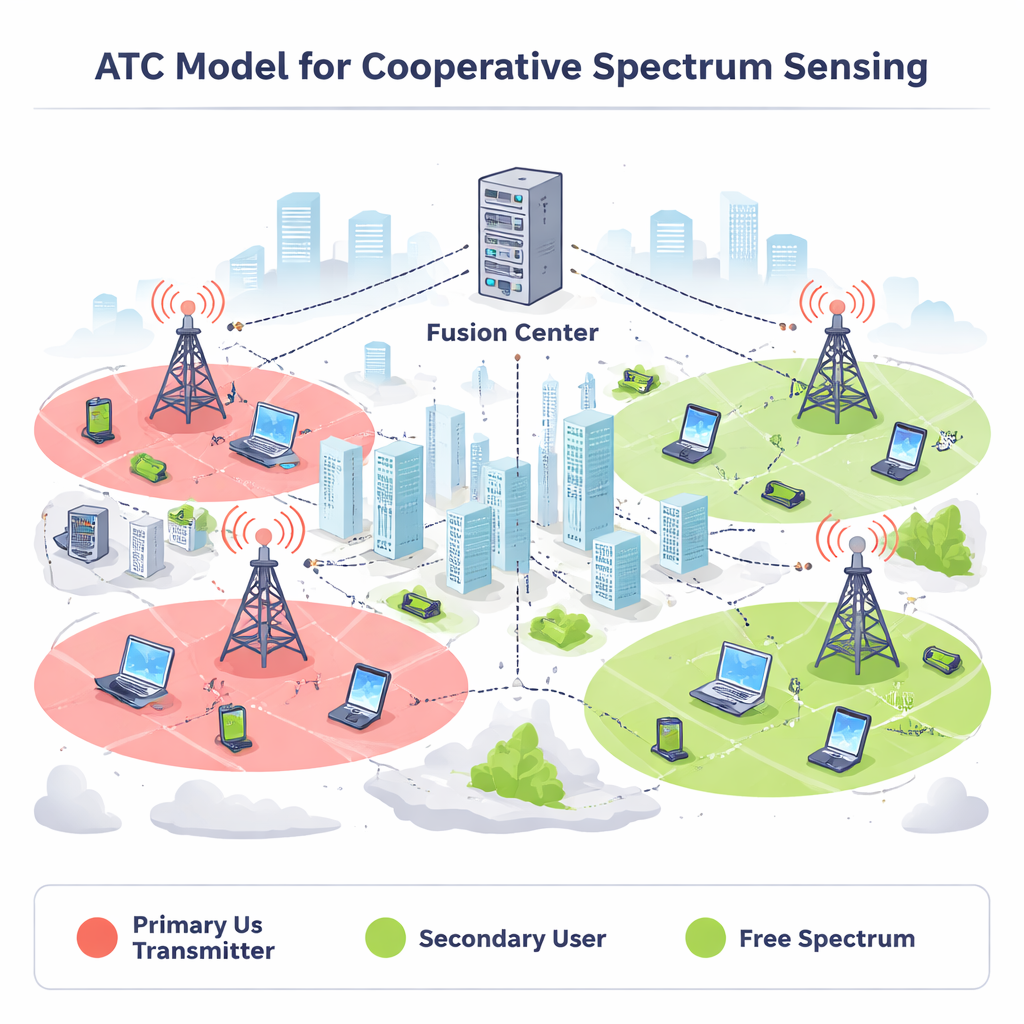

Modern "cognitive" radios are designed to listen before they talk. They search for brief openings—called spectrum holes—where licensed, or primary, users are inactive so that secondary devices can transmit without causing interference. Simple listening methods struggle when signals are weak, noisy, or coming from many directions. To improve reliability, multiple secondary users can cooperate: each listens locally and forwards its observations to a fusion center that decides whether the channel is busy or free. Yet existing techniques, including many based on machine learning, usually assume only one primary transmitter and have trouble when several licensed users share the same channel, as often happens in real cellular and Wi‑Fi systems.
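The listen-then-report loop described above can be sketched with classical energy detection. This is a minimal illustration rather than the paper's method: the sinusoidal primary signal, the noise power, the threshold margin, and the function names `local_energy` and `fusion_center_decision` are all assumptions made for the example.

```python
import numpy as np

def local_energy(samples: np.ndarray) -> np.ndarray:
    """Average received power per secondary user; samples has shape (users, n_samples)."""
    return np.mean(np.abs(samples) ** 2, axis=1)

def fusion_center_decision(energies: np.ndarray, threshold: float) -> bool:
    """Return True (channel busy) if the mean reported energy exceeds the threshold."""
    return bool(np.mean(energies) > threshold)

rng = np.random.default_rng(0)
n_users, n_samples, noise_power = 10, 1000, 1.0

# Each secondary user hears independent noise (assumed Gaussian for this sketch).
noise = rng.normal(0.0, np.sqrt(noise_power), size=(n_users, n_samples))
# Hypothetical primary signal: a unit-amplitude sinusoid heard equally by every user.
signal = np.sin(2 * np.pi * 0.05 * np.arange(n_samples))

# Hypothetical threshold placed between the noise floor and noise plus signal power.
threshold = 1.25 * noise_power

idle_decision = fusion_center_decision(local_energy(noise), threshold)
busy_decision = fusion_center_decision(local_energy(noise + signal), threshold)
print("idle channel flagged busy:", idle_decision)
print("occupied channel flagged busy:", busy_decision)
```

A simple average like this works when the signal is strong. It degrades at low signal-to-noise ratios and cannot tell which of several primary users is active, which is the gap the article describes.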

A Three‑Eyed View of the Radio World

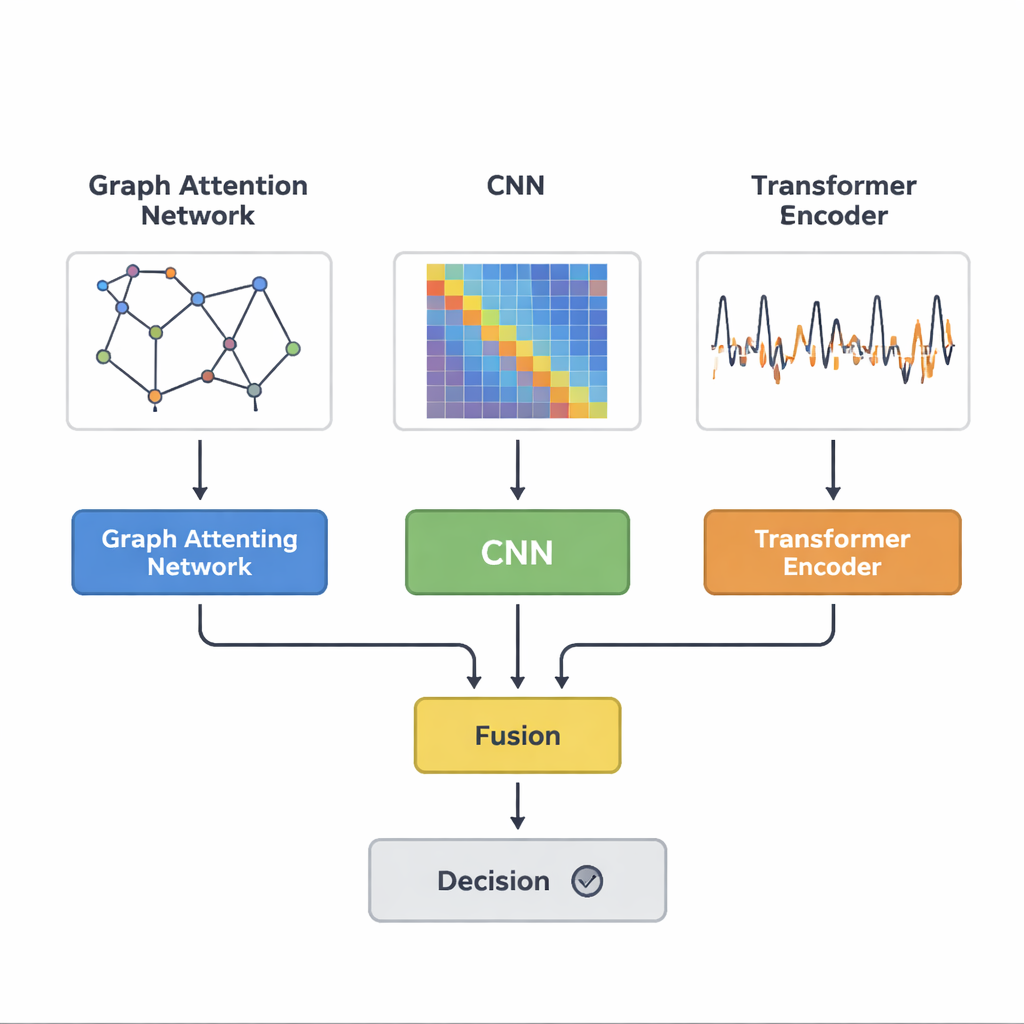

The authors propose a new deep‑learning model, called ATC, that treats cooperative spectrum sensing as a pattern‑recognition problem with many possible "network states" (which primary users are on or off). Instead of relying on a single view of the data, ATC looks at the same sensing signals through three complementary lenses running in parallel. One branch uses a graph attention network to model how signal strength at each secondary device relates to its neighbors, highlighting which sensors carry the most informative clues. A second branch feeds a covariance matrix—essentially a map of how signals at different sensors rise and fall together—into a convolutional neural network, treating it like an image that reveals fine‑grained spatial structure that is robust to noise. A third branch employs a Transformer encoder, best known from language models, to learn the temporal patterns in how primary users turn on and off over time.
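Two ideas from this description lend themselves to a short sketch: enumerating the "network states" and building the covariance matrix that the convolutional branch treats as an image. The code below is only an illustration under assumed conditions (Gaussian noise, one shared primary signal, and the helper names `sample_covariance` and `mean_off_diagonal`). It does not reproduce the authors' preprocessing.

```python
import itertools
import numpy as np

def sample_covariance(samples: np.ndarray) -> np.ndarray:
    """Sample covariance across sensors; samples has shape (sensors, n_samples)."""
    centered = samples - samples.mean(axis=1, keepdims=True)
    return centered @ centered.conj().T / samples.shape[1]

def mean_off_diagonal(cov: np.ndarray) -> float:
    """Average magnitude of the off-diagonal entries (how strongly sensors co-vary)."""
    mask = ~np.eye(cov.shape[0], dtype=bool)
    return float(np.abs(cov[mask]).mean())

# Every on/off combination of the primary users is one network state.
n_primary = 2
network_states = list(itertools.product([0, 1], repeat=n_primary))

rng = np.random.default_rng(1)
n_sensors, n_samples = 10, 2000
noise = rng.normal(size=(n_sensors, n_samples))
common = rng.normal(size=n_samples)  # hypothetical primary signal seen by all sensors

cov_idle = sample_covariance(noise)           # noise only: close to the identity matrix
cov_busy = sample_covariance(noise + common)  # shared signal: large off-diagonal terms

print(network_states)
print(mean_off_diagonal(cov_idle), mean_off_diagonal(cov_busy))
```

Independent noise averages out of the off-diagonal entries, while a signal shared by all sensors does not. That is one way to see why a covariance "image" can remain informative in noisy conditions.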

Blending Multiple Clues into One Clear Decision

Because each branch specializes in a different kind of structure (network geometry, statistical relationships, and time dynamics), the model merges their outputs only at the end. This parallel design keeps each type of information intact until a final fusion layer learns how to weigh them. The authors contrast this with a serial design, where one network’s output feeds directly into the next; in such pipelines, early processing can distort or erase details that later stages would need. They also introduce a decision rule tuned to the real‑world trade‑off between two kinds of error: a missed detection (overlooking an active primary user and causing interference) and a false alarm (declaring the channel busy when it is actually free, wasting potential capacity). By adjusting a threshold on a carefully defined probability ratio, network operators can explicitly choose how aggressively to reuse spectrum.
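A thresholded probability ratio of this kind might look like the sketch below. The exact ratio used in the paper is not given here, so the definition in the code is an assumption: the probability that at least one primary user is active, divided by the probability that all are idle. The function name `is_channel_busy`, the `idle_index` convention, and the example probabilities are likewise hypothetical.

```python
def is_channel_busy(state_probs, threshold=1.0, idle_index=0):
    """Declare the channel busy when P(any primary active) / P(all idle) > threshold.

    state_probs: classifier probabilities over network states (assumed to sum to 1).
    idle_index:  position of the "all primary users off" state (assumed to be 0).
    """
    p_idle = state_probs[idle_index]
    p_busy = sum(state_probs) - p_idle
    # Cross-multiplied form of p_busy / p_idle > threshold; avoids dividing by zero.
    return p_busy > threshold * p_idle

# Hypothetical model output for two primary users (four network states).
probs = [0.55, 0.20, 0.15, 0.10]

print(is_channel_busy(probs, threshold=1.0))  # balanced setting
print(is_channel_busy(probs, threshold=0.5))  # cautious setting that protects primary users
```

Lowering the threshold makes the rule quicker to declare the channel busy, trading lost capacity for fewer missed detections. Raising it does the opposite.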

Putting the Model to the Test

To see how well ATC performs, the researchers trained and compared it with several popular baselines, from traditional clustering and support‑vector machines to deep networks that use only convolutional layers, only recurrent layers, or a simpler combination of convolution and Transformers. Using simulated data that mimics two primary users and ten collaborating secondary users under both pure noise and realistic fading channels, ATC consistently achieved higher detection probabilities, especially when signals were very weak. It was also more accurate at telling which specific combination of users was active. In tests on a real Wi‑Fi dataset—where channel measurements captured conditions with and without people walking around—ATC again outperformed competing methods, remaining more reliable in complex, changing environments. Despite its sophistication, the model is compact enough to train in minutes and to make decisions in microseconds on a standard graphics processor.
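The detection probability used in comparisons like these, together with its counterpart the false-alarm probability, can be computed from labeled test decisions as in this minimal sketch. The function name `detection_metrics` and the toy labels are illustrative assumptions, not data from the study.

```python
def detection_metrics(truth, decisions):
    """Return (detection probability, false-alarm probability).

    truth:     1 if a primary user was really active in that trial, else 0.
    decisions: 1 if the sensing model declared the channel busy, else 0.
    """
    busy_trials = [d for t, d in zip(truth, decisions) if t]
    idle_trials = [d for t, d in zip(truth, decisions) if not t]
    if not busy_trials or not idle_trials:
        raise ValueError("need at least one busy trial and one idle trial")
    p_detect = sum(busy_trials) / len(busy_trials)
    p_false_alarm = sum(idle_trials) / len(idle_trials)
    return p_detect, p_false_alarm

# Toy example: four busy trials followed by four idle trials.
truth = [1, 1, 1, 1, 0, 0, 0, 0]
decisions = [1, 1, 1, 0, 0, 1, 0, 0]
p_d, p_fa = detection_metrics(truth, decisions)
print(p_d, p_fa)
```

Reporting both numbers matters because a detector can always raise its detection probability by declaring every channel busy, at the cost of more false alarms.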

What This Means for Everyday Wireless Devices

For a lay reader, the key takeaway is that smarter listening can unlock more wireless capacity without buying new spectrum or breaking existing rules. By combining three complementary ways of "looking" at radio signals, the ATC model can more confidently spot when licensed users are present and when the airwaves are genuinely free, even in crowded, noisy, and variable conditions. While the study still assumes a limited number of primary users and simplified channel types, it points toward future radios that can safely share spectrum on the fly, making better use of what we already have and paving the way for denser networks of connected devices.

Citation: Lan, D.T., Ngo, Q.T., Nguyen, L.V. et al. A multi-branch network for cooperative spectrum sensing via attention-based and CNN feature fusion. Sci Rep 16, 5111 (2026). https://doi.org/10.1038/s41598-026-36031-1

Keywords: cognitive radio, spectrum sensing, deep learning, wireless networks, attention networks