Clear Sky Science · en

Laplacian reconstructive network for guided thermal super-resolution

Sharper heat vision for everyday tech

Thermal cameras can see heat where our eyes cannot, from people walking in the dark to crops stressed by drought. But sharp thermal cameras are expensive, so many devices—from drones to security systems—use low-resolution sensors that produce blurry images. This paper introduces LapGSR, a new way to “upgrade” those blurry thermal images using an ordinary color camera, promising clearer heat views without costly hardware.

Why combining two kinds of vision helps

Color cameras capture rich detail about edges, textures, and shapes, but they only see visible light. Thermal cameras sense heat, which lets them see at night, through smoke, and through some materials, but consumer-grade versions are often coarse and fuzzy. Guided thermal super-resolution tries to get the best of both worlds: it uses a sharp color image as a guide to enhance a low-resolution thermal image of the same scene. The challenge is to do this accurately and quickly, with models small enough to run on robots, drones, or handheld devices.

A pyramid of details instead of heavy computing

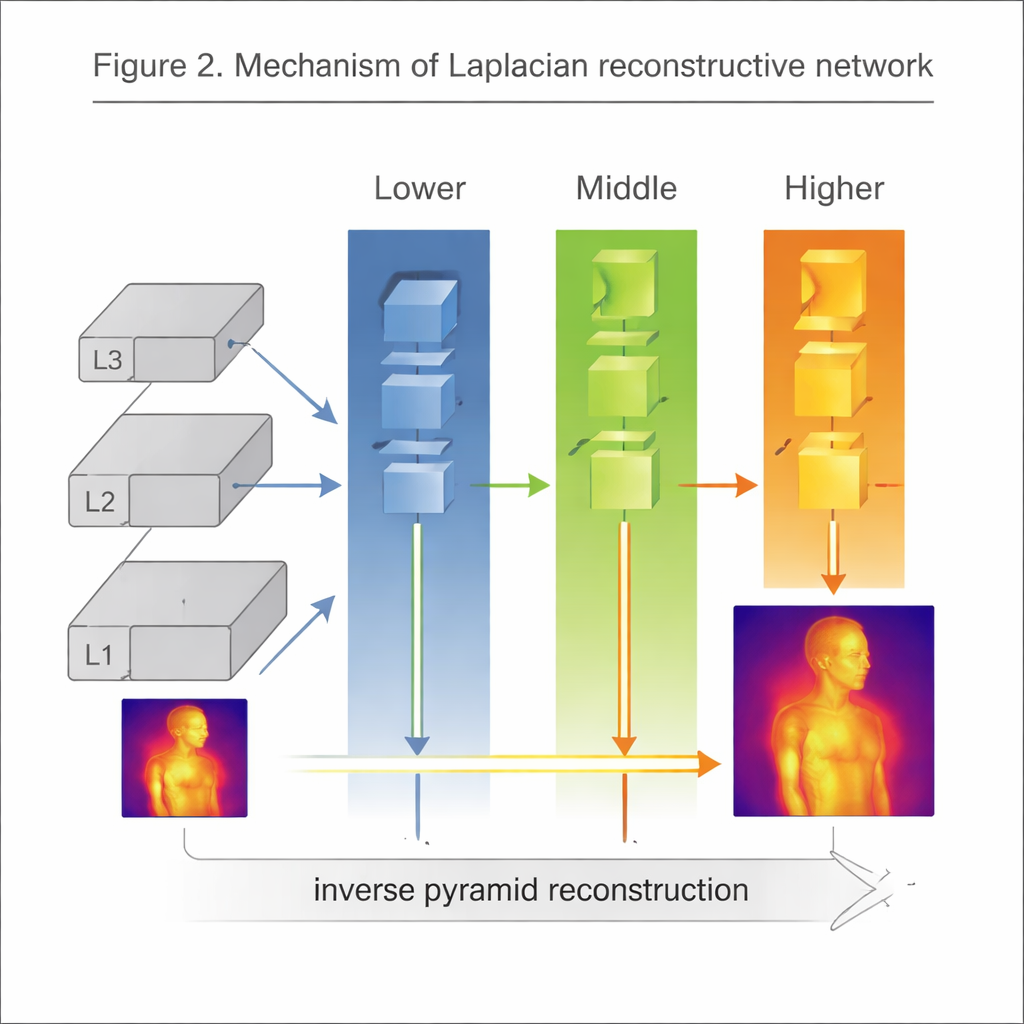

LapGSR tackles this challenge by leaning on a classic image-processing idea: the Laplacian pyramid. Instead of forcing a deep neural network to learn all edges and textures from scratch, the method breaks the guiding color image into several layers: detail layers that each capture edges and fine texture at a different scale, plus a small, blurred base layer. These pyramid layers are fed into a compact generative network with three branches (lower, middle, and higher) that focus on different levels of detail. The low-resolution thermal image is inserted into this pyramid and gradually refined, with the color image’s edge information serving as a blueprint for where to sharpen and where to smooth.
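The layer-splitting idea can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions, not LapGSR’s implementation: it shrinks images by averaging 2×2 blocks and enlarges them by repeating pixels (classic pyramids blur with a Gaussian, and the article says LapGSR uses bicubic resizing), and it assumes single-channel images whose sides stay divisible by 2 at every level. The helper names, the random 64×64 “guide”, and the 8×8 “thermal frame” are hypothetical. The point it shows: each detail layer stores exactly what is lost between two scales, so rebuilding from the coarsest level upward recovers the original image.

```python
import numpy as np

def downsample(img: np.ndarray) -> np.ndarray:
    """Halve resolution by averaging each 2x2 block (simplified stand-in for blur + decimate)."""
    h, w = img.shape
    return img.reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def upsample(img: np.ndarray) -> np.ndarray:
    """Double resolution by repeating pixels (simplified stand-in for bicubic resizing)."""
    return img.repeat(2, axis=0).repeat(2, axis=1)

def build_laplacian_pyramid(img: np.ndarray, levels: int) -> list[np.ndarray]:
    """Return [detail_0, ..., detail_{levels-1}, base], finest detail first."""
    pyramid = []
    current = img.astype(np.float64)
    for _ in range(levels):
        smaller = downsample(current)
        # Detail layer: what disappears when the image is shrunk and re-enlarged.
        pyramid.append(current - upsample(smaller))
        current = smaller
    pyramid.append(current)  # coarsest, low-frequency base layer
    return pyramid

def reconstruct(pyramid: list[np.ndarray]) -> np.ndarray:
    """Inverse pyramid: start at the base and add detail back, one scale at a time."""
    current = pyramid[-1]
    for detail in reversed(pyramid[:-1]):
        current = upsample(current) + detail
    return current

rng = np.random.default_rng(0)
guide = rng.random((64, 64))  # hypothetical single channel of a color guide image
pyr = build_laplacian_pyramid(guide, levels=3)
print([p.shape for p in pyr])                  # [(64, 64), (32, 32), (16, 16), (8, 8)]
print(np.allclose(reconstruct(pyr), guide))    # True

# Naive fusion: replace the base layer with a hypothetical low-resolution thermal frame.
thermal_lr = rng.random((8, 8))
naive = reconstruct(pyr[:-1] + [thermal_lr])   # 64x64 output
```

Swapping in the thermal frame this way simply pastes every color edge onto the heat map. As the article describes it, LapGSR instead passes the layers through trained branches that decide where the color detail should sharpen the thermal image and where it should be smoothed away.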

Lightweight design with smart training

To keep the model fast and affordable for real-time use, the authors avoid learned upsampling layers, which add many extra parameters. Instead, they use simple bicubic resizing and an “inverse pyramid” step to rebuild a high-resolution thermal image from the processed layers. Training also balances two goals that can conflict: numerical accuracy and visual realism. A standard pixel-by-pixel error measure pulls the output toward the ground-truth high-resolution thermal image, while an adversarial loss, borrowed from generative adversarial networks, pushes the model to produce more natural-looking textures and edges. Together, these choices yield crisp images while keeping the network remarkably small.
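The two-part training objective can be written as a weighted sum. The sketch below is a hedged illustration, not the authors’ code: it assumes the pixel term is a mean absolute (L1) error, uses the common non-saturating generator loss for the adversarial term, and picks a hypothetical weight `adv_weight=1e-3`. The discriminator’s raw scores (logits) are passed in directly rather than computed by a real network.

```python
import numpy as np

def pixel_loss(pred: np.ndarray, target: np.ndarray) -> float:
    """Mean absolute error between predicted and ground-truth thermal images (assumed L1)."""
    return float(np.mean(np.abs(pred - target)))

def adversarial_loss(disc_logits_on_pred: np.ndarray) -> float:
    """Non-saturating generator loss, mean of -log(sigmoid(logit)).

    Computed as log(1 + exp(-logit)) via np.logaddexp for numerical stability.
    The loss is small when the discriminator scores the prediction as real.
    """
    return float(np.mean(np.logaddexp(0.0, -disc_logits_on_pred)))

def generator_loss(pred: np.ndarray, target: np.ndarray,
                   disc_logits_on_pred: np.ndarray, adv_weight: float = 1e-3) -> float:
    """Fidelity term plus a down-weighted realism term; adv_weight is a hypothetical value."""
    return pixel_loss(pred, target) + adv_weight * adversarial_loss(disc_logits_on_pred)

target = np.zeros((4, 4))      # toy ground-truth thermal patch
pred = np.full((4, 4), 0.5)    # toy prediction, off by 0.5 everywhere
logits = np.array([0.0])       # discriminator is undecided (50/50)
print(pixel_loss(pred, target))                # 0.5
print(generator_loss(pred, target, logits))    # 0.5 plus a small adversarial contribution
```

Keeping the adversarial weight small lets the pixel term anchor the output to the measured temperatures while the adversarial term nudges edges and textures toward realism; a much larger weight tends to trade accuracy for plausible-looking but invented detail.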

Performance in the lab and in the air

The team tested LapGSR on two demanding datasets. One comes from a handheld thermal camera capturing people and scenes in varied indoor and outdoor conditions. The other comes from a drone-mounted sensor looking down on roads, vehicles, and landscapes from above. On the handheld dataset, LapGSR not only outperformed previous methods in image quality but did so with a fraction of the parameters—about 398,000, compared with millions in competing models. On the drone dataset, it delivered competitive quality while using around 95 percent fewer parameters than state-of-the-art approaches. The authors also showed that LapGSR handles moderate misalignment between the color and thermal images, an issue that often occurs when cameras move or vibrate.

Limits, uses, and what comes next

LapGSR works best when the color image contains clear edges and textures, which the model can transfer to sharpen the thermal view. When scenes are far away or visually smooth—as in some aerial shots—those edges weaken, and the benefits shrink. Even so, the method’s combination of efficiency, robustness, and strong results makes it attractive for applications like security, search-and-rescue robots, and agricultural drones, where both cost and speed matter. In simple terms, the paper shows how a clever blend of old image tricks and modern AI can turn cheap, blurry thermal cameras into tools that see heat with much finer detail.

Citation: Kasliwal, A., Gakhar, I., Kamani, A. et al. Laplacian reconstructive network for guided thermal super-resolution. Sci Rep 16, 5665 (2026). https://doi.org/10.1038/s41598-026-36027-x

Keywords: thermal imaging, super-resolution, multimodal fusion, computer vision, drones and robotics