Clear Sky Science

Utilising artificial intelligence to identify surgical anatomy during laparoscopic donor nephrectomy – a validation and feasibility study

Smarter Tech for a High‑Stakes Gift

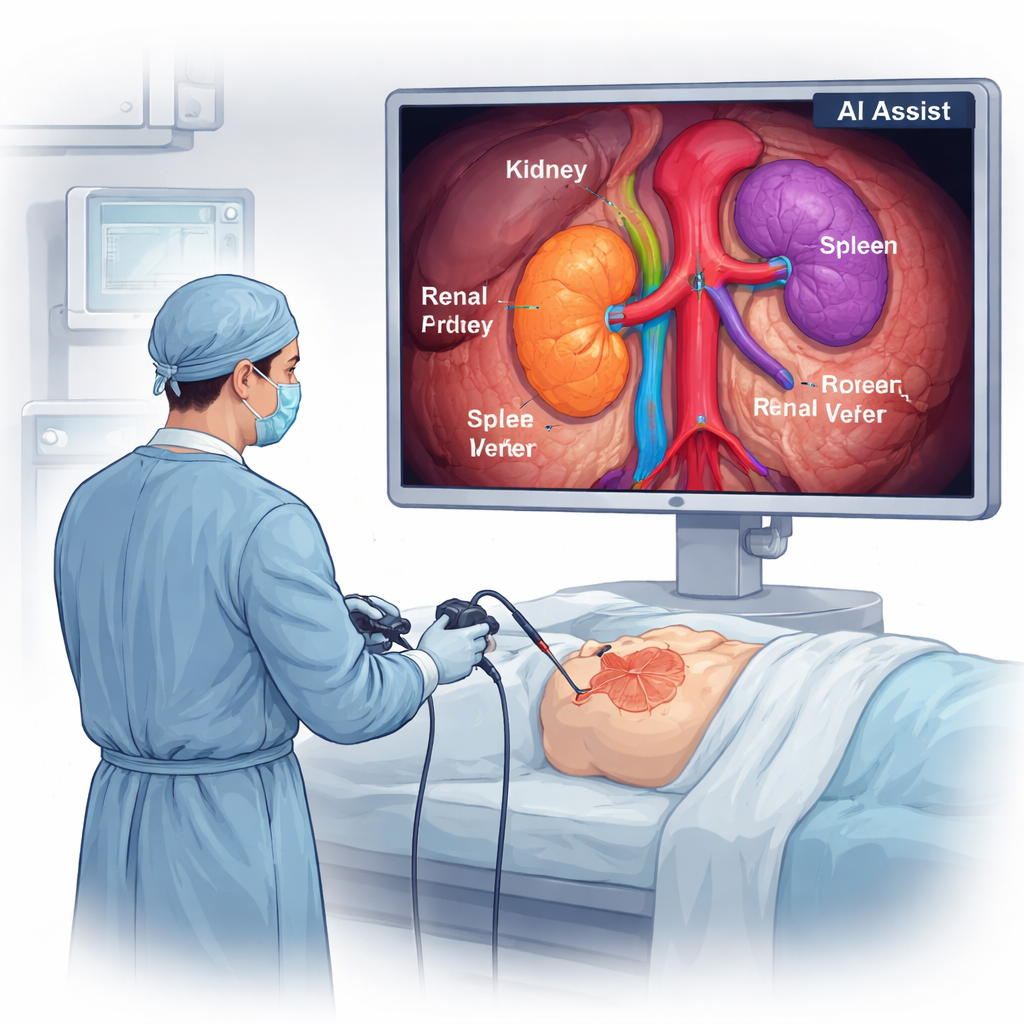

Laparoscopic donor nephrectomy is the minimally invasive operation used when a healthy person donates a kidney—a selfless act where any surgical mistake is especially hard to accept. This study explores how artificial intelligence (AI) can help surgeons see vital structures more clearly during this delicate procedure, aiming to reduce risk for donors and improve training for future surgeons.

Why Donor Kidney Surgery Needs Extra Care

Living kidney donors are often perfectly healthy people choosing surgery purely to help someone else. While laparoscopic techniques have already made donation safer and recovery faster, the operation still involves working in a cramped space around arteries, veins, and the ureter that must not be damaged. Surgeons currently rely on their own eyes and experience to recognize these structures on a video screen. An AI “co‑pilot” that can reliably point out key anatomy in real time could help prevent slips, especially for less‑experienced surgeons or in difficult cases.

Teaching a Computer to See Inside the Body

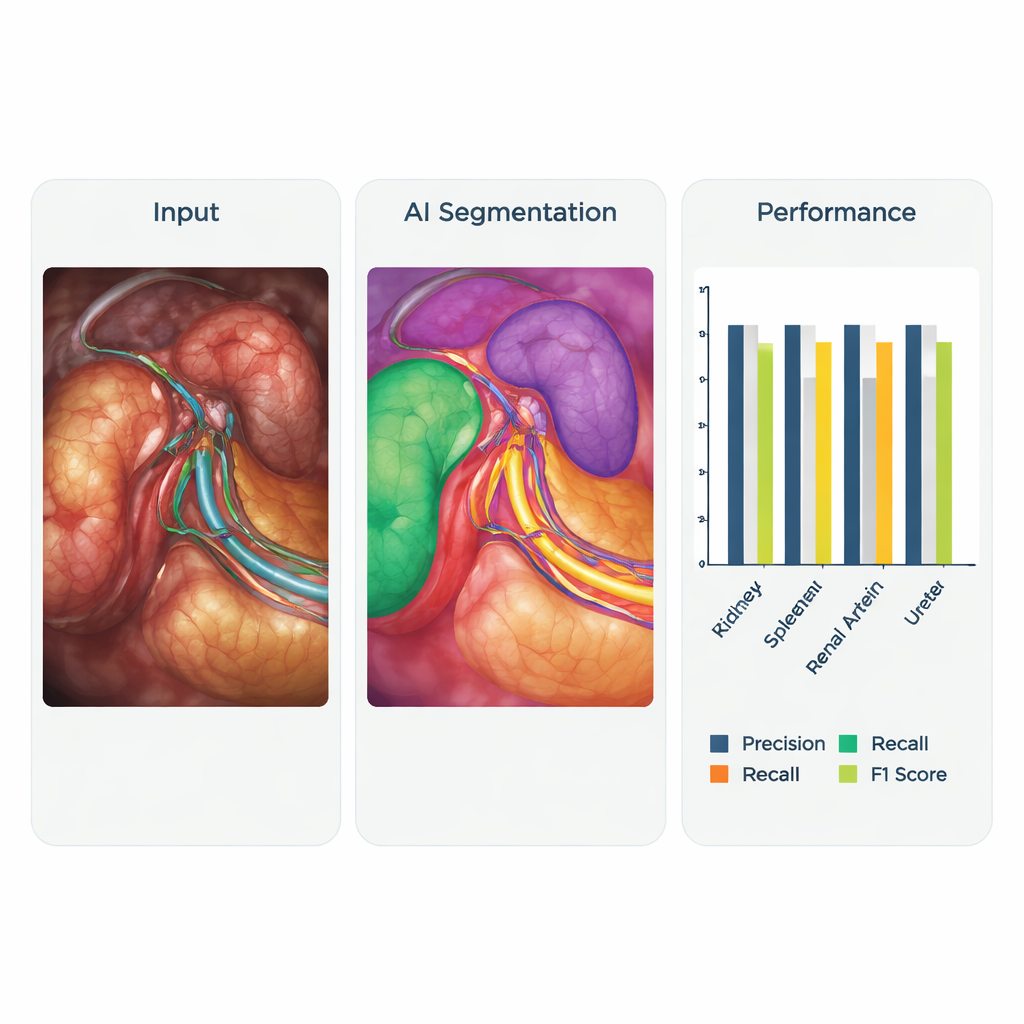

The research team partnered with a medical technology company to build a computer vision system that can recognize important structures during left kidney donation surgery. They collected video recordings from 30 operations and focused on moments when the surrounding fat had already been cleared, making the kidney, spleen, main blood vessels, and ureter visible. From these, they extracted thousands of still images. Each image was carefully labeled pixel by pixel by an anatomist and then double‑checked by an experienced transplant surgeon. These annotated images served as “answer keys” for training the AI. The system was built on a modern deep learning model originally designed to detect objects quickly in images, then adapted to outline individual organs and vessels on each surgical frame.

How the System Was Trained and Tested

The researchers used 6,828 labeled images from 16 surgeries to teach the AI and set aside another 1,757 images from four different surgeries to test how well it had learned. They deliberately gave extra weight to the most critical structures (the renal artery, renal vein, and ureter) so the model would pay special attention to them. During training, the program repeatedly compared its guesses to the expert labels, adjusting itself to reduce errors. The core question was whether, when shown a new image, the AI could correctly highlight the spleen, kidney, major vessels, and ureter without missing them or confusing one structure for another.
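For readers who like to see the idea in code, here is a minimal Python sketch of the two choices described above: keeping whole surgeries out of the training set, and counting mistakes on critical structures more heavily. By image count, the held-out set is about 20% of the total (1,757 of 8,585). The function names, the toy frame list, and the weight values are all hypothetical illustrations; the article does not report the study's actual weights or code.

```python
def split_by_surgery(frames, test_surgeries):
    """Split (surgery_id, frame_name) pairs so that every frame from a
    held-out surgery lands in the test set and nowhere else."""
    train, test = [], []
    for surgery_id, frame_name in frames:
        if surgery_id in test_surgeries:
            test.append((surgery_id, frame_name))
        else:
            train.append((surgery_id, frame_name))
    return train, test


# Hypothetical weights: critical structures count double. The real values
# used in the study are not given in the article.
CLASS_WEIGHTS = {
    "spleen": 1.0,
    "kidney": 1.0,
    "renal_artery": 2.0,
    "renal_vein": 2.0,
    "ureter": 2.0,
}


def weighted_error(per_class_error, weights=CLASS_WEIGHTS):
    """Average the per-structure errors, giving heavier structures more say."""
    total_weight = sum(weights[name] for name in per_class_error)
    weighted_sum = sum(weights[name] * err for name, err in per_class_error.items())
    return weighted_sum / total_weight


# Toy data: 5 surgeries with 3 frames each; surgery 5 is held out for testing.
frames = [(s, f"frame_{i}") for s in range(1, 6) for i in range(3)]
train, test = split_by_surgery(frames, test_surgeries={5})
print(len(train), len(test))

# An error on the ureter pulls the average up more than one on the kidney.
print(weighted_error({"kidney": 0.1, "ureter": 0.4}))
```

Splitting by surgery rather than by individual image matters because consecutive video frames look nearly identical; if frames from one operation appeared in both sets, the test would overstate how well the system handles a patient it has never seen.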

How Well the AI Co‑Pilot Performed

When tested, the AI system showed encouraging accuracy. It was especially strong at spotting the spleen and the main blood vessels supplying the kidney. For the left kidney, renal artery, and renal vein, the system struck a good balance between not falsely labeling background tissue (a property known as precision) and not overlooking the structures it was supposed to find (known as recall). These results meet commonly accepted thresholds for real‑time detection in surgery. The ureter, a thin tube that drains urine from the kidney, proved harder to detect, likely because it is narrow, mobile, and similar in color to nearby tissues, and also because it appeared less often in the training images. Beyond static tests, the team also trialed the system live in the operating room and on videos from another hospital in a different country. The AI still identified key anatomy and even picked up an unusual pattern of dual arteries, suggesting it may generalize beyond the original training center.
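The balance described above, between falsely labeling background and missing a real structure, is usually expressed with three standard numbers: precision, recall, and their combination, the F1 score. The sketch below computes them for one structure from a tiny made-up pair of pixel masks (1 means "this pixel belongs to the structure"). It illustrates the general idea only; the article does not say exactly which metrics or thresholds the study reported, and the function name and masks here are hypothetical.

```python
def precision_recall_f1(predicted, truth):
    """Compare two same-sized 0/1 masks pixel by pixel."""
    tp = fp = fn = 0
    for pred_row, true_row in zip(predicted, truth):
        for p, t in zip(pred_row, true_row):
            if p and t:
                tp += 1          # structure correctly highlighted
            elif p and not t:
                fp += 1          # background falsely labeled
            elif t and not p:
                fn += 1          # real structure overlooked
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# Made-up 2x4 masks: the expert's label and the model's guess.
truth = [
    [0, 1, 1, 0],
    [0, 1, 1, 0],
]
predicted = [
    [0, 1, 1, 1],   # one background pixel wrongly highlighted
    [0, 1, 0, 0],   # one true pixel missed
]

precision, recall, f1 = precision_recall_f1(predicted, truth)
print(precision, recall, f1)  # 0.75 0.75 0.75
```

A thin structure like the ureter covers very few pixels, so a handful of missed or misplaced pixels shifts these scores far more than the same error would on a large organ such as the spleen.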

What This Could Mean for Surgeons and Patients

Although the system is not yet ready to serve as a full real‑time navigation tool, this work marks an important first step. Being able to outline crucial anatomy reliably opens the door to several applications: on‑screen guidance during tricky parts of surgery, automatic labeling of teaching videos for trainees, and more objective ways to assess surgical performance. Future improvements will require more diverse data from multiple hospitals, better handling of difficult structures like the ureter, and formal measurement of how fast and consistently the AI works frame by frame. Still, the central message is clear for non‑specialists: AI can already “see” much of what a trained surgeon sees, and with further refinement, systems like this could make the gift of kidney donation even safer.

Citation: Ong, C.S.H., Wong, H.P.N., Leung, M. et al. Utilising artificial intelligence to identify surgical anatomy during laparoscopic donor nephrectomy – a validation and feasibility study. Sci Rep 16, 7416 (2026). https://doi.org/10.1038/s41598-026-35999-0

Keywords: kidney donation, laparoscopic surgery, surgical artificial intelligence, computer vision in medicine, organ transplant safety