Clear Sky Science

Estimating cognitive workload in robot assisted surgery using time and frequency features from EEG epochs with random forest regression

Why mental effort in robot surgery matters

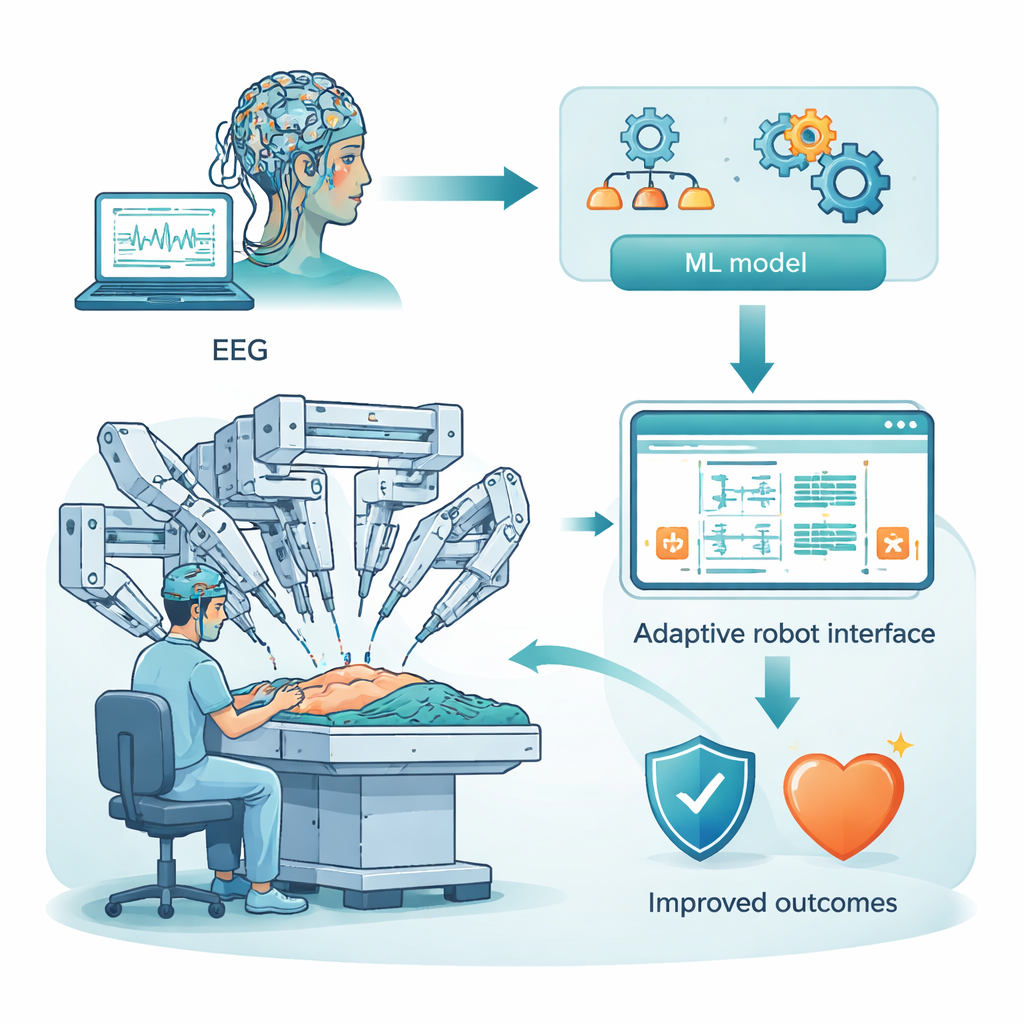

Robot-assisted surgery promises smaller cuts, faster recovery, and steadier hands. But behind the robot sits a human surgeon whose brain can become overloaded by complex visuals, controls, and high-stakes decisions. This study asks a simple question with big consequences: can we read a surgeon’s brain signals in real time to estimate how mentally taxed they are, and then use that information to keep them sharp and patients safe?

Listening to the brain during robot surgery

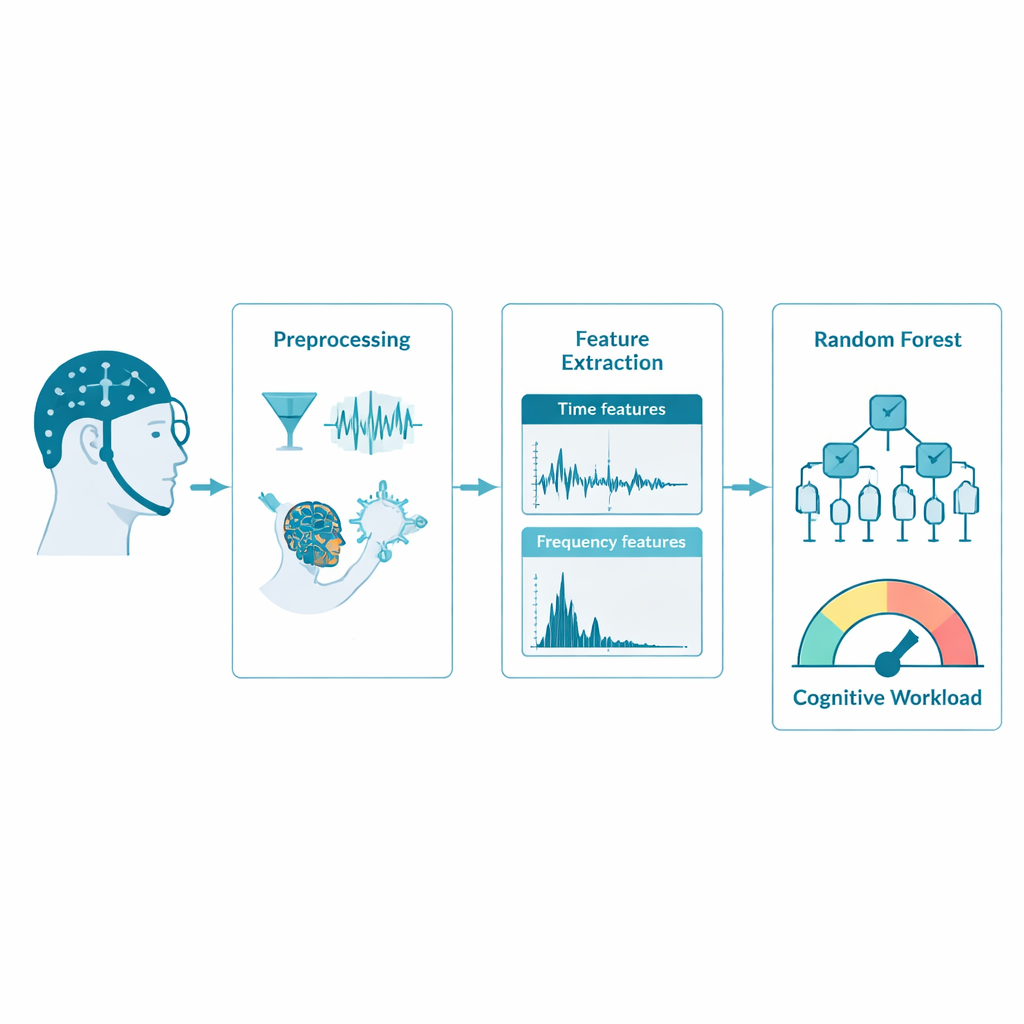

Instead of relying on checklists or gut feeling, the researchers turned to electroencephalography, or EEG, a technique that measures tiny electrical signals on the scalp. They used a public dataset of 25 people performing robot-assisted surgery tasks while wearing a cap with 128 electrodes. These sensors pick up rhythms from different brain areas: the front (planning and decision-making), sides (hearing and memory), top (touch and spatial awareness), and back (vision). The goal was to convert these raw, noisy squiggles into a continuous estimate of “cognitive workload,” meaning how hard the brain is working moment by moment.

Cleaning and condensing the brain’s signals

Raw EEG behaves more like a city’s electrical grid than a clear brain read-out: it is full of interference from blinking, muscle twitches, and power lines. The team first cleaned the data by filtering out unwanted frequencies and using a mathematical method called independent component analysis to remove eye and muscle artifacts. To make the system fast enough for near real-time use, they downsampled the recordings from 500 to 128 samples per second (500 Hz to 128 Hz). Careful comparisons of brain maps and spectrograms before and after this step showed that key patterns were preserved, so the signals stayed scientifically useful while becoming much cheaper to process.
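For readers who want to see what the filtering and downsampling steps might look like in practice, here is a minimal sketch in Python using NumPy and SciPy. It is not the authors' pipeline: the 1 to 45 Hz pass band, the filter order, and the function name `clean_and_downsample` are assumptions made for illustration, and the independent component analysis step is left out entirely. Only the 500 Hz and 128 Hz sampling rates come from the study as summarized here.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, resample_poly

FS_IN = 500   # original sampling rate reported in the summary (Hz)
FS_OUT = 128  # target sampling rate reported in the summary (Hz)

def clean_and_downsample(eeg, low_hz=1.0, high_hz=45.0):
    """Band-pass filter, then resample from FS_IN to FS_OUT.

    eeg: array of shape (n_channels, n_samples) sampled at FS_IN.
    The cutoffs are illustrative assumptions, not values from the study.
    """
    # Zero-phase Butterworth band-pass, applied along the time axis.
    sos = butter(4, [low_hz, high_hz], btype="bandpass", fs=FS_IN, output="sos")
    filtered = sosfiltfilt(sos, eeg, axis=-1)
    # 128/500 reduces to 32/125; resample_poly applies its own anti-aliasing filter.
    return resample_poly(filtered, up=32, down=125, axis=-1)

# Synthetic two-channel demo: a 10 Hz rhythm plus 50 Hz interference.
rng = np.random.default_rng(0)
t = np.arange(5 * FS_IN) / FS_IN
base = np.sin(2 * np.pi * 10 * t) + 0.5 * np.sin(2 * np.pi * 50 * t)
raw = np.vstack([base, base + 0.1 * rng.standard_normal(t.size)])

clean = clean_and_downsample(raw)
print(raw.shape, "->", clean.shape)
```

Five seconds of data shrink from 2,500 to 640 samples per channel, which is where the processing savings come from. A real pipeline would also need artifact removal, which dedicated EEG toolkits handle.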

Turning waves into meaningful numbers

Next, the researchers chopped the continuous EEG into one-second “epochs” and described each tiny segment using simple statistics and frequency content. Time-based features, such as average signal size, variability, asymmetry, and the number of times the wave crossed zero, captured the overall shape and energy of the brain activity. Frequency-based features focused on how much power lay in classic bands like theta (linked to effort and working memory), alpha (rest and inhibition), beta (active focus), and gamma (complex processing). Together, these numbers form a compact fingerprint of the brain’s state in each second, ready for a computer to learn from.
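The epoch-and-describe idea above can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the study's code: the band edges (theta 4 to 8 Hz, alpha 8 to 13 Hz, beta 13 to 30 Hz, gamma 30 to 45 Hz) are common conventions that the summary does not specify, and the function name `epoch_features` is hypothetical. The sketch works on a single channel sampled at 128 Hz.

```python
import numpy as np
from scipy.signal import welch
from scipy.stats import kurtosis, skew

FS = 128  # samples per second after downsampling
# Conventional band edges in Hz; assumed here, not taken from the study.
BANDS = {"theta": (4, 8), "alpha": (8, 13), "beta": (13, 30), "gamma": (30, 45)}

def epoch_features(signal, fs=FS):
    """Split a 1-D signal into one-second epochs and describe each one."""
    n_epochs = signal.size // fs
    epochs = signal[: n_epochs * fs].reshape(n_epochs, fs)
    rows = []
    for ep in epochs:
        centered = ep - ep.mean()
        # Count sign changes between neighbouring samples.
        sign = np.signbit(centered)
        feats = {
            "mean": float(ep.mean()),
            "std": float(ep.std()),
            "skew": float(skew(ep)),
            "kurtosis": float(kurtosis(ep)),
            "rms": float(np.sqrt(np.mean(ep ** 2))),
            "zero_crossings": int(np.sum(sign[:-1] != sign[1:])),
        }
        # Power spectral density of the epoch (1 Hz resolution here).
        freqs, psd = welch(ep, fs=fs, nperseg=fs)
        df = freqs[1] - freqs[0]
        for name, (lo, hi) in BANDS.items():
            mask = (freqs >= lo) & (freqs < hi)
            feats[f"{name}_power"] = float(psd[mask].sum() * df)
        rows.append(feats)
    return rows

# Demo: three seconds of a pure 10 Hz (alpha-range) rhythm.
t = np.arange(3 * FS) / FS
demo = np.sin(2 * np.pi * 10 * t + 0.3)
features = epoch_features(demo)
print(len(features), "epochs,", len(features[0]), "features each")
```

Each one-second epoch becomes a row of ten numbers. For the demo signal, nearly all of the band power lands in the alpha entry, as expected for a 10 Hz rhythm.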

Teaching a forest of decision trees to read workload

To link these EEG fingerprints to cognitive workload, the team used a machine-learning method called random forest regression. Instead of a single complex formula, this approach builds many simple decision trees that each make a prediction, then averages them into a more reliable “ensemble” answer. The model learned to predict how strongly each brain region was activated, treating that activation as a stand-in for mental effort. Across the frontal, temporal, parietal, and occipital areas, the model matched the true activation extremely well, with R² values (the share of variation in activation that the model explains) above 0.93 and especially strong results in the temporal region, which helps integrate sound, memory, and perception during demanding tasks.
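To make the random forest step concrete, the following sketch trains one with scikit-learn and scores it with R² on held-out data. Everything in it is synthetic: the feature matrix, the target formula, the number of trees, and the train/test split are assumptions for illustration, since the summary does not describe the study's settings. The result therefore says nothing about the paper's reported scores.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for per-epoch EEG features (rows = epochs).
rng = np.random.default_rng(42)
n_epochs, n_features = 600, 8
X = rng.normal(size=(n_epochs, n_features))
# Made-up "activation" target that depends on two of the features.
y = 2.0 * X[:, 0] + X[:, 1] ** 2 + 0.1 * rng.normal(size=n_epochs)

# Hold out a quarter of the epochs to check generalisation.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Many trees, each fit on a bootstrap sample; predictions are averaged.
model = RandomForestRegressor(n_estimators=200, random_state=0)
model.fit(X_train, y_train)

r2 = r2_score(y_test, model.predict(X_test))
print(f"held-out R^2: {r2:.3f}")
```

A random split is used here for brevity. With real EEG, neighbouring epochs from the same person are strongly related, so splitting by participant is usually a stricter test.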

What the model reveals about the working brain

By examining which features the random forest relied on most, the study also shed light on how the brain signals reflect mental strain. Measures of signal energy and burst-like spikes (root mean square and kurtosis), together with power in selected frequency bands, were particularly informative. Different regions emphasized different features: for example, fast beta and gamma activity in parietal and occipital areas lined up with heavy visual and spatial processing, while patterns in frontal signals mirrored decision-making load. These region-specific signatures suggest that the method could eventually be adapted to lighter-weight EEG caps that focus on only the most informative areas.
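One common way to see which features a random forest leans on is its built-in importance score, sketched below with scikit-learn. Whether the authors used this exact measure is not stated in the summary, so treat it as an assumption. The data are synthetic, and the target is deliberately built from the columns labelled `rms` and `beta_power` so that the ranking has a known answer.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Hypothetical feature names, echoing those mentioned in the article.
FEATURE_NAMES = ["rms", "kurtosis", "theta_power",
                 "alpha_power", "beta_power", "gamma_power"]

rng = np.random.default_rng(7)
X = rng.normal(size=(500, len(FEATURE_NAMES)))
# Synthetic target: driven mostly by "rms", a little by "beta_power".
y = 3.0 * X[:, 0] + 1.0 * X[:, 4] + 0.1 * rng.normal(size=500)

model = RandomForestRegressor(n_estimators=200, random_state=0).fit(X, y)

# Impurity-based importances sum to 1; larger means more heavily used.
ranked = sorted(
    zip(FEATURE_NAMES, model.feature_importances_),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, score in ranked:
    print(f"{name:12s} {score:.3f}")
```

Repeating such a ranking separately for each brain region is one way to arrive at region-specific signatures like those described above.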

From lab study to safer operating rooms

For non-specialists, the punchline is clear: the study outlines a practical recipe for turning scalp sensors and smart algorithms into a continuous “mental effort meter” for surgeons. While the current work uses archived data from a limited group of participants, it shows that with careful cleaning and feature design, a relatively simple machine-learning model can track cognitive workload with high precision and low computing cost. In the future, such systems could help robotic consoles automatically simplify displays, adjust pacing, or flag moments of overload, supporting surgeons’ minds as reliably as robots already support their hands.

Citation: Atheef G A, M., Powar, O.S. Estimating cognitive workload in robot assisted surgery using time and frequency features from EEG epochs with random forest regression. Sci Rep 16, 7624 (2026). https://doi.org/10.1038/s41598-026-35986-5

Keywords: cognitive workload, robot-assisted surgery, EEG monitoring, machine learning, brain-computer interface