Clear Sky Science · en

An IoT-based smart emotion recognition system by using internal body parameters

Why Your Emotions Might Soon Be a Vital Sign

Imagine your smartwatch quietly tracking not only your steps and heart rate, but also how stressed, calm, or joyful you feel throughout the day, and alerting your doctor before burnout or depression takes hold. This paper describes a step toward that future: a smart system that reads internal body signals, transmits them over an Internet of Things (IoT) network, and uses machine learning to infer which emotion you are experiencing in near real time.

Listening to Feelings Through the Body

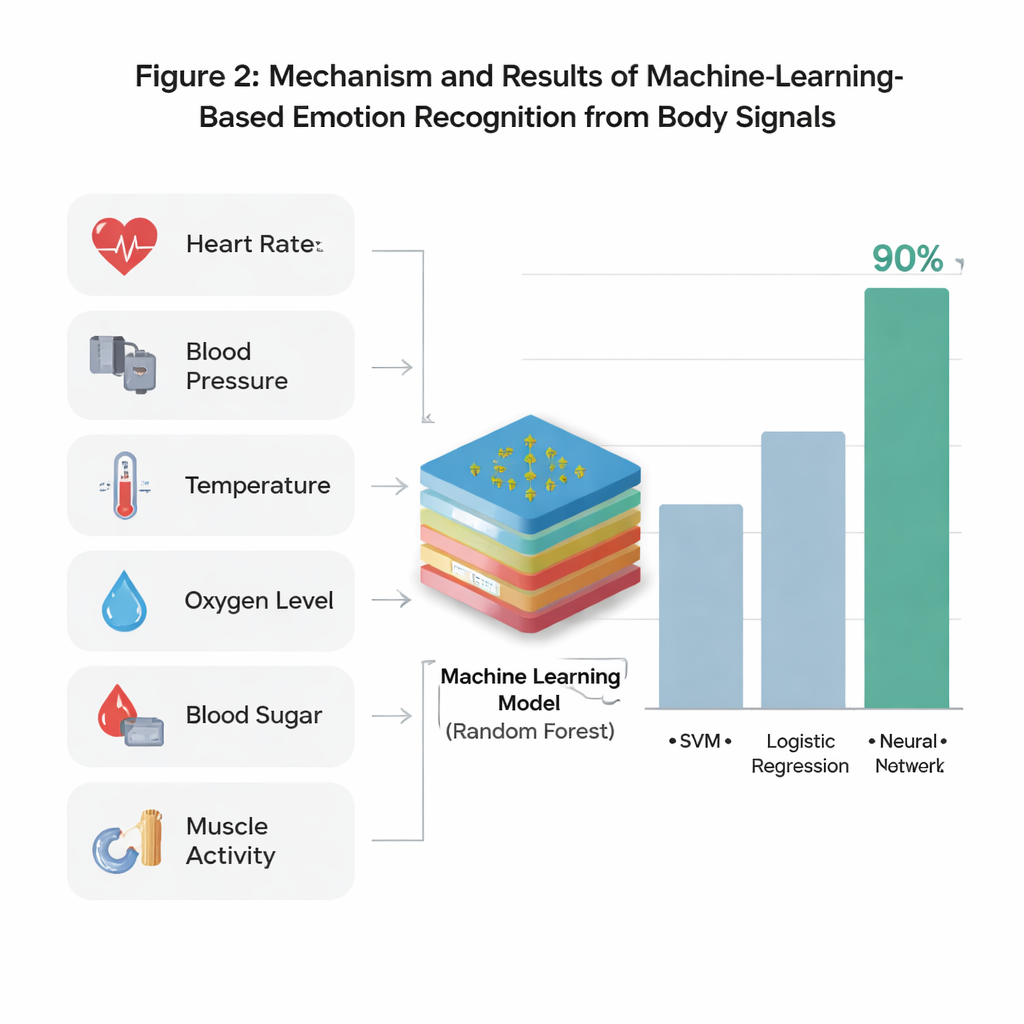

Our emotions do not live only on our faces or in our voices; they ripple through our bodies. When we are angry, our blood pressure can spike. Fear can quicken the pulse, while sadness may slow us down. The researchers behind this study set out to build a system that reads these inner ripples and translates them into six common emotional states: neutral, happy, sad, fearful, angry, and surprised. Instead of cameras or microphones, they rely on internal body measurements (heart rate, blood pressure, body temperature, blood sugar, oxygen saturation, and muscle activity) captured by wearable sensors and fed to a computer system for analysis.

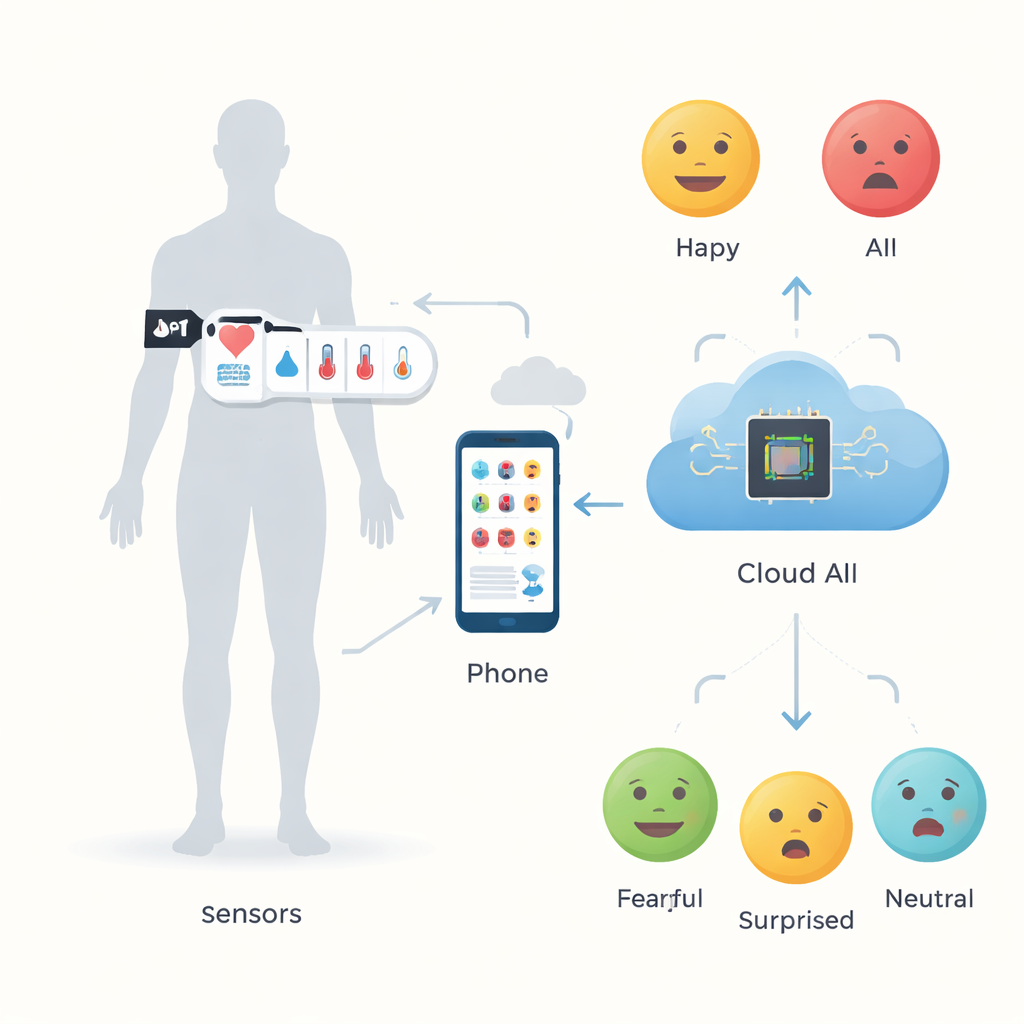

Turning a Wearable into an Emotion Radar

The team designed a multi-sensor armband paired with a small Wi‑Fi–enabled microcontroller. This device collects several streams of data at once: how fast the heart beats, how warm the skin is, how much oxygen the blood carries, how tense the muscles are, how high the blood pressure runs, and how blood sugar levels fluctuate. These signals travel wirelessly to a nearby phone or gateway and then to cloud-based servers. There, the data are cleaned (noise is removed, obvious errors are corrected, and units are normalized) before being stored in local and cloud databases. Medical experts helped define realistic ranges for each parameter and crafted rules that link specific patterns of body changes to likely emotions, creating a labeled dataset for training machine learning models.
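To make the cleaning step concrete, here is a minimal sketch of what removing noise, correcting obvious errors, and normalizing could look like for a single sensor channel. It is not the authors' pipeline: the channel names, the plausible ranges in `PLAUSIBLE_RANGES`, the forward-fill strategy, and the three-sample median filter are all assumptions chosen for illustration.

```python
from statistics import median

# Hypothetical plausible ranges, for illustration only (not the study's values).
PLAUSIBLE_RANGES = {
    "heart_rate_bpm": (30.0, 220.0),
    "skin_temp_c": (25.0, 42.0),
    "spo2_pct": (70.0, 100.0),
}

def drop_implausible(values, low, high):
    """Replace missing or out-of-range readings with None."""
    return [v if v is not None and low <= v <= high else None for v in values]

def fill_gaps(values):
    """Fill None entries with the most recent valid reading (forward fill)."""
    filled, last = [], None
    for v in values:
        if v is not None:
            last = v
        filled.append(last)
    # Back-fill any leading gaps with the first valid reading.
    first = next((v for v in filled if v is not None), None)
    return [first if v is None else v for v in filled]

def median_filter(values, window=3):
    """Smooth isolated spikes with a sliding median; edges use a shorter window."""
    half = window // 2
    return [
        median(values[max(0, i - half): i + half + 1])
        for i in range(len(values))
    ]

def min_max_scale(values, low, high):
    """Map readings from [low, high] onto [0, 1]."""
    return [(v - low) / (high - low) for v in values]

def clean_channel(name, values):
    """Range-check, gap-fill, smooth, and normalize one sensor channel."""
    low, high = PLAUSIBLE_RANGES[name]
    valid = drop_implausible(values, low, high)
    if all(v is None for v in valid):
        raise ValueError(f"no plausible readings in channel {name!r}")
    smoothed = median_filter(fill_gaps(valid))
    return min_max_scale(smoothed, low, high)

# A short heart-rate trace with a dropout (0) and a spike (300).
cleaned = clean_channel("heart_rate_bpm", [72, 74, 0, 73, 300, 75])
print([round(v, 3) for v in cleaned])
```

Scaling against fixed plausible ranges rather than each recording's own minimum and maximum keeps values comparable across wearers, which matters if one model is meant to serve everyone.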

Teaching Machines to Read Moods

With a large set of labeled examples in hand, the researchers tested eleven different machine learning methods to see which could best predict a person’s emotion from their internal readings. These included well-known techniques such as logistic regression, support vector machines, k‑nearest neighbors, neural networks, and several “ensemble” methods that combine many simple decision trees. The Random Forest approach, which takes a vote across many decision trees, came out on top. On their main dataset, it correctly identified the six emotions about 91% of the time in standard tests, and about 93% under k‑fold cross‑validation, a more rigorous check that repeatedly trains on most of the data and tests on the held-out remainder, giving a more reliable accuracy estimate and helping to expose overfitting.
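The k-fold cross-validation idea mentioned above can be sketched in a few lines. This example is only an illustration under stated assumptions: it uses a small k-nearest-neighbors classifier (one of the methods the article lists) as a stand-in rather than the study's Random Forest, and the data from `make_toy_data` are synthetic two-feature points, not the paper's dataset.

```python
import random
from collections import Counter

def k_fold_indices(n_samples, k, seed=0):
    """Shuffle sample indices and split them into k nearly equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]

def knn_predict(train_X, train_y, x, n_neighbors=3):
    """Classify x by majority vote among its nearest training points."""
    by_distance = sorted(
        range(len(train_X)),
        key=lambda i: sum((a - b) ** 2 for a, b in zip(train_X[i], x)),
    )
    votes = Counter(train_y[i] for i in by_distance[:n_neighbors])
    return votes.most_common(1)[0][0]

def cross_validate(X, y, k=5, seed=0):
    """Return the accuracy measured on each held-out fold."""
    scores = []
    for held_out in k_fold_indices(len(X), k, seed):
        held = set(held_out)
        train_X = [X[i] for i in range(len(X)) if i not in held]
        train_y = [y[i] for i in range(len(X)) if i not in held]
        correct = sum(
            knn_predict(train_X, train_y, X[i]) == y[i] for i in held_out
        )
        scores.append(correct / len(held_out))
    return scores

def make_toy_data(n_per_class=30, seed=1):
    """Synthetic, already-normalized readings for two emotion labels."""
    rng = random.Random(seed)
    centers = {"neutral": (0.3, 0.3), "fear": (0.7, 0.7)}  # made-up centers
    X, y = [], []
    for label, (cx, cy) in centers.items():
        for _ in range(n_per_class):
            X.append((rng.gauss(cx, 0.05), rng.gauss(cy, 0.05)))
            y.append(label)
    return X, y

X, y = make_toy_data()
scores = cross_validate(X, y, k=5)
print(f"mean accuracy over {len(scores)} folds: {sum(scores) / len(scores):.2f}")
```

Because every sample is held out exactly once, the averaged score depends less on one lucky or unlucky split than a single train/test partition does.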

Testing the System Beyond the Lab

To find out whether the model would work on people and situations beyond its training data, the team performed an external test using a widely used emotion-recognition benchmark called DEAP. In this setup, volunteers watched carefully selected videos meant to evoke different feelings, while their internal signals were measured with the same sensor configuration. The trained Random Forest model, without being retrained, was then asked to classify these new recordings. It achieved about 94% accuracy, with strong scores across all emotions, which the authors take as evidence that the system can generalize beyond its original sample. They argue that this validates both their choice of body signals and their overall design, which spans sensor hardware, IoT communication, cloud storage, and intelligent software.
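An external test of this kind comes down to scoring a frozen model on data it never saw and breaking the result down by emotion. The sketch below shows one way to compute overall accuracy plus per-emotion precision and recall. The function names and the toy threshold "model" are hypothetical stand-ins, not the authors' code or the DEAP data.

```python
from collections import Counter

def per_class_report(y_true, y_pred, labels):
    """Compute overall accuracy plus precision and recall for each label."""
    pairs = Counter(zip(y_true, y_pred))  # (true, predicted) -> count
    report = {}
    for label in labels:
        tp = pairs[(label, label)]
        predicted = sum(n for (_, p), n in pairs.items() if p == label)
        actual = sum(n for (t, _), n in pairs.items() if t == label)
        report[label] = {
            "precision": tp / predicted if predicted else 0.0,
            "recall": tp / actual if actual else 0.0,
        }
    accuracy = sum(pairs[(label, label)] for label in labels) / len(y_true)
    return accuracy, report

def evaluate_frozen_model(predict, external_X, external_y, labels):
    """Score an already-trained model on external data; nothing is refit."""
    predictions = [predict(x) for x in external_X]
    return per_class_report(external_y, predictions, labels)

# Toy stand-in for a trained model: a fixed threshold on one feature.
def toy_predict(x):
    return "happy" if x > 0 else "sad"

accuracy, report = evaluate_frozen_model(
    toy_predict,
    external_X=[1.0, -1.0, -0.5, -2.0],
    external_y=["happy", "happy", "sad", "sad"],
    labels=["happy", "sad"],
)
print(f"accuracy: {accuracy:.2f}")
for label, scores in report.items():
    print(label, scores)
```

Reporting precision and recall per emotion matters because a high overall accuracy can hide a class the model rarely gets right.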

From Research Prototype to Everyday Companion

For a non-specialist, the key takeaway is simple: your body’s hidden signals carry measurable clues about how you feel, and computers can learn to read them. This work shows that a network of wearable sensors, connected through the internet and analyzed with machine learning algorithms, can track emotions non-invasively and in near real time. The current system has limits, including a modest sample size and a focus on only six basic emotions, but it points toward future tools that might support mental health, personalize digital experiences, monitor lonely or vulnerable people at home, and make smart environments more responsive to our inner lives.

Citation: Rashid, T., Bajwa, I.S. & Kim, J. An IoT-based smart emotion recognition system by using internal body parameters. Sci Rep 16, 7210 (2026). https://doi.org/10.1038/s41598-026-35982-9

Keywords: emotion recognition, wearable sensors, physiological signals, Internet of Things, machine learning