Clear Sky Science

Quantum-enhanced multimodal prognostic transformer for skin disease progression prediction and visualization

Why predicting skin rashes matters

Skin rashes from infections like monkeypox, chickenpox, and measles can look confusingly similar, yet they call for very different medical responses. Doctors also need to know not just what the rash is today, but how it is likely to change in the coming days. This study introduces an experimental computer system that tries to do both at once: identify the disease and forecast how the skin lesions may progress, while also showing doctors what the system is "thinking." Although still far from use in the clinic, it sketches a possible future for smarter, more transparent tools in dermatology.

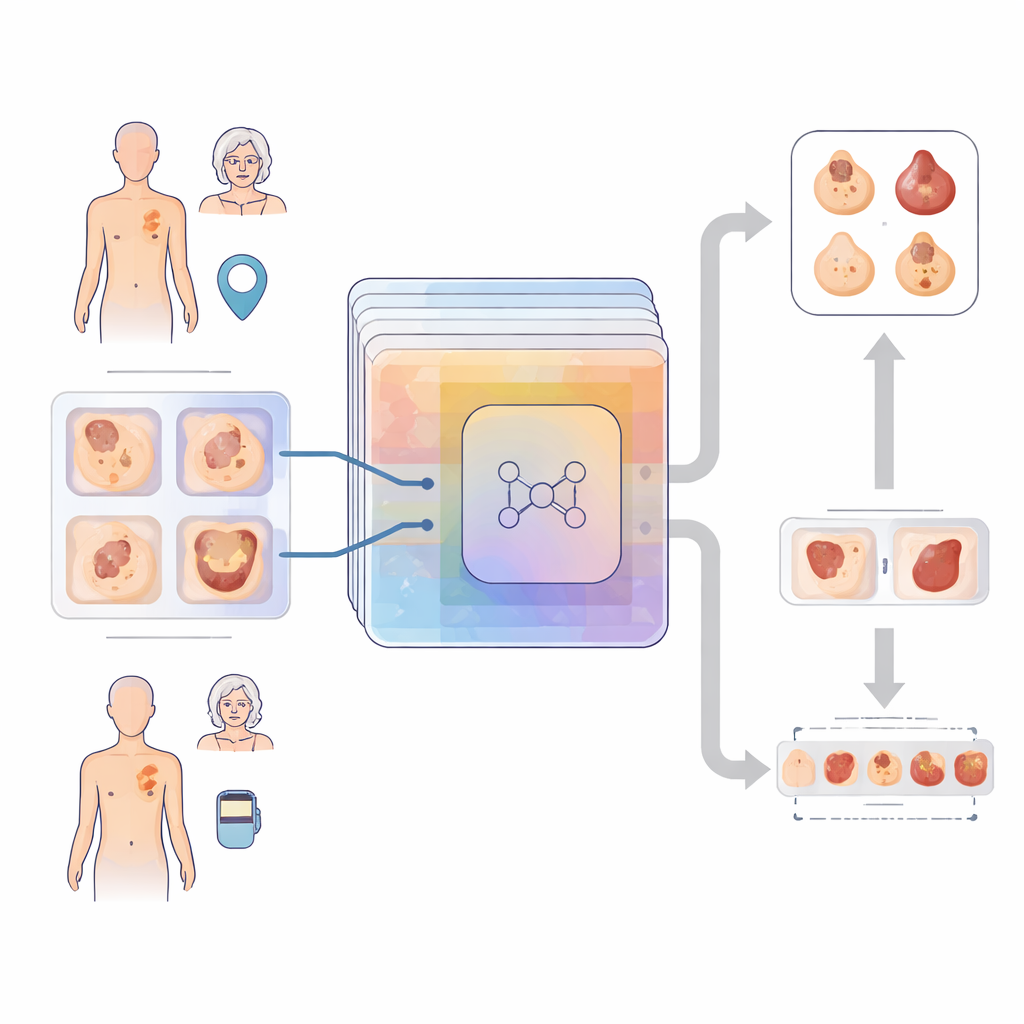

Bringing images and patient context together

The heart of the work is a model the authors call a Quantum-Enhanced Multimodal Prognostic Transformer. In plain terms, it is a layered network that takes two kinds of input: detailed photographs of skin lesions and basic patient information such as age and where on the body the rash appears. A component borrowed from cutting-edge image research, known as a vision transformer, scans the lesion images to capture patterns of color, texture, and shape across the whole image. In parallel, a simpler pathway turns the age and body-site data into a compact numerical description. These two streams are combined so the system can reason about the rash in the context of who it is affecting and where it appears.
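As a rough illustration of how two input streams can be merged, the sketch below encodes age and body site into a small vector and concatenates it with an image feature vector. This is a minimal sketch under stated assumptions, not the authors' implementation: the function names `embed_metadata` and `fuse`, the layer sizes, the random weights, and the choice of simple concatenation are all hypothetical, and a random vector stands in for the vision transformer's output.

```python
import numpy as np

rng = np.random.default_rng(0)

def embed_metadata(age_years, site_index, n_sites, w, b):
    """Turn age and body site into a compact vector (hypothetical encoder)."""
    site_onehot = np.zeros(n_sites)
    site_onehot[site_index] = 1.0
    # Scale age to roughly [0, 1] and append the one-hot body-site code.
    raw = np.concatenate(([age_years / 100.0], site_onehot))
    return np.tanh(w @ raw + b)

def fuse(image_features, meta_features):
    """Combine the two streams by concatenation (an assumed fusion rule)."""
    return np.concatenate((image_features, meta_features))

# Illustrative sizes only; none of these come from the paper.
n_sites, meta_dim, image_dim = 5, 8, 16
w = rng.normal(size=(meta_dim, 1 + n_sites))
b = np.zeros(meta_dim)

image_features = rng.normal(size=image_dim)  # stand-in for vision transformer output
meta_features = embed_metadata(age_years=34, site_index=2, n_sites=n_sites, w=w, b=b)
fused = fuse(image_features, meta_features)
print(fused.shape)
```

Concatenation is only one way to combine streams; it keeps both descriptions intact and leaves later layers to learn how they interact.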

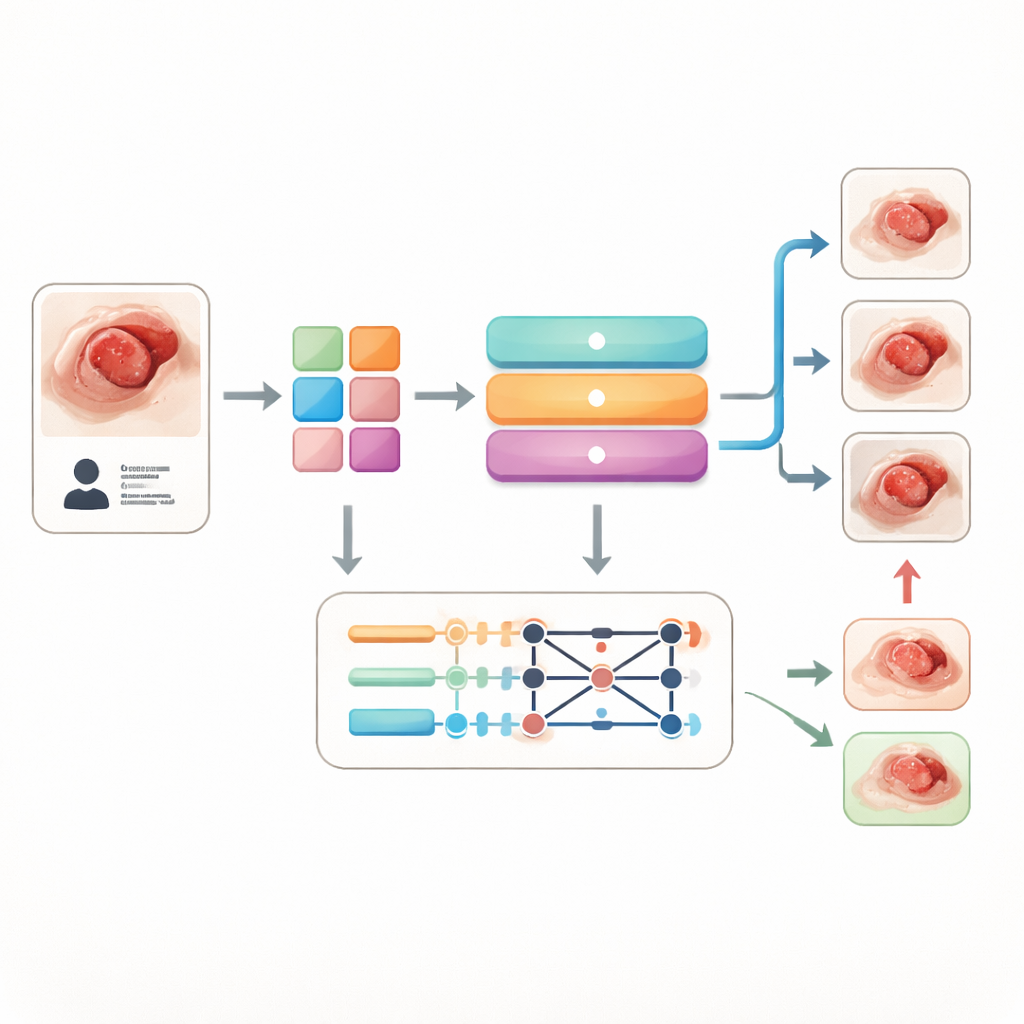

Looking ahead in time, even without time series

Real-world medical records that track the same skin lesion over many visits are scarce, so the authors faced a challenge: how to train a model to predict future stages of disease without true time-lapse data. Their solution is to build "pseudo-trajectories" in an abstract space of features. A recurrent module, of the kind used to analyze sequences such as speech, learns how features associated with milder lesions differ from those linked to more advanced ones. On top of this, a generative module is trained to imagine what the lesion might look like if it worsens or improves, producing synthetic images of potential futures. This ability to visualize hypothetical outcomes could one day help clinicians compare different treatment paths, provided it is validated on real follow-up data.
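One way to picture a pseudo-trajectory is as a path drawn between the average features of mild lesions and the average features of advanced ones, which a recurrent cell then reads step by step. The sketch below is an assumption made for illustration: the summary does not say how the authors construct their trajectories, so the linear interpolation, the plain Elman-style recurrence, the synthetic features, and every name and size here are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)

def pseudo_trajectory(mild_centroid, advanced_centroid, n_steps):
    """Interpolate between two feature centroids to mimic a time-ordered sequence."""
    alphas = np.linspace(0.0, 1.0, n_steps)
    return np.stack([(1 - a) * mild_centroid + a * advanced_centroid for a in alphas])

def run_recurrent(sequence, w_in, w_rec, b):
    """Plain Elman-style recurrence; returns the hidden state after each step."""
    h = np.zeros(w_rec.shape[0])
    states = []
    for x in sequence:
        h = np.tanh(w_in @ x + w_rec @ h + b)
        states.append(h)
    return np.stack(states)

feat_dim, hidden_dim, n_steps = 6, 4, 5

# Synthetic stand-ins for features of mild and advanced lesions.
mild_features = rng.normal(loc=0.0, size=(20, feat_dim))
advanced_features = rng.normal(loc=2.0, size=(20, feat_dim))

trajectory = pseudo_trajectory(
    mild_features.mean(axis=0), advanced_features.mean(axis=0), n_steps
)

# Untrained random weights; a real model would learn these.
w_in = rng.normal(scale=0.5, size=(hidden_dim, feat_dim))
w_rec = rng.normal(scale=0.5, size=(hidden_dim, hidden_dim))
b = np.zeros(hidden_dim)

states = run_recurrent(trajectory, w_in, w_rec, b)
print(trajectory.shape, states.shape)
```

Because the sequence is manufactured rather than observed, anything a model learns from it reflects the interpolation rule as much as the disease, which is why validation on real follow-up data matters.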

Adding a touch of quantum flavor

An eye-catching aspect of the framework is a small "quantum-inspired" layer. Instead of running on a full-scale quantum computer, it uses a simulated quantum circuit inserted between the temporal module and the final decision layers. This circuit transforms the model’s internal features in a way that encourages complex interactions among them, a bit like stirring ingredients together more thoroughly. In tests, adding this quantum-style block gave a modest but consistent boost in accuracy for both disease type and stage prediction, while keeping the overall model size relatively compact. The authors stress that this is about exploring new ways to represent data, not about claiming a speedup over classical hardware.
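To make "simulated quantum circuit" concrete, the sketch below runs a tiny circuit entirely in NumPy: each input feature sets a rotation angle on one qubit, a ring of CNOT gates entangles the qubits, a second set of rotations plays the role of trainable weights, and the output is the average Z measurement of each qubit. The circuit layout, the gate choices, the four-qubit size, and all function names are assumptions for illustration; the summary does not describe the authors' actual circuit.

```python
import numpy as np

def ry(theta):
    """Single-qubit rotation about the Y axis."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

def apply_single_qubit(state, gate, qubit, n_qubits):
    """Apply a 2x2 gate to one qubit of a state vector."""
    psi = state.reshape([2] * n_qubits)
    psi = np.tensordot(gate, psi, axes=([1], [qubit]))
    return np.moveaxis(psi, 0, qubit).reshape(-1)

def apply_cnot(state, control, target, n_qubits):
    """Flip the target qubit wherever the control qubit is 1."""
    psi = state.reshape([2] * n_qubits).copy()
    index = [slice(None)] * n_qubits
    index[control] = 1
    index = tuple(index)
    # The control axis is dropped by the integer index, so later axes shift down.
    axis = target if target < control else target - 1
    psi[index] = np.flip(psi[index], axis=axis).copy()
    return psi.reshape(-1)

def z_expectations(state, n_qubits):
    """Expectation value of Pauli-Z on each qubit, each in [-1, 1]."""
    probs = np.abs(state.reshape([2] * n_qubits)) ** 2
    out = []
    for q in range(n_qubits):
        other_axes = tuple(a for a in range(n_qubits) if a != q)
        p = probs.sum(axis=other_axes)
        out.append(p[0] - p[1])
    return np.array(out)

def quantum_layer(features, weights):
    """Encode features as angles, entangle in a ring, rotate by weights, measure.

    Assumes at least two qubits (one per feature).
    """
    n = len(features)
    state = np.zeros(2 ** n)
    state[0] = 1.0  # start in |00...0>
    for q in range(n):
        state = apply_single_qubit(state, ry(features[q]), q, n)
    for q in range(n):
        state = apply_cnot(state, q, (q + 1) % n, n)
    for q in range(n):
        state = apply_single_qubit(state, ry(weights[q]), q, n)
    return z_expectations(state, n)

features = np.array([0.3, 1.2, -0.7, 2.0])   # illustrative inputs
weights = np.array([0.1, -0.4, 0.8, 0.5])    # illustrative, untrained
out = quantum_layer(features, weights)
print(out)
```

Everything here is ordinary linear algebra on a classical machine, which matches the article's point that such a layer is a different way of mixing features rather than a source of speedup.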

Seeing what the model sees

Because medical AI must earn clinicians’ trust, the team devoted substantial effort to explainability. They use attention maps from the vision transformer to highlight which regions of a lesion image most influenced a prediction, and mathematical techniques to estimate how strongly each piece of metadata, such as age or lesion site, affected the outcome. They also project the model’s internal representations into two dimensions, where clusters of points correspond to different diseases or stages, giving a visual sense of how well the system separates similar conditions. Additional tools generate "counterfactual" versions of lesions that show how small changes in appearance might push the model toward a different diagnosis, helping users understand its decision boundaries.
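The two-dimensional view of internal representations can be sketched with a basic projection. The summary does not say which projection method the authors used, so principal component analysis (PCA) is used here as a simple stand-in, and the two synthetic clusters are invented purely to show the idea; the function name `pca_2d` is hypothetical.

```python
import numpy as np

def pca_2d(features):
    """Project rows of `features` onto their first two principal components."""
    centered = features - features.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:2].T

rng = np.random.default_rng(2)

# Two synthetic clusters standing in for embeddings of two diseases.
cluster_a = rng.normal(loc=0.0, scale=0.5, size=(50, 10))
cluster_b = rng.normal(loc=3.0, scale=0.5, size=(50, 10))

points = pca_2d(np.vstack((cluster_a, cluster_b)))
print(points.shape)
```

Plotting the first 50 rows of `points` in one color and the last 50 in another would show two separate clouds; with real embeddings, overlap between clouds would hint at conditions the model finds hard to tell apart.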

Promise, but not yet a clinic-ready tool

On a dataset of about 4,200 public skin images, enriched with simulated age and body-location information, the model correctly identified the disease in nearly nine out of ten cases and predicted the assigned stage with only slightly lower accuracy. It outperformed several strong conventional networks under the same testing setup. Still, the authors are careful to describe their work as a proof of concept. The stage labels and patient data were generated using simple rules rather than real clinical records, and no dermatologist panel confirmed the ground truth. As a result, the system’s current success mainly shows that this combination of image analysis, patient context, quantum-inspired layers, and explainability tools is technically feasible. Turning it into a reliable assistant for doctors will require rigorously labeled, longitudinal patient data and thorough clinical validation.

Citation: Aravinda, C.V., Raja, J.E. & Alasmari, S. Quantum-enhanced multimodal prognostic transformer for skin disease progression prediction and visualization. Sci Rep 16, 8351 (2026). https://doi.org/10.1038/s41598-026-35951-2

Keywords: skin disease AI, dermatology imaging, disease progression, quantum-inspired learning, medical explainable AI