Clear Sky Science

Multi-agent coordination and uncertainty adaptation in deep learning–assisted hierarchical optimization for renewable-dominated distribution networks

Why smarter local grids matter

As more homes, farms, and small businesses add rooftop solar panels, wind turbines, and batteries, local power grids are becoming cleaner—but also harder to manage. Sunshine and wind change by the minute, and electricity demand in rural counties can swing with weather, seasons, and human behavior. This paper explores how a new kind of "smart" control system, powered by deep learning and multi‑agent decision making, can keep these renewable‑heavy local grids reliable, affordable, and low‑carbon even when conditions are highly uncertain.

The challenge of juggling sun, wind, and demand

Traditional grid planning assumes that engineers know roughly how much power will be needed and how much will be available from generators. That assumption breaks down when a county network depends heavily on rooftop solar, small wind farms, batteries, and flexible farm or household loads. The output from these devices can rise and fall quickly, and in rural areas the grid layout is irregular and the monitoring sparse. Existing tools either ignore this uncertainty or rely on fixed "what‑if" scenarios that cannot keep up with changing weather and demand patterns. As a result, operators risk blackouts, voltage problems, higher bills, or wasting clean energy by curtailing renewables.

Teaching the grid to understand its own uncertainty

The authors propose a framework they call Deep‑DRO that teaches the grid to recognize and adapt to uncertainty rather than simply endure it. First, advanced deep learning models digest large amounts of historical weather, solar output, wind speed, and demand data. A graph‑based network captures how different locations in the grid influence each other, while a Transformer model tracks patterns over time, such as daily and seasonal cycles. Together, they do more than forecast a single "best guess" for future conditions: they also estimate how far off those forecasts might be and how different uncertainties are linked across space and time.
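To make "a forecast plus an estimate of how far off it might be" concrete, here is a minimal sketch in Python. It is not the graph or Transformer model described in the paper. It only shows the general idea of wrapping a point forecast in an error band derived from past forecast errors. The function name `forecast_interval`, the multiplier `z`, and all numbers are illustrative assumptions.

```python
from statistics import mean, stdev

def forecast_interval(point_forecast, past_errors, z=1.96):
    """Return (low, high) around a point forecast.

    past_errors: historical (actual - forecast) values, at least two.
    z: hypothetical width multiplier; 1.96 gives roughly 95% coverage
       only if the errors are approximately normal (an assumption).
    """
    bias = mean(past_errors)      # systematic over- or under-forecasting
    spread = stdev(past_errors)   # sample standard deviation of the errors
    center = point_forecast + bias
    return center - z * spread, center + z * spread

# Made-up numbers: a 50 kW solar forecast with past errors of -1, 0, +1 kW.
low, high = forecast_interval(50.0, [-1.0, 0.0, 1.0], z=2.0)
print(low, high)
```

A wider band signals a less trustworthy forecast, which is the kind of signal a downstream decision layer can use to act more cautiously.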

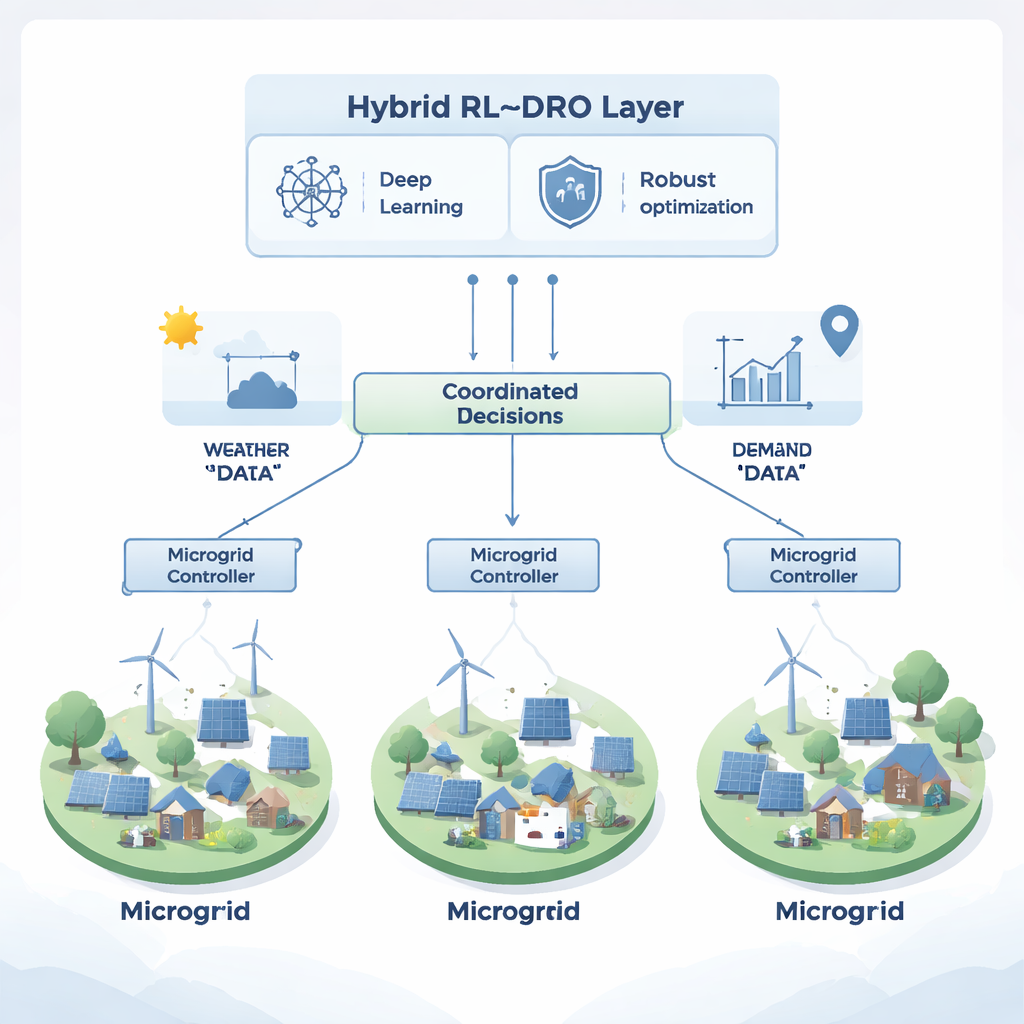

Many decision makers working together

On top of this forecasting layer, the authors build a hierarchy of decision‑making software agents that mimic the structure of a real distribution system. One agent oversees the county as a whole, others manage individual feeders, and local agents represent clusters of distributed energy resources such as solar arrays, batteries, and flexible loads. Using multi‑agent reinforcement learning, these agents learn through trial and error in a simulated environment. They adjust battery charging, power exchanges between microgrids, and demand response, receiving rewards when they lower costs, keep voltages within safe limits, and maintain enough reserves to handle surprises. A federated learning scheme lets agents share what they learn without centralizing all the raw data, reflecting real‑world communication limits.
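The idea of sharing what agents learn without sharing raw data can be sketched with federated averaging, a standard technique in which each agent sends only its model parameters and a coordinator combines them. The article does not say which federated scheme the authors use, so the sketch below is an assumption chosen for illustration, and `federated_average` and the sample numbers are hypothetical.

```python
def federated_average(local_weights, sample_counts):
    """Combine per-agent parameter vectors into one shared vector.

    local_weights: list of equal-length lists of floats, one per agent.
    sample_counts: how many local observations each agent trained on;
                   agents with more data get proportionally more say.
    Only parameters are exchanged; the raw measurements stay local.
    """
    total = sum(sample_counts)
    dim = len(local_weights[0])
    return [
        sum(w[i] * n for w, n in zip(local_weights, sample_counts)) / total
        for i in range(dim)
    ]

# Two feeder agents with made-up two-parameter models.
shared = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(shared)
```

Weighting by sample count keeps an agent that has seen very little data from pulling the shared model too far in its direction.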

Building "just‑right" protection against bad days

The third piece of the framework is a distributionally robust optimization (DRO) layer that acts like a cautious supervisor. Instead of trusting a single probability forecast, it considers a whole family of plausible futures around what the deep learning model predicts. Crucially, the size of this family expands when the model detects more unpredictable behavior and shrinks when conditions are stable. This means the system automatically becomes more conservative during stormy or highly variable periods and more cost‑efficient when the outlook is calm. The DRO layer evaluates candidate actions from the learning agents and penalizes strategies that look fragile under worst‑case, but still realistic, conditions.
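The expanding and shrinking "family of plausible futures" can be illustrated with a small sketch. It assumes the family is a Wasserstein‑type ball around the forecast scenarios, for which the worst‑case expected cost of an action is bounded above by its average scenario cost plus its sensitivity (a Lipschitz constant) times the ball's radius. The article does not state which kind of ambiguity set the authors use, so this choice, the linear radius rule, and every name and number below are assumptions.

```python
from statistics import mean

def adaptive_radius(predicted_std, scale=1.0):
    """Hypothetical rule: the radius grows linearly with forecast spread."""
    return scale * predicted_std

def worst_case_cost(scenario_costs, lipschitz, radius):
    """Upper bound on expected cost over a Wasserstein-1 ball.

    lipschitz: how sharply the action's cost reacts to forecast errors.
    """
    return mean(scenario_costs) + lipschitz * radius

def pick_action(candidates, predicted_std):
    """Return the name of the candidate with the lowest worst-case cost."""
    radius = adaptive_radius(predicted_std)
    best = min(
        candidates,
        key=lambda c: worst_case_cost(c["costs"], c["lipschitz"], radius),
    )
    return best["name"]

# Made-up candidates: "lean" is cheaper on average but fragile,
# "cautious" costs more on average but barely reacts to surprises.
CANDIDATES = [
    {"name": "lean", "costs": [10, 11, 9], "lipschitz": 5.0},
    {"name": "cautious", "costs": [12, 12, 12], "lipschitz": 1.0},
]

print(pick_action(CANDIDATES, predicted_std=0.2))  # calm outlook
print(pick_action(CANDIDATES, predicted_std=1.0))  # volatile outlook
```

With these numbers the two options break even at a radius of 0.5, so the cheaper but fragile schedule is chosen when the outlook is calm and the cautious one when it is volatile.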

What the simulations reveal

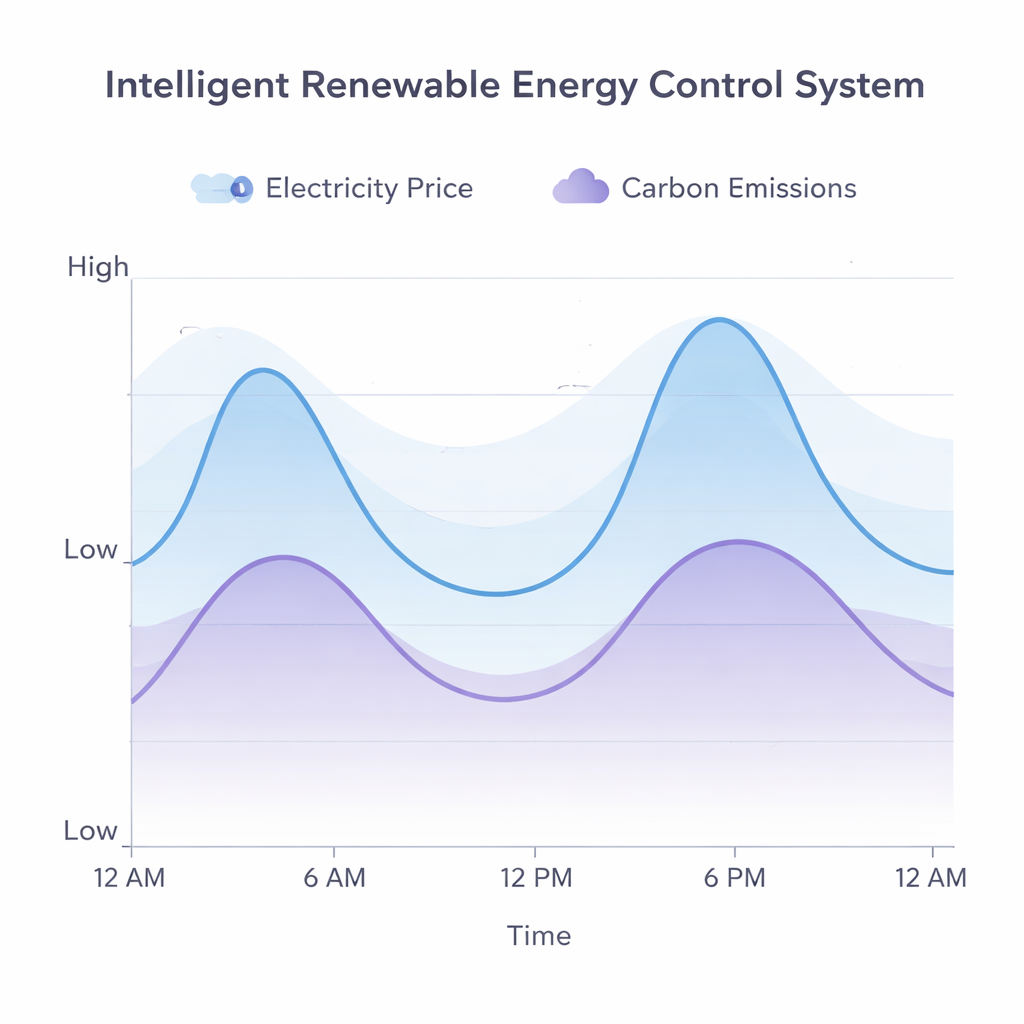

To test the idea, the researchers simulate a network of three interconnected microgrids serving mixed rural loads, each with its own blend of solar, wind, biomass, and batteries. They compare six control strategies, ranging from a simple deterministic schedule to classical risk‑aware optimization and various learning‑based controllers. Across a year of high‑resolution data, the fully integrated Deep‑DRO system cuts operating costs by about a quarter, boosts a reliability index from 0.76 to 0.91, and lowers carbon emissions by nearly 30 percent relative to the simplest baseline. It remains stable even when the underlying uncertainty is artificially increased, and it learns to time battery charging and power exchanges to take advantage of cleaner, cheaper periods while avoiding risky tight‑margin operation.

A smarter path to resilient clean energy

For non‑experts, the key message is that making local grids clean is no longer just about adding more solar panels or batteries—it is about making the control system intelligent enough to anticipate and adapt to uncertainty. By fusing deep learning, cooperative decision‑making among many agents, and a built‑in sense of caution about bad outcomes, the proposed Deep‑DRO framework shows how counties and rural regions could run high‑renewable networks that are both economical and resilient. In practice, this approach could help keep the lights on, reduce bills, and cut emissions, even as our electricity supply becomes more weather‑dependent and decentralized.

Citation: Zheng, Y., Li, H., Wang, S. et al. Multi-agent coordination and uncertainty adaptation in deep learning–assisted hierarchical optimization for renewable-dominated distribution networks. Sci Rep 16, 5176 (2026). https://doi.org/10.1038/s41598-026-35945-0

Keywords: renewable energy grids, multi-agent reinforcement learning, distributionally robust optimization, smart microgrids, deep learning forecasting