Clear Sky Science

Assessment of influencing factors of college and universities’ teaching effects using fuzzy and deep learning techniques

Why Better Teaching Measurements Matter

Anyone who has sat in both great and not-so-great classes knows that teaching quality can make or break a college experience. Yet most universities still lean on blunt tools like test scores and end-of-term surveys to judge what works. This paper explores a smarter way to measure how well colleges are teaching by combining two computer-based methods—one that deals well with fuzzy, human data and another that excels at spotting hidden patterns. Together, they promise more reliable guidance for improving courses and supporting students.

Rethinking How We Judge a “Good Class”

College teaching is shaped by many moving parts: how many students are in the room, how experienced the instructor is, how tough the course is, the classroom atmosphere, and the use of technology, to name a few. Traditional evaluation systems often reduce all of this to a single test score or a numerical course rating. That oversimplification misses important context and ignores the messy, subjective side of learning. The authors argue that if we want to understand why some classes help students thrive while others fall short, we need tools that can juggle many factors at once and cope with imperfect, opinion-based information.

A Hybrid “Human-Like” and “Pattern-Spotting” Approach

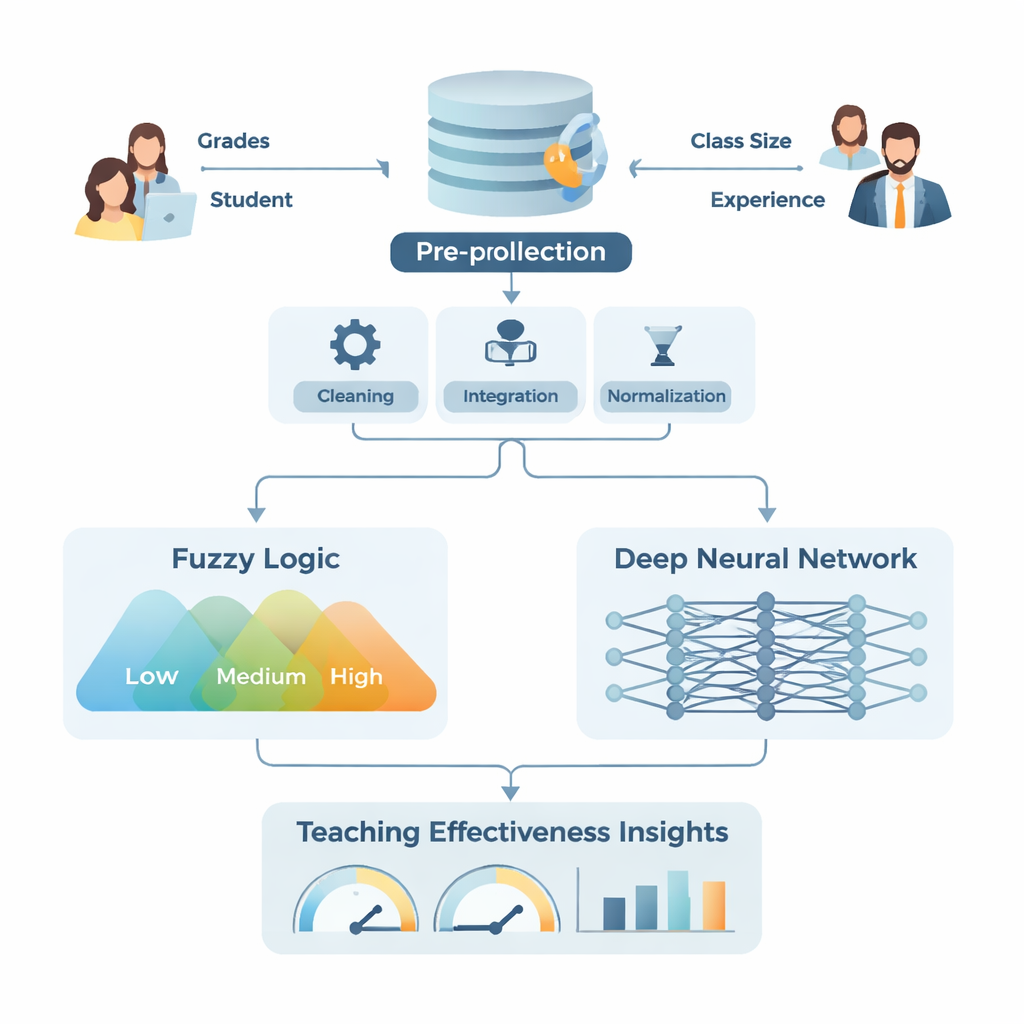

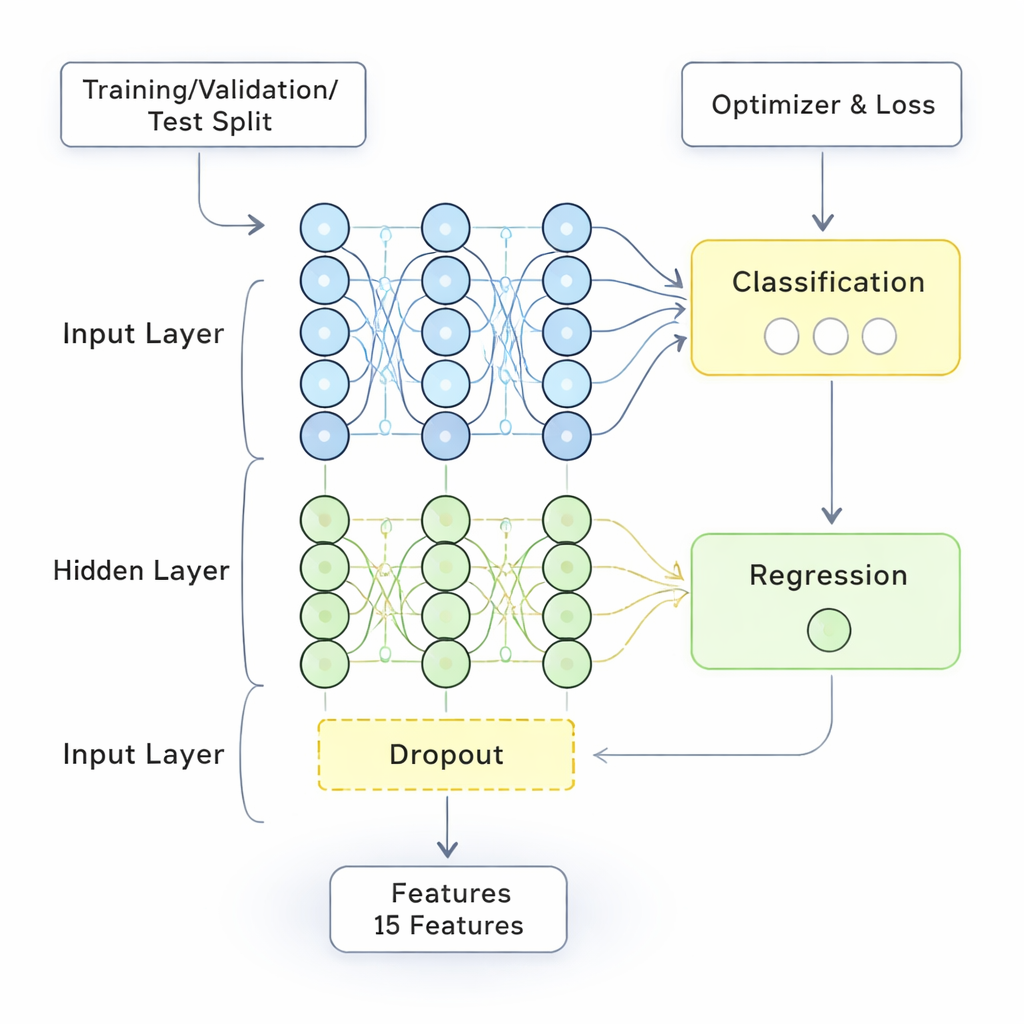

The study introduces a hybrid model called Fuzzy and Deep Learning (FDL). The “fuzzy” part mimics the way people think in shades of gray rather than strict yes-or-no categories—for example, saying a student’s performance is “low,” “medium,” or “high” with smooth transitions instead of hard cutoffs. It turns vague inputs like teaching experience, student–teacher ratio, and course difficulty into flexible categories, then uses simple rules such as “if student performance is high and the class is small, teaching effectiveness is high.” Meanwhile, the deep learning part is a layered network that crunches large amounts of cleaned and standardized data, uncovering complex links that might not be obvious to human reviewers.
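To make the fuzzy side concrete, here is a minimal Python sketch of the example rule quoted above. It is an illustration only, not the authors' implementation: the function names, the breakpoints (60 and 85 for scores, 20 and 50 for class size), and the choice of the minimum as the fuzzy "and" are all assumptions made for this example.

```python
def ramp_up(x, start, end):
    """Membership that is 0 below start, 1 above end, and linear in between."""
    if x <= start:
        return 0.0
    if x >= end:
        return 1.0
    return (x - start) / (end - start)


def performance_high(score):
    """Degree to which a 0-100 score counts as 'high' (breakpoints are assumed)."""
    return ramp_up(score, 60.0, 85.0)


def class_small(size):
    """Degree to which a class counts as 'small' (breakpoints are assumed)."""
    return 1.0 - ramp_up(size, 20.0, 50.0)


def effectiveness_high(score, size):
    """Rule: IF performance is high AND class is small THEN effectiveness is high.

    The fuzzy AND is taken as the minimum of the two degrees, a common
    convention that is assumed here rather than taken from the paper.
    """
    return min(performance_high(score), class_small(size))


# A strong score in a small class fires the rule fully; a middling score
# or a mid-sized class fires it only partially.
print(effectiveness_high(90, 15))
print(effectiveness_high(72.5, 15))
print(effectiveness_high(90, 35))
```

The point of the smooth ramps is that a score of 72.5 is neither "high" nor "not high": it is high to a degree of one half, and the rule's conclusion inherits that partial strength.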

From Raw Surveys to Meaningful Signals

To test their approach, the researchers used data from the National Survey of Student Engagement, a large, widely used questionnaire completed by first-year and senior students at North American colleges and universities. They adapted several questions to focus more sharply on how well teachers fulfill their roles, then checked that the revised survey was reliable. Next, they carried out a thorough data-preparation pipeline: correcting errors, filling in missing values, merging information from the student and teacher sides, and scaling everything to a common range. They also created combined indicators, such as a weighted overall grade based on exam scores, homework completion, and attendance, and they reduced data complexity using a standard technique called principal component analysis. The prepared dataset then fed into both the fuzzy logic module, which handled imprecise categories, and the deep learning network, which handled high-dimensional numerical patterns.
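Three of the preparation steps described above (filling in missing values, scaling to a common range, and building a weighted overall grade) can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the weights, the mean-based fill, the min-max scaling, and the sample numbers are all hypothetical, since this summary does not report the authors' exact choices. The principal component analysis step is left out.

```python
# Hypothetical weights; the ones used in the study are not given here.
WEIGHTS = {"exam": 0.5, "homework": 0.3, "attendance": 0.2}


def fill_missing(values):
    """Replace missing entries (None) with the mean of the observed ones."""
    observed = [v for v in values if v is not None]
    mean = sum(observed) / len(observed)
    return [mean if v is None else v for v in values]


def min_max_scale(values):
    """Rescale values linearly so the smallest becomes 0 and the largest 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def weighted_grades(columns):
    """Fill, scale, and combine the columns into one grade per student."""
    prepared = {
        name: min_max_scale(fill_missing(col)) for name, col in columns.items()
    }
    n_students = len(next(iter(prepared.values())))
    return [
        sum(WEIGHTS[name] * prepared[name][i] for name in WEIGHTS)
        for i in range(n_students)
    ]


# Made-up records for four students; the second exam score is missing.
raw = {
    "exam": [80.0, None, 60.0, 100.0],
    "homework": [90.0, 70.0, 50.0, 100.0],
    "attendance": [100.0, 80.0, 60.0, 90.0],
}
grades = weighted_grades(raw)
print([round(g, 2) for g in grades])
```

Scaling each column before weighting keeps one indicator from dominating simply because it is measured on a larger numerical range.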

How Well Does the New Model Work?

The FDL model was trained and tested on separate portions of the data, so its scores reflect examples it had not seen during training. Its performance was compared against several strong alternatives, including standard neural networks and more advanced deep models. Across key measures (overall accuracy, precision, recall, and the F1-score), the hybrid method either matched or exceeded the competing approaches, reaching about 98% accuracy alongside a reported error rate of just over 10%. Because those two figures do not sum to 100%, the error rate is presumably a separate measure rather than the simple share of misclassified cases. Just as important, the fuzzy rules made its decisions more interpretable than those of black-box models. The system could highlight which combinations of factors, like large classes paired with low teaching experience, or demanding courses supported by strong feedback, were most strongly linked to better or worse teaching outcomes.
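For readers unfamiliar with the four measures named above, the following Python sketch computes them for a two-class case using their standard definitions. The labels are made-up toy data, not results from the study, and the function name is chosen for this example.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Return accuracy, precision, recall, and F1 for a two-class problem."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    accuracy = (tp + tn) / len(pairs)
    # Precision: of the cases flagged as positive, how many really were.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: of the truly positive cases, how many were found.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1: harmonic mean of precision and recall.
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Toy labels: 1 = "effective teaching", 0 = "not effective".
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Reporting all four together matters because accuracy alone can look high when one class is rare, while precision and recall show which kind of mistake the model tends to make.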

What This Means for Students and Colleges

In everyday terms, the study shows that it is now possible to build an automated “teaching barometer” that is both highly accurate and reasonably understandable. Instead of relying mainly on blunt averages and one-off surveys, colleges could use this kind of system to spot weak teaching environments early, identify which teachers or courses need targeted support, and test whether new policies actually help students learn more. The authors stress that the model is not perfect—it depends on data quality, can be computationally demanding, and necessarily simplifies the rich human side of education. Still, used thoughtfully, it offers a powerful new lens for making college classrooms more effective, fair, and responsive to students’ needs.

Citation: He, Z., Zhang, X., Zhang, Z. et al. Assessment of influencing factors of college and universities’ teaching effects using fuzzy and deep learning techniques. Sci Rep 16, 5168 (2026). https://doi.org/10.1038/s41598-026-35940-5

Keywords: teaching effectiveness, higher education, student performance, fuzzy logic, deep learning