Clear Sky Science · en

Understanding mental health discourse on Reddit with transformers and explainability

Why online talk about feelings matters

Many people who struggle with anxiety, depression, bipolar disorder, or borderline personality disorder turn to the internet before they ever speak with a professional. Reddit, with its anonymous communities, has become a huge meeting place for sharing fears, asking for help, and supporting others. This study explores what thousands of these conversations look like, and how artificial intelligence can sort them and highlight the words people most often use when they talk about different mental health challenges.

Peeking into support communities

The researchers focused on four large Reddit communities devoted to anxiety, depression, bipolar disorder, and borderline personality disorder (often shortened to BPD). Each post in their dataset was simply labeled by the community it came from, not by a medical diagnosis. The team treated these communities as “spaces of concern,” where people with similar struggles gather to vent, seek advice, and offer comfort. By studying how language differs across these spaces, they hoped to understand how people describe their experiences in their own words, outside of a clinic.

Teaching computers to sort conversations

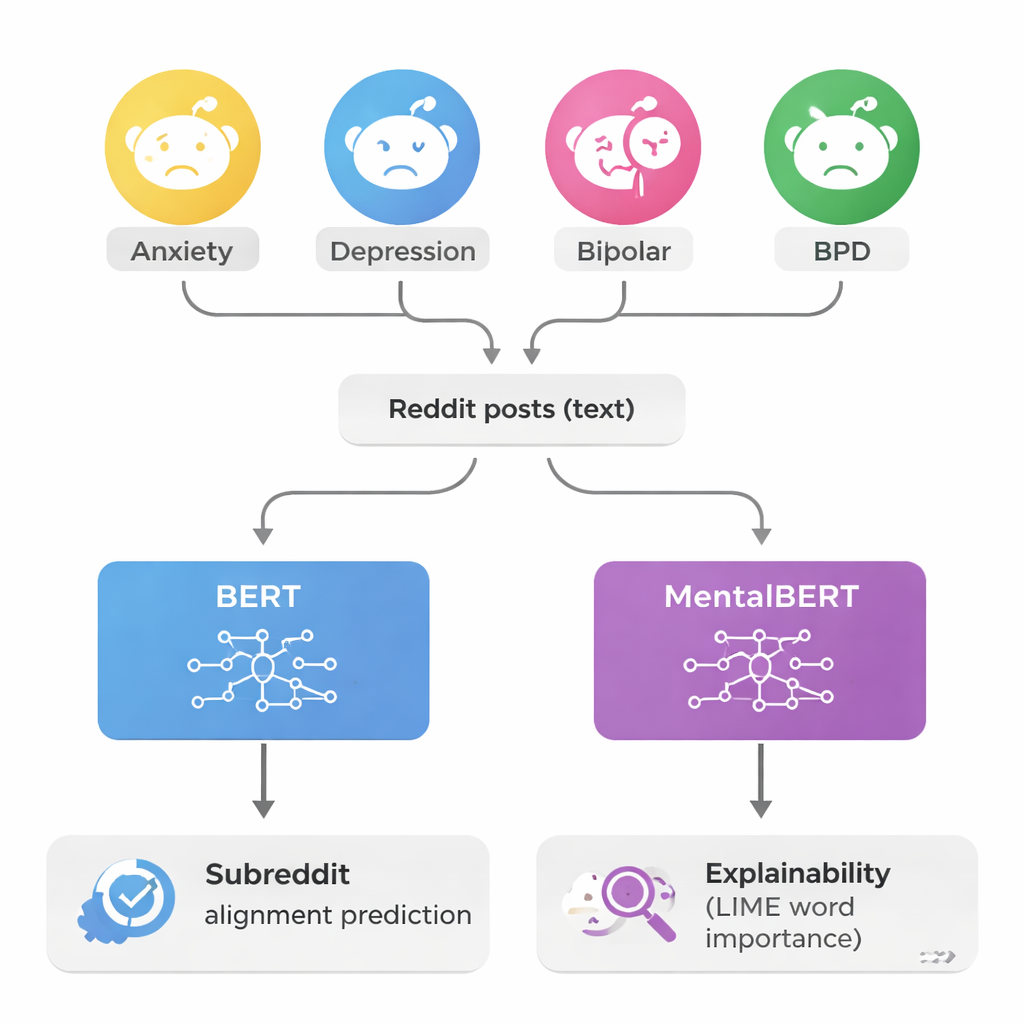

To make sense of more than 150,000 posts, the authors used language models called transformers, specifically BERT and a mental health–focused version called MentalBERT. These models read each post and tried to guess which of the four communities it belonged to. Because some communities had far more posts than others, the team balanced the dataset so that each group was equally represented. This made the task harder but fairer, forcing the models to learn real differences in wording instead of simply favoring the largest communities. When tested, the models correctly labeled posts about 82 percent of the time, far above the 25 percent that random guessing would achieve across four equally sized groups.
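The balancing step and the one-in-four baseline can be illustrated with a small sketch. The summary does not say how the authors balanced their data, so this example assumes simple downsampling to the size of the smallest community. The function name `balance_by_downsampling` and the toy posts are hypothetical and exist only for illustration.

```python
import random
from collections import Counter, defaultdict


def balance_by_downsampling(posts, seed=0):
    """Return a shuffled list with the same number of posts per label.

    posts: list of (text, label) pairs.
    Assumption: every label is cut down to the size of the smallest one.
    """
    by_label = defaultdict(list)
    for text, label in posts:
        by_label[label].append((text, label))

    smallest = min(len(items) for items in by_label.values())
    rng = random.Random(seed)  # fixed seed so the sample is repeatable

    balanced = []
    for label in sorted(by_label):
        balanced.extend(rng.sample(by_label[label], smallest))
    rng.shuffle(balanced)
    return balanced


# Invented toy data with deliberately unequal community sizes.
toy_posts = (
    [(f"anxiety post {i}", "anxiety") for i in range(50)]
    + [(f"depression post {i}", "depression") for i in range(120)]
    + [(f"bipolar post {i}", "bipolar") for i in range(30)]
    + [(f"bpd post {i}", "bpd") for i in range(40)]
)

balanced = balance_by_downsampling(toy_posts)
counts = Counter(label for _, label in balanced)

# With equal class sizes, guessing at random is right 1 time in len(counts).
chance_accuracy = 1 / len(counts)
print(counts, chance_accuracy)
```

Once every community contributes the same number of posts, a model can no longer score well by always predicting the largest group, which is why the 25 percent baseline is a fair comparison point.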

Opening the black box of AI

A major concern in mental health technology is that computer systems can seem like mysterious “black boxes,” offering predictions without clear reasons. To tackle this, the researchers used an approach called LIME (Local Interpretable Model-agnostic Explanations), which reveals which words pushed the model toward a particular decision. In simple terms, LIME hides or tweaks pieces of a post and watches how the model’s answer changes. If removing a word like “panic” suddenly changes the predicted community, that word is judged to be important. By repeating this process thousands of times on many posts, the team built lists of the most influential words for each community and checked that these words matched what clinicians know about each condition.
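The hide-a-word-and-watch idea can be sketched in a few lines. This is not LIME itself, which fits a small local surrogate model on many random perturbations. It is a simpler leave-one-word-out check that follows the same intuition. The keyword weights in `TOY_WEIGHTS` and the `predict_proba` function are hypothetical stand-ins for the fine-tuned transformer, which is far more complex.

```python
import math

# Hypothetical stand-in for the trained model: a few keyword weights
# per community. These numbers are invented for illustration only.
TOY_WEIGHTS = {
    "anxiety": {"panic": 2.0, "attack": 1.5, "worry": 1.0},
    "depression": {"hopeless": 2.0, "worthless": 2.0, "anymore": 1.0},
    "bipolar": {"manic": 2.0, "lithium": 1.5, "mood": 1.0},
    "bpd": {"abandonment": 2.0, "fp": 1.5, "relationship": 1.0},
}


def predict_proba(words):
    """Turn keyword scores into probabilities with a softmax."""
    scores = {
        label: sum(weights.get(word, 0.0) for word in words)
        for label, weights in TOY_WEIGHTS.items()
    }
    total = sum(math.exp(score) for score in scores.values())
    return {label: math.exp(score) / total for label, score in scores.items()}


def word_importance(text):
    """Rank words by how much removing each one lowers the top prediction."""
    words = text.lower().split()
    base = predict_proba(words)
    predicted = max(base, key=base.get)

    drops = []
    for i, word in enumerate(words):
        reduced = words[:i] + words[i + 1:]  # the post without word i
        drop = base[predicted] - predict_proba(reduced)[predicted]
        drops.append((word, drop))

    # Largest drop first: these words mattered most to the prediction.
    drops.sort(key=lambda pair: pair[1], reverse=True)
    return predicted, drops


predicted, ranking = word_importance(
    "i had a panic attack at work and i worry all day"
)
print(predicted, ranking[:3])
```

In this toy setup the words with the largest drops are the ones the stand-in model relies on, while filler words such as “at” or “day” change nothing. Collecting such rankings over many posts is one plausible way to build per-community word lists like those the study describes.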

Distinct voices for different struggles

The explanations uncovered clear language patterns. In anxiety communities, words such as “panic,” “attack,” and “worry” stood out, often tied to physical symptoms and sudden surges of fear. Depression posts frequently included terms like “hopeless,” “worthless,” “life,” and “anymore,” echoing deep sadness and a sense that things will not improve. In BPD discussions, the key words shifted toward relationships and emotions, including “abandonment,” “relationship,” “attachment,” and “fp” (short for “favorite person,” a term common in these groups). Bipolar posts highlighted mood changes and treatment language, with words like “mania,” “manic,” “hypomania,” “mood,” and medication names such as “lithium” and “lamictal.” The models also revealed where conditions blur together: anxiety and depression posts, for example, can both center on distress and negative feelings, making them easier to confuse, much as happens in real-life diagnosis.

From online posts to real-world impact

For a non-specialist, the key message is that computers can sort mental health conversations by theme with good accuracy and explain which words drive their choices, but they cannot and should not diagnose anyone. The models in this study act more like librarians for online support spaces: they help check whether discussions in a given community truly match its stated focus. This could aid moderators in keeping conversations relevant and help researchers or clinicians better understand how people describe their struggles outside of formal appointments. With careful human oversight and attention to privacy and stigma, such tools may one day support more welcoming and better-organized online spaces for talking about mental health.

Citation: Sánchez Rodríguez, I., Bianchi, J., Pinelli, F. et al. Understanding mental health discourse on Reddit with transformers and explainability. Sci Rep 16, 6796 (2026). https://doi.org/10.1038/s41598-026-35918-3

Keywords: mental health, social media, Reddit, explainable AI, text classification