Clear Sky Science

Novel transformer-based model for NID in fog computing environment

Why smarter defenses at the network’s edge matter

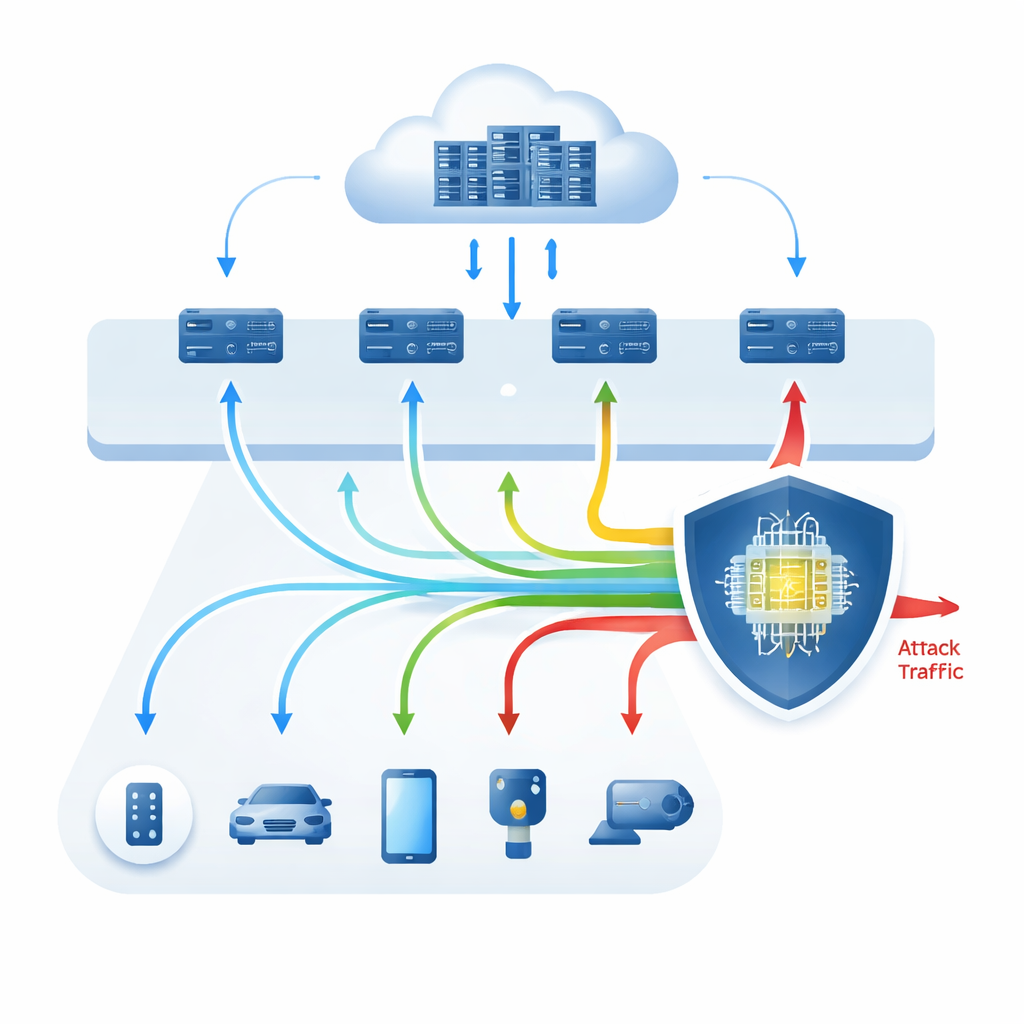

Our homes, cars, factories, and cities now depend on countless small devices that constantly talk to each other and to the cloud. To keep delays low, much of this digital chatter is handled in an intermediate layer called “fog computing,” made up of local gateways and mini–data centers near users. But this same convenience opens new doors for hackers. This paper explores how a new kind of artificial intelligence model, known as a Transformer, can act as an exceptionally sharp guard dog in this fog layer, spotting dangerous activity in network traffic before it reaches critical systems.

Computers between you and the cloud

Fog computing sits between tiny devices at the edge (like sensors, phones, and smart appliances) and powerful cloud data centers. Instead of sending all data straight to distant servers, much of it is briefly processed or filtered in nearby fog nodes. This arrangement reduces delay and saves bandwidth, but it also means that security checks must be done quickly and with limited resources. Network intrusion detection systems are tools that watch traffic for signs of attacks such as denial-of-service floods, probing scans, or stealthy attempts to take over accounts. Traditional systems rely on fixed rules or signatures of known attacks and often miss new or evolving threats, especially amid the huge, diverse traffic produced by modern Internet of Things (IoT) devices.

From classic algorithms to deep learning and beyond

The authors first review how existing methods try to detect intrusions in this demanding setting. Classic machine learning approaches learn what “normal” traffic looks like and flag deviations, using algorithms such as k-nearest neighbors, decision trees, random forests, and simple neural networks. Deep learning methods, including recurrent neural networks (RNNs) and their LSTM and GRU variants, go further: they automatically uncover complex patterns in raw traffic and can catch previously unseen attacks. However, these deep models usually need large amounts of labeled data, are expensive to run, and often behave like black boxes, giving little insight into why they raise an alarm. These limitations are especially problematic in fog computing, where hardware is modest and security analysts need understandable alerts.
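To make the “learn normal, flag deviations” idea concrete, here is a minimal Python sketch of distance-based anomaly flagging with k-nearest neighbors. It is a generic textbook illustration, not a method from the paper: the two-feature records, the choice of `k`, and the `threshold` value are all hypothetical, and a real system would first normalize dozens of traffic features.

```python
import math


def euclidean(a, b):
    """Straight-line distance between two feature vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_anomaly_score(record, normal_records, k=3):
    """Mean distance from `record` to its k nearest known-normal records."""
    if not normal_records or k < 1:
        raise ValueError("need at least one normal record and k >= 1")
    distances = sorted(euclidean(record, r) for r in normal_records)
    nearest = distances[:k]
    return sum(nearest) / len(nearest)


def flag_intrusion(record, normal_records, threshold, k=3):
    """Flag a record as suspicious when it lies far from all normal traffic."""
    return knn_anomaly_score(record, normal_records, k) > threshold


# Hypothetical, already-normalized records (e.g. scaled packet rate, scaled packet size).
normal = [[0.1, 0.2], [0.15, 0.22], [0.12, 0.18], [0.2, 0.25], [0.11, 0.21]]
print(flag_intrusion([0.9, 0.95], normal, threshold=0.3))  # → True
print(flag_intrusion([0.12, 0.2], normal, threshold=0.3))  # → False
```

The threshold is the weak point of this approach: set it too low and ordinary bursts of traffic raise alarms, too high and stealthy attacks slip through, which is one reason the field moved toward models that learn richer patterns.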

A new way to read network traffic

To overcome these issues, the paper proposes a Transformer-based framework tailored to network intrusion detection in fog environments. Rather than treating each traffic record as a flat list of numbers, the model treats every feature as a “token” in a short sequence, letting its attention mechanism learn how features relate to one another across the entire record. The authors explore three variants: a decoder-only design similar to GPT, an encoder-only design similar to BERT, and a full encoder–decoder Transformer. All three are trained and tested on a widely used benchmark dataset (NSL-KDD) and a more modern IoT-focused dataset (IoT-20). Careful data preparation, cross-validation, and techniques for handling rare attack types are used to reduce bias and overfitting.
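The “every feature is a token” idea can be illustrated with a toy, dependency-free Python sketch. Each scalar feature of one traffic record is mapped to a vector (its value scaling a per-feature weight vector, plus a per-feature position vector), and single-head scaled dot-product self-attention then lets every feature token weigh every other one. The embedding scheme, the random weights, the identity query/key/value projections, and `d_model = 8` are assumptions made for illustration; the paper’s actual architecture, with learned projections, multiple heads, and stacked layers, is not reproduced here.

```python
import math
import random


def embed_features(record, d_model, seed=0):
    """Turn each scalar feature into a d_model-dimensional token vector.

    Hypothetical scheme: token_i = value_i * w_i + p_i, where w_i and p_i are
    per-feature vectors (random here; a real model would learn them).
    """
    rng = random.Random(seed)  # fixed seed so each feature position keeps the same vectors
    tokens = []
    for value in record:
        w = [rng.gauss(0, 1) for _ in range(d_model)]
        p = [rng.gauss(0, 1) for _ in range(d_model)]
        tokens.append([value * wj + pj for wj, pj in zip(w, p)])
    return tokens


def softmax(xs):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Single-head scaled dot-product attention with identity Q/K/V projections."""
    d = len(tokens[0])
    weights, outputs = [], []
    for q in tokens:
        # Similarity of this feature token to every feature token, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in tokens]
        attn = softmax(scores)
        weights.append(attn)
        # New representation: attention-weighted mix of all feature tokens.
        outputs.append([sum(a * v[j] for a, v in zip(attn, tokens)) for j in range(d)])
    return outputs, weights


features = [0.0, 0.49, 0.2, 1.0]  # hypothetical normalized features of one record
tokens = embed_features(features, d_model=8)
outputs, weights = self_attention(tokens)
for i, row in enumerate(weights):
    print(f"feature {i} attends most to feature {row.index(max(row))}")
```

Each row of `weights` is a probability distribution over the record’s features, so it shows how strongly one feature’s new representation draws on each of the others; in a trained model these learned interactions are what the classifier ultimately relies on.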

Near-perfect performance and clearer decisions

The results are striking. On the NSL-KDD benchmark, the full Transformer model reaches 100% accuracy, precision, recall, and F1-score both for simple “attack vs. normal” decisions and for distinguishing among multiple attack categories. On the IoT-20 dataset, which better reflects today’s varied IoT traffic, the model still achieves very high scores: about 99.6% accuracy for binary classification and over 95% when distinguishing among multiple attack types. The authors also compare their system against many state-of-the-art machine learning and deep learning methods and find that the Transformer generally matches or surpasses them while remaining fast enough for deployment on fog nodes.

To make its behavior more transparent, the study uses explainable AI tools that rank which traffic features most influence each decision, and it tests robustness by creating deliberately perturbed “adversarial” examples. The Transformer maintains strong performance even on these perturbed inputs.
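For readers unfamiliar with the four scores quoted above, the short Python sketch below shows how they are computed for the binary “attack vs. normal” task, treating “attack” (label 1) as the positive class. The toy labels are invented for illustration and are not from the paper. For multi-class results such as those on IoT-20, one common convention is to average per-class precision, recall, and F1; the summary does not say which averaging the authors used.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall and F1 for a binary attack/normal classifier."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label lists must be non-empty and of equal length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)  # attacks caught
    fp = sum(1 for t, p in pairs if t != positive and p == positive)  # false alarms
    fn = sum(1 for t, p in pairs if t == positive and p != positive)  # attacks missed
    tn = len(pairs) - tp - fp - fn                                    # normal traffic passed
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn,
            "accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Invented example: 4 attacks and 6 normal records; one attack missed, one false alarm.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
print(binary_metrics(y_true, y_pred))
```

Read this way, a 100% score on all four metrics means the model produced no false alarms and missed no attacks on that test set, which is why such results are best confirmed on live traffic as well.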

From lab success to real-world protection

In simple terms, this work shows that the same technology powering modern language models can be adapted into a highly capable, more interpretable security guard for fog networks. By learning how different pieces of network data interact, the Transformer-based system can spot both familiar and rare intrusions with remarkable accuracy, while running quickly enough to be practical near the network edge. The authors note that further trials on live, ever-changing traffic are still needed, and that future work should focus on scaling the approach, handling shifting attack patterns, and protecting privacy across many distributed fog nodes. Still, the study suggests that Transformer-style models could become a cornerstone of defending the sprawling, device-rich networks that underpin our daily lives.

Citation: Abdelnaby, K.M., Khedr, A.Y. & Elsemary, A.M. Novel transformer-based model for NID in fog computing environment. Sci Rep 16, 8656 (2026). https://doi.org/10.1038/s41598-026-35879-7

Keywords: fog computing security, network intrusion detection, transformer models, IoT cyberattacks, edge AI