Clear Sky Science

Reinforcement learning-driven dynamic optimization strategy for parametric design of 3D models

Smarter 3D Designs With Less Guesswork

From eye‑catching buildings to tiny mechanical parts inside your phone, many modern objects begin life as 3D computer models. Designers often use “parametric” models, where sliders and formulas control shapes, sizes, and patterns. This makes it easy to explore many options—but also creates a maze of possibilities that is impossible to search by hand. This paper introduces a new artificial‑intelligence approach called HRL‑DOS that helps computers navigate that maze, automatically improving 3D designs for strength, material use, and ease of manufacturing.

The Challenge of Too Many Choices

In parametric design, a single object can depend on dozens or hundreds of linked parameters: wall thicknesses, hole sizes, curves, and alignment rules. As models grow more complex, these parameters interact in non‑obvious ways. Traditional optimization tools fall into two camps. Some assume that performance changes smoothly as parameters change, and they break down when designs are irregular or noisy. Others rely on trial‑and‑error search, which can be painfully slow for large problems. Even standard reinforcement learning, where an AI agent learns from repeated trial and feedback, struggles when it must consider every possible combination of design decisions at once.
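To make "too many choices" concrete, here is a back-of-the-envelope count in Python. The numbers are illustrative assumptions, not figures from the paper: every parameter is treated as independent with 10 allowed values, and designs are evaluated at a wildly optimistic one million per second. Because real parametric models link their parameters, treat these counts as an upper bound.

```python
# Back-of-the-envelope count. Illustrative assumptions (not from the paper):
# parameters are independent, each has 10 allowed values, 1e6 evaluations/s.

SECONDS_PER_YEAR = 365.25 * 24 * 3600

def design_space_size(num_parameters, values_per_parameter=10):
    """Number of distinct designs when each parameter is chosen independently."""
    return values_per_parameter ** num_parameters

def years_to_try_all(num_designs, evals_per_second=1e6):
    """Time needed to evaluate every design once at a fixed rate."""
    return num_designs / evals_per_second / SECONDS_PER_YEAR

for n in (5, 10, 30, 100):
    size = design_space_size(n)
    print(f"{n:>3} parameters: {size:.1e} designs, "
          f"{years_to_try_all(size):.1e} years to try them all")
```

Even at that unrealistically fast rate, 30 parameters already push exhaustive search to roughly 3 × 10^16 years, which is why smarter search strategies are needed.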

A Two‑Level AI That Thinks Like a Designer

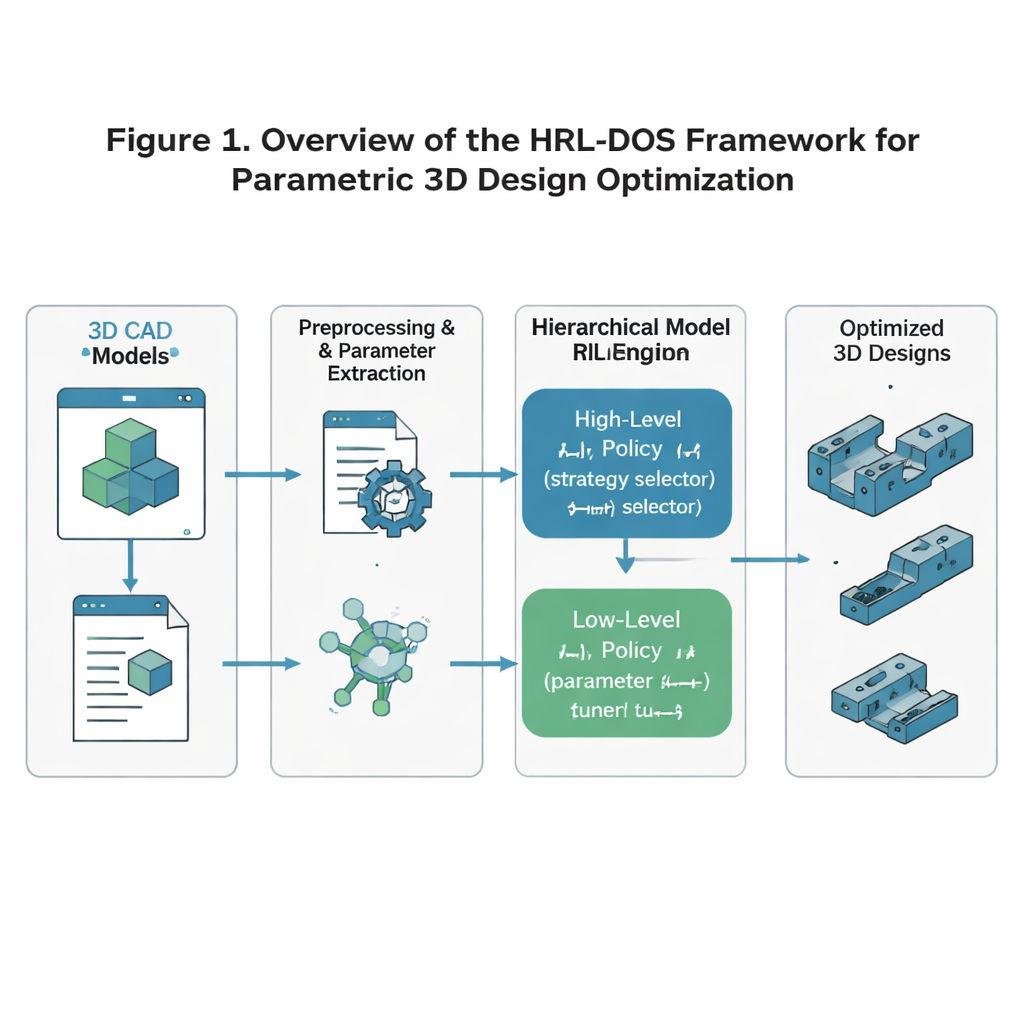

The authors propose a Hierarchical Reinforcement Learning‑based Dynamic Optimization Strategy, or HRL‑DOS, to cope with this complexity. Instead of treating design as one giant decision, HRL‑DOS splits the job into two layers. A high‑level policy chooses an overall direction for the design, such as favoring lighter weight, more symmetry, or extra safety margin. A low‑level policy then adjusts individual parameters, like specific dimensions or feature placements, within that broader plan. Both layers receive feedback based on how well the current model performs on three core objectives: structural stability, geometric efficiency, and manufacturability. This layered structure mirrors how human designers work: first deciding on a concept, then fine‑tuning details.
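The two-layer division of labor can be sketched in a few lines of Python. This is a toy illustration under heavy assumptions, not the authors' HRL‑DOS. The three strategies, the objective formulas, and the parameter names (`wall_mm`, `rib_mm`) are all hypothetical. The high level is a simple epsilon-greedy bandit over strategies, and the low level is greedy hill climbing rather than a trained neural policy.

```python
import random

# Hypothetical high-level "strategies". Each re-weights the three objectives:
# (stability, efficiency, manufacturability).
STRATEGIES = {
    "lighter": (0.2, 0.6, 0.2),
    "safer":   (0.6, 0.2, 0.2),
    "simpler": (0.2, 0.2, 0.6),
}

def scores(p):
    """Toy stand-ins for simulation results, each in [0, 1]."""
    stability = min(1.0, 0.15 * p["wall_mm"] + 0.1 * p["rib_mm"])
    efficiency = max(0.0, 1.0 - 0.08 * p["wall_mm"] - 0.05 * p["rib_mm"])
    buildable = 1.0 if p["wall_mm"] >= 1.0 else 0.0  # assumed 1 mm minimum wall
    return (stability, efficiency, buildable)

def weighted(p, weights):
    """Score a design under the weights chosen by the high level."""
    return sum(w * s for w, s in zip(weights, scores(p)))

def low_level(p, weights, rng, steps=10):
    """Low level: nudge one parameter at a time and keep the change only if it
    helps under the high level's weights (a greedy stand-in for a policy)."""
    for _ in range(steps):
        q = dict(p)
        key = rng.choice(sorted(q))
        q[key] = max(0.0, q[key] + rng.choice((-0.5, 0.5)))
        if weighted(q, weights) > weighted(p, weights):
            p = q
    return p

def hierarchical_search(p, episodes=100, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    value = {s: 0.0 for s in STRATEGIES}  # high level's running estimate per strategy
    count = {s: 0 for s in STRATEGIES}
    for _ in range(episodes):
        # High level: epsilon-greedy choice of an overall design direction.
        if rng.random() < epsilon:
            s = rng.choice(sorted(STRATEGIES))
        else:
            s = max(value, key=value.get)
        p = low_level(p, STRATEGIES[s], rng)
        # Shared feedback: the unweighted mean of all three objective scores.
        r = sum(scores(p)) / 3
        count[s] += 1
        value[s] += (r - value[s]) / count[s]  # incremental average
    return p, value

design, strategy_values = hierarchical_search({"wall_mm": 2.0, "rib_mm": 2.0})
print(design)
print(strategy_values)
```

The point of the structure is that the low level never searches every parameter against every goal at once. It only has to improve the score the high level currently cares about, while the high level learns which direction pays off overall.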

Turning Raw 3D Models Into Learnable Data

To train this system, the researchers start with the ABC Dataset, a large open collection of detailed industrial 3D models such as brackets, gears, levers, and mounting plates. They preprocess each model so that the AI sees a clean, consistent representation: the geometry is normalized to a standard scale and orientation; key dimensions and features are extracted as parameters; and manufacturing rules—such as minimum wall thickness or allowable overhang angles—are encoded as constraints. These parameters are then transformed into a compact “latent” description that naturally discourages impossible or unstable shapes. The result is a numerical state that the AI can safely modify while still respecting basic engineering rules.
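Two of these preprocessing steps, scale normalization and encoding manufacturing rules as checkable constraints, can be sketched with the Python standard library. This is a hedged illustration, not the paper's pipeline. The function names are hypothetical, the 1 mm minimum wall and 45° overhang limit are assumed example values (45° is a common rule of thumb in 3D printing), +z is assumed to be the build direction, and orientation alignment and the latent encoding are left out.

```python
import math

def normalize(vertices):
    """Center points on their bounding-box center and scale uniformly so the
    longest bounding-box side has length 1. (Orientation alignment omitted.)"""
    lo = [min(v[i] for v in vertices) for i in range(3)]
    hi = [max(v[i] for v in vertices) for i in range(3)]
    center = [(a + b) / 2 for a, b in zip(lo, hi)]
    scale = max(b - a for a, b in zip(lo, hi)) or 1.0  # avoid dividing by zero
    return [tuple((v[i] - center[i]) / scale for i in range(3)) for v in vertices]

def face_normal(a, b, c):
    """Unit normal of triangle (a, b, c) via the cross product; the winding
    order decides which side it points to."""
    u = [b[i] - a[i] for i in range(3)]
    w = [c[i] - a[i] for i in range(3)]
    n = (u[1] * w[2] - u[2] * w[1],
         u[2] * w[0] - u[0] * w[2],
         u[0] * w[1] - u[1] * w[0])
    length = math.sqrt(sum(x * x for x in n))
    return tuple(x / length for x in n)

def overhang_deg(normal):
    """Tilt of a downward-facing surface from vertical, assuming +z is the
    build direction: 0 for a vertical wall, 90 for a flat ceiling."""
    nz = normal[2]
    return math.degrees(math.asin(min(1.0, -nz))) if nz < 0 else 0.0

def constraint_violations(params, faces, min_wall_mm=1.0, max_overhang_deg=45.0):
    """List violations of two example manufacturing rules. Wall thickness is
    checked in real units (mm), so it comes from params, not normalized geometry."""
    problems = []
    if params["wall_mm"] < min_wall_mm:
        problems.append(f"wall {params['wall_mm']} mm < {min_wall_mm} mm")
    for i, (a, b, c) in enumerate(faces):
        angle = overhang_deg(face_normal(a, b, c))
        if angle > max_overhang_deg:
            problems.append(f"face {i}: overhang {angle:.0f} deg > {max_overhang_deg:.0f} deg")
    return problems

ceiling = ((0, 0, 0), (0, 1, 0), (1, 0, 0))  # normal points straight down
wall = ((0, 0, 0), (1, 0, 0), (0, 0, 1))     # normal is horizontal
print(normalize([(0, 0, 0), (2, 4, 0)]))
print(constraint_violations({"wall_mm": 0.8}, [ceiling, wall]))
```

Here the thin wall and the flat ceiling are both flagged, while the vertical face passes. A learning agent can use such checks either as hard filters or as penalties in its reward.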

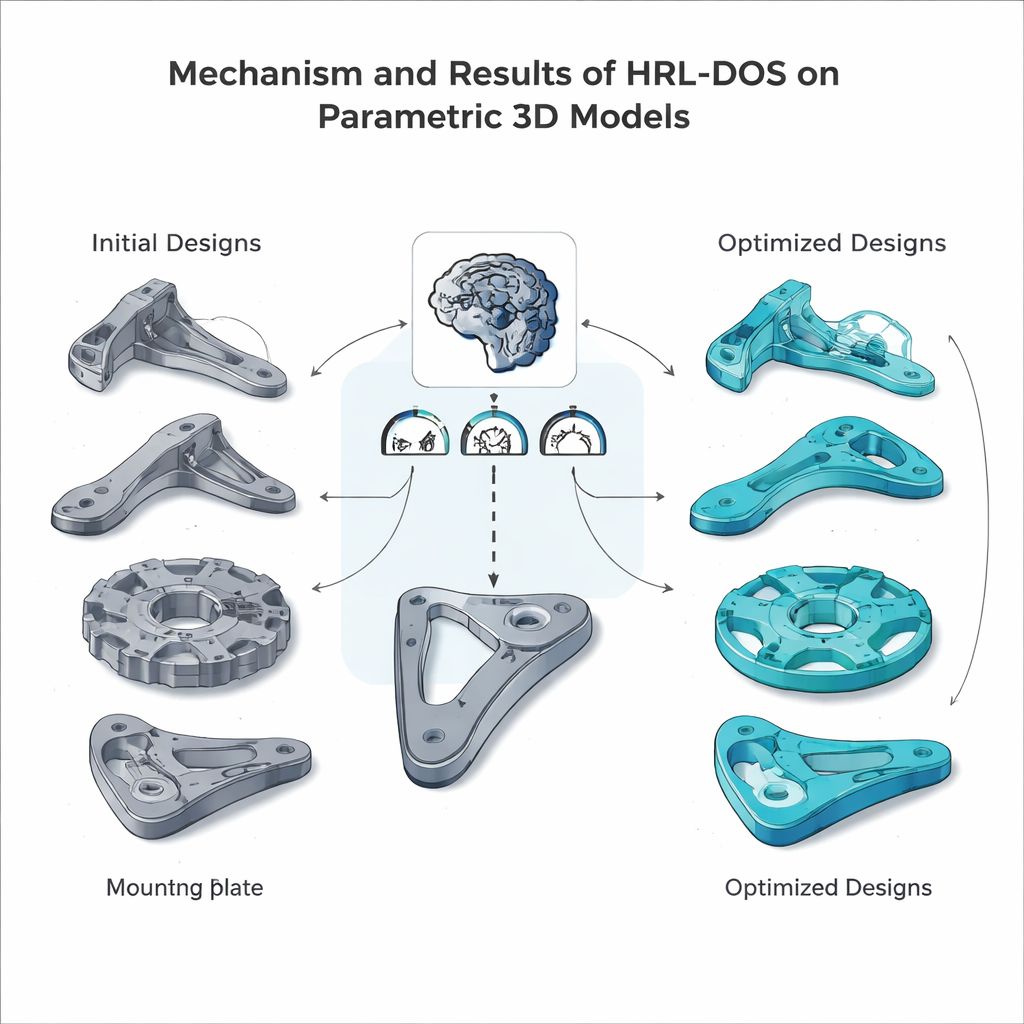

Learning to Improve Realistic Parts

Within this prepared environment, the hierarchical agents repeatedly propose new designs, run simulations to estimate weight and stress, check manufacturability, and receive a combined reward score. Over many training episodes, the high‑level agent learns which strategic goals tend to pay off, while the low‑level agent discovers which parameter tweaks actually deliver those goals. The team tested HRL‑DOS on several representative parts from the dataset (a ribbed bracket, a gear disk, a lever handle, and a mounting plate) and compared its performance with several advanced alternatives, including flat reinforcement learning, genetic‑algorithm hybrids, and other AI‑assisted design tools. Compared with these alternatives, HRL‑DOS reached good solutions about 27% faster and produced models with roughly 18% higher overall quality scores.
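One way to fold weight, stress, and manufacturability into a single "combined reward score" is sketched below. Everything here is an assumption for illustration: the names `Evaluation` and `combined_reward`, the way each score is normalized to [0, 1], the weights (0.4, 0.3, 0.3), and the fixed penalty for exceeding the allowable stress. The paper's actual reward formulation is not reproduced, and in practice mass and stress would come from CAD and finite-element simulation.

```python
from dataclasses import dataclass

@dataclass
class Evaluation:
    mass_kg: float            # estimated weight of the candidate design
    max_stress_mpa: float     # peak stress from a structural simulation
    checks_passed: int        # manufacturability checks the design passes
    checks_total: int

def clip01(x):
    """Clamp a value to the range [0, 1]."""
    return max(0.0, min(1.0, x))

def combined_reward(ev, baseline_mass_kg, allowable_stress_mpa,
                    weights=(0.4, 0.3, 0.3), overstress_penalty=1.0):
    """Weighted sum of three scores in [0, 1], minus a fixed penalty when the
    design exceeds its allowable stress (a hard safety constraint)."""
    w_stab, w_eff, w_man = weights
    stability = clip01(1.0 - ev.max_stress_mpa / allowable_stress_mpa)  # unused stress margin
    efficiency = clip01(1.0 - ev.mass_kg / baseline_mass_kg)            # material saved
    manufacturability = ev.checks_passed / ev.checks_total
    reward = w_stab * stability + w_eff * efficiency + w_man * manufacturability
    if ev.max_stress_mpa > allowable_stress_mpa:
        reward -= overstress_penalty
    return reward

ok = Evaluation(mass_kg=0.8, max_stress_mpa=150.0, checks_passed=4, checks_total=5)
too_thin = Evaluation(mass_kg=0.6, max_stress_mpa=300.0, checks_passed=5, checks_total=5)
print(combined_reward(ok, baseline_mass_kg=1.0, allowable_stress_mpa=250.0))
print(combined_reward(too_thin, baseline_mass_kg=1.0, allowable_stress_mpa=250.0))
```

With these made-up numbers, the first design scores about 0.46 and the overstressed one about -0.58. The penalty makes any safety violation worse than a modest but valid design, even when the violating design is lighter and easier to build.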

Designs That Are Strong, Buildable, and Flexible

Beyond raw performance, HRL‑DOS proved better at staying within strict engineering limits. It generated far fewer designs that violated safety or manufacturing constraints and achieved higher manufacturability scores across checks such as overhang angles, internal cavities, and tolerances. The method also generalized well to new, unseen part types and remained robust when the input data were noisy or partially missing—an important trait for real‑world design workflows. Together, these results suggest that hierarchical reinforcement learning can serve as a practical engine for intelligent computer‑aided design, helping architects and engineers explore more options in less time while keeping their models safe, efficient, and ready for fabrication.

Citation: Zhong, G., Vijay, V.C. Reinforcement learning-driven dynamic optimization strategy for parametric design of 3D models. Sci Rep 16, 5041 (2026). https://doi.org/10.1038/s41598-026-35863-1

Keywords: parametric 3D design, reinforcement learning, design optimization, computer-aided design, generative engineering