Clear Sky Science

Development and evaluation of surface-guided patient position system for boron neutron capture therapy

Sharper Aiming for Cancer-Fighting Neutrons

Boron Neutron Capture Therapy (BNCT) is a promising cancer treatment that can selectively damage tumor cells while sparing much of the surrounding healthy tissue. But for this precision to pay off, doctors must place a patient in exactly the right spot in front of a fixed neutron beam—often within a millimeter. This paper describes a new camera‑ and robot‑guided positioning system designed to make that delicate setup faster, more accurate, and less dependent on a technician’s steady hand and judgment.

Why Positioning Matters So Much

Unlike many modern radiation machines that can swing around the patient and reshape their X‑ray beams, BNCT relies on a heavy, fixed neutron source with limited options for steering the beam. The patient, not the beam, has to move into just the right place. Traditionally, staff use lasers, skin marks, and manual couch shifts to do this. That process is slow, depends heavily on operator skill, and can leave small position errors that change how much dose a tumor—and nearby organs—actually receive. As BNCT centers open to treat complicated tumors of the head, neck, and other hard‑to‑reach areas, these limitations become a serious obstacle.

A High-Tech Helper: Cameras, Software, and a Robot

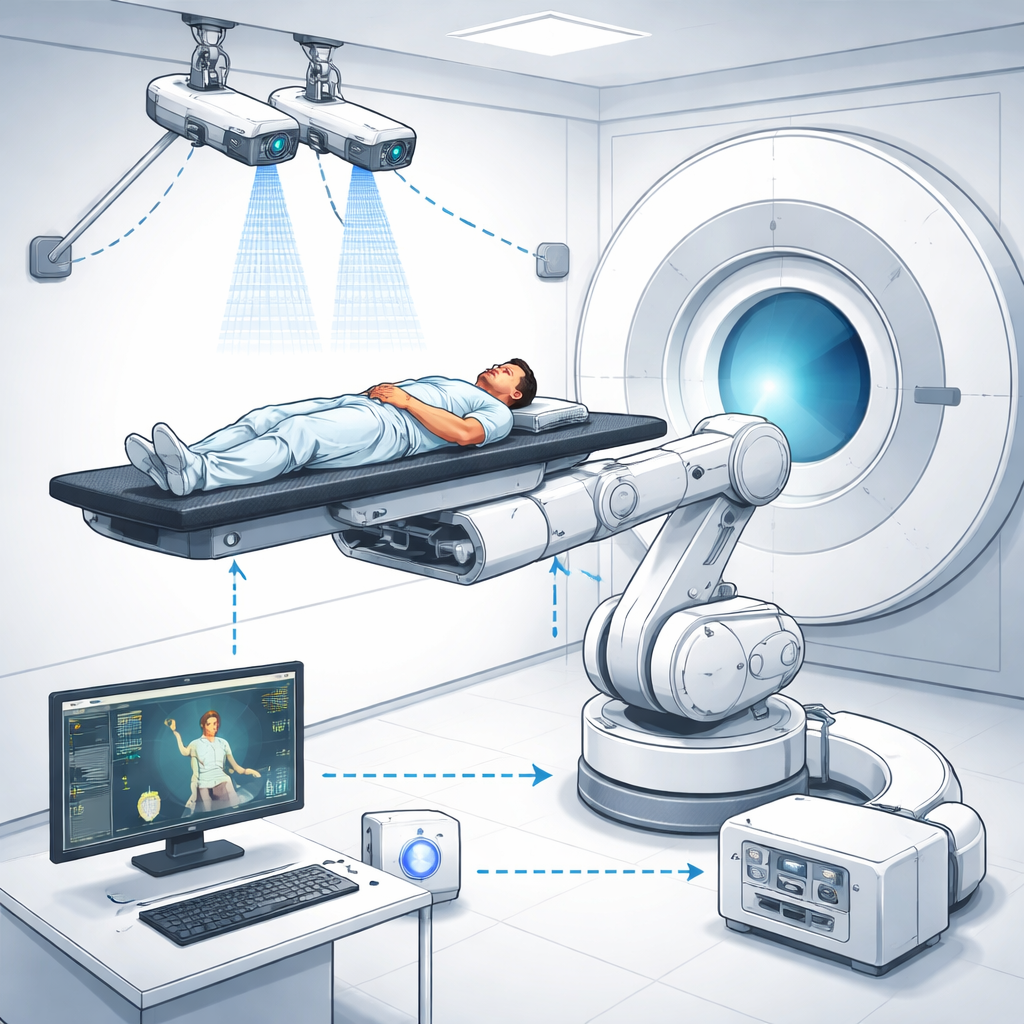

To tackle this problem, the research team built an integrated Surface-Guided BNCT (SG‑BNCT) system that combines three main pieces: a specialized treatment planning program called NeuMANTA, a binocular stereo‑vision camera pair, and a six‑axis industrial robot carrying the treatment bed. In NeuMANTA, doctors plan the BNCT treatment on CT images, including the exact point where the neutron beam should pass and three small reference spots, or fiducial markers, on the patient’s skin. These markers define the patient’s position in the planning images and link that plan to the real‑world treatment room coordinates via mathematical transformation matrices.
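As a rough illustration of what such a transformation matrix does, the sketch below builds a 4x4 homogeneous matrix and uses it to map a point from planning coordinates into room coordinates. The function names, the restriction to a rotation about a single axis, and the numbers are assumptions chosen for illustration; they are not taken from NeuMANTA or from the paper.

```python
import math

def make_transform(rotation_deg_z, translation):
    """Build a 4x4 homogeneous matrix: rotate about the z axis, then translate.

    Hypothetical helper; a real system would allow rotation about all three axes.
    """
    c = math.cos(math.radians(rotation_deg_z))
    s = math.sin(math.radians(rotation_deg_z))
    tx, ty, tz = translation
    return [
        [c, -s, 0.0, tx],
        [s, c, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]

def apply_transform(matrix, point):
    """Map a 3D point through a 4x4 homogeneous matrix."""
    homogeneous = (point[0], point[1], point[2], 1.0)
    return tuple(
        sum(matrix[row][col] * homogeneous[col] for col in range(4))
        for row in range(3)
    )

def compose(first, second):
    """Return the matrix that applies `first`, then `second`."""
    return [
        [sum(second[r][k] * first[k][c] for k in range(4)) for c in range(4)]
        for r in range(4)
    ]

# Illustrative numbers only: a 90 degree turn plus a shift, in millimeters.
plan_to_room = make_transform(90.0, (100.0, 0.0, 50.0))
marker_in_room = apply_transform(plan_to_room, (10.0, 0.0, 0.0))
# marker_in_room is approximately (100.0, 10.0, 50.0)
```

Packing rotation and translation into one matrix means that a chain of coordinate changes (planning image to room, room to robot, and so on) can be combined into a single matrix with `compose`.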

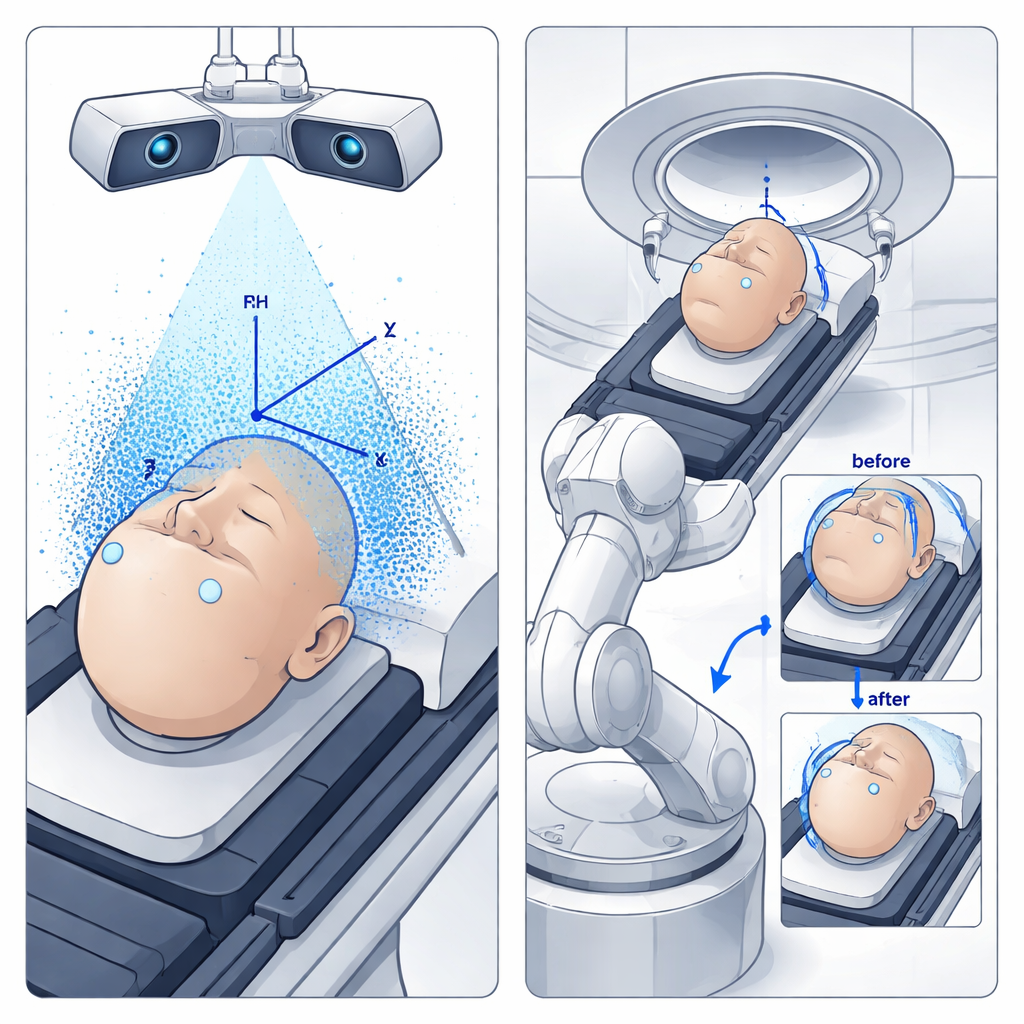

How 3D Cameras See and Correct the Setup

In the treatment room, two high‑resolution cameras watch the patient from different angles, much like human eyes. Using a modern object‑detection algorithm (YOLO) trained on over a thousand images, the system rapidly finds the same fiducial markers on the patient’s surface. By comparing how each marker appears in the left and right camera views, the computer reconstructs their 3D positions and creates a detailed point cloud of the surface. From three non‑collinear markers, it rebuilds a patient coordinate system and compares it with the coordinate system defined in the treatment plan. Any mismatch between the planned and actual positions is turned into translation and rotation commands—six degrees of freedom in total—for the industrial robot that supports the patient bed.
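The geometry of this comparison can be sketched in a few lines. The example below assumes the three marker positions have already been reconstructed in 3D, builds a coordinate frame from each marker triplet, and derives the rotation and translation that would carry the measured pose onto the planned one. The axis convention and all function names are assumptions made for illustration, not the paper's implementation, and a real robot would additionally need the result expressed in its own base frame.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(v):
    length = math.sqrt(dot(v, v))
    if length < 1e-9:
        raise ValueError("markers are collinear or coincident")
    return tuple(x / length for x in v)

def frame_from_markers(p0, p1, p2):
    """Assumed convention: origin at p0, x toward p1, z normal to the marker plane."""
    x_axis = normalize(sub(p1, p0))
    z_axis = normalize(cross(x_axis, sub(p2, p0)))
    y_axis = cross(z_axis, x_axis)  # completes a right-handed frame
    return p0, (x_axis, y_axis, z_axis)

def pose_correction(plan_markers, measured_markers):
    """Return (rot, trans) such that rot * p + trans maps measured points onto planned ones."""
    o_plan, axes_plan = frame_from_markers(*plan_markers)
    o_meas, axes_meas = frame_from_markers(*measured_markers)
    # rot = R_plan * R_meas^T, where each R has the frame axes as its columns.
    rot = [[sum(axes_plan[k][i] * axes_meas[k][j] for k in range(3))
            for j in range(3)] for i in range(3)]
    rotated_origin = tuple(dot(rot[i], o_meas) for i in range(3))
    return rot, sub(o_plan, rotated_origin)

def apply_correction(rot, trans, point):
    return tuple(dot(rot[i], point) + trans[i] for i in range(3))

def rotation_angle_deg(rot):
    """Total rotation angle encoded in a 3x3 rotation matrix."""
    trace = rot[0][0] + rot[1][1] + rot[2][2]
    return math.degrees(math.acos(max(-1.0, min(1.0, (trace - 1.0) / 2.0))))
```

The three translation components and the three rotation angles contained in `rot` together make up the six degrees of freedom mentioned above. The collinearity check in `normalize` reflects why the markers must not lie on a single line: without it, the plane normal is undefined.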

Testing with a Stand-In Patient

Before using the system on real patients, the team tested it with an anthropomorphic phantom—a human‑shaped model—equipped with internal and surface markers visible both in CT scans and to the cameras. They created multiple treatment setups in NeuMANTA with different beam directions and then asked the SG‑BNCT system to reproduce these plans by automatically moving the phantom into place. A laser placed at the center of the neutron beam opening acted as a stand‑in for the actual beam, so the researchers could directly measure how far the final spot on the phantom deviated from the intended target, both along the beam direction (source‑to‑skin distance) and sideways in the plane perpendicular to the beam.
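Splitting a positioning error into these two parts is a simple vector projection. The sketch below shows one way to do it, assuming the intended target, the observed laser spot, and the beam direction are all expressed in the same room coordinates; the function name and the sample numbers are illustrative and not taken from the paper.

```python
import math

def decompose_deviation(target, actual, beam_direction):
    """Split the offset (actual - target) into a signed distance along the beam
    and an unsigned distance in the plane perpendicular to it."""
    length = math.sqrt(sum(c * c for c in beam_direction))
    if length == 0.0:
        raise ValueError("beam direction must be a non-zero vector")
    unit = tuple(c / length for c in beam_direction)
    delta = tuple(a - t for a, t in zip(actual, target))
    along = sum(d * u for d, u in zip(delta, unit))
    lateral_vector = tuple(d - along * u for d, u in zip(delta, unit))
    lateral = math.sqrt(sum(c * c for c in lateral_vector))
    return along, lateral

# Made-up example in millimeters, with the beam pointing along +z.
along_mm, lateral_mm = decompose_deviation(
    target=(0.0, 0.0, 0.0),
    actual=(0.3, 0.4, 1.2),
    beam_direction=(0.0, 0.0, 1.0),
)
# along_mm is about 1.2 and lateral_mm is about 0.5
```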

How Close Did the System Get?

Across 24 experimental setups, the system’s positioning errors were typically below 1 millimeter both along the beam and sideways, with the worst single‑pass errors reaching about 2 to 3 millimeters along the beam axis. The authors traced these residual discrepancies to three main sources: the thickness of the CT image slices, small calibration uncertainties in the stereo cameras, and tiny pixel‑level inaccuracies when the software detects the markers. Importantly, the system is designed to operate in a closed loop: it repeatedly re‑measures the marker positions, updates the 3D alignment, and commands additional fine motions of the robot until the remaining error falls below a preset threshold. In these iterative tests, the final deviations consistently dropped to well under 1 millimeter in both the depth and sideways directions.
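The closed-loop idea can be illustrated with a toy simulation. In the sketch below, `align` keeps measuring and correcting until the residual falls under a threshold, and `ToyStage` stands in for the cameras and robot by executing only a fraction of each commanded move. The threshold, the 80% execution gain, and all names are assumptions made up for this example; the paper does not report them.

```python
import math
from typing import Callable, Tuple

Vector = Tuple[float, float, float]

def residual_mm(error: Vector) -> float:
    return math.sqrt(sum(c * c for c in error))

def align(measure_error: Callable[[], Vector],
          move_by: Callable[[Vector], None],
          threshold_mm: float = 0.2,
          max_iterations: int = 10) -> Tuple[int, float]:
    """Measure, correct, and repeat. Returns (moves made, final residual in mm)."""
    for moves in range(max_iterations):
        error = measure_error()
        if residual_mm(error) < threshold_mm:
            return moves, residual_mm(error)
        # Command a motion that cancels the currently measured error.
        move_by(tuple(-c for c in error))
    final = residual_mm(measure_error())
    if final < threshold_mm:
        return max_iterations, final
    raise RuntimeError("alignment did not converge")

class ToyStage:
    """Hypothetical stand-in for cameras plus robot: only `gain` of each move is executed."""

    def __init__(self, offset: Vector, gain: float = 0.8) -> None:
        self.offset = list(offset)
        self.gain = gain

    def measure_error(self) -> Vector:
        return (self.offset[0], self.offset[1], self.offset[2])

    def move_by(self, command: Vector) -> None:
        self.offset = [o + self.gain * c for o, c in zip(self.offset, command)]

stage = ToyStage(offset=(0.0, 0.0, 2.5))  # start 2.5 mm off along the beam axis
moves, final_residual = align(stage.measure_error, stage.move_by)
# With these toy numbers the loop stops after two corrective moves.
```

Because each pass re-measures the actual position, errors left over from an imperfect move are picked up and reduced on the next pass rather than accumulating.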

What This Means for Patients

For patients who may benefit from BNCT, especially those with tumors in complex or sensitive regions, this surface‑guided, robot‑assisted positioning system promises more reliable and efficient treatments. By tying the treatment planning software directly to cameras that see the actual patient and to a robot that can carry out precise motions, the method reduces dependence on manual laser alignment and human guesswork. The current version is too slow for true real‑time motion tracking, and only static tests on a phantom have been performed so far, but within those limits the results show that the technology can reach sub‑millimeter positioning accuracy. With further speed improvements and radiation‑hardening of the hardware, this approach could become a practical backbone for safer, more consistent BNCT treatments in the clinic.

Citation: Chen, J., Xie, QL., Ping, JJ. et al. Development and evaluation of surface-guided patient position system for boron neutron capture therapy. Sci Rep 16, 5818 (2026). https://doi.org/10.1038/s41598-026-35834-6

Keywords: boron neutron capture therapy, patient positioning, stereo vision, medical robotics, surface guidance