Clear Sky Science

AI anxiety and adoption intention in higher education based on an extended TAM-UTAUT and PLS-SEM analysis

Why AI Worries Matter on Campus

Universities around the world are racing to weave generative artificial intelligence (AI) into teaching and research. From tools that draft essays to systems that help design lesson plans, these technologies promise to save time and boost creativity. Yet many students and faculty feel a knot in their stomach when they think about using them. This study examines that knot—"AI anxiety"—to understand how different kinds of worry can either block or actually encourage people in higher education to adopt AI tools.

Three Kinds of Worry About AI

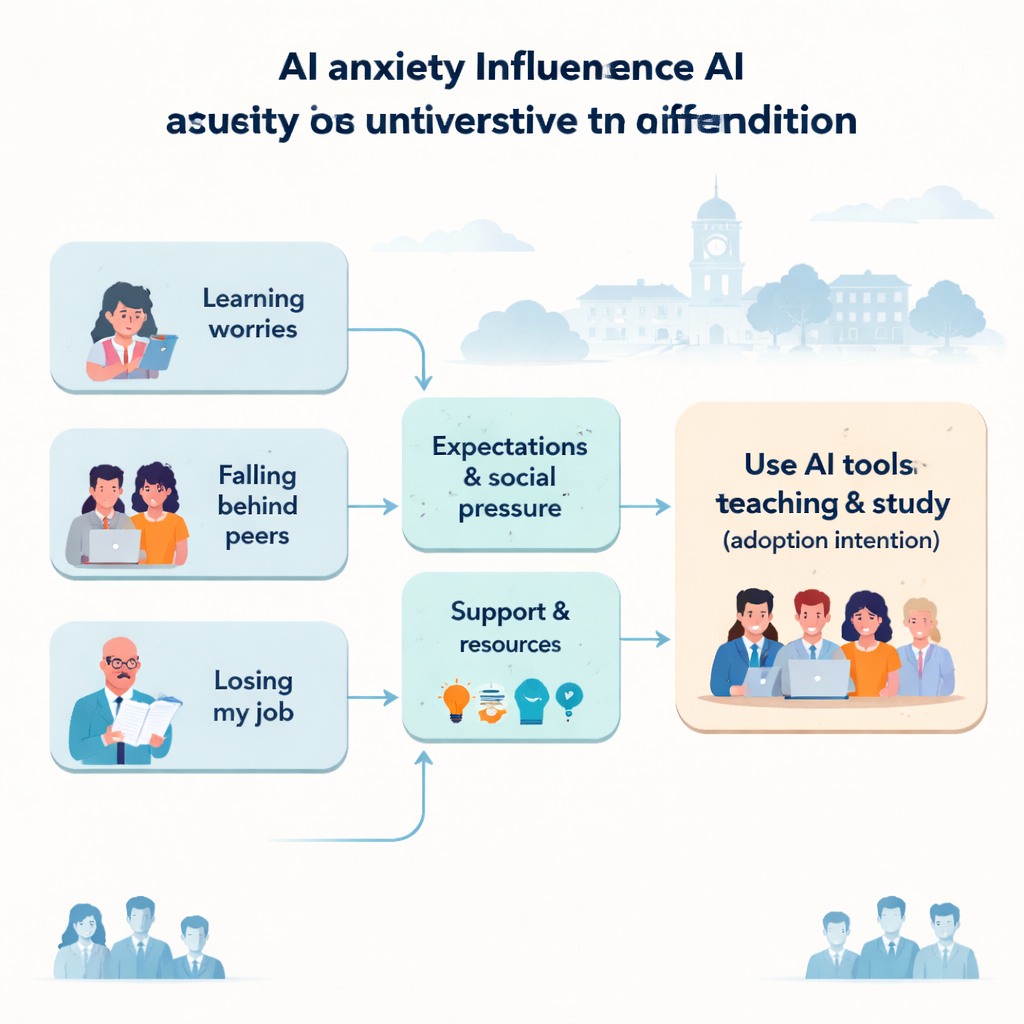

The researchers focus on three distinct forms of AI-related anxiety. The first is learning anxiety: the fear of not being able to master complex AI tools, or of being "not tech-savvy enough." The second is what they call sociotechnical blindness anxiety: a worry about becoming the person in the department who is left behind, out of the loop on new digital practices and conversations. The third is job displacement anxiety: the deeper fear that AI could replace key parts of one’s role—writing, grading, or even designing courses—and eventually make some academic jobs redundant. Rather than treating anxiety as a single, simple feeling, the authors argue that each of these has its own roots and consequences.

From Feelings to Decisions

To see how these anxieties shape real choices, the team combined two well-known theories of technology use, the Technology Acceptance Model (TAM) and the Unified Theory of Acceptance and Use of Technology (UTAUT). Both normally focus on rational beliefs: how useful a tool seems, how easy it feels to use, the social pressure to try it, and the availability of support. The researchers surveyed 407 students and faculty at three universities in Sichuan Province, China, asking about their AI worries, their expectations of generative AI, and whether they intended to use such tools in their study or teaching. Using a statistical technique called partial least squares structural equation modeling (PLS-SEM), they mapped out how emotional reactions feed into expectations about performance, effort, social influence, and support and, ultimately, into the decision to adopt AI.

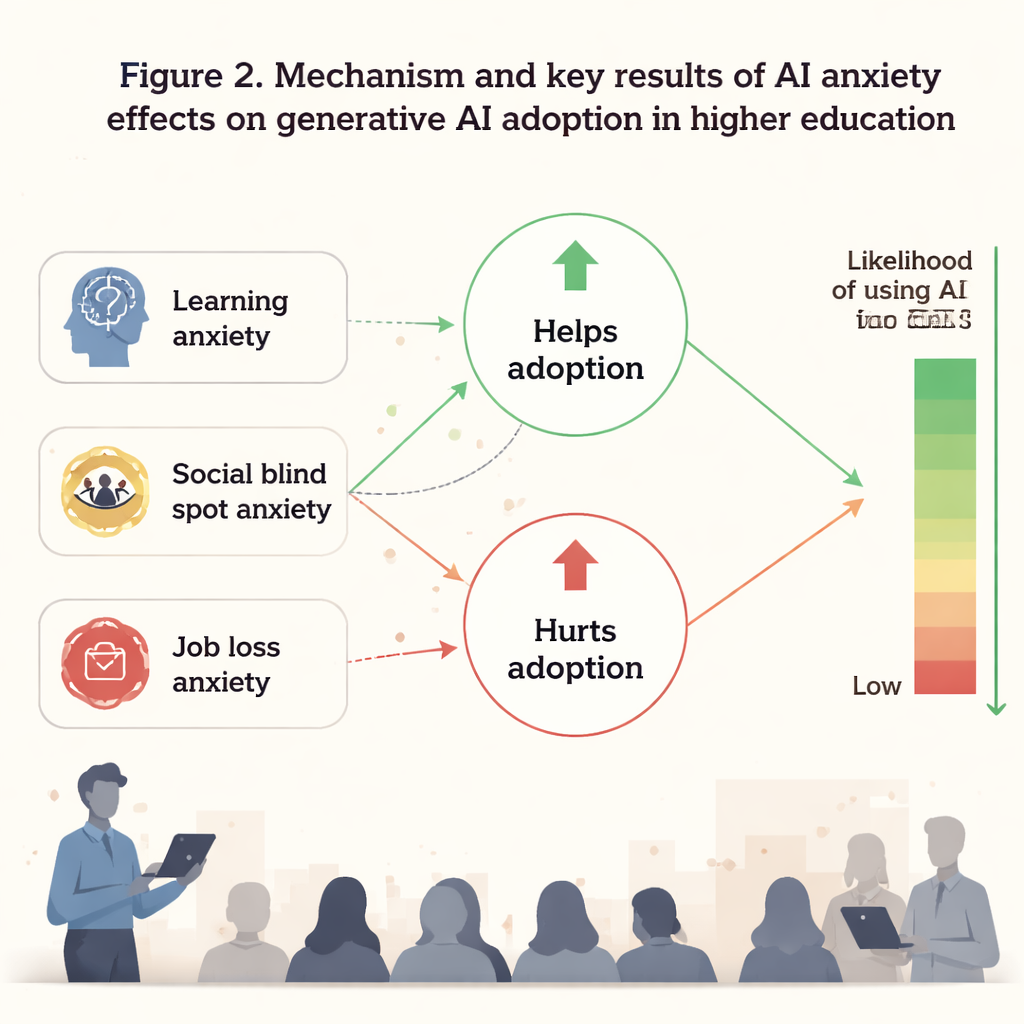
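
For readers who think in code, the shape of such a model can be sketched as a small directed graph: anxieties feed into beliefs, and beliefs feed into the intention to adopt. The sketch below is only an illustration of that structure. The construct names are hypothetical labels, and it assumes every anxiety is linked to every belief, which the study itself may not do. It holds no coefficients or results from the paper.

```python
from itertools import product

# Illustrative labels only; these are not the authors' variable names.
ANXIETIES = [
    "learning_anxiety",
    "sociotechnical_blindness_anxiety",
    "job_displacement_anxiety",
]
BELIEFS = [
    "performance_expectancy",   # how useful the tool seems
    "effort_expectancy",        # how easy it feels to use
    "social_influence",         # pressure from peers to try it
    "facilitating_conditions",  # availability of support
]
OUTCOME = "adoption_intention"


def build_paths():
    """Return directed edges: each anxiety -> each belief, each belief -> outcome.

    Assumption: a fully connected first stage, chosen for simplicity.
    """
    edges = list(product(ANXIETIES, BELIEFS))
    edges += [(belief, OUTCOME) for belief in BELIEFS]
    return edges


def indirect_routes(edges, source, target):
    """List the two-step routes source -> mediator -> target."""
    successors = {}
    for src, dst in edges:
        successors.setdefault(src, []).append(dst)
    return [
        (source, mediator, target)
        for mediator in successors.get(source, [])
        if target in successors.get(mediator, [])
    ]


edges = build_paths()
routes = indirect_routes(edges, "job_displacement_anxiety", OUTCOME)
for route in routes:
    print(" -> ".join(route))
```

Each printed route is one way a worry could reach the adoption decision through a belief. A structural equation model estimates how strong each of these links is from survey data.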

When Fear Holds People Back

Job displacement anxiety emerged as the most consistently harmful force. People who worried that AI might erode their professional value tended to rate AI as less helpful, harder to learn, less socially supported, and less backed by institutional resources. This cluster of negative beliefs strongly reduced their intention to use AI tools at all. Learning anxiety also chipped away at confidence, making AI feel more difficult and less within reach, although its effects were weaker and more dependent on other factors. In these cases, fear leads to a defensive stance: instead of seeing AI as an assistant, anxious users see it as a threat and withdraw from opportunities to experiment.

When Fear Spurs Action

Sociotechnical blindness anxiety told a more surprising story. People who were afraid of becoming the "AI-illiterate" member of their academic community sometimes reacted by leaning in rather than opting out. This kind of social fear was linked to stronger expectations that they could and should put in the effort to learn AI, greater sensitivity to how peers viewed AI use, and, in many cases, a higher intention to adopt generative tools. At the same time, it slightly reduced their belief that AI would truly improve performance. In other words, the same social anxiety that can sting a person’s sense of competence can also push them to catch up, creating a "double-edged sword" that both undercuts confidence and energizes learning.

Who Copes Better With AI Anxiety

The study also shows that context matters. Students and faculty in science and engineering fields were more likely to turn learning anxiety into a drive to experiment with AI, reflecting a culture that is already comfortable with rapid technological change. By contrast, people in the humanities and social sciences, who often think more critically about ethics and meaning, were more prone to see AI as a threat to core academic values. A person’s sense of AI self-efficacy—how confident they feel about eventually mastering these tools—also played a major role. Those with high self-efficacy could reinterpret some anxiety as a signal to improve, not a reason to give up, and were better at converting unease into constructive action.

What This Means for Universities

For higher education, the message is clear: AI anxiety is not simply a brake on innovation, nor is it something to be brushed aside. Certain worries, especially about job loss, can seriously undermine willingness to adopt generative AI, and deserve direct responses such as clear role definitions, career development support, and transparent policies. Other worries, particularly fears of falling behind peers, can be channeled into motivation if universities provide accessible training, peer learning communities, and tools that feel manageable rather than overwhelming. By recognizing and working with these different shades of anxiety—rather than assuming all fear is bad—institutions can encourage thoughtful, responsible, and more equitable use of AI across campus.

Citation: Kai, C., Ping, W. & Xiaomin, J. AI anxiety and adoption intention in higher education based on an extended TAM-UTAUT and PLS-SEM analysis. Sci Rep 16, 3672 (2026). https://doi.org/10.1038/s41598-026-35823-9

Keywords: AI anxiety, generative AI in education, technology adoption, university teaching, student attitudes