Clear Sky Science · en

Secure multi-party test case data generation through generative adversarial networks

Why smarter software tests matter

Modern factories, power grids, and industrial plants run on complex software that must talk perfectly over digital networks. A small mistake in how two systems exchange messages can lead to equipment failures, safety incidents, or cyberattacks. Yet the test data needed to find these hidden bugs are often scattered across many organizations, locked down by privacy rules and corporate secrecy. This article presents a new way for companies to work together to generate powerful test cases without ever sharing their raw, sensitive data.

The challenge of testing in a connected world

Traditional software testing tools were designed for a simpler era, when one team controlled most of the code and data. Today’s industrial networks are very different: devices from many vendors, speaking strict communication protocols, are spread across factories, utilities, and suppliers. Each organization sees only part of the traffic, and laws or contracts often forbid them from pooling their logs. As a result, test suites built in isolation can miss rare combinations of messages that only appear when systems from different owners interact. Existing privacy tools, which blur or remove sensitive fields, also fall short here: if you “generalize” protocol data too much, the messages simply stop being valid and can no longer be used for realistic testing.
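To see why heavy "generalization" can break protocol data, consider a Modbus-TCP request. This is one of the protocols the study tested, but the example itself is our own illustration. The Python sketch below checks a "Read Holding Registers" request (function code 0x03). It verifies the 7-byte MBAP header, whose length field must count the unit identifier plus the PDU, and it checks that the register quantity falls in the 1 to 125 range the Modbus specification allows. The `generalize` function is hypothetical. It mimics a crude privacy tool that rounds every 16-bit field down to a coarse bucket, and the result is a frame that no device would accept.

```python
import struct

READ_HOLDING_REGISTERS = 0x03  # Modbus function code for reading holding registers
FRAME_FORMAT = ">HHHBBHH"      # big-endian: txn id, protocol id, length, unit id, fc, start, quantity

def is_valid_read_holding_request(frame: bytes) -> bool:
    """Basic structural check of a Modbus-TCP 'Read Holding Registers' request."""
    # MBAP header (7 bytes) + function code (1) + start address (2) + quantity (2)
    if len(frame) != 12:
        return False
    _txn_id, protocol_id, length, _unit_id = struct.unpack(">HHHB", frame[:7])
    function_code, _start_addr, quantity = struct.unpack(">BHH", frame[7:12])
    if protocol_id != 0:             # Modbus always uses protocol identifier 0
        return False
    if length != len(frame) - 6:     # length = unit id byte + PDU bytes
        return False
    if function_code != READ_HOLDING_REGISTERS:
        return False
    return 1 <= quantity <= 125      # spec limit for a single register read

def generalize(frame: bytes, bucket: int = 256) -> bytes:
    """Hypothetical 'generalization': round each 16-bit field down to a coarse bucket."""
    fields = list(struct.unpack(FRAME_FORMAT, frame))
    for i in (0, 1, 2, 5, 6):        # txn id, protocol id, length, start, quantity
        fields[i] = (fields[i] // bucket) * bucket
    return struct.pack(FRAME_FORMAT, *fields)

# A request for 10 registers starting at address 100 on unit 1.
example_request = struct.pack(FRAME_FORMAT, 1, 0, 6, 1, READ_HOLDING_REGISTERS, 100, 10)
print(is_valid_read_holding_request(example_request))              # True
print(is_valid_read_holding_request(generalize(example_request)))  # False
```

After generalization, the length field becomes 0 and the quantity becomes 0, so the "anonymized" message is no longer a valid request and is useless as realistic test input.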

A collaborative but privacy-first testing framework

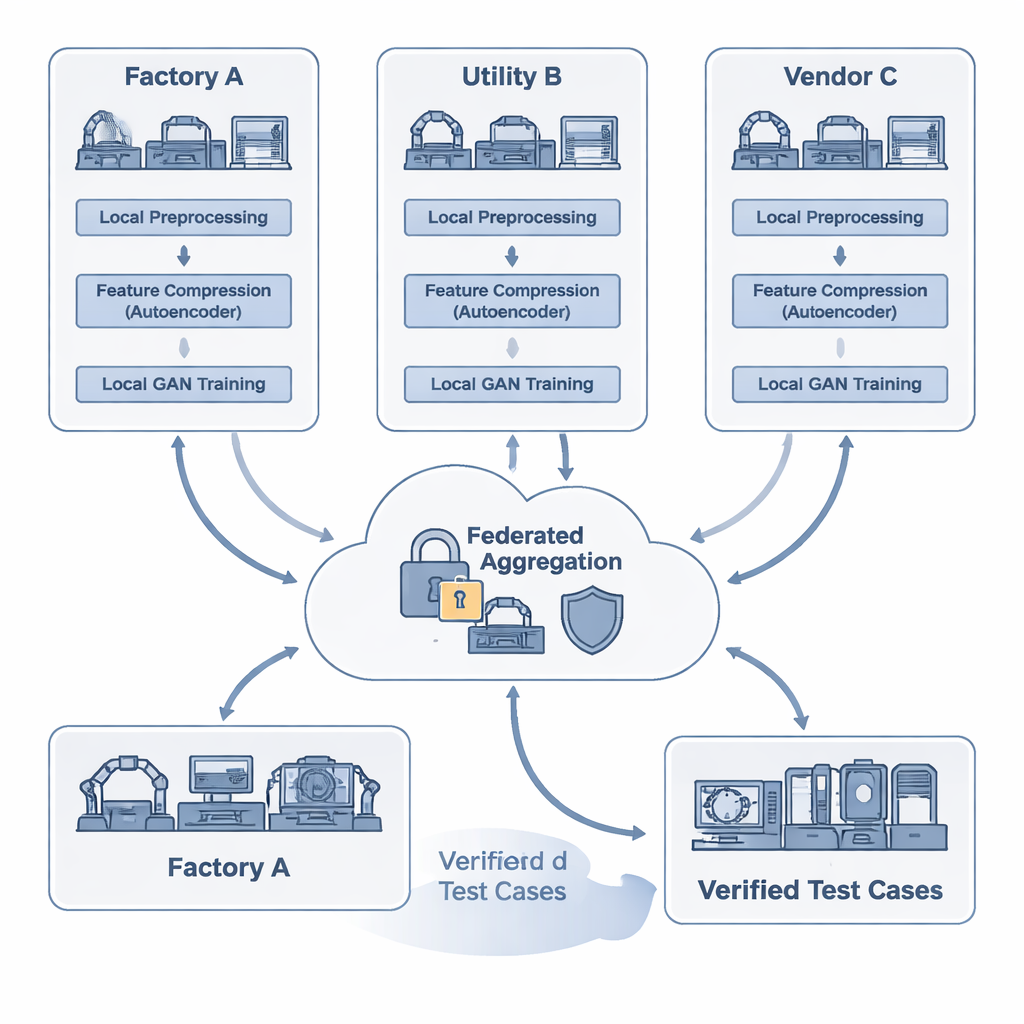

The researchers propose FAT-CG, a framework that lets multiple parties jointly train a test-data generator while keeping their detailed protocol traces secret. At the local level, each participant cleans and anonymizes their data, then feeds it into an autoencoder, a type of neural network that compresses messages into compact numerical summaries. These summaries are rich enough to preserve the grammar and structure of industrial protocols, but no longer expose raw addresses or proprietary values. Instead of shipping logs, organizations share only encrypted model updates. A central coordinator uses special cryptography (homomorphic encryption) and carefully added noise (differential privacy) to combine these updates into a stronger shared model, without being able to reconstruct any participant’s original traffic.
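The aggregation step can be made concrete with a minimal Python sketch. It rests on several assumptions of our own, because the summary does not specify the scheme. The homomorphic encryption is Paillier, with a toy key built from two Mersenne primes that is far too small for real use. Model updates are plain lists of floats, encoded as fixed-point integers. Differential privacy is applied in the spirit of DP-FedAvg: each client clips its update to a maximum norm, and Gaussian noise is added to the averaged result. The names `client_encrypt`, `aggregate`, and `dp_average` are hypothetical. For brevity, one key pair is used and only the aggregate is ever decrypted. A real deployment would keep the secret key away from the coordinator, for example through threshold decryption.

```python
import math
import random
import secrets

# Toy Paillier key (assumption: Paillier with g = n + 1). Insecure, for illustration only.
P, Q = 2**31 - 1, 2**61 - 1
N, N2 = P * Q, (P * Q) ** 2
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(p - 1, q - 1)
MU = pow(LAM, -1, N)                              # modular inverse (Python 3.8+)
SCALE = 10**6                                     # fixed-point scale for floats

def encrypt(m: int) -> int:
    r = secrets.randbelow(N - 1) + 1
    while math.gcd(r, N) != 1:
        r = secrets.randbelow(N - 1) + 1
    return pow(N + 1, m % N, N2) * pow(r, N, N2) % N2

def decrypt(c: int) -> int:
    m = (pow(c, LAM, N2) - 1) // N * MU % N
    return m - N if m > N // 2 else m             # map back to signed integers

def clip(update, clip_norm):
    """Scale an update so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    factor = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * factor for x in update]

def client_encrypt(update, clip_norm):
    """Client side: clip, encode as fixed-point integers, encrypt each value."""
    return [encrypt(round(x * SCALE)) for x in clip(update, clip_norm)]

def aggregate(encrypted_updates):
    """Coordinator side: multiplying Paillier ciphertexts adds the plaintexts."""
    total = encrypted_updates[0]
    for enc in encrypted_updates[1:]:
        total = [a * b % N2 for a, b in zip(total, enc)]
    return total

def dp_average(encrypted_sum, n_clients, clip_norm, noise_multiplier, rng=random):
    """Decrypt only the sum, average it, and add Gaussian noise scaled to the clip norm."""
    sigma = noise_multiplier * clip_norm / n_clients
    mean = [decrypt(c) / SCALE / n_clients for c in encrypted_sum]
    return [x + rng.gauss(0.0, sigma) for x in mean]

updates = [[0.5, -0.2, 0.1], [3.0, 4.0, 0.0], [-0.1, 0.0, 0.3]]
encrypted = [client_encrypt(u, clip_norm=1.0) for u in updates]
noisy_mean = dp_average(aggregate(encrypted), len(updates), clip_norm=1.0, noise_multiplier=1.0)
```

Clipping bounds how much any one participant can shift the average, and that bound is what lets the noise level be tied to a privacy guarantee. The coordinator only ever multiplies ciphertexts, so in this sketch it never handles an individual client's plaintext update.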

Teaching machines to craft powerful test cases

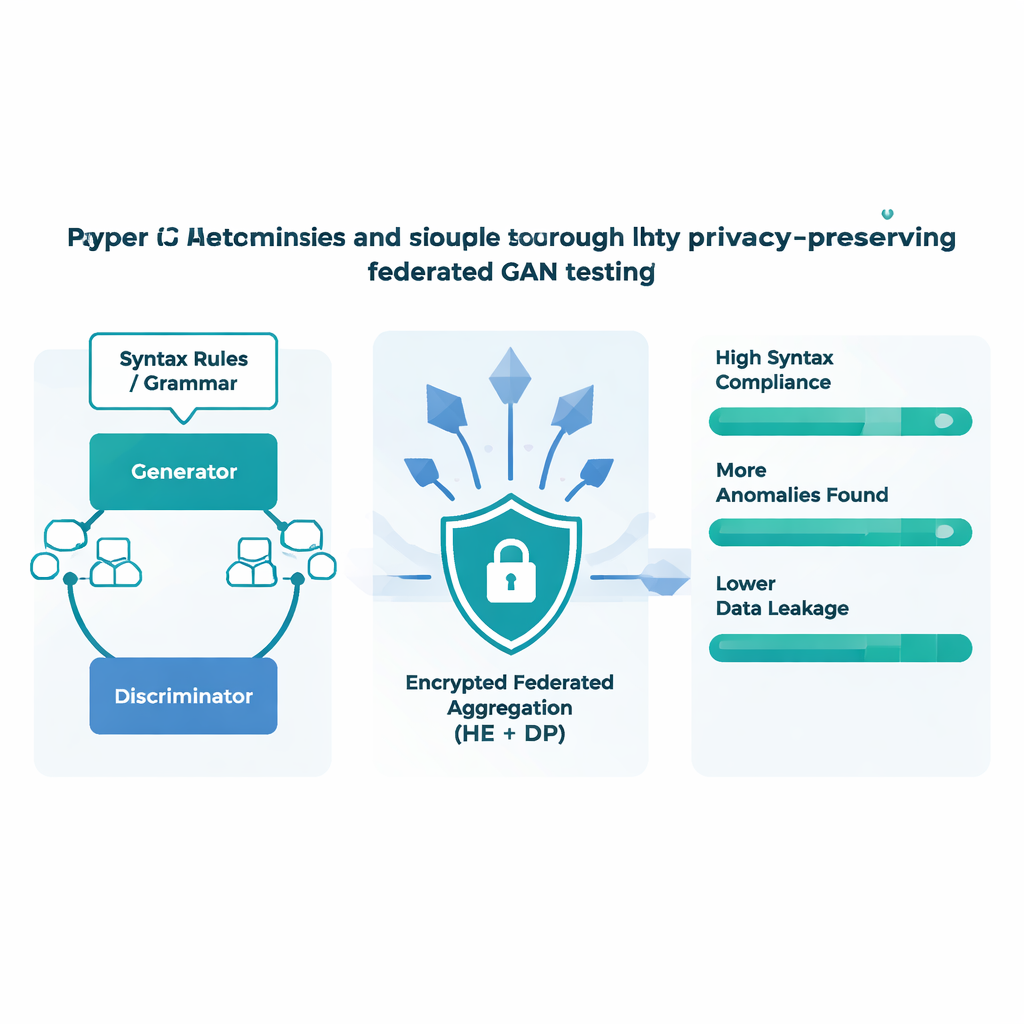

At the heart of FAT-CG is a Generative Adversarial Network (GAN), a pair of neural networks that compete with each other. One network, the generator, tries to produce new protocol messages; the other, the discriminator, tries to tell real messages from fake ones. Over time, this contest pushes the generator to learn subtle patterns in how valid messages are structured. FAT-CG adds another twist: formal descriptions of protocol rules are used to check every generated message, and violations are penalized during training. This keeps the synthetic traffic both realistic and diverse. The system runs in a loop: once messages are generated, they are fired at simulated industrial devices in a sandbox. Any cases that trigger crashes, memory leaks, or odd responses are fed back into the training process, nudging the generator toward the most promising corners of the search space.
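Two pieces of this loop can be sketched in Python without a full neural network: the rule-violation penalty added to the generator's objective, and the pool that collects anomaly-triggering cases for replay. Everything here is an assumption for illustration. The rule set is a hypothetical, simplified stand-in for formal protocol descriptions, written for Modbus-TCP read-registers requests. Generated messages are dicts of already-parsed fields. Discriminator scores are probabilities that a message is real. The outcome labels stand in for what a sandbox might report. The adversarial term is the standard non-saturating GAN generator loss, -mean(log D(G(z))). Note that a raw violation count is not differentiable, so real training would need a differentiable surrogate or a reinforcement-style gradient estimator. This sketch only computes the loss value.

```python
import math
from dataclasses import dataclass, field

# Hypothetical protocol rules, each a predicate over parsed message fields.
RULES = {
    "protocol_id_is_zero": lambda m: m["protocol_id"] == 0,
    "read_register_function": lambda m: m["function_code"] in {0x03, 0x04},
    "quantity_in_range": lambda m: 1 <= m["quantity"] <= 125,
    "length_matches_pdu": lambda m: m["length"] == 6,
}

def violations(msg: dict) -> int:
    """Count how many protocol rules a generated message breaks."""
    return sum(not rule(msg) for rule in RULES.values())

def generator_loss(d_scores, messages, penalty_weight=1.0):
    """Non-saturating GAN generator loss plus a penalty for rule violations."""
    eps = 1e-12  # avoid log(0)
    adversarial = -sum(math.log(max(s, eps)) for s in d_scores) / len(d_scores)
    penalty = sum(violations(m) for m in messages) / len(messages)
    return adversarial + penalty_weight * penalty

@dataclass
class SeedPool:
    """Keeps generated cases that provoked anomalies so they can be fed back into training."""
    interesting: list = field(default_factory=list)

    def update(self, messages, outcomes):
        for msg, outcome in zip(messages, outcomes):
            if outcome in {"crash", "memory_leak", "unexpected_response"}:
                self.interesting.append(msg)

good = {"protocol_id": 0, "function_code": 0x03, "quantity": 10, "length": 6}
bad = {"protocol_id": 1, "function_code": 0x99, "quantity": 0, "length": 6}
pool = SeedPool()
pool.update([good, bad], ["ok", "crash"])  # outcomes from a hypothetical sandbox run
```

Weighting the penalty trades off validity against exploration. A larger `penalty_weight` keeps messages closer to the grammar, while a smaller one lets the generator probe malformed inputs that may expose parser bugs.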

Privacy, speed, and bug-finding in real tests

The team tested FAT-CG on several common industrial protocols, including Modbus-TCP and OPC UA, using a realistic setup with multiple edge devices and a central server. Compared with existing fuzzing tools and simpler federated learning methods, the new approach produced test messages that obeyed protocol rules more than 90% of the time and uncovered nearly three times as many anomalies per thousand tests as those baselines. At the same time, its layered defenses sharply reduced the risk that an attacker could reconstruct training data from shared model updates. By compressing protocol features into small, structured codes before encryption, the system also cut communication overhead by almost a factor of thirty, shortening training rounds and making it practical for bandwidth-limited industrial networks.
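A short calculation shows why compressing before encryption pays off. It assumes Paillier-style encryption, which the summary does not name. In Paillier, each ciphertext lives modulo n², so it takes about twice the key length in bits. With a 2048-bit key, one encrypted value costs roughly 512 bytes, compared with 4 bytes for a raw 32-bit float. The value counts in the usage lines are hypothetical and are not figures from the study.

```python
def ciphertext_bytes(num_values: int, key_bits: int = 2048) -> int:
    """Bytes to send num_values Paillier-style ciphertexts (about 2 * key_bits bits each)."""
    return num_values * (2 * key_bits // 8)

def expansion_vs_float32(key_bits: int = 2048) -> float:
    """How many times larger one ciphertext is than a 4-byte float."""
    return ciphertext_bytes(1, key_bits) / 4

def reduction_factor(full_values: int, compressed_values: int, key_bits: int = 2048) -> float:
    """Traffic saved by encrypting a compressed code instead of the full update."""
    return ciphertext_bytes(full_values, key_bits) / ciphertext_bytes(compressed_values, key_bits)

# Hypothetical sizes: a 1,000,000-value update versus a 40,000-value compressed code.
print(expansion_vs_float32())                 # 128.0
print(reduction_factor(1_000_000, 40_000))    # 25.0
```

Because every encrypted value carries the same fixed cost, the savings equal the ratio of values encrypted, whatever the key size. That is why shrinking what gets encrypted matters so much on bandwidth-limited links.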

What this means for critical systems

In everyday terms, this work shows that companies running critical infrastructure do not have to choose between keeping their data private and testing their systems thoroughly. FAT-CG offers a way for many parties to pool their knowledge about how real networks behave, without ever handing over sensitive logs. The result is a shared test generator that is better at speaking the “language” of industrial devices and better at provoking dangerous edge cases—exactly the kind of tool needed to catch vulnerabilities before they cause outages or accidents. Although the study focuses on industrial protocols, the same ideas could help in other sensitive areas, such as healthcare or finance, where organizations must collaborate on safety and reliability without sacrificing confidentiality.

Citation: Wang, Z., Zhao, L., Meng, F. et al. Secure multi-party test case data generation through generative adversarial networks. Sci Rep 16, 5085 (2026). https://doi.org/10.1038/s41598-026-35773-2

Keywords: industrial software testing, federated learning, generative adversarial networks, privacy-preserving AI, network protocol fuzzing