
Infrared and visible image fusion via visual enhancement and semantic coupling

Sharper Vision from Day and Night Cameras

Modern cars, drones, and security systems often carry two kinds of eyes: a regular camera that sees color and texture, and an infrared camera that sees heat. Each has strengths and weaknesses, and combining them into a single clear picture is surprisingly hard. This paper presents a new way to fuse these two views into one image that is not only easier to look at, but also easier for computer programs to understand.

Why Two Eyes Are Better Than One

Visible-light cameras capture crisp details like road markings, building edges, and clothing, but they struggle at night, in fog, or when objects blend into the background. Infrared cameras do the opposite: they highlight warm objects such as people and vehicles even in darkness, yet their pictures look blurry and lack fine detail. Fusing these two views into one “best of both worlds” image can help in tasks ranging from pedestrian detection in driver-assistance systems to surveillance and search-and-rescue. However, many existing fusion methods focus only on surface features—bright spots from infrared and textures from visible images—while neglecting the deeper, scene-level meaning that matters for smart machines.

A Smarter Way to Blend Images

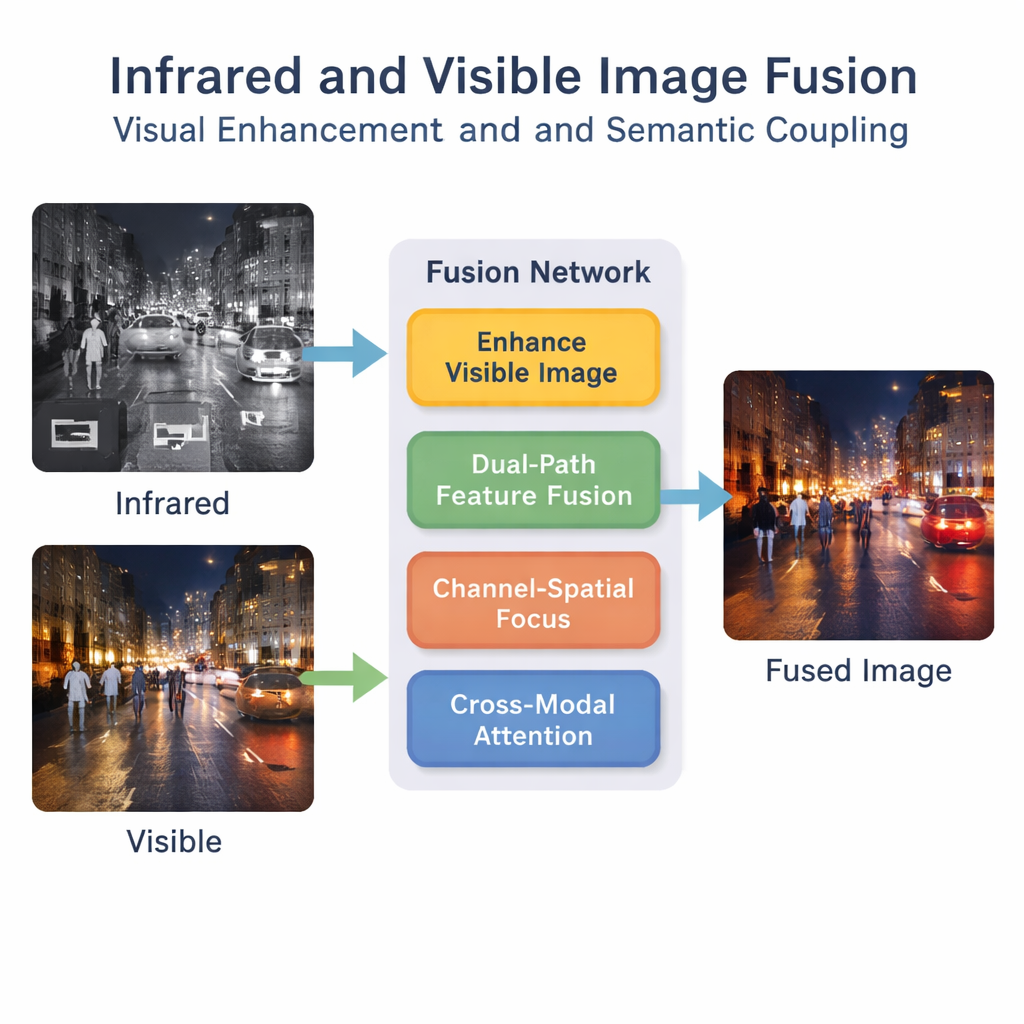

The authors propose a deep-learning framework that treats fusion as more than a simple overlay. First, a special enhancement step brightens and balances the visible image, especially in low-light scenes, so that valuable details are not lost before fusion even begins. Then a dual-path network processes both infrared and visible inputs in parallel. One path concentrates on local patterns such as edges and textures, while the other looks at the broader context of the scene. By combining these paths, the system produces a richer internal description of what is happening in the images.
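To make the idea concrete, here is a minimal sketch of the two ingredients described above: brightening a dark visible image, then describing it both locally and globally. Everything in it is an assumption made for illustration. Gamma correction stands in for the paper's enhancement step, simple pixel differences stand in for the local path, mean brightness stands in for the context path, and the function names are hypothetical. The authors' actual network learns these descriptions from data.

```python
def enhance_low_light(image, gamma=0.5):
    """Brighten a grayscale image with values in [0, 1] using gamma correction.

    Assumption: gamma correction is only a stand-in for illustration; it is
    not the enhancement module described in the paper.
    """
    if gamma <= 0:
        raise ValueError("gamma must be positive")
    # Clamp each pixel to [0, 1], then raise it to the power gamma.
    # With gamma < 1, dark pixels are lifted more than bright ones.
    return [[min(1.0, max(0.0, p)) ** gamma for p in row] for row in image]


def local_detail(image):
    """Local path (illustrative): edge strength from neighbouring-pixel differences."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # At the right and bottom borders the neighbour is the pixel itself.
            dx = image[y][min(x + 1, w - 1)] - image[y][x]
            dy = image[min(y + 1, h - 1)][x] - image[y][x]
            out[y][x] = abs(dx) + abs(dy)
    return out


def global_context(image):
    """Context path (illustrative): one number summarising the scene, its mean brightness."""
    pixels = [p for row in image for p in row]
    return sum(pixels) / len(pixels)


# A tiny 2x2 "night" image: mostly dark, with one bright pixel.
dark = [[0.04, 0.16], [0.0, 1.0]]
enhanced = enhance_low_light(dark)
edges = local_detail(enhanced)
brightness = global_context(enhanced)
```

In this toy version the dark pixels rise from 0.04 and 0.16 to about 0.2 and 0.4, so detail that was nearly invisible becomes available to the later stages.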

Teaching the Network What to Pay Attention To

Simply extracting many features is not enough; the network must learn which ones are important. A “channel–spatial” module helps the model highlight critical regions and types of information, such as pedestrians or bright headlights, while downplaying less useful background clutter. On top of this, a bimodal interactive attention mechanism encourages the infrared and visible streams to talk to each other. It learns how heat signatures and visual textures line up across the scene, capturing higher-level concepts like “this bright blob in infrared corresponds to that person in the visible image.” This semantic coupling helps the fused image remain logically consistent rather than just visually blended.
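The channel and spatial weighting described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the authors' module: the weights here are computed directly from averages passed through a sigmoid, whereas a trained network would use learned layers, and all function names are hypothetical. The bimodal interactive attention between the infrared and visible streams is not shown.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def channel_weights(features):
    """One weight per channel, based on that channel's average activation.

    Assumption: no learned layers; a real module would learn this mapping.
    """
    weights = []
    for channel in features:
        values = [v for row in channel for v in row]
        weights.append(sigmoid(sum(values) / len(values)))
    return weights


def spatial_weights(features):
    """One weight per pixel, based on the average across channels at that pixel."""
    n = len(features)
    h, w = len(features[0]), len(features[0][0])
    return [
        [sigmoid(sum(ch[y][x] for ch in features) / n) for x in range(w)]
        for y in range(h)
    ]


def channel_spatial_attention(features):
    """Scale features first by channel importance, then by location importance."""
    cw = channel_weights(features)
    scaled = [[[v * w for v in row] for row in ch] for ch, w in zip(features, cw)]
    sw = spatial_weights(scaled)
    return [
        [[ch[y][x] * sw[y][x] for x in range(len(ch[0]))] for y in range(len(ch))]
        for ch in scaled
    ]


# Two 2x2 feature channels: the first responds strongly at one pixel
# (say, a pedestrian), the second is silent everywhere.
features = [
    [[4.0, 0.0], [0.0, 0.0]],
    [[0.0, 0.0], [0.0, 0.0]],
]
attended = channel_spatial_attention(features)
```

The active channel receives a larger weight than the silent one, and the location with the strong response receives a larger weight than its empty neighbours, which is the "pay attention here" behaviour the paragraph describes.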

Putting the Method to the Test

To make the fused images realistic as well as attractive, the authors add a discriminator network like those used in generative adversarial networks. This extra network learns to distinguish real visible images from fused ones, which pushes the fusion process to produce outputs that look natural to both humans and machines. The method is trained and tested on three challenging collections of infrared–visible image pairs, covering daytime and nighttime roads and military-style scenes. Across a range of standard quality measures, the new approach generally outperforms ten existing fusion techniques, producing images with sharper edges, better contrast, and more informative content.
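This summary does not name the quality measures used. As an assumption for illustration, the sketch below computes two measures that are common in image-fusion evaluation: entropy, which rises as an image uses a wider variety of gray levels, and average gradient, which rises as edges get sharper. The function names are hypothetical, and the paper's own metrics may differ.

```python
import math
from collections import Counter


def entropy(image):
    """Shannon entropy (in bits) of the gray levels in an integer-valued image."""
    pixels = [p for row in image for p in row]
    n = len(pixels)
    counts = Counter(pixels)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def average_gradient(image):
    """Mean edge strength; higher values suggest sharper detail.

    Requires an image of at least 2x2 pixels.
    """
    h, w = len(image), len(image[0])
    if h < 2 or w < 2:
        raise ValueError("image must be at least 2x2")
    total = 0.0
    for y in range(h - 1):
        for x in range(w - 1):
            dx = image[y][x + 1] - image[y][x]
            dy = image[y + 1][x] - image[y][x]
            total += math.sqrt((dx * dx + dy * dy) / 2.0)
    return total / ((h - 1) * (w - 1))


# A featureless image scores zero on both measures; a high-contrast
# checkerboard scores higher on both.
flat = [[10, 10], [10, 10]]
checker = [[0, 255], [255, 0]]
scores = {
    "flat": (entropy(flat), average_gradient(flat)),
    "checker": (entropy(checker), average_gradient(checker)),
}
```

Measures like these capture only part of what makes a fused image useful, which is why the study also tests the images on a downstream detection task.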

Better Images for Safer Machines

Beyond visual quality, the authors ask a practical question: do these fused images help computers make better decisions? Using a popular object-detection system to find pedestrians, they show that their fused images improve detection accuracy compared with both single-sensor images and earlier fusion methods. In everyday terms, the technique creates pictures that are easier for both humans and algorithms to interpret, especially in difficult conditions like night driving. While the system still needs tuning for real-time use on resource-limited devices, it offers a promising step toward safer, more reliable vision in automated vehicles, surveillance, and other technologies that must see clearly when it matters most.

Citation: Yang, Y., Li, Y., Li, J. et al. Infrared and visible image fusion via visual enhancement and semantic coupling. Sci Rep 16, 5666 (2026). https://doi.org/10.1038/s41598-026-35763-4

Keywords: image fusion, infrared imaging, low-light vision, deep learning, object detection