Clear Sky Science

Enhanced YOLO12 with spatial pyramid pooling for real-time cotton insect detection

Why spotting tiny insects matters

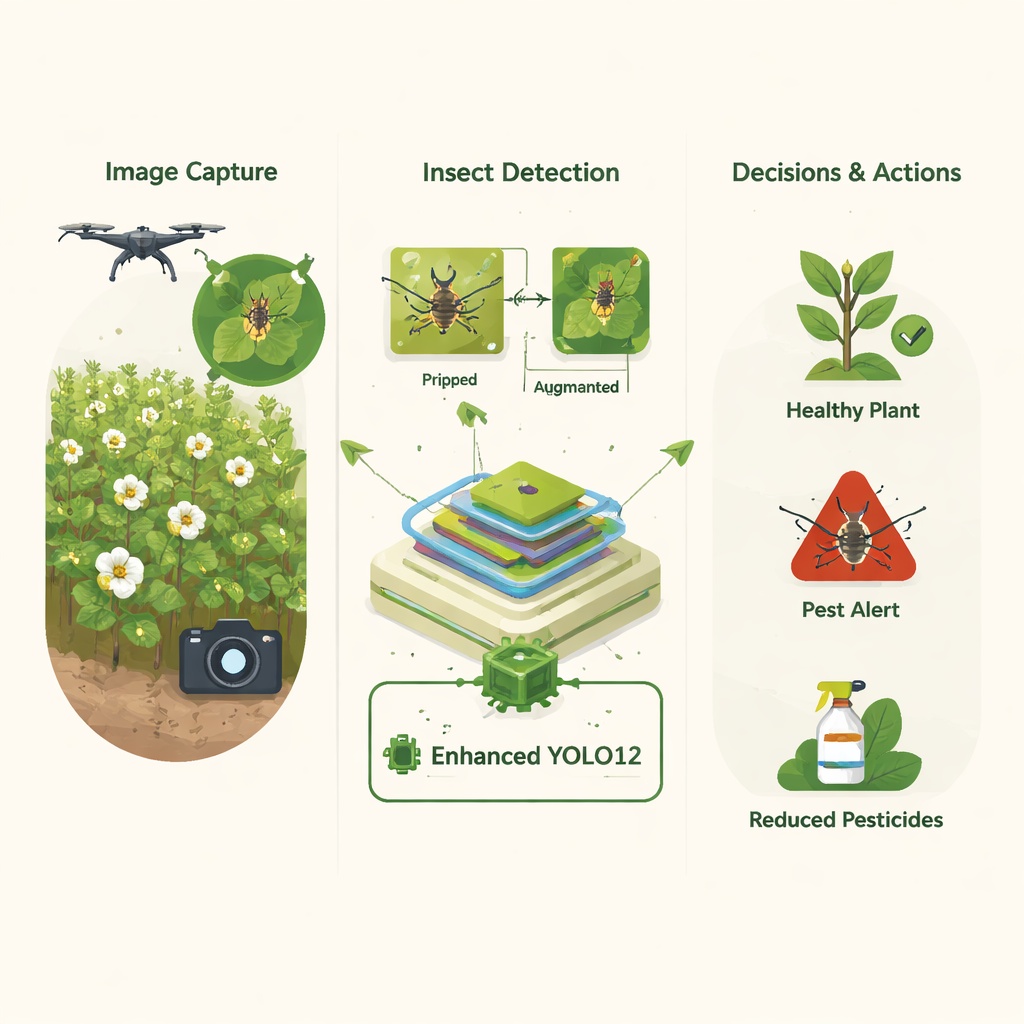

Cotton may look like a simple plant, but its global role is enormous: it clothes billions of people, supports millions of jobs, and feeds industries from cooking oil to cosmetics. Yet whole fields of cotton can be quietly undermined by insects that are barely larger than a grain of rice. Farmers often rely on frequent field walks and heavy pesticide use to keep these pests at bay—methods that are time‑consuming, costly, and hard on the environment. This study explores how a new form of artificial intelligence can watch cotton fields in real time, automatically spotting harmful insects on leaves so that treatments are better targeted and far less wasteful.

From hand scouting to smart cameras

Today, many farmers or field workers still walk through cotton rows, visually checking leaves and flowers for pests. That approach is limited by human fatigue, uneven coverage, and changing light and weather conditions. Broad‑spectrum pesticides, often sprayed on a schedule rather than in response to actual need, can kill beneficial insects, contaminate soil and water, and raise production costs. The authors argue that a more sustainable way forward is to let computers “see” insects directly in field photos, giving farmers an automated early‑warning system that can focus controls precisely where pests are present.

Teaching computers to recognize tiny bugs

To build such a system, the researchers turned to deep learning, a branch of AI that excels at recognizing patterns in images. They used a family of models called YOLO (“You Only Look Once”), which can scan an image and draw boxes around objects in a fraction of a second. Starting from the latest YOLO12 model, they assembled a new, enhanced version tailored for cotton pests. First, they curated and refined a high‑quality open dataset of 3,225 photos from real cotton fields, covering 13 common insect types such as ladybugs, stinkbugs, and bollworms. They converted the original labels into a standard format the model can read and carefully balanced the number of images per insect class so the AI would not become biased toward the most common species.
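The label conversion mentioned above can be illustrated with a minimal sketch. It assumes the original annotations store each box as pixel corner coordinates (`xmin, ymin, xmax, ymax`), which the article does not confirm. The target is the plain-text layout commonly used by YOLO tools: one line per object holding a class index followed by the box center, width, and height, each normalized by image size. The function names are hypothetical.

```python
def corners_to_yolo(xmin, ymin, xmax, ymax, img_w, img_h):
    """Convert a pixel-space corner box to normalized (cx, cy, w, h)."""
    cx = (xmin + xmax) / 2 / img_w   # box center, as a fraction of image width
    cy = (ymin + ymax) / 2 / img_h   # box center, as a fraction of image height
    w = (xmax - xmin) / img_w        # box width, as a fraction of image width
    h = (ymax - ymin) / img_h        # box height, as a fraction of image height
    return cx, cy, w, h


def yolo_label_line(class_id, box, img_w, img_h):
    """Format one object as a YOLO-style label line (assumed input: corner box)."""
    cx, cy, w, h = corners_to_yolo(*box, img_w, img_h)
    return f"{class_id} {cx:.6f} {cy:.6f} {w:.6f} {h:.6f}"


if __name__ == "__main__":
    # Hypothetical example: class index 3, one box in a 400x200 image.
    print(yolo_label_line(3, (100, 50, 300, 150), 400, 200))
```

Normalizing by image size keeps the labels valid when photos are resized for training, which is one reason this layout is widely used.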

Making small insects stand out

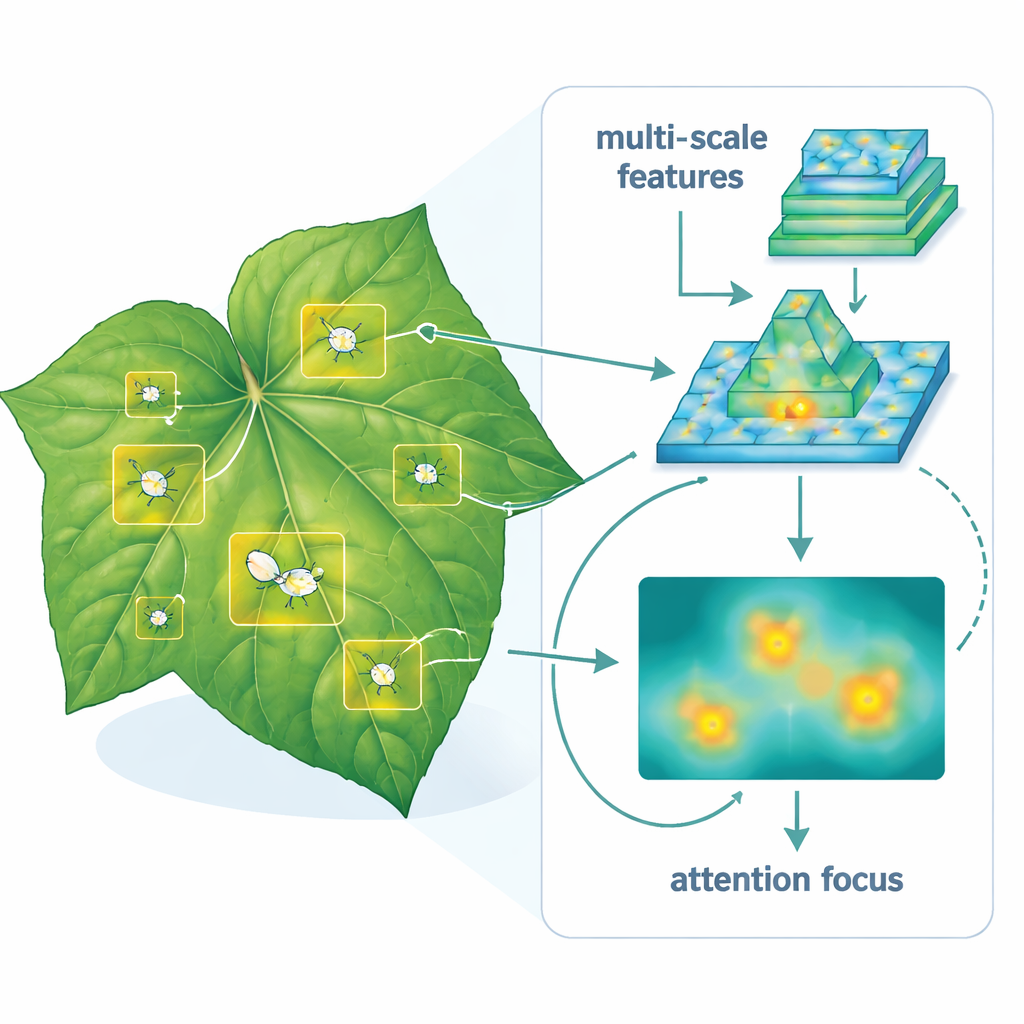

Detecting insects on leaves is much harder than spotting large objects like cars or people. The bugs are tiny, often camouflaged, and can appear at many scales and angles. To handle this, the team improved the YOLO12 architecture in several ways. They added specialized building blocks that help the model capture fine details while still understanding the overall scene. A “spatial pyramid pooling” module lets the network look at the same region at multiple scales simultaneously, which is crucial for seeing both very small and slightly larger insects in the same image. An attention mechanism then helps the model emphasize the most informative parts of the picture—subtle shapes, colors, and textures that distinguish one insect from another—while ignoring background clutter.
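To make the multi-scale idea concrete, here is a small pure-Python sketch of spatial pyramid pooling in the style used by earlier YOLO versions: the same feature map is max-pooled with several window sizes (stride 1, output kept the same size) and the results are stacked next to the original. The window sizes 5, 9, and 13 are an assumption borrowed from those earlier models, and the article does not say which sizes or which pooling variant the authors chose. A real network would do this on multi-channel tensors in a deep learning framework.

```python
def max_pool_same(fmap, k):
    """Stride-1 max pooling with a k x k window; output has the input's size.

    Positions outside the map are simply ignored, which matches padding
    with negative infinity.
    """
    h, w = len(fmap), len(fmap[0])
    r = k // 2
    out = []
    for i in range(h):
        row = []
        for j in range(w):
            window = [
                fmap[y][x]
                for y in range(max(0, i - r), min(h, i + r + 1))
                for x in range(max(0, j - r), min(w, j + r + 1))
            ]
            row.append(max(window))
        out.append(row)
    return out


def spatial_pyramid_pool(fmap, kernel_sizes=(5, 9, 13)):
    """Return the original map plus one pooled copy per window size."""
    return [fmap] + [max_pool_same(fmap, k) for k in kernel_sizes]


if __name__ == "__main__":
    # A 7x7 map with a single strong response in the middle (a "tiny insect").
    fmap = [[0.0] * 7 for _ in range(7)]
    fmap[3][3] = 1.0
    for k, channel in zip(("input", 5, 9, 13), spatial_pyramid_pool(fmap)):
        print(k, sum(sum(row) for row in channel))
```

Each pooled copy spreads the single response over a wider neighborhood, so later layers see the same insect described at several context sizes at once.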

Putting the models to the test

The authors did not simply propose one model; they built and compared six different YOLO‑based designs, including standard YOLO11 and YOLO12 and several custom variants. All were trained and tested on the same cotton insect dataset to ensure a fair comparison. The standout model, called Enhanced Hybrid YOLO12, achieved very high scores on standard measures of detection quality, which balance how often a model correctly finds insects against how accurately it draws their bounding boxes. Compared with the original YOLO12, it improved both overall detection quality and consistency across many test conditions, while still running fast enough for real‑time use on modern graphics hardware. Although the enhanced model is somewhat heavier computationally, the authors show that its gain in reliability is especially valuable in real‑world farming.
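The article does not name the specific measures used. As an illustration only, most detection metrics build on intersection over union (IoU), which scores how well a predicted box overlaps the true box. The sketch below assumes boxes given as `(xmin, ymin, xmax, ymax)` and uses a hypothetical function name.

```python
def iou(box_a, box_b):
    """Intersection over union of two (xmin, ymin, xmax, ymax) boxes."""
    # Corners of the overlapping rectangle, if any.
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)

    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


if __name__ == "__main__":
    predicted = (1, 1, 3, 3)
    ground_truth = (0, 0, 2, 2)
    print(iou(predicted, ground_truth))
```

A prediction is typically counted as correct only when its IoU with a labeled box exceeds a chosen threshold, so one score reflects both whether the insect was found and how tightly the box fits it.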

What this means for farmers and the environment

In everyday terms, the study shows that a camera plus a trained AI model can act like a tireless digital scout, scanning cotton leaves for pests day and night. Because the Enhanced Hybrid YOLO12 system is better at avoiding false alarms than earlier versions, it can help farmers spray only where and when insects are truly present, cutting chemical use, saving money, and reducing harm to beneficial species and surrounding ecosystems. While further work is needed to run the model on lower‑cost devices and to extend it to more insect species and growing regions, this research points toward a future where precision agriculture turns pest control from guesswork into data‑driven action.

Citation: Saif, D., Askr, H., Sarhan, A.M. et al. Enhanced YOLO12 with spatial pyramid pooling for real-time cotton insect detection. Sci Rep 16, 4806 (2026). https://doi.org/10.1038/s41598-026-35747-4

Keywords: cotton pests, precision agriculture, deep learning, object detection, sustainable farming