Clear Sky Science

Hierarchical contextual information aggregation for polyp segmentation

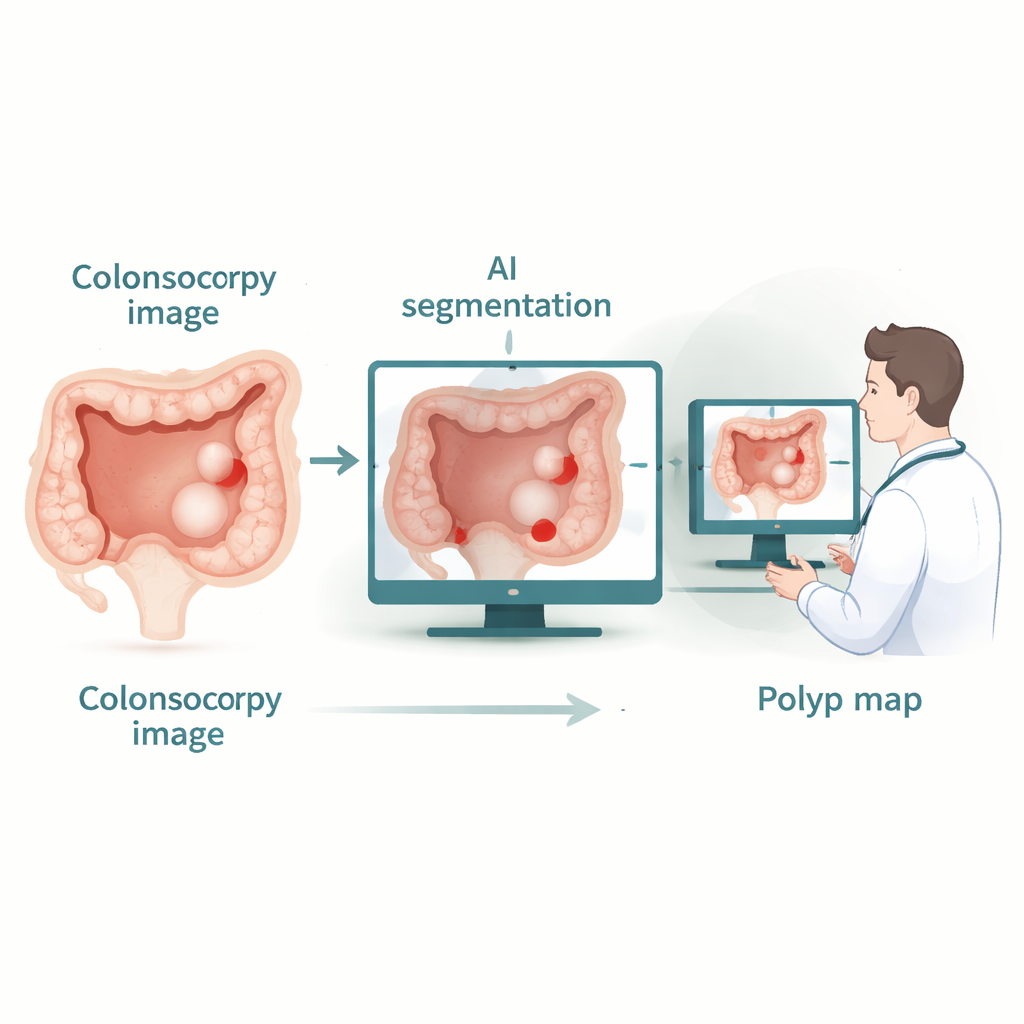

Why tiny growths in the colon matter

Colorectal cancer often begins as small, seemingly harmless bumps called polyps on the lining of the large intestine. During a colonoscopy, doctors try to spot and remove these growths before they turn dangerous. But humans get tired, the view is imperfect, and studies show that up to a quarter of polyps can be missed. This paper presents a new artificial intelligence (AI) system designed to automatically outline polyps in colonoscopy images with high precision. The aim is a reliable assistant that helps doctors see more and miss less.

Seeing the forest and the trees

Modern AI tools for medical images usually fall into two camps. One camp, based on classic convolutional neural networks, is very good at recognizing fine local details, such as subtle edges or texture changes, but struggles to understand how far-apart regions of an image relate to each other. The other camp, based on transformer models, excels at capturing long-range relationships (seeing the “big picture” across an entire image), but it can lose sharpness around small structures such as thin polyp boundaries and can be expensive to run. Recent hybrid systems try to combine both approaches, yet they often end up leaning on the convolutional part and underusing attention mechanisms, which leads to blurry edges and missed context.

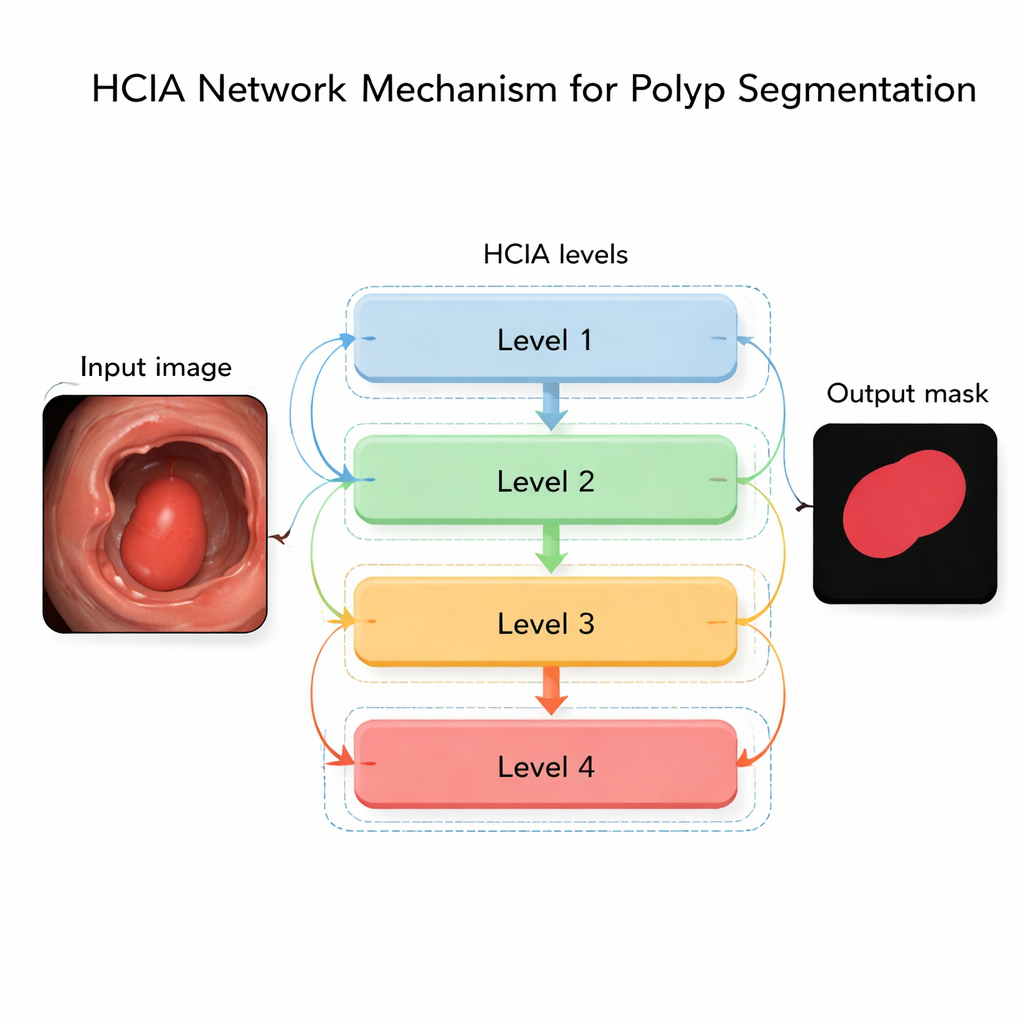

A new way to combine clues across scales

The authors propose a network called the Hierarchical Contextual Information Aggregation (HCIA) model to overcome these limitations. HCIA looks at a colonoscopy image at several levels of detail at once, from coarse, zoomed-out views to fine, zoomed-in ones, and lets these levels exchange information in a structured way. One key component, the Hierarchical Aggregation Module (HAM), merges information from neighboring levels. By blending coarse context with finer details, HAM helps the system tell polyps of varying colors, shapes, and sizes apart from the surrounding tissue, while sharpening the boundary where a polyp meets healthy tissue.
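
To make the idea of merging neighboring levels concrete, here is a minimal Python sketch of generic coarse-to-fine fusion. It is not the authors' HAM: the single-channel 2-D maps stored as nested lists, nearest-neighbor upsampling, and the fixed mixing weight `alpha` are illustrative assumptions, and all function names are hypothetical. A real network would learn the fusion, typically with convolutions over many channels.

```python
def upsample_nearest(coarse, factor=2):
    """Nearest-neighbor upsampling: repeat each value factor x factor times."""
    out = []
    for row in coarse:
        wide = [v for v in row for _ in range(factor)]   # stretch horizontally
        out.extend([list(wide) for _ in range(factor)])  # copy rows vertically
    return out

def fuse_levels(fine, coarse, alpha=0.5):
    """Blend a fine map with an upsampled coarse map of the neighboring level.

    alpha is a hypothetical fixed weight; a trained model would learn the mix.
    """
    up = upsample_nearest(coarse, factor=len(fine) // len(coarse))
    if len(up) != len(fine) or len(up[0]) != len(fine[0]):
        raise ValueError("coarse map must tile the fine map exactly")
    return [
        [alpha * f + (1 - alpha) * c for f, c in zip(frow, crow)]
        for frow, crow in zip(fine, up)
    ]

def aggregate_pyramid(levels, alpha=0.5):
    """Fuse a fine-to-coarse list of maps top-down, one neighboring pair at a time."""
    fused = levels[-1]                    # start from the coarsest level
    for fine in reversed(levels[:-1]):    # walk toward finer levels
        fused = fuse_levels(fine, fused, alpha)
    return fused

# Toy three-level pyramid (values are arbitrary).
fine = [[0.0] * 4 for _ in range(4)]     # 4 x 4, finest
mid = [[2.0, 2.0], [2.0, 2.0]]           # 2 x 2
coarse = [[4.0]]                         # 1 x 1, coarsest
fused = aggregate_pyramid([fine, mid, coarse])
```

Walking from the coarsest level toward finer ones means each level receives context already accumulated from every level above it, which is the intuition behind blending coarse context with fine detail.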

Smart attention without heavy computation

The second crucial piece, the Interconnected Attention Module (IAM), tackles the challenge of global context. The usual way of computing attention compares every pixel with every other pixel, so its cost grows with the square of the number of pixels. IAM instead uses a shared “memory” that summarizes what the network has learned across all levels. Each level consults this memory to decide which parts of its features deserve more focus. This design lets IAM build long-range connections across the whole image while keeping computation under control. Importantly, the same memory is shared across the network’s branches, which provides a form of consistent, global supervision that helps the model stay focused on polyps regardless of their size or location.
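
The cost argument can be illustrated with a minimal sketch in the spirit of memory-based ("external") attention, in which every position is compared with a small set of M memory slots rather than with all N other positions. The summary does not give IAM's exact formulation, so this is an assumption-laden illustration, not the authors' module: the memory here is a fixed list of vectors rather than learned parameters, and `memory_attention` and `attention_cost` are hypothetical names. Sharing across levels is modeled simply by passing the same `memory` object to every level.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def memory_attention(features, memory):
    """Let each of N feature vectors attend to M shared memory slots.

    features: list of N vectors (one per pixel position), each of length d.
    memory:   list of M vectors of length d, shared by every level (hypothetical).
    Returns N vectors of length d, each a weighted mix of the memory slots.
    Work is about N * M dot products, versus N * N for full self-attention.
    """
    dim = len(memory[0])
    out = []
    for f in features:
        weights = softmax([dot(f, slot) for slot in memory])  # focus over slots
        out.append([sum(w * slot[k] for w, slot in zip(weights, memory))
                    for k in range(dim)])
    return out

def attention_cost(n_positions, n_slots=None):
    """Count pairwise scores: N*N for self-attention, N*M with M memory slots."""
    partners = n_positions if n_slots is None else n_slots
    return n_positions * partners

# The same memory object is consulted by every level.
shared_memory = [[1.0, 0.0], [0.0, 1.0]]           # hypothetical 2 slots, d = 2
levels = [[[3.0, 0.0], [0.0, 3.0]], [[1.0, 1.0]]]  # two toy levels
level_outputs = [memory_attention(lvl, shared_memory) for lvl in levels]
```

For a hypothetical 88 x 88 feature map (7,744 positions) and 64 memory slots, `attention_cost` gives 59,969,536 pairwise scores for full self-attention versus 495,616 for the memory lookup, a 121-fold reduction. Because the memory size stays fixed, the saving grows as the feature map gets larger.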

Putting the system to the test

To find out whether HCIA’s design matters in practice, the authors tested it on three widely used polyp image collections, each containing hundreds to a thousand colonoscopy frames with expert-drawn polyp outlines. They compared HCIA with more than twenty leading AI models, including both traditional convolutional networks and newer transformer-based hybrids. On nearly all measures (how well the predicted polyp regions overlap with expert labels, how accurately shapes are captured, and how well structures align), HCIA came out on top or matched the best competitors. It was especially strong in cross-dataset tests, where it was evaluated on images from collections it had not been trained on. This demanding setting mimics how a tool must perform in hospitals that use different equipment and imaging conditions. Detailed visual comparisons showed that HCIA captured irregular polyp shapes and faint boundaries more reliably than rival methods.
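
The summary describes overlap between predicted regions and expert labels without naming the metrics. Two measures commonly reported for this in polyp segmentation benchmarks are the Dice coefficient, 2|P ∩ T| / (|P| + |T|), and intersection over union (IoU), |P ∩ T| / |P ∪ T|, where P and T are the predicted and expert-labeled pixel sets. The sketch below computes both for binary masks. It illustrates the standard formulas, is not the paper's evaluation code, and does not cover the shape and structure measures also mentioned; the helper name `overlap_scores` is hypothetical.

```python
def overlap_scores(pred, truth):
    """Return (dice, iou) for two same-shaped binary masks (nested lists of 0/1)."""
    p = [v for row in pred for v in row]    # flatten predicted mask
    t = [v for row in truth for v in row]   # flatten expert mask
    if len(p) != len(t):
        raise ValueError("masks must have the same number of pixels")
    inter = sum(1 for a, b in zip(p, t) if a and b)   # |P ∩ T|
    total = sum(p) + sum(t)                            # |P| + |T|
    union = total - inter                              # |P ∪ T|
    if union == 0:
        # Both masks empty: treated here as perfect agreement (a convention).
        return 1.0, 1.0
    return 2 * inter / total, inter / union

# Toy example: two of three predicted pixels match the three labeled pixels.
dice, iou = overlap_scores([[1, 1, 0], [0, 1, 0]], [[1, 0, 0], [0, 1, 1]])
```

Here two pixels overlap out of three predicted and three labeled, giving Dice = 4/6 ≈ 0.667 and IoU = 2/4 = 0.5. Dice is never smaller than IoU for the same pair of masks, since Dice = 2·IoU / (1 + IoU).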

What this means for patients and clinicians

For non-specialists, the key takeaway is that HCIA is an AI system that outlines potentially cancerous growths in colonoscopy images more accurately than competing methods while running fast enough for real-time use. By combining sharp local detail with a strong sense of overall context, and doing so efficiently, it reduces the chances that subtle or oddly shaped polyps go unnoticed. The model still needs further optimization for very lightweight devices and does not yet use information from video sequences over time. Even so, it represents a meaningful step toward smarter, more reliable computer assistance in colon cancer screening, helping doctors catch dangerous growths before they become life-threatening.

Citation: Li, L., Yang, H., Zhang, J. et al. Hierarchical contextual information aggregation for polyp segmentation. Sci Rep 16, 5959 (2026). https://doi.org/10.1038/s41598-026-35703-2

Keywords: colorectal polyps, colonoscopy AI, medical image segmentation, deep learning, early cancer detection