Clear Sky Science

DermNet: integrative CNN-ViT architecture for bias mitigation in dermatological diagnostics using advanced unsupervised lesion segmentation

Why fair skin diagnosis matters

Skin diseases touch nearly everyone at some point in life, from acne and eczema to more serious conditions. Yet for many people with brown and dark skin, these problems are harder to spot and easier to misdiagnose, both in the clinic and by artificial intelligence (AI) tools. This paper introduces DermNet, a new AI system designed to recognize skin diseases more fairly across different skin tones by teaching the computer to focus on the actual diseased spot rather than the color of the surrounding skin.

The problem with one-size-fits-all skin AI

Most existing AI systems for skin diagnosis are trained on photos that skew heavily toward light skin. When those same systems are used on people with darker complexions, their accuracy drops by 8–12%. In real life, that gap can mean missed early warning signs and delayed treatment. Collecting balanced medical photo datasets is also difficult: images are scattered across hospitals, some countries do little digital record-keeping, and expert labeling by dermatologists is time-consuming and expensive. As a result, AI often learns shortcuts, such as associating certain diseases mainly with pale skin, instead of truly understanding what a lesion looks like.

Teaching the computer to see only the sore spot

The authors tackle this bias at its source: the image itself. Instead of feeding full photos of arms, faces, or legs into the AI, they first automatically cut out just the diseased area (the lesion), no matter what color the surrounding skin is. To do this, they combine a powerful open-source tool called Segment Anything (which can outline objects without task-specific training) with classic image-processing techniques that highlight differences in color and brightness. By converting images between color spaces and applying automated thresholding, they produce black-and-white “masks” that separate lesion from normal skin. Remarkably, this unsupervised pipeline achieves about 90% overlap with expert-quality lesion outlines across both lighter and darker skin tones, without relying on hand-drawn training masks.
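
The thresholding and overlap ideas can be illustrated with a minimal sketch. It is not the authors' pipeline: it leaves out Segment Anything entirely, and it assumes the HSV saturation channel as the color space, Otsu's method as the automated threshold, and intersection-over-union (IoU) as the overlap measure. All function names and pixel values are hypothetical.

```python
import colorsys

def saturation_channel(rgb_image):
    """Map each (r, g, b) pixel in 0..255 to its HSV saturation, scaled to 0..255."""
    return [[round(colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)[1] * 255)
             for (r, g, b) in row] for row in rgb_image]

def otsu_threshold(channel):
    """Return the threshold that maximizes between-class variance (Otsu's method)."""
    hist = [0] * 256
    for row in channel:
        for v in row:
            hist[v] += 1
    total = sum(hist)
    sum_all = sum(i * h for i, h in enumerate(hist))
    best_t, best_var = 0, -1.0
    w_bg, sum_bg = 0, 0
    for t in range(256):
        w_bg += hist[t]              # pixels with value <= t
        sum_bg += t * hist[t]
        w_fg = total - w_bg          # pixels with value > t
        if w_bg == 0:
            continue
        if w_fg == 0:
            break
        mean_bg = sum_bg / w_bg
        mean_fg = (sum_all - sum_bg) / w_fg
        var_between = w_bg * w_fg * (mean_bg - mean_fg) ** 2
        if var_between > best_var:
            best_var, best_t = var_between, t
    return best_t

def binary_mask(channel, t):
    """1 where the channel exceeds the threshold (assumed lesion), else 0."""
    return [[1 if v > t else 0 for v in row] for row in channel]

def iou(mask_a, mask_b):
    """Intersection-over-union of two binary masks of equal shape."""
    inter = union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            inter += a & b
            union += a | b
    return inter / union if union else 1.0

# Toy 4x4 image: a 2x2 reddish "lesion" on a lighter skin-colored background.
skin, lesion = (200, 160, 140), (150, 40, 40)
image = [[lesion if y in (1, 2) and x in (1, 2) else skin for x in range(4)]
         for y in range(4)]
reference = [[1 if y in (1, 2) and x in (1, 2) else 0 for x in range(4)]
             for y in range(4)]

sat = saturation_channel(image)
mask = binary_mask(sat, otsu_threshold(sat))
print("IoU with reference mask:", iou(mask, reference))
```

Because the threshold is chosen from each image's own histogram rather than fixed in advance, the same code can separate a lesion from lighter or darker surrounding skin, which is the property the article highlights. Real photos would need the additional steps the authors describe.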

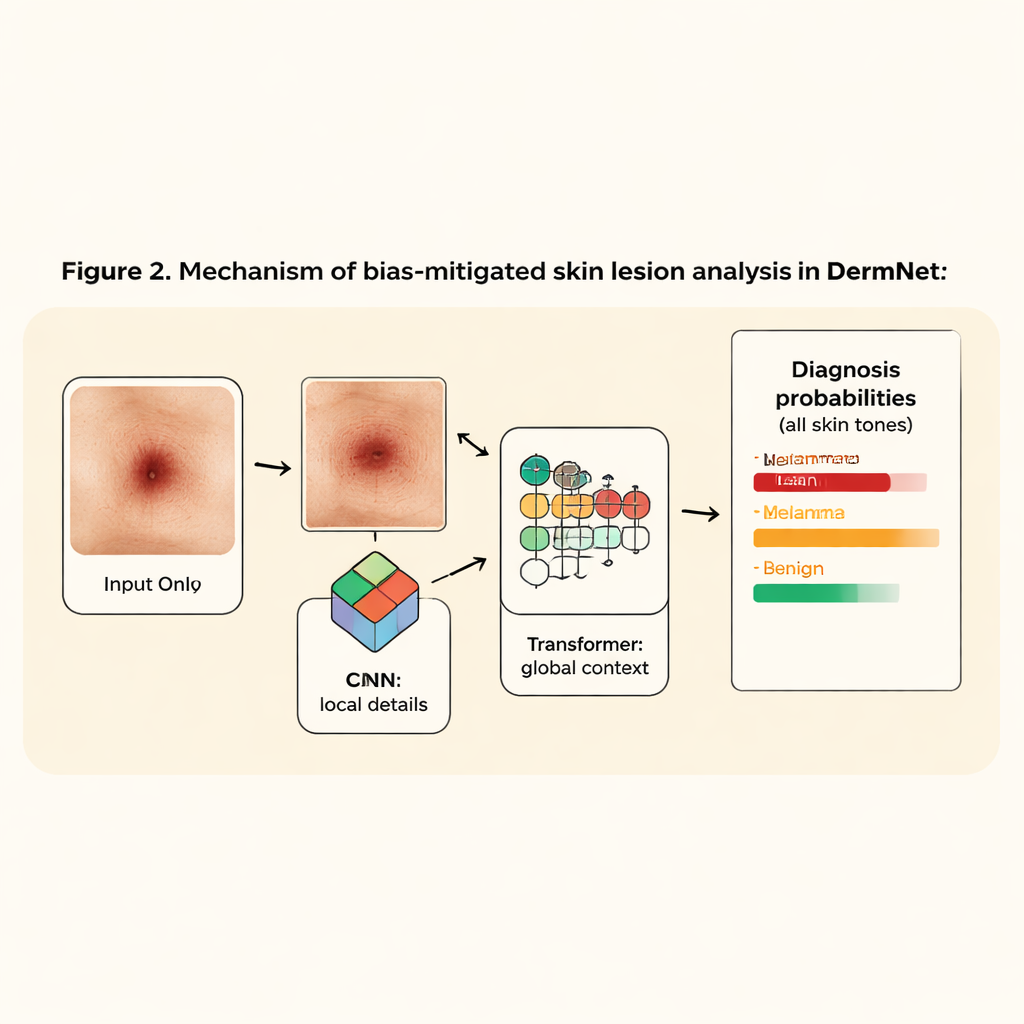

A slimmer, smarter network for skin diseases

Once the lesion is isolated, DermNet takes over. This classifier blends two popular AI ideas: convolutional neural networks (CNNs), which are good at spotting edges and textures, and vision transformers (ViTs), which excel at seeing long-range patterns across an image. DermNet first uses just two lightweight CNN layers to extract fine details from the cropped lesion, then passes these patterns into a transformer that learns how different parts of the lesion relate to one another. Because the system no longer has to parse full-body photos, it can stay small, at about 2.5 million parameters and under 10 megabytes, yet it still outperforms much larger, widely used image models.
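
The size figures can be sanity-checked with simple arithmetic. The sketch below assumes weights stored as 32-bit floats (4 bytes each) and uses textbook parameter-count formulas for a convolution layer and a standard transformer encoder block. The layer widths in the usage lines are hypothetical and are not DermNet's actual configuration.

```python
def conv_params(kernel_size, in_channels, out_channels):
    """Weights plus biases of one 2D convolution layer with a square kernel."""
    return kernel_size * kernel_size * in_channels * out_channels + out_channels

def transformer_block_params(d_model, d_ff):
    """Parameters of one standard encoder block (attention, MLP, two layer norms)."""
    attention = 4 * (d_model * d_model + d_model)            # Q, K, V, output projections
    mlp = (d_model * d_ff + d_ff) + (d_ff * d_model + d_model)
    layer_norms = 2 * (2 * d_model)                          # scale and shift, twice
    return attention + mlp + layer_norms

def model_size_mib(n_params, bytes_per_param=4):
    """Approximate storage for the weights, in mebibytes."""
    return n_params * bytes_per_param / 2**20

# Hypothetical layer sizes, for illustration only.
print(conv_params(kernel_size=3, in_channels=3, out_channels=32))
print(transformer_block_params(d_model=192, d_ff=768))

# The article's figure: about 2.5 million parameters.
print(round(model_size_mib(2_500_000), 2), "MiB")
```

At 4 bytes per parameter, 2.5 million parameters come to roughly 10 million bytes, which is consistent with the quoted model size.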

Building a fairer dataset behind the scenes

To train and test DermNet, the team merged two dermatologist-annotated collections into what they call the SkinCon dataset. After requiring at least 20 images per disease, they obtained 3,643 images covering 122 conditions, with roughly one third each from light, brown, and dark skin types. They further expanded this set through simple transformations, such as rotation and brightness changes, to reduce overfitting and improve robustness. Using this diverse and curated dataset, they found that training on full, unsegmented images led to unstable learning and validation accuracy around 50–56%. When they switched to lesion-only inputs, performance jumped: DermNet reached about 81% validation accuracy and did so more consistently across all three skin tone groups.
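
The two data-preparation steps described above, dropping diseases with fewer than 20 images and generating transformed copies, can be sketched as follows. This is an illustration under assumptions: images are plain grids of 0..255 grayscale values, rotation is restricted to 90-degree turns, and the function names are hypothetical rather than taken from the paper.

```python
from collections import Counter

def filter_rare_classes(samples, min_per_class=20):
    """Keep only (image, label) pairs whose label occurs at least min_per_class times."""
    counts = Counter(label for _, label in samples)
    return [(img, label) for img, label in samples if counts[label] >= min_per_class]

def rotate90(image):
    """Rotate a 2D grid of pixel values 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]

def adjust_brightness(image, factor):
    """Scale pixel values by factor, clamped to the valid 0..255 range."""
    return [[min(255, max(0, round(v * factor))) for v in row] for row in image]

def augment(image):
    """Return the original image plus a rotated and a brightened variant."""
    return [image, rotate90(image), adjust_brightness(image, 1.2)]

# Toy data: one well-represented label and one rare label.
tiny = [[10, 20], [30, 40]]
samples = [(tiny, "common")] * 20 + [(tiny, "rare")] * 19
kept = filter_rare_classes(samples)
print(len(kept), "samples kept;", len(augment(tiny)), "variants per image")
```

Augmentation of this kind would normally be applied only to the training split, so that validation accuracy reflects unmodified images.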

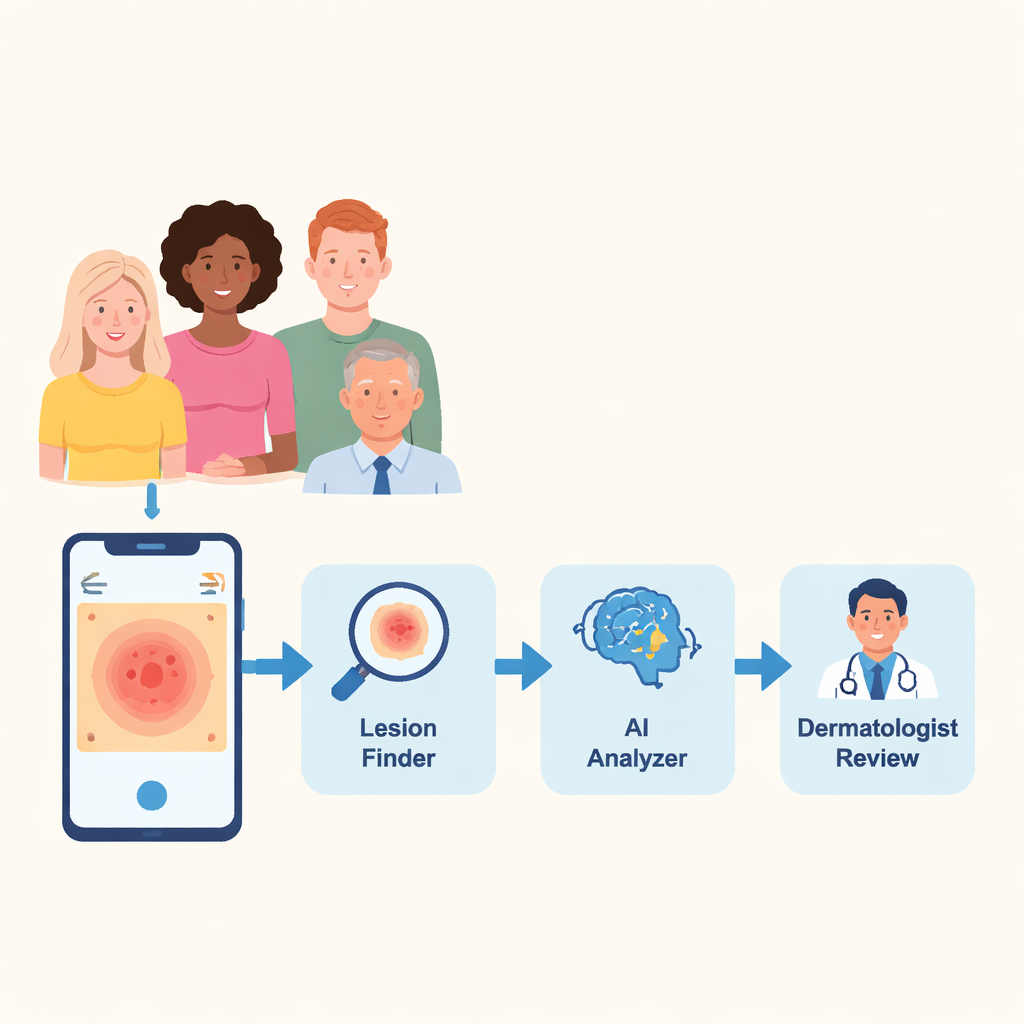

From lab pipeline to pocket assistant

To show how this might work in practice, the researchers built a prototype mobile app. A user snaps or uploads a photo of a suspicious skin area; the system automatically segments the lesion, runs it through DermNet, and returns the three most likely diagnoses with their probabilities in under 20 seconds, leaving the final judgment to a dermatologist. Though not a replacement for a doctor, such tools could flag early-stage disease, especially in regions where dermatologists are scarce, and help direct limited specialist time to the most urgent cases.
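
Returning the three most likely diagnoses with probabilities can be sketched as below. The article does not say how the app turns model outputs into probabilities, so the softmax step is an assumption, and the scores and disease labels are made up for illustration.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)                        # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k(logits, labels, k=3):
    """Return the k (label, probability) pairs with the highest probability."""
    probs = softmax(logits)
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]

# Hypothetical classifier scores for four conditions.
labels = ["eczema", "acne", "psoriasis", "vitiligo"]
logits = [2.0, 0.5, 1.0, -1.0]
for label, prob in top_k(logits, labels):
    print(f"{label}: {prob:.1%}")
```

Showing several ranked candidates rather than a single answer fits the article's point that the final judgment stays with a dermatologist.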

What this means for everyday patients

The main takeaway for non-experts is that AI does not have to be “color-blind” in a naive sense; instead, it can be guided to look at the right thing: the lesion itself. By stripping away the influence of background skin tone and focusing on the diseased area, this study shows it is possible to build a lean, accurate model that treats images of light, brown, and dark skin more equally. DermNet is an early step toward skin-disease apps and diagnostic systems that work reliably for everyone, not just those whose skin happens to match the majority of existing medical photos.

Citation: Imran, M.H., Shahid, M., Aazam, M. et al. DermNet: integrative CNN-ViT architecture for bias mitigation in dermatological diagnostics using advanced unsupervised lesion segmentation. Sci Rep 16, 5291 (2026). https://doi.org/10.1038/s41598-026-35697-x

Keywords: skin disease diagnosis, medical AI bias, lesion segmentation, dermatology imaging, vision transformer