Clear Sky Science

PGSFormer: traffic flow prediction based on joint optimization of progressive graph convolutional networks with subseries transformer

Why Smarter Traffic Forecasts Matter

Cities everywhere struggle with traffic jams that waste time, fuel, and money. As more roads are covered with sensors, we now collect huge amounts of real-time data about how cars move. The challenge is turning that data into reliable short- and long-term forecasts so traffic lights can be adjusted, routes can be suggested, and congestion can be reduced before it even forms. This paper introduces PGSFormer, a new forecasting method that aims to predict traffic more accurately by learning how both space (where the roads are) and time (how patterns change) interact in the real world.

From Simple Averages to Complex Patterns

Early traffic prediction tools mostly relied on simple statistics, such as the historical average, or on models like ARIMA that assume regular, repeating patterns. These approaches work well only when the data behave in a steady, almost linear way, which real traffic rarely does. Congestion can appear suddenly, spread unpredictably across a city, and depend on many factors such as time of day, day of week, or nearby incidents. Later, deep learning methods began to treat road networks as graphs, in which each sensor is a node and each road link is an edge. These graph-based models improved accuracy by recognizing that what happens on one road strongly affects its neighbors. Yet most of them still treat the road connections as fixed, ignoring the fact that relationships between roads can change over time.
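To make the graph idea concrete, here is a minimal sketch of a single graph-convolution step on a fixed road graph, written with NumPy. Each sensor's new value is a weighted mix of its own reading and its neighbors' readings, using the widely used symmetric normalization with self-loops. The four-sensor corridor, the speeds, and the function names are hypothetical and chosen only for illustration. Note that the adjacency matrix never changes: this is exactly the limitation described above.

```python
import numpy as np

def normalized_adjacency(adj: np.ndarray) -> np.ndarray:
    """Add self-loops and apply symmetric normalization D^-1/2 (A + I) D^-1/2."""
    a_hat = adj + np.eye(adj.shape[0])
    deg = a_hat.sum(axis=1)
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    # Element-wise scaling: entry (i, j) is multiplied by d_i^-1/2 * d_j^-1/2.
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def graph_conv(x: np.ndarray, adj: np.ndarray, weight: np.ndarray) -> np.ndarray:
    """One graph-convolution step: mix each node's features with its neighbors'."""
    return normalized_adjacency(adj) @ x @ weight

# Hypothetical 4-sensor corridor: sensors 0-1-2-3 linked in a line (fixed physical links).
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [0, 0, 1, 0]], dtype=float)
# One feature per sensor: current speed in mph (made-up numbers; sensor 2 is congested).
x = np.array([[60.0], [55.0], [20.0], [58.0]])
w = np.eye(1)  # identity weight, so the output is just the weighted neighborhood mix
out = graph_conv(x, adj, w)
# Sensor 1 is pulled down by its congested neighbor; sensor 2 is pulled up by its neighbors.
```

In a trained model, `w` would be learned and several such layers would be stacked, but the neighbor-mixing pattern stays the same.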

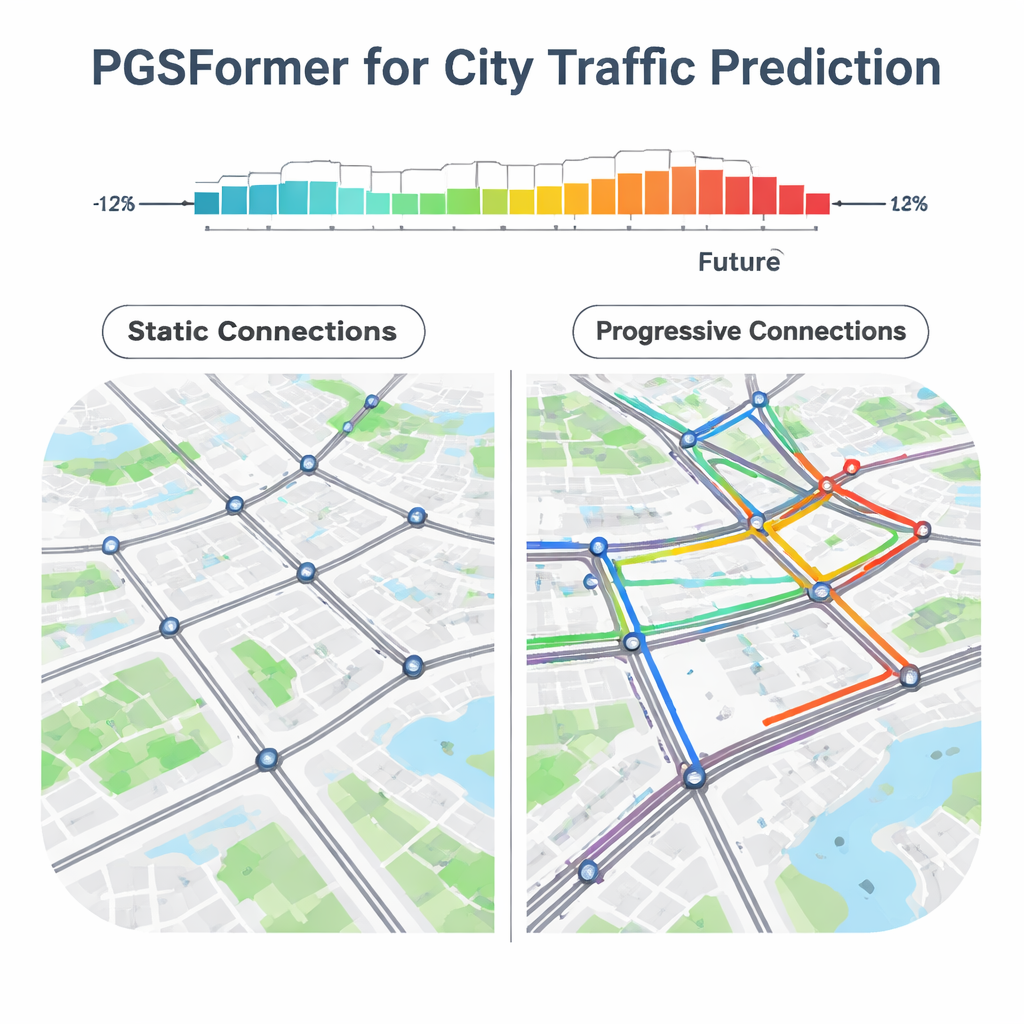

Capturing a Living, Changing Road Network

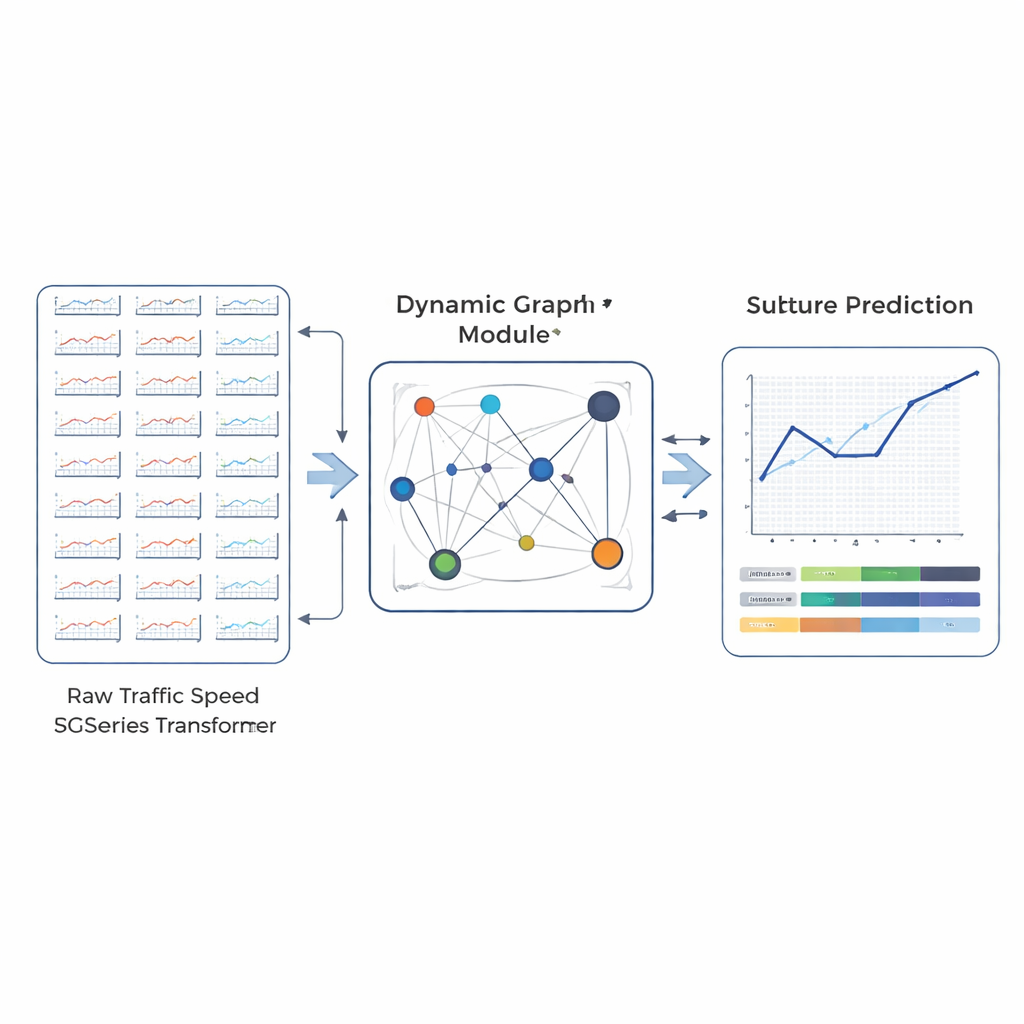

PGSFormer is built on the idea that a city’s traffic network is not static; it behaves more like a living system whose internal connections shift from moment to moment. Instead of relying only on the physical layout of roads, the method builds what the authors call a progressive graph. In this graph, the strength of the connection between any two sensors is based on how similar their recent traffic trends are, not just how close they are on the map. For example, a school zone and a nearby office district might behave alike during the morning rush but differ greatly in the afternoon. PGSFormer continually updates these connections in both training and testing, so the model can react to fresh data rather than being locked into patterns learned long ago.
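A data-driven graph of this kind can be sketched in a few lines. The summary does not give the paper's exact formula, so the choices below are assumptions: similarity is measured as the cosine similarity of each sensor's mean-centered recent window, each sensor keeps only its `top_k` most similar peers, and the kept scores are normalized with a softmax. The function name and the traffic counts are hypothetical. Recomputing the graph on each new window is what lets the connections shift, as in the school-zone example above.

```python
import numpy as np

def similarity_graph(window: np.ndarray, top_k: int = 2) -> np.ndarray:
    """Build a data-driven adjacency matrix from recent traffic windows.

    window: shape (num_sensors, window_length), holding the latest readings.
    Returns a row-normalized (num_sensors, num_sensors) matrix in which each
    sensor keeps only its top_k most similar peers (cosine similarity of trends).
    """
    # Remove each sensor's mean so we compare trends, not absolute volumes.
    centered = window - window.mean(axis=1, keepdims=True)
    norms = np.linalg.norm(centered, axis=1, keepdims=True) + 1e-8
    unit = centered / norms
    sim = unit @ unit.T                     # cosine similarity in [-1, 1]
    np.fill_diagonal(sim, -np.inf)          # a sensor is not its own neighbor
    adj = np.zeros_like(sim)
    for i in range(sim.shape[0]):
        nbrs = np.argsort(sim[i])[-top_k:]  # indices of the top_k most similar sensors
        scores = np.exp(sim[i, nbrs])       # softmax over the kept neighbors only
        adj[i, nbrs] = scores / scores.sum()
    return adj

# Hypothetical vehicle counts over six 5-minute intervals.
morning = np.array([[10, 20, 40, 60, 50, 30],   # school zone
                    [12, 22, 38, 62, 52, 28],   # office district
                    [40, 35, 30, 25, 30, 35]],  # residential street
                   dtype=float)
afternoon = np.array([[60, 50, 30, 20, 15, 10],
                      [10, 15, 20, 40, 60, 55],
                      [55, 45, 35, 25, 20, 15]], dtype=float)

adj_morning = similarity_graph(morning, top_k=1)
adj_afternoon = similarity_graph(afternoon, top_k=1)
# The school zone's closest peer is the office district in the morning
# and the residential street in the afternoon.
print(adj_morning[0], adj_afternoon[0])
```

Mean-centering is a deliberate choice in this sketch: two roads with very different volumes can still be linked if their traffic rises and falls together.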

Looking Deep into Time Without Getting Lost

Another hurdle in traffic forecasting is handling long time series. Traffic data often show several overlapping cycles: daily rush hours, weekly work patterns, and even seasonal changes. Standard methods may focus too heavily on the recent past and miss slower-moving trends, which hurts long-range predictions. PGSFormer addresses this by combining two ideas. First, it uses a special type of temporal convolution that can look far back in time without needing an extremely deep network, helping it capture both quick swings and broader trends. Second, it cuts long histories into shorter subsequences and feeds them into a Transformer, a powerful architecture originally designed for language. A portion of these subsequences is hidden, and the model is asked to reconstruct them. This "masked subseries" task teaches the system to understand the broader context instead of memorizing individual data points.
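Both ideas can be illustrated with a small NumPy sketch. The summary does not name the convolution type; a stack of dilated causal convolutions, where each layer skips over more time steps than the last, is one standard way to see far back with few layers, and that is assumed here. The second half shows only the data side of the masked subseries task: cutting a history into subseries, hiding some of them, and scoring a reconstruction on the hidden parts. The patch length, mask ratio, zero placeholder, and all function names are hypothetical, and the Transformer itself is omitted.

```python
import numpy as np

def receptive_field(kernel_size: int, dilations: list[int]) -> int:
    """Number of past time steps a stack of dilated causal convolutions can see."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)

def make_subseries(series: np.ndarray, patch_len: int) -> np.ndarray:
    """Cut a 1-D history into non-overlapping subseries of length patch_len."""
    n_patches = len(series) // patch_len
    return series[: n_patches * patch_len].reshape(n_patches, patch_len)

def mask_subseries(patches: np.ndarray, mask_ratio: float, rng: np.random.Generator):
    """Hide a random fraction of subseries; a model would be trained to rebuild them."""
    n_masked = int(round(mask_ratio * len(patches)))
    masked_idx = rng.choice(len(patches), size=n_masked, replace=False)
    visible = patches.copy()
    visible[masked_idx] = 0.0  # placeholder standing in for a learned mask token
    return visible, np.sort(masked_idx)

def reconstruction_loss(pred: np.ndarray, target: np.ndarray, masked_idx: np.ndarray) -> float:
    """Mean squared error computed only on the hidden subseries."""
    return float(np.mean((pred[masked_idx] - target[masked_idx]) ** 2))

# Ten layers with kernel size 2: dilations 1, 2, 4, ..., 512 versus no dilation.
rf_dilated = receptive_field(2, [2 ** i for i in range(10)])  # 1024 steps
rf_plain = receptive_field(2, [1] * 10)                        # only 11 steps

# One week of synthetic 5-minute readings with a daily cycle (288 steps per day).
t = np.arange(7 * 288)
series = 50 + 30 * np.sin(2 * np.pi * t / 288)
patches = make_subseries(series, patch_len=12)  # 168 one-hour subseries
rng = np.random.default_rng(0)
visible, masked_idx = mask_subseries(patches, mask_ratio=0.4, rng=rng)
```

With dilation, ten layers cover 1024 past steps (about 3.5 days at 5-minute resolution), whereas an undilated stack would need 1023 layers for the same reach. Computing the loss only on hidden subseries forces the model to infer them from the surrounding context.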

Testing Against Real-World Traffic

To see how well PGSFormer works in practice, the authors tested it on four large, real-world datasets from California, covering hundreds of road sensors in Los Angeles and the San Francisco Bay Area, as well as two other highway networks. They compared their method against a wide range of competitors, from classical models like ARIMA to modern deep-learning systems that use graph networks, recurrent networks, and Transformer-based designs. Across all datasets and for different prediction horizons (15, 30, and 60 minutes ahead), PGSFormer consistently produced lower errors than the competing methods. It was particularly strong at longer horizons, where many models tend to drift away from reality. Additional experiments in which individual parts of the system were removed showed that both the progressive graph and the masked subsequence Transformer are crucial to its success.
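Horizon-by-horizon comparisons like these are usually scored with mean absolute error (MAE), root mean squared error (RMSE), and mean absolute percentage error (MAPE). The summary does not list the metrics, so these three are an assumption, as is a 5-minute sampling interval, which would place the 15-, 30-, and 60-minute horizons at the 3rd, 6th, and 12th forecast steps. The function name and the toy data below are hypothetical.

```python
import numpy as np

STEP_MINUTES = 5  # assumed sampling interval of the sensor data

def horizon_metrics(y_true: np.ndarray, y_pred: np.ndarray, horizons_min=(15, 30, 60)) -> dict:
    """Compute MAE, RMSE and MAPE at selected forecast horizons.

    y_true, y_pred: arrays of shape (num_samples, num_steps, num_sensors).
    """
    results = {}
    for minutes in horizons_min:
        step = minutes // STEP_MINUTES - 1  # 0-based index: 15 min -> 3rd step
        t = y_true[:, step, :]
        p = y_pred[:, step, :]
        err = p - t
        nonzero = t != 0  # skip zero readings to avoid division by zero in MAPE
        results[minutes] = {
            "MAE": float(np.mean(np.abs(err))),
            "RMSE": float(np.sqrt(np.mean(err ** 2))),
            "MAPE": float(np.mean(np.abs(err[nonzero] / t[nonzero])) * 100),
        }
    return results

# Toy forecasts for 2 samples, 12 steps, 3 sensors: the error grows by 1 per step.
y_true = np.full((2, 12, 3), 100.0)
y_pred = y_true + np.arange(1, 13)[None, :, None]
scores = horizon_metrics(y_true, y_pred)
# MAE at 15/30/60 minutes: 3.0, 6.0, 12.0
```

Reporting each horizon separately, rather than averaging over all steps, is what reveals whether a model drifts at longer horizons.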

What This Means for Everyday Travel

For non-specialists, the key takeaway is that PGSFormer represents a more realistic way of thinking about city traffic. Instead of assuming that roads always influence only their immediate neighbors, or that patterns never change, it continually learns which areas are behaving similarly right now and uses that knowledge to look further into the future. In tests with real freeway data, this approach produced more accurate and more stable forecasts than many existing methods. If integrated into traffic control centers, navigation apps, or public transit planning tools, such models could help reduce congestion, shorten commutes, and make better use of existing road infrastructure without laying a single new mile of asphalt.

Citation: Chen, L. PGSFormer: traffic flow prediction based on joint optimization of progressive graph convolutional networks with subseries transformer. Sci Rep 16, 7200 (2026). https://doi.org/10.1038/s41598-026-35643-x

Keywords: traffic flow prediction, graph neural networks, transformer models, smart transportation, spatiotemporal data