Clear Sky Science

NeoHebbian synapses to accelerate online training of neuromorphic hardware

Teaching Chips to Learn from Experience

Modern artificial intelligence is remarkably powerful but still far from matching the brain’s knack for learning from sparse, delayed feedback, such as remembering which shortcut through town actually saved time. This paper presents a new kind of artificial synapse, the connection between artificial neurons, that lets neuromorphic chips learn in a more brain‑like, online fashion while staying compact and energy efficient. For readers interested in the future of AI hardware, it shows how heat itself can be turned into a useful signal for learning inside tiny memory devices.

Why Neuromorphic Brains Need Better Synapses

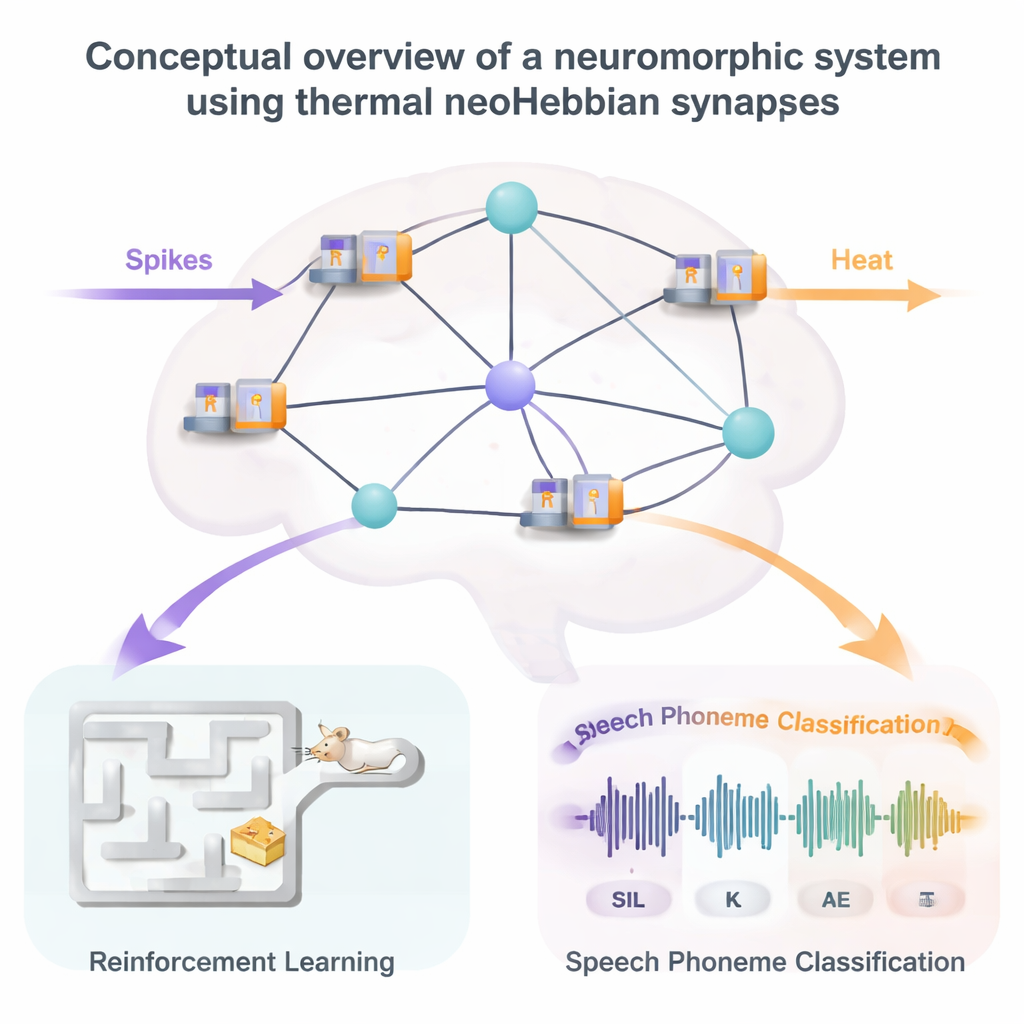

Neuromorphic computing aims to mimic how the brain processes information by using spiking neural networks, in which brief electrical spikes pass through vast webs of synapses. Today’s hardware can already implement synapses using memristive components such as resistive RAM (ReRAM), whose electrical conductance can be tuned to store a weight. However, simple “Hebbian” learning rules, in which synapses strengthen or weaken purely from local spike timing, struggle on realistic tasks that require linking events separated in time, such as understanding speech or solving navigation problems. Standard deep‑learning techniques address this with backpropagation through time, but that method demands too much memory and power for brain‑like hardware. The community has therefore turned to “three‑factor” learning rules and algorithms like eligibility propagation (e‑prop), which introduce an extra local memory trace at each synapse so that a delayed global reward signal can still adjust the right connections.

Storing a Fading Memory in Heat

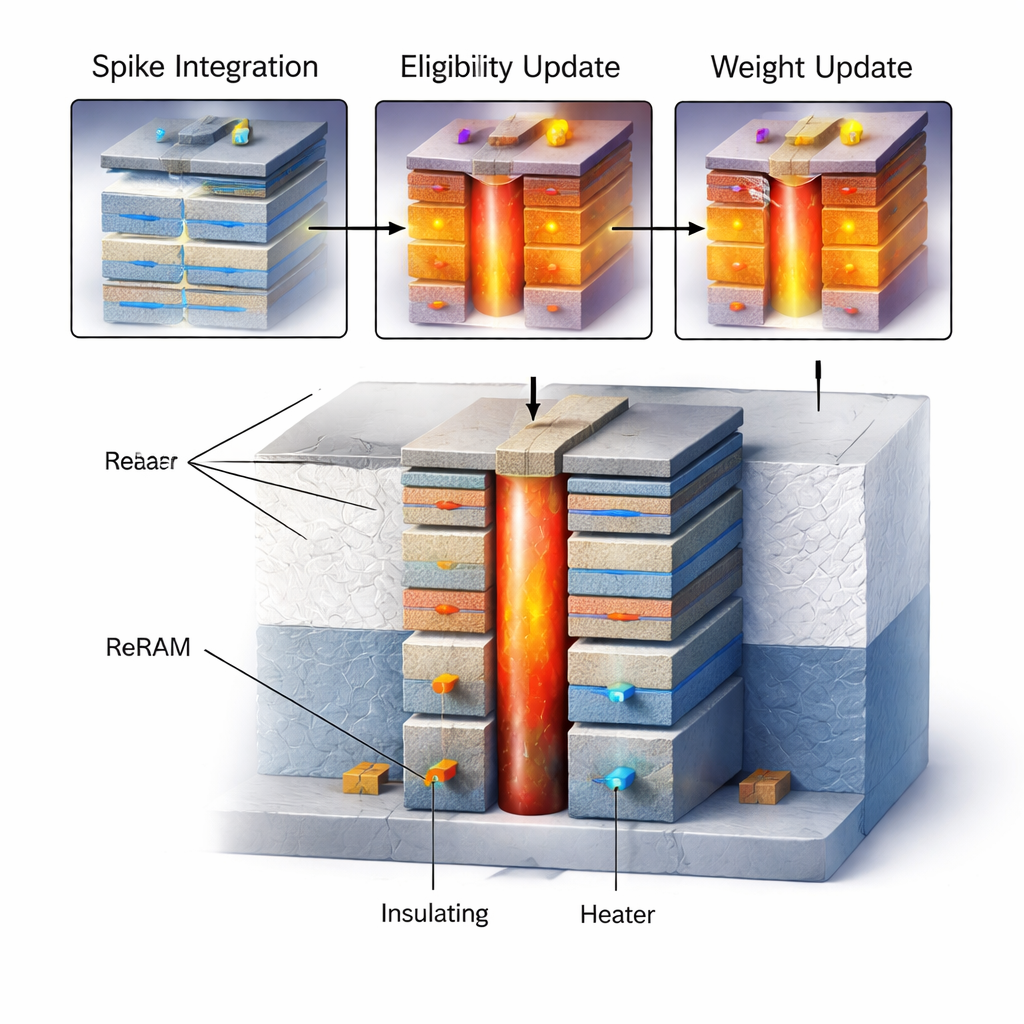

The core idea of this work is a “neoHebbian” synapse that has two internal state variables: a long‑term coupling weight and a short‑term eligibility trace that remembers recent activity. The weight is encoded, as usual, in the conductance of a ReRAM device. The eligibility trace, by contrast, is stored in the local temperature of that device, controlled by a nanoscale resistive heater integrated directly above or beside it. During normal spike processing, the ReRAM simply multiplies incoming spikes by its stored weight. During learning, neuron‑generated signals corresponding to “what fired before” and “how sensitive the postsynaptic neuron currently is” drive current through the tiny heater. Because the heater and the ReRAM are thermally coupled, this power raises the device’s temperature in proportion to the product of those two signals—the mathematical eligibility trace required by the e‑prop algorithm.
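
The accumulation described above can be sketched in a few lines of Python. This is only an illustrative first-order thermal model, not the authors' device model: the function names, the `heater_gain` parameter, the time step, and the default time constant are all assumptions made for the example.

```python
import math


def step_temperature_rise(temp_rise, pre_signal, post_signal,
                          dt=1e-3, tau=0.05, heater_gain=1.0):
    """Advance the temperature rise above ambient by one time step.

    All parameter names and default values are illustrative assumptions.
    """
    # Passive cooling: the stored rise relaxes toward zero with time constant tau.
    cooled = temp_rise * math.exp(-dt / tau)
    # Heating: assumed proportional to the product of the two neuron signals
    # ("what fired before" times "how sensitive the postsynaptic neuron is").
    heating = heater_gain * pre_signal * post_signal * dt
    return cooled + heating


def accumulate_eligibility(pre_signals, post_signals, **kwargs):
    """Process one dataframe and return the final temperature rise (the trace)."""
    temp_rise = 0.0
    for pre, post in zip(pre_signals, post_signals):
        temp_rise = step_temperature_rise(temp_rise, pre, post, **kwargs)
    return temp_rise
```

In this sketch a synapse whose presynaptic signal is zero never heats up, so it ends the dataframe with no eligibility, while a synapse that was repeatedly active alongside a sensitive postsynaptic neuron ends it warmer.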

How Heat Changes Memory

Once a short input sequence—called a dataframe—has been processed, the accumulated temperature rise encodes how “eligible” that synapse is to change. A fixed programming pulse is then applied across the ReRAM. Crucially, detailed experiments show that the resulting conductance change depends strongly on temperature: hotter devices change more, and the direction and magnitude of that change depend on the initial conductance state and on whether the device is being driven to a higher or lower conductance. By carefully choosing pulse amplitudes and exploiting this temperature sensitivity, the authors arrange for the weight change to be roughly proportional to the stored eligibility. Numerical models of heat flow in a 3D‑integrated heater‑plus‑ReRAM stack confirm that temperature can be raised and allowed to decay over tunable time scales, and that the structure can be engineered to boost desired self‑heating while limiting thermal “crosstalk” to neighboring synapses.
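
As a rough illustration of the update step, the sketch below applies an idealized programming pulse whose effect scales linearly with the stored temperature rise. It deliberately ignores the dependence on initial conductance that the experiments report; the `sensitivity` constant, the normalized conductance range, and the function name are assumptions, not values from the paper.

```python
def apply_programming_pulse(conductance, temp_rise, potentiate,
                            sensitivity=0.01, g_min=0.0, g_max=1.0):
    """Return the conductance after one fixed pulse (idealized, illustrative).

    The change is assumed to be linear in the temperature rise, which stands
    in for the "roughly proportional" behavior described in the text.
    """
    step = sensitivity * temp_rise
    if not potentiate:
        # Depression pulses push the conductance down instead of up.
        step = -step
    # Keep the result inside the assumed programmable conductance window.
    return min(g_max, max(g_min, conductance + step))
```

A cold synapse (`temp_rise` of zero) is left unchanged by the pulse in this model, which is how the same fixed pulse can update only the synapses that were recently active.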

Putting Thermal Synapses to the Test

To assess whether this exotic synapse is actually useful, the authors simulate full neuromorphic systems that include realistic device behaviors such as temperature decay, device‑to‑device variability, and limited precision. In one demonstration, a spiking network controls a virtual mouse that must learn to navigate a grid‑like maze to find cheese while avoiding traps. Here, natural thermal cooling, which slowly erodes the stored eligibility, acts like the “discount factor” familiar from reinforcement learning: recent state‑action pairs matter more than ancient ones. The simulations show that learning is fastest when this decay is neither too rapid nor too slow, and that variability in ReRAM behavior degrades performance only gradually. In a second, more demanding test, a recurrent spiking network with thermal neoHebbian synapses is trained online on the TIMIT phoneme classification benchmark, a standard speech‑recognition task. With sufficient conductance resolution (about 8‑bit equivalent), the hardware‑aware model achieves accuracy within a few percent of an ideal, floating‑point implementation.
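
The link between cooling and the discount factor can be made concrete with a small sketch. It assumes the temperature decays exponentially with a single time constant, so that each step of the task multiplies the remaining eligibility by a fixed factor; the function names and the exponential form are assumptions made for illustration.

```python
import math


def discount_factor(step_duration, thermal_tau):
    """Fraction of the stored temperature rise left after one task step."""
    return math.exp(-step_duration / thermal_tau)


def credit_weights(num_steps, step_duration, thermal_tau):
    """Relative credit for each past step, oldest first, most recent last."""
    gamma = discount_factor(step_duration, thermal_tau)
    # The most recent step has had no time to cool, so its weight is 1.
    return [gamma ** (num_steps - 1 - k) for k in range(num_steps)]
```

With a very short `thermal_tau` the factor approaches zero and only the last move receives credit; with a very long one it approaches one and all moves are credited equally. This is consistent with the observation that an intermediate decay rate gives the fastest learning.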

What This Means for Future AI Hardware

Overall, the study demonstrates that local temperature can serve as a practical, controllable internal memory for advanced learning rules, enabling fast, on‑chip training without massive digital overhead. The proposed synapse occupies about the same footprint as a conventional ReRAM cell, yet implements both a stable weight and a fading trace, and its energy cost per learning step is in the picojoule range. While relying on heat raises real challenges—temperature is hard to measure directly and can accelerate device wear—the work suggests that embracing, rather than fighting, electro‑thermal effects could unlock new classes of compact learning hardware. For non‑experts, the takeaway is that future AI chips may not just shuttle electrons: they could also compute with carefully engineered patterns of heat, bringing machine learning a step closer to the efficiency and adaptability of the human brain.

Citation: Pande, S., Bezugam, S.S., Bhattacharya, T. et al. NeoHebbian synapses to accelerate online training of neuromorphic hardware. Sci Rep 16, 6836 (2026). https://doi.org/10.1038/s41598-026-35641-z

Keywords: neuromorphic computing, spiking neural networks, ReRAM synapse, online learning, hardware-efficient AI