Clear Sky Science

Exploring oceanic depths: unveiling hidden treasures with IoT and ensembled deep hybrid learning model

Why exploring the seafloor is getting a high‑tech upgrade

The deep ocean and the ground beneath it hide cables, pipelines, ancient ruins, and fragile ecosystems. Yet murky water, swirling sand, and weak signals make it surprisingly hard to see what is down there. This article describes a new way to combine many kinds of sensors with artificial intelligence so that underwater robots and monitoring systems can spot important objects more accurately, more quickly, and with less power. The work could help protect marine life, safeguard infrastructure, and open up new frontiers in exploration.

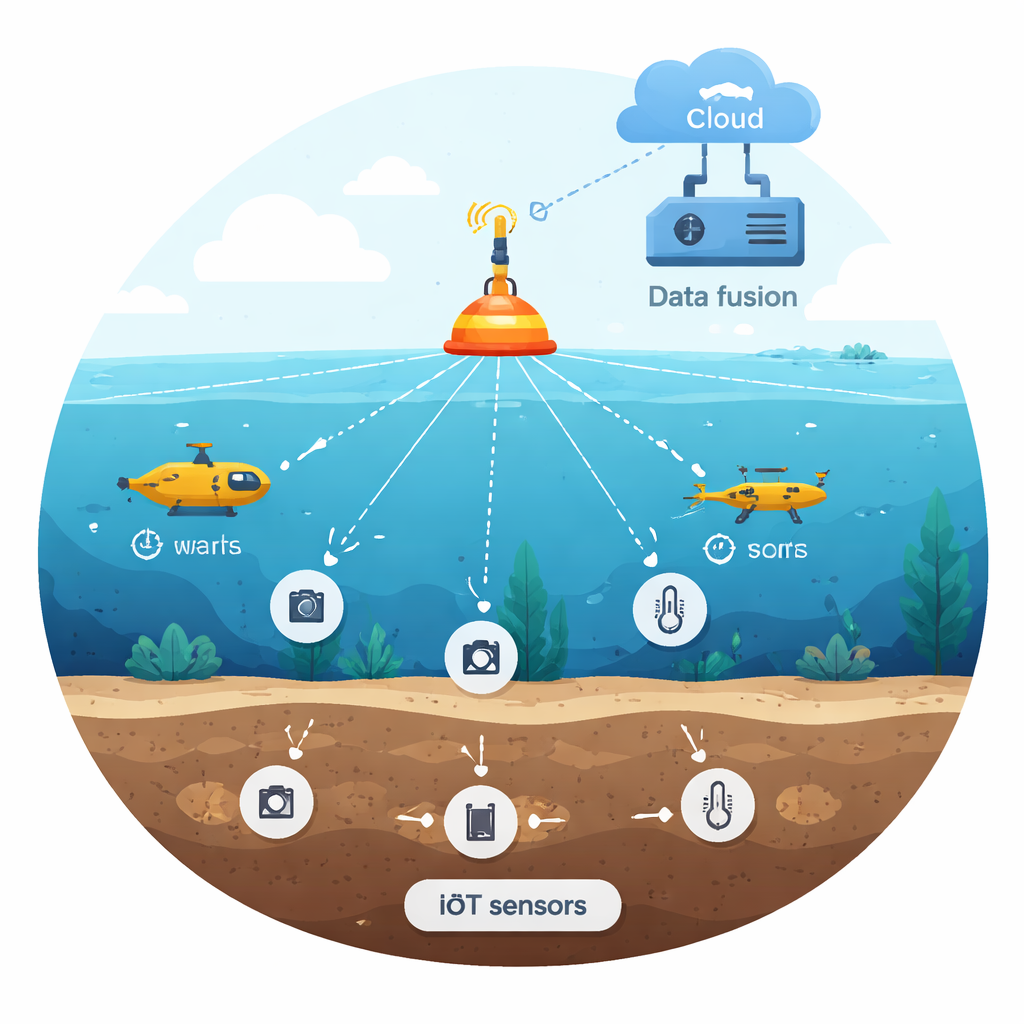

Many sensors, one shared picture of the deep

Today’s underwater detection gear tends to lean on a single sense: a sonar ping, a video camera, or one type of ground sensor. Each works well under ideal conditions, but they falter when water turns cloudy, light fades, or noise creeps in. The researchers instead imagine the ocean as a place watched by an Internet of Things: small, networked devices that listen, look, and feel in different ways. Their system fuses data from cameras, ground‑penetrating radar, seismic vibration sensors, thermal probes, and electromagnetic detectors into one shared stream for analysis. By blending these views, the system can still recognize an object even when one sensor is confused by mud, bubbles, or reflections.
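As a rough illustration of what "one shared stream" can mean in practice, the sketch below merges several per-sensor streams into a single time-ordered sequence. The `Reading` type, the sensor labels, and the scalar `value` field are hypothetical simplifications; the article does not describe the authors' actual data format or fusion mechanism.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Reading(NamedTuple):
    timestamp: float  # seconds on a shared clock (assumed)
    sensor: str       # hypothetical sensor label
    value: float      # simplified scalar payload


def fuse_streams(*streams: Iterable[Reading]) -> Iterator[Reading]:
    """Merge streams that are each already sorted by time into one stream."""
    return heapq.merge(*streams, key=lambda r: r.timestamp)


# Made-up readings, for illustration only.
camera = [Reading(0.0, "camera", 0.8), Reading(2.0, "camera", 0.7)]
radar = [Reading(0.5, "radar", 1.3), Reading(1.5, "radar", 1.1)]
thermal = [Reading(1.0, "thermal", 4.2)]

fused = list(fuse_streams(camera, radar, thermal))
for reading in fused:
    print(reading)
```

Because `heapq.merge` only assumes that each input is sorted, a sensor that drops out simply contributes fewer readings and the merged stream continues with the rest.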

Cleaning messy signals before they reach the brain

Raw data gathered underwater is far from perfect. It is speckled with random spikes, gaps, and odd readings from shifting currents, moving animals, and electronic interference. Before any smart algorithm makes decisions, the authors run this data through a careful cleaning pipeline. They use median filters to smooth away sudden noise without blurring real features, statistical checks to spot and remove outliers, and synchronization so that readings from different devices line up in time. They also rescale values so that one strong sensor does not drown out weaker ones, transform one‑dimensional radar traces into images, and use dimensionality reduction techniques to shrink hundreds of measurements down to a smaller, more informative set. All of this is designed so the learning system sees a crisp picture instead of a chaotic blur.
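Three of these cleaning steps can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the authors' pipeline: the window size, the outlier rule (a modified z-score based on the median absolute deviation, with the commonly used 3.5 cutoff), and the sample values are all choices made here for the example.

```python
from statistics import median


def median_filter(signal, window=3):
    """Replace each sample with the median of its neighbourhood.

    Windows are truncated at the edges of the signal.
    """
    half = window // 2
    out = []
    for i in range(len(signal)):
        lo, hi = max(0, i - half), min(len(signal), i + half + 1)
        out.append(median(signal[lo:hi]))
    return out


def remove_outliers(signal, threshold=3.5):
    """Drop samples whose modified z-score exceeds the threshold."""
    med = median(signal)
    mad = median(abs(x - med) for x in signal)  # median absolute deviation
    if mad == 0:
        return list(signal)  # no spread to judge outliers against
    return [x for x in signal if 0.6745 * abs(x - med) / mad <= threshold]


def min_max_scale(signal):
    """Rescale values to the range [0, 1]."""
    lo, hi = min(signal), max(signal)
    if hi == lo:
        return [0.0 for _ in signal]
    return [(x - lo) / (hi - lo) for x in signal]


raw = [1.0, 1.1, 9.0, 1.2, 1.0, 1.3, 1.1]  # made-up trace with one spike

smoothed = median_filter(raw)    # spike replaced by a neighbouring value
cleaned = remove_outliers(raw)   # spike dropped entirely
scaled = min_max_scale(smoothed)

print(smoothed)
print(cleaned)
print(scaled)
```

The two noise-handling steps trade off differently: the median filter keeps the trace the same length, which matters when readings must stay aligned in time, while outlier removal shortens it.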

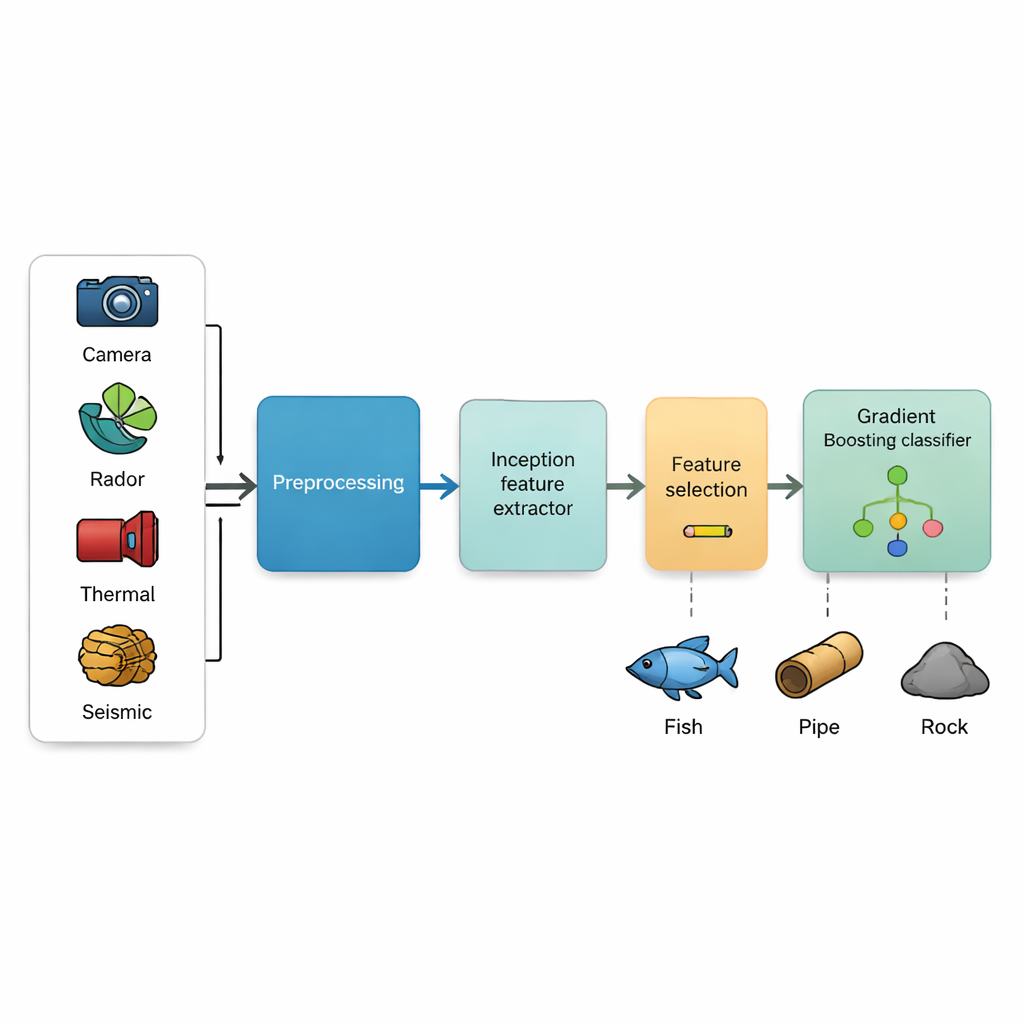

A hybrid learning engine that sees patterns and makes decisions

At the heart of the approach is what the authors call an Ensembled Deep Hybrid Learning (EDHL) model. It has two main parts that play complementary roles. First, an Inception‑style deep neural network acts as the “eyes,” scanning sensor images at several scales at once to catch both fine details, like a cable, and larger shapes, like a rock outcrop. From this it produces rich numerical descriptions of each scene. Second, a gradient boosting classifier acts as the “decision committee.” It builds many small decision trees in sequence, each one correcting the mistakes of those before it, gradually homing in on the right answer about what object is present. A feature selection step chooses only the most informative signals, which cuts memory and speeds up the final system without throwing away what matters most.
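The "decision committee" idea can be shown with a toy version of gradient boosting. The sketch below is a deliberately simplified stand-in, not the EDHL classifier: it uses one-split trees (stumps), a squared-error loss on 0/1 labels, and made-up two-dimensional feature vectors in place of the descriptions an Inception-style network would produce. The round count and learning rate are arbitrary choices for the example.

```python
def fit_stump(X, residuals):
    """Find the one-feature threshold split with the lowest squared error."""
    best = None
    for j in range(len(X[0])):
        for t in sorted({row[j] for row in X}):
            left = [r for row, r in zip(X, residuals) if row[j] <= t]
            right = [r for row, r in zip(X, residuals) if row[j] > t]
            if not left or not right:
                continue
            lm, rm = sum(left) / len(left), sum(right) / len(right)
            err = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
            if best is None or err < best[0]:
                best = (err, j, t, lm, rm)
    return None if best is None else best[1:]


def stump_predict(stump, row):
    j, t, left_value, right_value = stump
    return left_value if row[j] <= t else right_value


def fit_boosting(X, y, n_rounds=20, learning_rate=0.5):
    """Each round fits a stump to the errors left by the previous rounds."""
    base = sum(y) / len(y)
    preds = [base] * len(y)
    stumps = []
    for _ in range(n_rounds):
        residuals = [yi - pi for yi, pi in zip(y, preds)]
        stump = fit_stump(X, residuals)
        if stump is None:
            break  # no usable split exists
        stumps.append(stump)
        preds = [p + learning_rate * stump_predict(stump, row)
                 for p, row in zip(preds, X)]
    return base, learning_rate, stumps


def predict(model, row):
    base, learning_rate, stumps = model
    score = base + learning_rate * sum(stump_predict(s, row) for s in stumps)
    return 1 if score >= 0.5 else 0


# Hypothetical feature vectors; label 1 means "object present".
X = [[0.1, 0.9], [0.2, 0.8], [0.3, 0.7], [0.8, 0.2], [0.9, 0.3], [0.7, 0.1]]
y = [0, 0, 0, 1, 1, 1]

model = fit_boosting(X, y)
print([predict(model, row) for row in X])
print(predict(model, [0.15, 0.85]), predict(model, [0.85, 0.15]))
```

The key line is the residual computation: every new stump is trained on what the current committee still gets wrong, which is what distinguishes boosting from simply averaging independent trees.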

Putting the system to the test

To see whether their design works in practice, the team trained and evaluated it using a large public collection of underwater images that includes fish, coral, sponges, seaweed, shells, crustaceans, and other seafloor scenes. They compared many variations of data cleaning and feature selection, and benchmarked their model against well‑known deep learning systems such as classic convolutional networks, ResNet, DenseNet, EfficientNet, capsule networks, and even transformer‑based models. The EDHL setup, especially when paired with careful noise removal and correlation‑based feature selection, reached an accuracy above 98%, with far fewer false alarms and missed objects than its rivals. Importantly, it retained this high performance while running on modest hardware similar to what could be used on edge devices, not just powerful lab servers.
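The article does not spell out how the correlation-based feature selection works, so the following is only one plausible reading: keep features that correlate strongly with the label, and skip any feature that nearly duplicates one already kept. The greedy scheme, both thresholds, the feature names, and the data are assumptions made for this sketch.

```python
from math import sqrt


def pearson(a, b):
    """Pearson correlation coefficient; returns 0.0 for a constant input."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)


def select_features(columns, labels, min_relevance=0.5, max_redundancy=0.9):
    """Greedily keep relevant features that are not near-duplicates."""
    ranked = sorted(
        columns,
        key=lambda name: abs(pearson(columns[name], labels)),
        reverse=True,
    )
    selected = []
    for name in ranked:
        if abs(pearson(columns[name], labels)) < min_relevance:
            break  # remaining features are even less relevant
        if all(abs(pearson(columns[name], columns[kept])) <= max_redundancy
               for kept in selected):
            selected.append(name)
    return selected


# Hypothetical features for six samples; label 1 means "object present".
labels = [0, 0, 0, 1, 1, 1]
columns = {
    "edge_energy": [0.1, 0.2, 0.3, 0.8, 0.9, 0.7],
    "edge_energy_dup": [0.1, 0.2, 0.3, 0.8, 0.9, 0.6],  # near copy of the above
    "texture": [0.9, 0.5, 0.7, 0.2, 0.4, 0.1],
    "noise": [0.5, 0.1, 0.9, 0.5, 0.9, 0.1],
}

selected = select_features(columns, labels)
print(selected)
```

In this toy data the near-duplicate and the uninformative feature are both dropped, which mirrors the article's point that selection trims memory and compute while keeping the signals that matter.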

What this means for future ocean exploration

For non‑specialists, the takeaway is that the authors have built a smarter “sense‑and‑think” system for the underwater world. By joining diverse IoT sensors with a hybrid learning engine, they show that it is possible to spot and classify seafloor objects very reliably without needing bulky computers or perfect viewing conditions. In the future, this kind of technology could help fleets of underwater robots map the ocean floor, monitor fragile habitats, track pollution, and inspect buried infrastructure with fewer errors and lower energy use. It turns the messy, hostile environment of the deep into a place where digital senses can finally see clearly enough to unlock its hidden treasures.

Citation: Tada, S., Jeevanantham, V. Exploring oceanic depths: unveiling hidden treasures with IoT and ensembled deep hybrid learning model. Sci Rep 16, 5333 (2026). https://doi.org/10.1038/s41598-026-35634-y

Keywords: underwater object detection, IoT sensors, deep learning, marine exploration, multimodal sensing