Clear Sky Science · en

Horizontal saccade bias results from combination of saliency anisotropies and egocentric biases

How our eyes sweep the world

Every time you look around a room, read a sign, or watch a passing car, your eyes make rapid jumps called saccades. These tiny, fast motions help stitch together what you see. But these jumps are not evenly spread in all directions: people move their eyes left and right much more than up and down. This study asks a deceptively simple question with far-reaching implications for vision science, robotics, and even virtual reality: why are our eye movements so strongly biased toward the horizontal?

Patterns in pictures and in our gaze

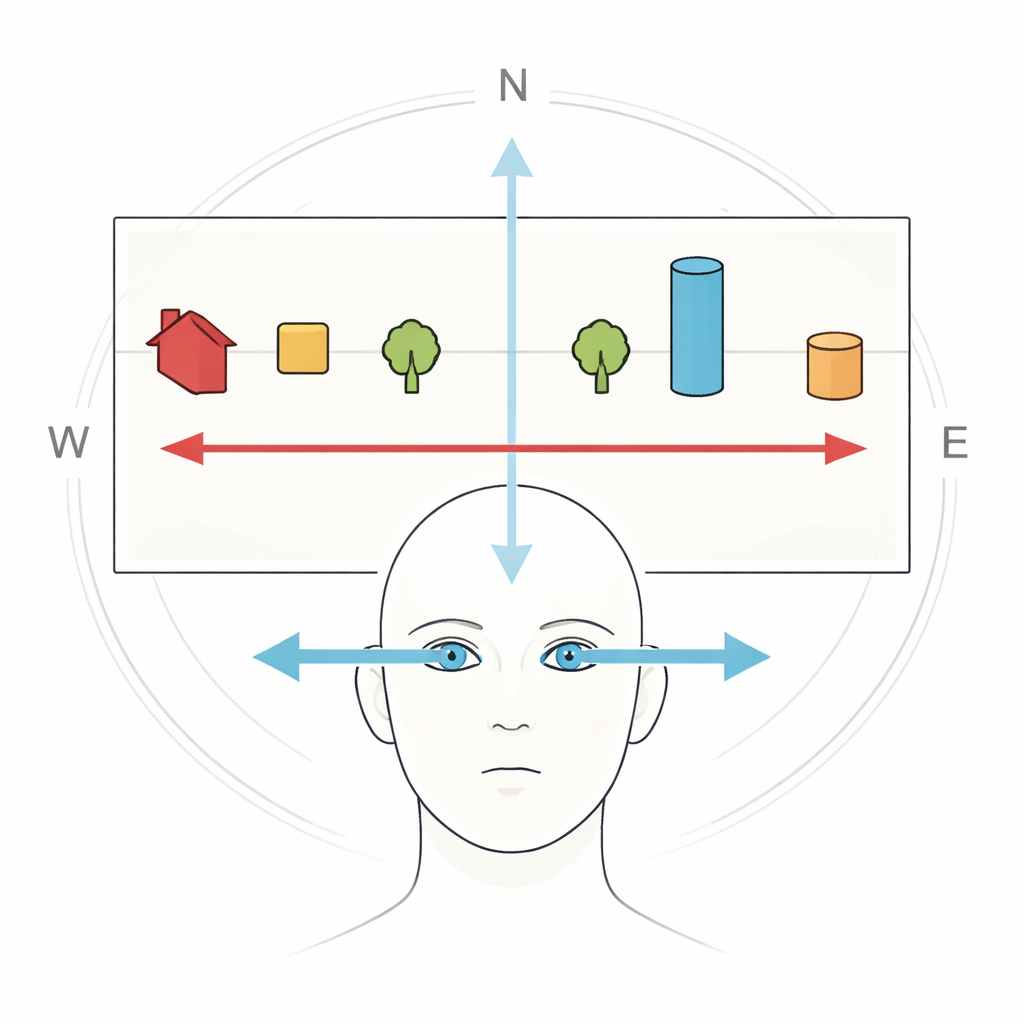

To explore this question, the researchers analyzed eye movement recordings from 48 people who freely looked at 141 natural images, including city streets, landscapes, and close-ups of objects. They confirmed a well-known pattern: when people look at pictures, their saccades tend to follow the main compass directions, and especially the horizontal. But this horizontal bias was not the same for every picture. Some images, such as a tight cluster of leaves, produced only a weak left–right preference, while others, like a wide scene with a clear horizon and rows of trees, led to very strong horizontal eye movements. This variation hinted that something in the pictures themselves was shaping how the eyes moved.
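To make "horizontal bias" concrete, here is a minimal sketch of one way to score it for a single image. The index used here, (horizontal count minus vertical count) divided by their sum, is an illustrative assumption and not necessarily the measure the researchers used; the function name and sample data are hypothetical.

```python
def horizontal_bias(saccades):
    """Score a list of (dx, dy) saccade vectors from -1 (all vertical) to +1 (all horizontal).

    Assumed, illustrative metric: a saccade counts as horizontal when it travels
    farther sideways than up or down, and as vertical in the opposite case.
    """
    horizontal = 0
    vertical = 0
    for dx, dy in saccades:
        if abs(dx) > abs(dy):
            horizontal += 1
        elif abs(dy) > abs(dx):
            vertical += 1
        # Exact diagonals and zero-length moves count toward neither group.
    total = horizontal + vertical
    return (horizontal - vertical) / total if total else 0.0


# Made-up saccade vectors, for illustration only.
wide_scene = [(5, 0), (-4, 1), (6, -1), (0, 3)]
leaf_cluster = [(3, 1), (1, 3), (-2, -1), (1, -4)]
print(horizontal_bias(wide_scene))    # 0.5
print(horizontal_bias(leaf_cluster))  # 0.0
```

A score near zero corresponds to the weak left–right preference seen for images like the leaf cluster, while a score near one corresponds to strongly horizontal viewing.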

Three ways to measure what stands out

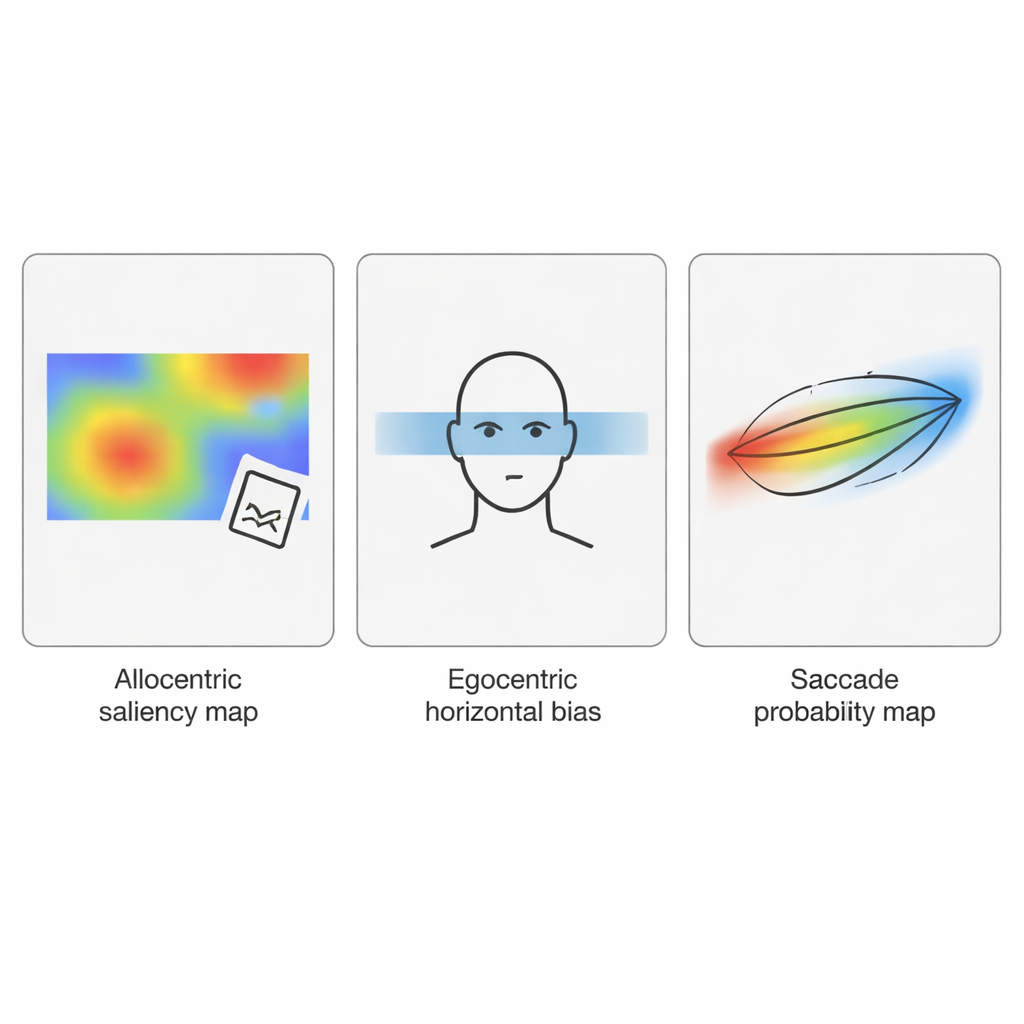

The team then looked for specific image features that might predict how strongly viewers’ eyes would favor horizontal moves. They tested three candidates. First, they measured the basic pattern of light and dark at different orientations, using a technique related to the Fourier transform, to see whether the image had more horizontal or vertical stripes. Second, they used a state-of-the-art computer model of visual saliency, which estimates where people are most likely to look in a scene. From these saliency maps, they simulated thousands of eye jumps to infer whether the model “preferred” horizontal moves. Third, they used a neural network trained to guess the orientation of an image relative to gravity, which captured more global structural cues like whether buildings and horizons were aligned. For each image, they distilled these three analyses into a single number describing how much each feature was biased toward horizontal orientations.
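The first of these measures can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not the authors' pipeline: it uses a naive two-dimensional discrete Fourier transform that is only practical for tiny images, and the index and function names are hypothetical.

```python
import cmath
import math


def dft2_power(image):
    """Power spectrum from a naive 2D discrete Fourier transform (tiny images only)."""
    rows, cols = len(image), len(image[0])
    power = [[0.0] * cols for _ in range(rows)]
    for v in range(rows):
        for u in range(cols):
            total = 0j
            for y in range(rows):
                for x in range(cols):
                    phase = -2j * math.pi * (u * x / cols + v * y / rows)
                    total += image[y][x] * cmath.exp(phase)
            power[v][u] = abs(total) ** 2
    return power


def stripe_orientation_index(image):
    """Return +1 if all pattern energy is in horizontal stripes, -1 if in vertical stripes.

    Assumed, illustrative index: horizontal stripes change brightness from top to
    bottom, so their energy sits at frequencies that are mostly vertical.
    """
    rows, cols = len(image), len(image[0])
    power = dft2_power(image)
    horizontal = 0.0
    vertical = 0.0
    for v in range(rows):
        for u in range(cols):
            # Convert bin indices to signed frequencies in cycles per pixel.
            fu = (u if u <= cols // 2 else u - cols) / cols
            fv = (v if v <= rows // 2 else v - rows) / rows
            if fu == 0 and fv == 0:
                continue  # skip the mean brightness term
            if abs(fv) > abs(fu):
                horizontal += power[v][u]
            elif abs(fu) > abs(fv):
                vertical += power[v][u]
    total = horizontal + vertical
    return (horizontal - vertical) / total if total > 0 else 0.0


# Synthetic 8x8 test patterns: two-pixel-wide bands.
horizontal_stripes = [[(y // 2) % 2 for x in range(8)] for y in range(8)]
vertical_stripes = [[(x // 2) % 2 for x in range(8)] for y in range(8)]
print(stripe_orientation_index(horizontal_stripes))
print(stripe_orientation_index(vertical_stripes))
```

The two printed values should sit close to +1 and -1 respectively. A real photograph would fall somewhere in between, and a practical implementation would use a fast Fourier transform library instead of the nested loops shown here.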

Salient structure drives the strongest bias

When the researchers compared these image measures with the actual eye movement data, one factor stood out: the orientation biases in the saliency maps. Images in which the saliency model predicted that the most attention-grabbing regions lined up more horizontally also produced more strongly horizontal saccades in human viewers. In contrast, the raw pattern of light–dark stripes and the global structural cue did not meaningfully predict the horizontal eye movement bias. Statistical models showed that the orientation bias of the saliency maps accounted for a notable share of the image-to-image variation in horizontal bias, and this held across two very different image sets. In other words, it is not just that the world has many horizontal and vertical lines; what matters most is how these lines organize the specific spots that attract our gaze.

Combining body-centered and world-centered preferences

However, saliency alone could not fully explain how people move their eyes. Previous experiments have shown that even when the scene is rotated or when people sit with their heads tilted, their eye movements are pulled partly by the orientation of the scene and partly by their own body and eye orientation. To capture this balance, the authors built a computational model that mixed two ingredients: an allocentric map (tied to the scene, using saliency predictions) and an egocentric map (tied to the observer, with a built-in preference for horizontal saccades around the center of gaze). The model then simulated sequences of saccades by combining these maps. For upright images, the mixed model produced eye-movement patterns that closely resembled human data, better than a model that relied on the scene alone or on the internal bias alone.
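The idea of mixing a scene-tied map with an observer-tied map can be sketched as below. Everything specific here is an assumption made for illustration: the weighted-sum blend, the `mix` parameter, the cosine-squared shape of the egocentric preference, and all function names are hypothetical and are not taken from the authors' model.

```python
import random


def egocentric_weight(dx, dy):
    """Assumed observer-centered preference: 1 for a purely horizontal jump, 0 for a vertical one."""
    if dx == 0 and dy == 0:
        return 0.0  # staying in place is not a saccade
    return dx * dx / (dx * dx + dy * dy)  # cosine squared of the angle from horizontal


def normalize(weights):
    """Scale weights to sum to 1; leave an all-zero list unchanged."""
    total = sum(weights)
    return [w / total for w in weights] if total > 0 else weights


def next_fixation(saliency, current, mix, rng):
    """Sample the next fixation from a blend of the scene map and the observer map.

    mix = 1.0 uses the saliency (allocentric) map only; mix = 0.0 uses the
    egocentric preference only. The blend must leave at least one nonzero weight.
    """
    rows, cols = len(saliency), len(saliency[0])
    cy, cx = current
    cells = [(y, x) for y in range(rows) for x in range(cols)]
    # The current cell gets zero weight so that every sample is a real jump.
    allo = normalize([0.0 if (y, x) == current else saliency[y][x] for y, x in cells])
    ego = normalize([egocentric_weight(x - cx, y - cy) for y, x in cells])
    blended = [mix * a + (1 - mix) * e for a, e in zip(allo, ego)]
    return rng.choices(cells, weights=blended, k=1)[0]


def simulate(saliency, start, mix, count, seed=0):
    """Return a list of (dx, dy) saccade vectors from a simulated scan path."""
    rng = random.Random(seed)
    fixation = start
    saccades = []
    for _ in range(count):
        target = next_fixation(saliency, fixation, mix, rng)
        saccades.append((target[1] - fixation[1], target[0] - fixation[0]))
        fixation = target
    return saccades


# A toy 5x5 saliency map whose only salient cells form a vertical column.
column_scene = [[1.0 if x == 2 else 0.0 for x in range(5)] for y in range(5)]
for mix in (1.0, 0.5, 0.0):
    jumps = simulate(column_scene, start=(0, 2), mix=mix, count=1000)
    share = sum(abs(dx) > abs(dy) for dx, dy in jumps) / len(jumps)
    print(mix, share)  # share of mostly horizontal jumps
```

With the scene map alone, every simulated jump in this toy example stays inside the vertical column; with the egocentric map alone, no jump is purely vertical. Intermediate values of `mix` fall between the two, which is the kind of compromise the combined model is meant to capture.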

When scenes tilt, big and small eye jumps disagree

The real test came when the researchers applied their model to tilted versions of the same scenes. In human observers, the distribution of saccade directions rotates partway toward the tilt of the picture, and large saccades follow the tilted scene more than small ones, which remain more tied to the person’s own sense of “horizontal.” The combined model reproduced the direction of both effects: its simulated saccades rotated toward the tilted scene, and larger simulated jumps showed more rotation than smaller ones. But the amount of rotation was smaller than what people actually showed. This mismatch suggests two things: current saliency models do not yet capture all the ways that tilted scenes pull our eyes, and the brain blends body-centered and world-centered information more flexibly than the model assumes.

Why this matters for understanding vision

For a non-specialist, the key takeaway is that our preference for looking left and right is not a quirk or flaw; it reflects a partnership between two forces. One is built into our eye-movement system itself, favoring horizontal jumps even in the dark. The other comes from the structure of the world we live in, where horizons, ground planes, buildings, and many objects line up along horizontal and vertical axes and shape which parts of a scene stand out. The study suggests that the egocentric horizontal bias may have arisen because it matches the statistics of the natural scenes we typically encounter when upright. Understanding this interplay not only clarifies how we see but also offers guidance for building more human-like computer vision systems and designing visual environments that work with, rather than against, our eyes’ natural tendencies.

Citation: Reeves, S.M., Otero-Millan, J. Horizontal saccade bias results from combination of saliency anisotropies and egocentric biases. Sci Rep 16, 6027 (2026). https://doi.org/10.1038/s41598-026-35572-9

Keywords: eye movements, visual attention, natural scenes, saliency models, saccades