Clear Sky Science

Blind recognition of channel codes based on dual-branch feature fusion convolutional neural networks

Smarter radios for crowded airwaves

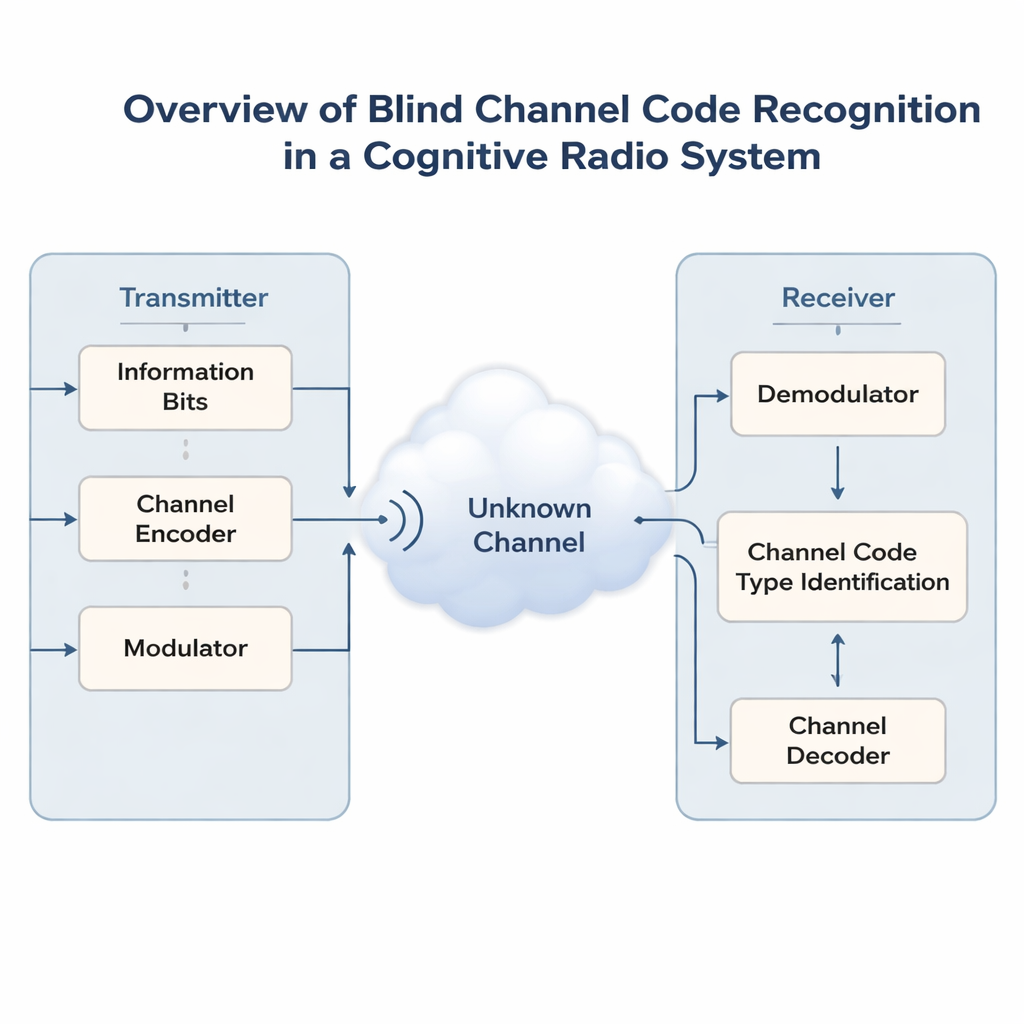

Wireless networks are getting crowded as phones, sensors and vehicles all compete for the same airwaves. To avoid chaos, future “cognitive radios” will need to listen first and then intelligently share spectrum that already belongs to others. A key problem is that these radios often do not know how the original signal was protected from errors before it was sent. This paper introduces a new artificial-intelligence method that can guess the hidden error-correcting code used on a signal—without any prior information—making it easier for smart receivers to lock on and communicate reliably.

Why hidden error-correcting codes matter

Modern wireless links protect data with error-correcting codes, which add carefully structured redundancy so that receivers can fix mistakes caused by noise and interference. Different situations call for different codes: simple Hamming codes, more powerful BCH and Reed–Solomon codes, flexible LDPC and Polar codes, or streaming-style convolutional and Turbo codes. In non-cooperative settings—such as military communications, spectrum monitoring, or open, shared bands—receivers cannot ask a transmitter which code it is using. They see only a noisy stream of bits. Correctly guessing the coding scheme, a task called blind code recognition, is essential before any meaningful decoding or higher-level processing can happen.
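To make "carefully structured redundancy" concrete, here is a minimal Python sketch of a Hamming(7,4) code, the simplest of the families listed above. It is a textbook construction, not something taken from the paper, and the function names are illustrative. Four data bits gain three parity bits, and the receiver uses those parity checks to locate and flip back any single corrupted bit.

```python
def hamming74_encode(data):
    """Encode 4 data bits as the 7-bit codeword [p1, p2, d1, p3, d2, d3, d4]."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # parity over codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over codeword positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over codeword positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword):
    """Correct at most one flipped bit, then return the 4 data bits."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The syndrome, read as a binary number, is the 1-based position
    # of the flipped bit, or 0 when every parity check passes.
    error_position = s1 + 2 * s2 + 4 * s3
    if error_position:
        c[error_position - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]


message = [1, 0, 1, 1]
sent = hamming74_encode(message)
received = list(sent)
received[4] ^= 1  # the channel flips one bit
recovered = hamming74_decode(received)
print(sent, received, recovered)
```

A blind receiver sees only streams like `received` and has to infer, from regularities such as these parity relationships, which family of code produced them.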

Limits of earlier recognition methods

Earlier research either focused on one family of codes at a time or relied on hand-crafted statistics such as how often bits repeat, how random a sequence looks, or algebraic tricks tailored to a specific code. These approaches might tell you “this is some kind of block code” but struggle to distinguish several popular formats at once. Deep learning has recently improved matters by treating bit streams much like sentences fed to a language model. However, most neural networks look either only at raw sequences or only at manually designed features, and typically handle at most two or three code types together. Their accuracy drops sharply as the bit error rate rises, which is exactly when robust recognition is most needed.

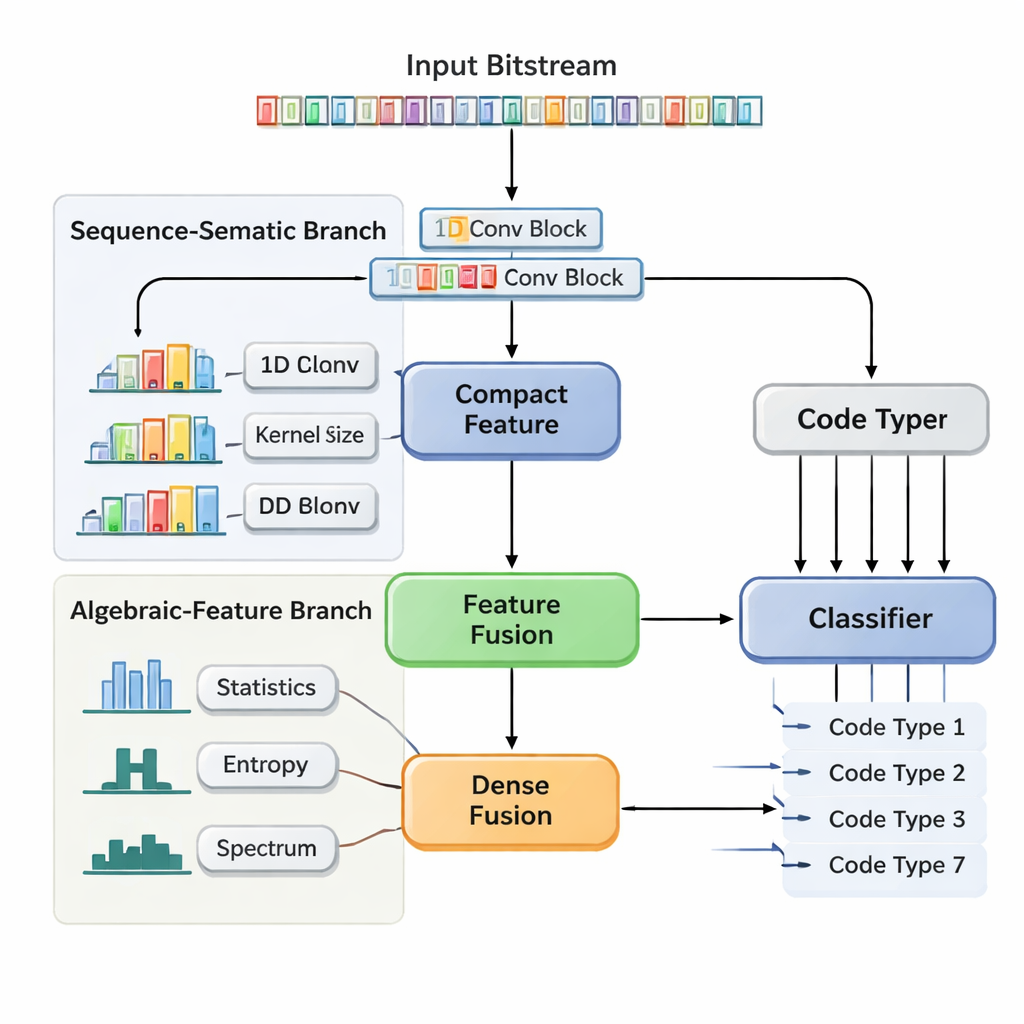

A two-track neural network that looks at structure and statistics

The authors propose a Dual-Branch Feature Fusion Convolutional Neural Network (DBFCNN) that tackles blind recognition of seven widely used codes in a single shot: Hamming, BCH, Reed–Solomon, LDPC, Polar, convolutional, and Turbo codes. The first branch treats the incoming bits as short “words,” grouping them into 8-bit chunks and mapping each chunk into a dense vector, much like a word embedding in natural language processing. It then applies a set of one-dimensional convolutions with different window sizes and dilation rates. Small filters capture short-range patterns, such as the tight structure of simple block codes, while larger and dilated filters span longer ranges, picking up traces of interleavers and parity patterns typical of Turbo and LDPC codes. A global pooling step squeezes this into a compact summary of the sequence’s structural “fingerprint.”
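The first branch can be sketched in plain Python as follows. This is an illustration under stated assumptions, not the authors' implementation: the embedding size, kernel sizes, dilation rates, the ReLU activation and the choice of max pooling are all placeholders, the weights are random rather than trained, and every name is hypothetical. A real model would use a deep-learning framework with learned parameters.

```python
import random


def bits_to_symbols(bits):
    """Group bits into 8-bit symbols (0..255), dropping any trailing partial chunk."""
    usable = len(bits) - len(bits) % 8
    return [int("".join(map(str, bits[i:i + 8])), 2) for i in range(0, usable, 8)]


def dilated_conv1d(sequence, kernel, dilation):
    """Slide a kernel over a sequence of vectors, with taps `dilation` steps apart.

    `sequence` is a list of embedding vectors and `kernel` holds one weight
    vector per tap. Each output passes through a ReLU (an assumption).
    """
    span = (len(kernel) - 1) * dilation
    outputs = []
    for start in range(len(sequence) - span):
        total = 0.0
        for tap, weights in enumerate(kernel):
            vector = sequence[start + tap * dilation]
            total += sum(w * x for w, x in zip(weights, vector))
        outputs.append(max(total, 0.0))
    return outputs


def structural_fingerprint(bits, embedding, kernels):
    """Embed 8-bit symbols, apply each (kernel, dilation) pair, then max-pool globally."""
    sequence = [embedding[symbol] for symbol in bits_to_symbols(bits)]
    return [max(dilated_conv1d(sequence, kernel, dilation)) for kernel, dilation in kernels]


rng = random.Random(0)
EMBED_DIM = 4  # placeholder embedding size


def random_kernel(taps):
    return [[rng.uniform(-1, 1) for _ in range(EMBED_DIM)] for _ in range(taps)]


embedding = [[rng.uniform(-1, 1) for _ in range(EMBED_DIM)] for _ in range(256)]
kernels = [(random_kernel(3), 1), (random_kernel(3), 4), (random_kernel(5), 2)]
bits = [rng.randint(0, 1) for _ in range(256)]
fingerprint = structural_fingerprint(bits, embedding, kernels)
print(fingerprint)
```

With dilation 1, a three-tap kernel sees three neighbouring symbols. With dilation 4, the same three taps cover nine symbols, which is how dilated filters reach longer-range structure without extra weights.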

Hand-crafted measurements that steady the model

The second branch takes a very different view. Instead of raw bits, it computes seven families of descriptive statistics that engineers know are sensitive to coding choices. These include how often runs of identical bits occur, how complex the sequence is, how random it appears, how strongly it correlates with shifted copies of itself, and how its energy is distributed across frequencies. Additional measures probe how “linear” the code seems and how local blocks of bits behave. Because these statistics change slowly with added noise, they give the network a stable, noise-tolerant perspective. A small neural sub-network turns this feature vector into another compact representation. Finally, DBFCNN concatenates the two branches, normalizes and regularizes the combined features, and feeds them to a classifier that outputs probabilities for each of the seven code types.
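A few of the statistics named above are easy to compute directly. The sketch below covers only run lengths and autocorrelation, plus the fraction of ones as a simple extra measure. The exact definitions, lags and normalizations used in the paper are not given here, so the choices below are assumptions and the function names are hypothetical.

```python
from collections import Counter
from itertools import groupby


def run_length_histogram(bits):
    """Count how many runs of identical bits there are of each length."""
    return Counter(len(list(group)) for _, group in groupby(bits))


def autocorrelation(bits, lag):
    """Correlation of the sequence with a copy shifted by `lag`, using a +1/-1 mapping."""
    signal = [1 if bit else -1 for bit in bits]
    count = len(signal) - lag
    return sum(signal[i] * signal[i + lag] for i in range(count)) / count


def feature_vector(bits, max_run=4, lags=(1, 2, 8)):
    """Build a small statistical feature vector (illustrative choices throughout)."""
    runs = run_length_histogram(bits)
    total_runs = sum(runs.values())
    features = [runs.get(length, 0) / total_runs for length in range(1, max_run + 1)]
    features += [autocorrelation(bits, lag) for lag in lags]
    features.append(sum(bits) / len(bits))  # fraction of ones
    return features


alternating = [0, 1] * 8
print(run_length_histogram([0, 0, 1, 1, 1, 0]))
print(feature_vector(alternating))
```

In a dual-branch design, a vector like this would pass through a small sub-network and then be concatenated with the convolutional branch's summary before classification.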

Proving reliability across noisy conditions

To test DBFCNN rigorously, the authors generated more than a million synthetic examples covering the seven code families, multiple parameter settings, and bit error rates ranging from nearly error-free to extremely noisy. They trained and tested the model using careful Monte Carlo procedures to avoid hidden overlaps between training and test data. Across this wide range, DBFCNN consistently outperformed three strong baselines, including a previous multiscale dilated CNN designed specifically for this task. At low to moderate error rates (bit error rate below 10⁻³), the new network correctly identified the code type about 98% of the time, an absolute improvement of roughly 5 to 11 percentage points over the strongest prior model. Even when the noise became quite severe, DBFCNN retained a clear advantage and could still recognize several complex codes with high confidence.
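The notion of a bit error rate can be illustrated with a binary symmetric channel, in which each bit is flipped independently with a fixed probability. This is a generic sketch of how noisy test sequences might be produced. The paper's actual data-generation pipeline is not described here, and the names and parameter values below are assumptions.

```python
import random


def binary_symmetric_channel(bits, bit_error_rate, rng):
    """Flip each bit independently with probability `bit_error_rate`."""
    return [bit ^ (rng.random() < bit_error_rate) for bit in bits]


def empirical_ber(sent, received):
    """Fraction of positions where the received bit differs from the sent bit."""
    return sum(s != r for s, r in zip(sent, received)) / len(sent)


rng = random.Random(42)  # fixed seed so the experiment is repeatable
sent = [rng.randint(0, 1) for _ in range(20000)]

for target in (0.001, 0.01, 0.1):
    received = binary_symmetric_channel(sent, target, rng)
    print(target, empirical_ber(sent, received))
```

Sweeping the target error rate in this way yields sequences of the same code at different noise levels, which is the kind of range a recognizer has to cope with.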

What this means for future smart radios

For a non-expert, the key takeaway is that this work shows how combining domain knowledge with deep learning can make radios far more self-reliant. DBFCNN learns the subtle “accent” of different error-correcting codes in noisy bit streams by listening in two ways at once: one branch picks out structural patterns in the raw bits, while the other measures statistical clues that stay stable under noise. By fusing these views, the system can usually tell which of the seven coding schemes is in use, without any cooperation from the sender. That ability is a building block for cognitive radios that can join unfamiliar networks, adapt to changing environments, and make better use of scarce spectrum, all while keeping communications reliable even when the airwaves are crowded and noisy.

Citation: Ma, Y., Lei, Y., Liu, C. et al. Blind recognition of channel codes based on dual-branch feature fusion convolutional neural networks. Sci Rep 16, 5159 (2026). https://doi.org/10.1038/s41598-026-35558-7

Keywords: cognitive radio, channel coding, deep learning, error correction, signal classification