Clear Sky Science

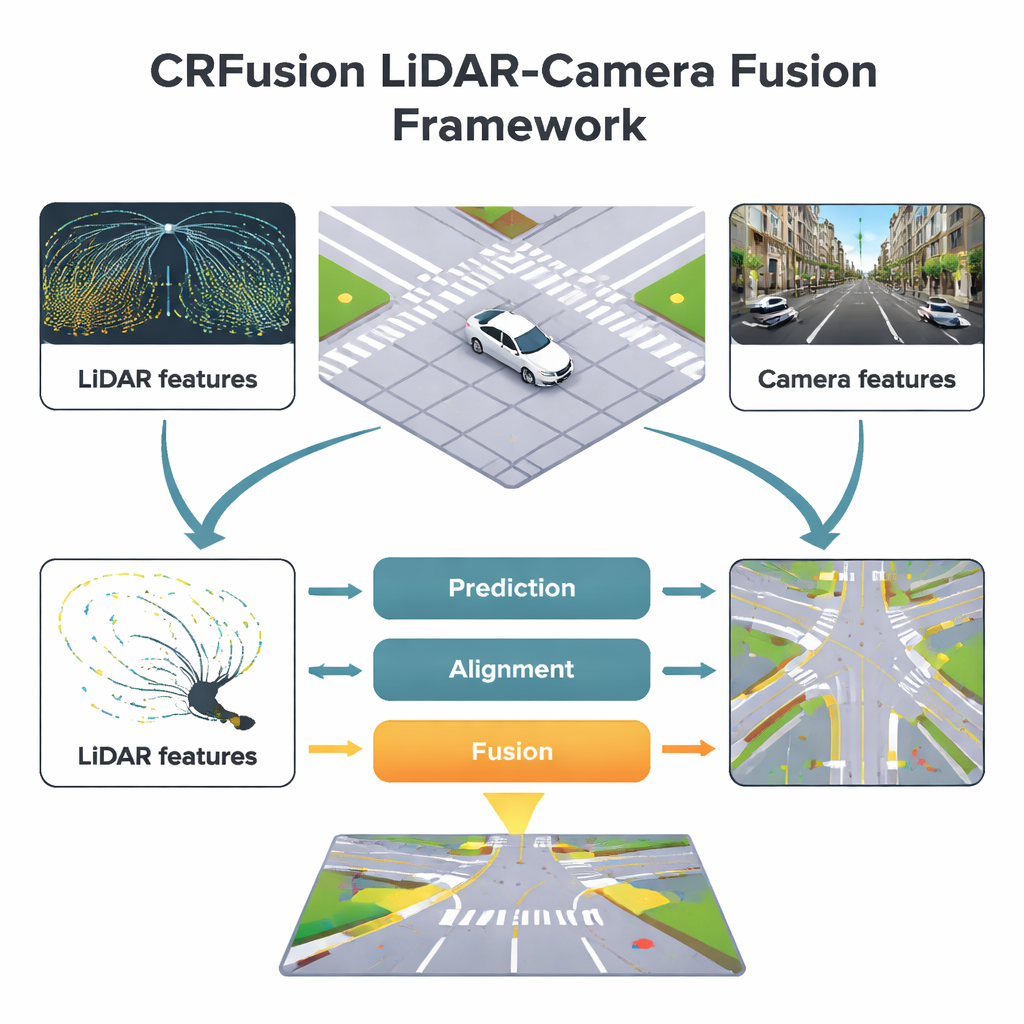

CRFusion: a novel LiDAR-camera fusion network for BEV map construction

Smarter Digital Maps for Self-Driving Cars

For a self-driving car, understanding the road ahead is like reading a constantly changing map. The vehicle must know exactly where lanes, crosswalks and road edges are, even when it’s dark, raining or something blocks the view. This paper introduces CRFusion, a new system that combines laser scanners and cameras to build cleaner, more reliable overhead maps of city streets. These maps help autonomous cars stay in their lanes and anticipate where pedestrians may cross with greater confidence.

Why Two “Electronic Eyes” Are Better Than One

Modern self-driving cars rely heavily on two main sensors. Cameras capture rich color images, making it easy to spot lane paint, traffic signs and crosswalks far ahead. Laser scanners, or LiDAR, send out light pulses and measure how long they take to return, building an accurate 3D picture of distances and shapes around the car. Each has weaknesses: camera images can be fooled by poor lighting or bad weather, while LiDAR measurements become sparse and incomplete as distance increases. CRFusion is designed to combine the two so that each sensor covers for the other’s weaknesses. The result is a single bird’s-eye view (BEV): a top-down map in which every pixel represents a small patch of ground labeled as lane, divider or pedestrian crossing.

Filling in the Gaps in Long-Range Vision

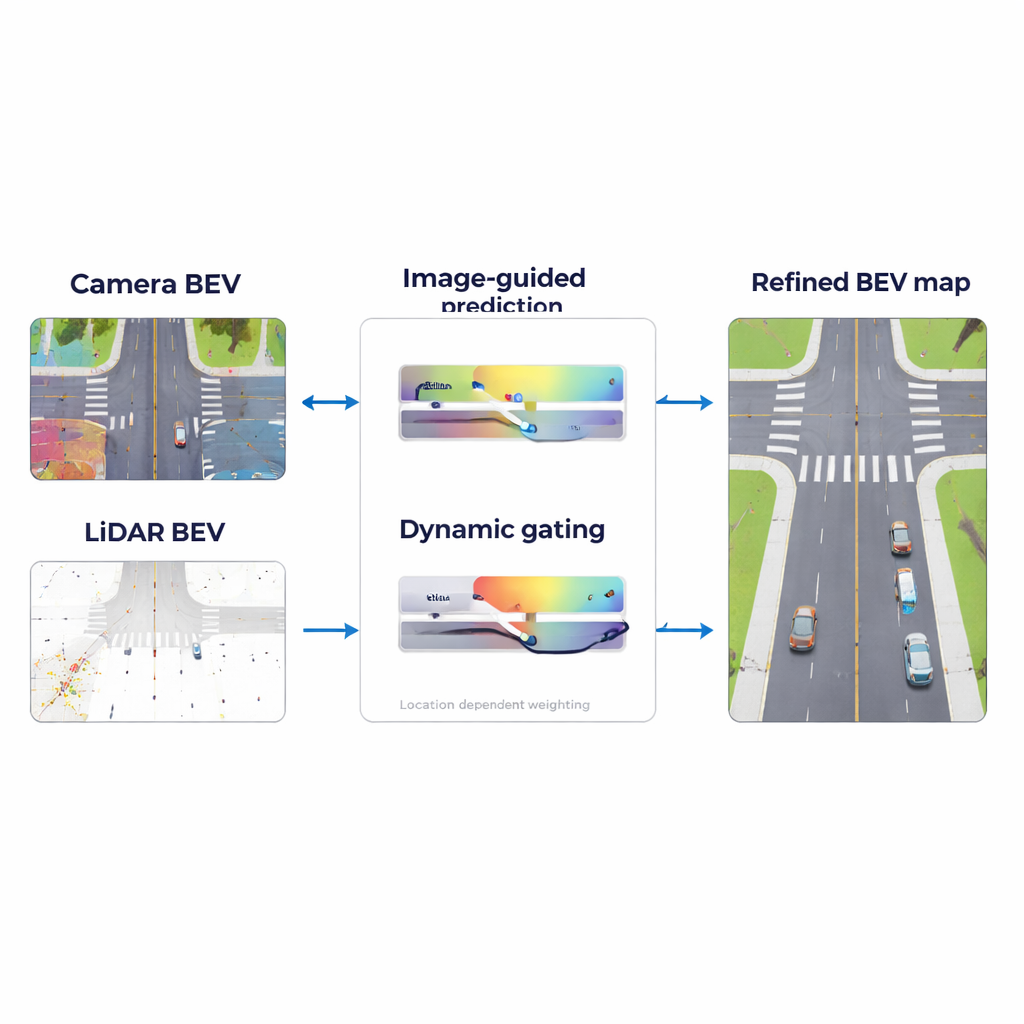

A key challenge in road mapping is that LiDAR often misses details far from the car, precisely where planning ahead matters most. CRFusion tackles this with a “LiDAR Range Predictor.” In simple terms, the system looks at what the camera sees far down the road and uses that information to guess what the LiDAR would have measured if its scan had been dense and complete. A mechanism called attention helps the model decide which parts of the camera’s view best explain the empty or uncertain areas in the LiDAR-based map. The model then refines this prediction by comparing nearby regions in the map. This strengthens consistent patterns such as continuous lane lines and reduces random noise.
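For readers curious about the mechanics, the two steps above can be sketched in a few lines of Python with NumPy. This is a minimal illustration under stated assumptions, not the authors’ code. In the sketch, LiDAR-based map cells act as the queries and camera features act as the keys and values in single-head scaled dot-product attention. The weight matrices `w_q`, `w_k` and `w_v` are random stand-ins for learned parameters. The paper’s refinement step is replaced by a simple 3×3 neighborhood average, and all function names are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(lidar_q, cam_kv, w_q, w_k, w_v):
    """Single-head scaled dot-product attention (illustrative assumption).

    lidar_q: (N, C) features for N BEV cells, used as queries.
    cam_kv:  (M, C) camera features, used as keys and values.
    Returns (N, C): one camera-informed feature vector per BEV cell.
    """
    q = lidar_q @ w_q
    k = cam_kv @ w_k
    v = cam_kv @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])  # (N, M) similarity scores
    weights = softmax(scores, axis=-1)       # each row sums to 1
    return weights @ v                       # weighted mix of camera values

def neighborhood_smooth(bev, k=3):
    """Average every cell with its k x k neighbors (edges padded by copying).

    bev: (H, W, C) BEV feature map. A stand-in for the paper's refinement.
    """
    pad = k // 2
    padded = np.pad(bev, ((pad, pad), (pad, pad), (0, 0)), mode="edge")
    H, W, _ = bev.shape
    out = np.zeros(bev.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + H, dx:dx + W]
    return out / (k * k)

# Usage with random data: an 8 x 8 BEV grid, 16 channels, 32 camera features.
rng = np.random.default_rng(0)
H, W, C, M = 8, 8, 16, 32
lidar = rng.standard_normal((H * W, C))
camera = rng.standard_normal((M, C))
w_q, w_k, w_v = (rng.standard_normal((C, C)) * 0.1 for _ in range(3))
predicted = cross_attention(lidar, camera, w_q, w_k, w_v).reshape(H, W, C)
refined = neighborhood_smooth(predicted)
print(refined.shape)  # (8, 8, 16)
```

Because every BEV cell can attend to any camera feature, a distant cell with few LiDAR returns can still borrow information from the parts of the image that match it best.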

Getting Sensors to Agree on the Same Picture

Even when both camera and LiDAR are working, they do not line up perfectly. Small errors in distance estimates or sensor placement can cause lane markings seen by the camera to appear slightly shifted compared with LiDAR readings. CRFusion introduces an alignment step that gently “warps” the camera’s top-down features so they better match the LiDAR view. This is done using a lightweight correction network that predicts how each point in the camera’s map should be nudged. As a result, the two sensor views describe the same physical road positions, making later fusion far more trustworthy.
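The alignment idea can be illustrated with a small NumPy sketch. For illustration only, it assumes that the correction network outputs a (dy, dx) offset for every BEV cell. Camera features are then resampled at the shifted positions using bilinear interpolation, a common way to implement this kind of warping. `predict_offsets` is a hypothetical one-layer stand-in for the paper’s network. In the usage example, the offsets are set by hand to show the effect.

```python
import numpy as np

def bilinear_sample(feat, ys, xs):
    """Sample feat (H, W, C) at fractional coordinates ys, xs (each H x W).

    Coordinates outside the map are clamped to the border.
    """
    H, W, _ = feat.shape
    ys = np.clip(ys, 0, H - 1)
    xs = np.clip(xs, 0, W - 1)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, H - 1)
    x1 = np.minimum(x0 + 1, W - 1)
    wy = (ys - y0)[..., None]  # fractional parts, broadcast over channels
    wx = (xs - x0)[..., None]
    top = feat[y0, x0] * (1 - wx) + feat[y0, x1] * wx
    bottom = feat[y1, x0] * (1 - wx) + feat[y1, x1] * wx
    return top * (1 - wy) + bottom * wy

def predict_offsets(cam_bev, lidar_bev, w):
    """Hypothetical correction network: a per-cell linear layer (like a 1x1
    convolution) on the concatenated features, giving one (dy, dx) per cell."""
    x = np.concatenate([cam_bev, lidar_bev], axis=-1)  # (H, W, 2C)
    return x @ w                                       # (H, W, 2)

def align_camera_to_lidar(cam_bev, offsets):
    """Resample camera features at (y + dy, x + dx) for every BEV cell."""
    H, W, _ = cam_bev.shape
    gy, gx = np.meshgrid(np.arange(H), np.arange(W), indexing="ij")
    return bilinear_sample(cam_bev, gy + offsets[..., 0], gx + offsets[..., 1])

# Usage: the camera places a lane one cell to the left of where LiDAR sees it.
cam = np.zeros((6, 8, 1)); cam[:, 3] = 1.0      # camera: lane at column 3
lidar = np.zeros((6, 8, 1)); lidar[:, 4] = 1.0  # LiDAR: lane at column 4
offsets = np.zeros((6, 8, 2)); offsets[..., 1] = -1.0  # read one cell to the left
aligned = align_camera_to_lidar(cam, offsets)
print(np.allclose(aligned, lidar))  # True
```

Bilinear sampling allows offsets of a fraction of a cell, which suits the small, uneven misalignments described above. In a trained system, the offsets would come from the learned network rather than being typed in.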

Adapting to Day, Night and Bad Weather

Once the two sensor views are aligned, the system still must decide how much to trust each one at every location. A sunny highway with clear lane paint is very different from a rainy night in a crowded city. CRFusion addresses this with a “Dynamic Gated Fusion” module that assigns flexible weights to the camera and LiDAR features across the map. Where LiDAR returns are strong, such as nearby road edges or solid objects, the system leans more on LiDAR. Where visual clues are clearer, such as bright crosswalk stripes at a distance, it relies more on the camera. These weights are learned automatically from data, allowing the fusion strategy to shift smoothly as conditions change.
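A common way to implement this kind of learned weighting is a sigmoid gate, sketched below in NumPy. The summary does not describe the exact form of CRFusion’s Dynamic Gated Fusion module. The formula fused = g * camera + (1 - g) * LiDAR, the function name `gated_fusion` and the parameters `w` and `b` are therefore assumptions. In a trained model, `w` and `b` would be learned from data. Here they are fixed by hand.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gated_fusion(cam_bev, lidar_bev, w, b):
    """Blend two aligned BEV maps with a per-cell, per-channel gate g in (0, 1).

    cam_bev, lidar_bev: (H, W, C); w: (2C, C); b: (C,).
    g near 1 trusts the camera; g near 0 trusts LiDAR.
    """
    x = np.concatenate([cam_bev, lidar_bev], axis=-1)  # (H, W, 2C)
    g = sigmoid(x @ w + b)                              # (H, W, C)
    fused = g * cam_bev + (1.0 - g) * lidar_bev
    return fused, g

# Usage: with zero weights and bias the gate is exactly 0.5, an even split.
rng = np.random.default_rng(0)
cam = rng.standard_normal((4, 4, 8))
lidar = rng.standard_normal((4, 4, 8))
w = np.zeros((16, 8))
b = np.zeros(8)
fused, gate = gated_fusion(cam, lidar, w, b)
print(np.allclose(fused, 0.5 * (cam + lidar)))  # True
```

The gate is computed from both inputs at every location, so the balance between the sensors can change across the map. In this sketch a strong, nearby LiDAR signal could push g toward 0, while clear distant lane paint could push it toward 1.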

Proving the Benefits in Real-World Data

The researchers tested CRFusion on nuScenes, a widely used autonomous driving dataset that includes many driving scenes recorded in different cities, weather conditions and times of day. Compared with leading earlier methods, CRFusion produced more accurate lane boundaries, dividers and pedestrian crossings, especially at medium and long distances of up to 90 meters. It improved standard quality measures both for overlap with ground-truth maps and for detecting individual lane segments. An “ablation” study, in which components are removed one at a time, showed that each major component (the long-range prediction, the alignment network and the dynamic fusion) contributed meaningful gains. Visual examples showed smoother lane continuity and clearer crosswalks, suggesting that the system’s maps more closely resemble carefully drawn road plans.
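To make “overlap with ground-truth maps” concrete: a widely used overlap measure for BEV map segmentation is intersection over union (IoU). For each class, it counts the cells where both the prediction and the ground truth mark that class, then divides by the number of cells where either marks it. This summary does not name the exact metric used, so the sketch below is only illustrative. `per_class_iou` is a hypothetical helper that works on boolean masks with one channel per map class.

```python
import numpy as np

def per_class_iou(pred, gt):
    """IoU per class for boolean BEV masks of shape (K, H, W).

    Returns a length-K float array; a class absent from both masks gives nan.
    """
    inter = np.logical_and(pred, gt).sum(axis=(1, 2))
    union = np.logical_or(pred, gt).sum(axis=(1, 2))
    # np.maximum avoids dividing by zero; those entries are replaced by nan.
    return np.where(union > 0, inter / np.maximum(union, 1), np.nan)

# Usage: a 4-cell ground-truth lane; the prediction finds 2 of its cells
# and adds 1 wrong cell, so IoU = 2 / (4 + 1).
gt = np.zeros((1, 4, 4), dtype=bool)
gt[0, :, 2] = True
pred = np.zeros_like(gt)
pred[0, :2, 2] = True
pred[0, 0, 3] = True
print(per_class_iou(pred, gt))  # [0.4]
```

A broken or shortened lane line lowers the intersection, which is one reason why improved long-range lane continuity appears as a higher overlap score.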

What This Means for Everyday Driving

For non-specialists, the main takeaway is that CRFusion helps self-driving cars build cleaner, more dependable street maps by letting cameras and LiDAR “talk to each other” in a smarter way. By predicting missing LiDAR information from camera views, lining up the two sensor perspectives and adjusting how much it trusts each one based on the situation, the system reduces blind spots and guesswork. This brings autonomous vehicles a step closer to handling complex, cluttered city streets with the same confidence as a careful human driver following well-marked lanes and crossings.

Citation: Guan, Y., Wang, T., Cheng, Q. et al. CRFusion: a novel LiDAR-camera fusion network for BEV map construction. Sci Rep 16, 5169 (2026). https://doi.org/10.1038/s41598-026-35551-0

Keywords: autonomous driving, LiDAR-camera fusion, HD semantic maps, bird’s-eye view perception, urban road mapping