Clear Sky Science · en

Quantum denoising autoencoder improves retinal fundus image quality for early diabetic retinopathy screening

Sharper eye scans to protect sight

For millions of people living with diabetes, a simple photograph of the back of the eye can reveal the earliest warning signs of blindness. But these retinal images are often grainy or blurred by camera noise, making tiny, sight‑saving details hard to see. This paper explores an unusual ally in the fight against blindness: a new image‑cleaning method that mixes today’s deep learning with tomorrow’s quantum computers to produce clearer eye scans for early diabetic retinopathy screening.

Why small details in eye photos matter

Diabetic retinopathy is a disease in which high blood sugar slowly damages the fine blood vessels in the retina. Doctors look for small bulges in vessels (microaneurysms), thin branching capillaries, and subtle texture changes to catch the disease before vision is lost. These signs are often only a few pixels wide in a standard fundus photograph. Unfortunately, real‑world images captured in screening centers are degraded by several sources of noise and blur: sensor imperfections, dim lighting, and motion. Traditional clean‑up tools, such as Gaussian smoothing or median filters, can remove some of this noise, but they tend to wash away exactly the delicate structures doctors care about most.
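A toy example can make the last point concrete. The sketch below is not from the paper: it builds a hypothetical 9×9 image with a single bright, one‑pixel‑wide "vessel" and runs a plain 3×3 median filter over it, written with NumPy. Because the vessel covers only three of the nine pixels in any window, the median is always background, and the vessel vanishes.

```python
import numpy as np

def median_filter_3x3(img):
    """Apply a 3x3 median filter with edge-replicating padding."""
    padded = np.pad(img, 1, mode="edge")
    h, w = img.shape
    # Stack the nine shifted copies of the image, then take the per-pixel median.
    windows = np.stack([padded[dy:dy + h, dx:dx + w]
                        for dy in range(3) for dx in range(3)])
    return np.median(windows, axis=0)

# Hypothetical toy image: dark background, one bright vertical line 1 pixel wide.
image = np.zeros((9, 9))
image[:, 4] = 1.0

filtered = median_filter_3x3(image)

vessel_before = image[:, 4].mean()    # average brightness along the line
vessel_after = filtered[:, 4].mean()  # same pixels after filtering
print("vessel brightness before:", vessel_before)
print("vessel brightness after: ", vessel_after)
```

A real fundus image is far more complex than this, but the mechanism is the same: any structure narrower than about half the filter window is treated as noise.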

Limits of today’s smart filters

In recent years, deep learning methods have become the workhorses of medical image denoising. Convolutional autoencoders, residual networks (ResNets), and specialized denoising CNNs can learn how a “clean” image should look and then subtract the noise from new scans. These models work well, but they come with drawbacks. To capture complex image patterns, they need many layers and millions of parameters, which demand heavy computation and lots of training data. In medical imaging, where labeled datasets are relatively small, such large models can overfit (memorizing training images instead of generalizing) and may still blur thin vessels or tiny lesions.

Bringing quantum ideas into the picture

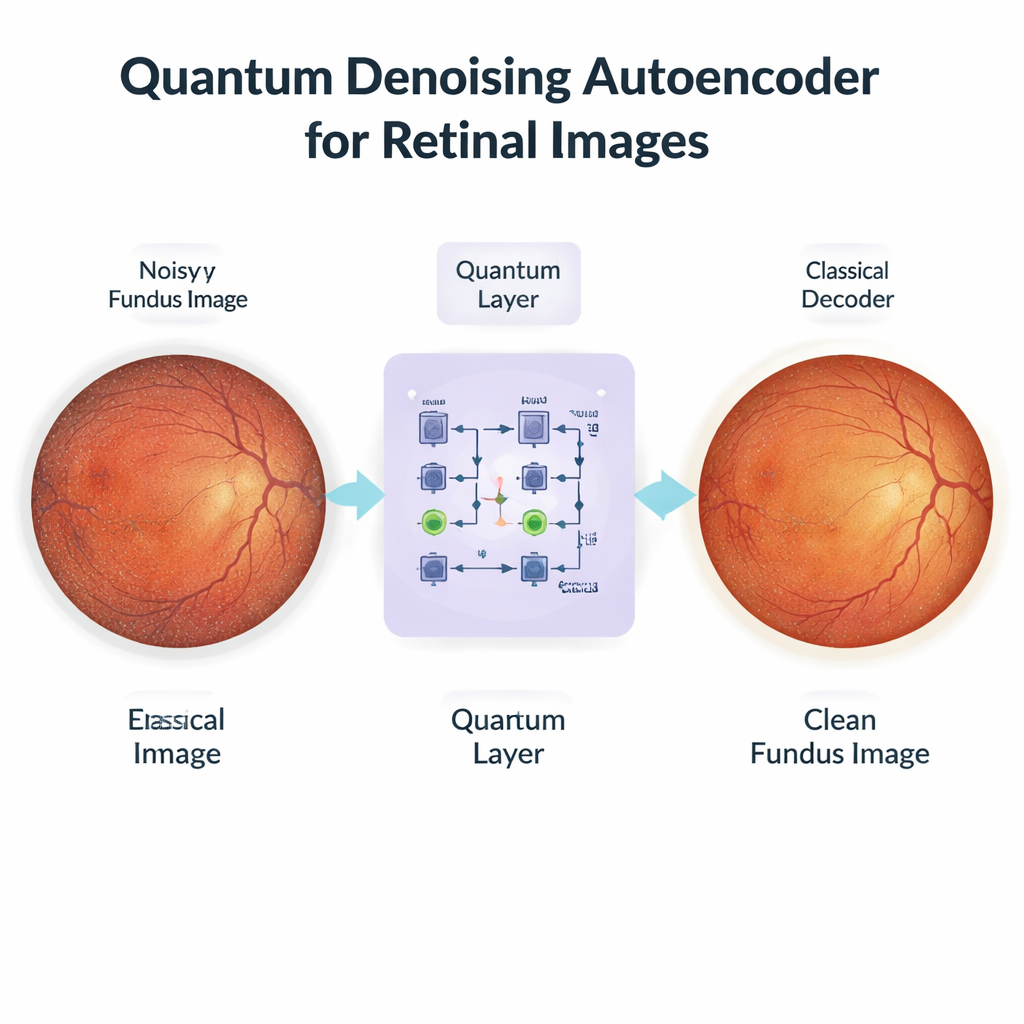

The authors propose a hybrid approach called the Quantum Denoising Autoencoder (QDAE). At first glance it resembles a standard deep learning pipeline: a classical encoder compresses each noisy eye photo into a compact set of features, and a classical decoder later reconstructs a cleaned‑up image. The key twist happens in the middle. Instead of passing features directly through a simple mathematical bottleneck, QDAE converts them into quantum‑style states and processes them with a small, parameterized quantum circuit before converting them back. In physical quantum machines, superposition would allow many feature combinations to be considered at once, and entanglement would link distant parts of the image. Even though this work uses simulated circuits on a regular GPU, the same structure lets the model represent rich, non‑linear relationships between pixels using a relatively small number of trainable parameters.
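To give a feel for what a "small, parameterized quantum circuit" simulated on ordinary hardware might look like, here is a minimal NumPy sketch. It uses the sizes the article reports (four qubits, three layers), but everything else is an assumption made for illustration: the article does not say which gates, encoding, or entangling pattern the authors used. Here, features are loaded as RY rotation angles, each layer applies trainable RY rotations followed by a ring of CNOT gates, and the output is the Pauli‑Z expectation value of each qubit. The function names are hypothetical.

```python
import numpy as np

N_QUBITS, N_LAYERS = 4, 3  # sizes reported in the article

def apply_ry(state, qubit, theta):
    """Apply a single-qubit RY(theta) rotation to one axis of the state tensor."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    gate = np.array([[c, -s], [s, c]])
    state = np.tensordot(gate, state, axes=([1], [qubit]))
    return np.moveaxis(state, 0, qubit)  # put the rotated axis back in place

def apply_cnot(state, control, target):
    """Flip the target qubit wherever the control qubit is 1."""
    state = state.copy()
    idx = [slice(None)] * N_QUBITS
    idx[control] = 1
    # After fixing the control index, the target axis may shift down by one.
    axis = target if target < control else target - 1
    state[tuple(idx)] = np.flip(state[tuple(idx)], axis=axis).copy()
    return state

def expect_z(state, qubit):
    """Pauli-Z expectation value: P(qubit = 0) - P(qubit = 1)."""
    probs = np.abs(state) ** 2
    others = tuple(a for a in range(N_QUBITS) if a != qubit)
    p = probs.sum(axis=others)
    return p[0] - p[1]

def quantum_bottleneck(features, weights):
    """Map 4 input features to 4 outputs through an assumed 3-layer circuit.

    features: 4 angles (assumed angle encoding).
    weights:  array of shape (N_LAYERS, N_QUBITS), the trainable angles.
    """
    state = np.zeros((2,) * N_QUBITS)
    state[(0,) * N_QUBITS] = 1.0            # start in |0000>
    for q in range(N_QUBITS):               # encode features as rotations
        state = apply_ry(state, q, features[q])
    for layer in weights:
        for q in range(N_QUBITS):           # trainable rotations
            state = apply_ry(state, q, layer[q])
        for q in range(N_QUBITS):           # CNOT ring entangles the qubits
            state = apply_cnot(state, q, (q + 1) % N_QUBITS)
    return np.array([expect_z(state, q) for q in range(N_QUBITS)])

rng = np.random.default_rng(0)
weights = rng.uniform(-np.pi, np.pi, size=(N_LAYERS, N_QUBITS))
features = np.array([0.1, 0.7, 1.3, 2.0])   # hypothetical encoder outputs
print(quantum_bottleneck(features, weights))
```

In this sketch the whole quantum step has only 12 trainable angles, which illustrates how such a bottleneck can stay small. In a full model these angles would be trained together with the classical encoder and decoder, a step not shown here.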

Clearer images, tiny vessels preserved

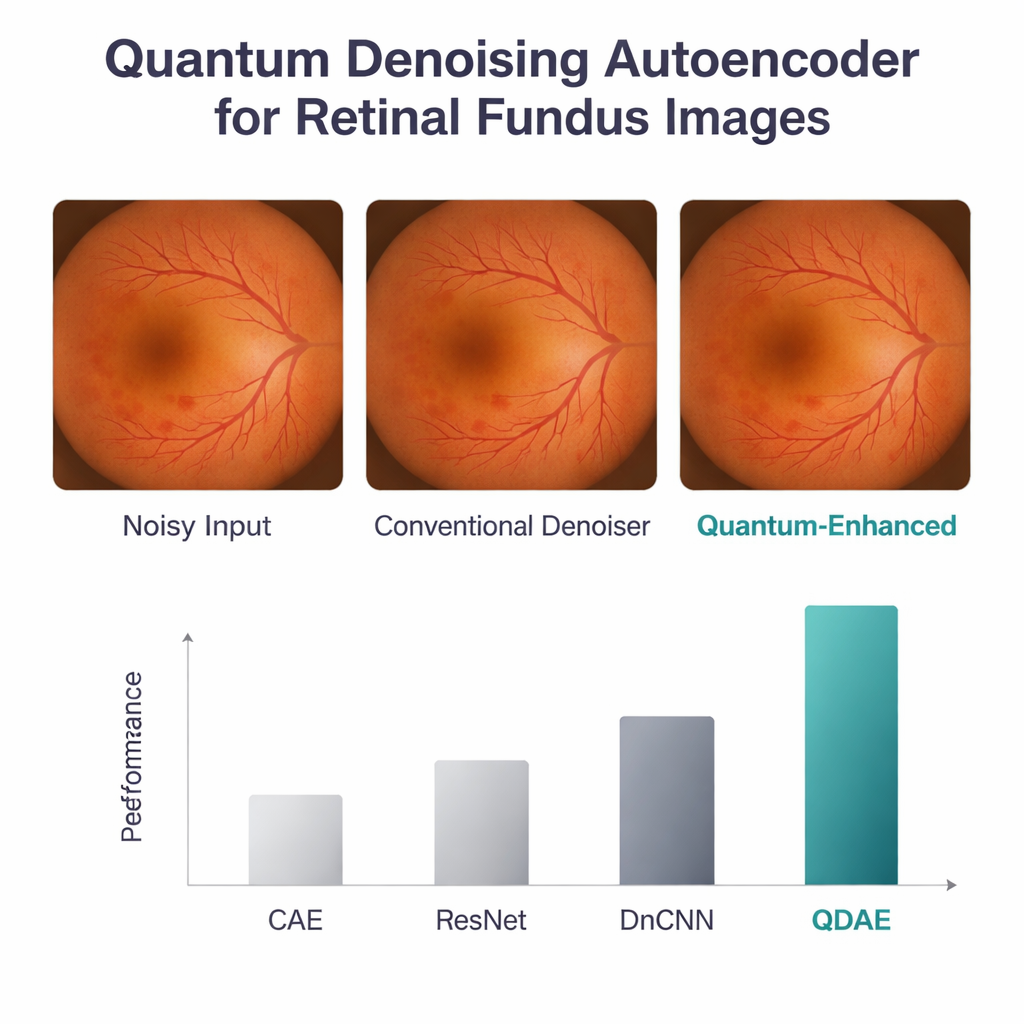

To test QDAE, the researchers used a public dataset of retinal images, scaled to 224×224 pixels and artificially corrupted with realistic Gaussian and speckle noise. They compared their method against three strong baselines: a convolutional autoencoder, a ResNet‑based model, and a popular denoising CNN. All models were trained and evaluated on the same data using standard image quality scores. The quantum‑enhanced model came out ahead on every major measure: it achieved a peak signal‑to‑noise ratio of 38.8 dB and a structural similarity score of 0.96, well above the classical networks. It also better preserved the original intensity and texture patterns in the images, including the brightness and contrast of the optic disc, macula, and fine vessel network. Although the quantum step added a small delay (about half a second per image), the overall computing cost stayed similar to that of deep CNNs thanks to the use of shallow circuits with only four qubits and three layers.
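The test setup described above can be sketched in a few lines of NumPy. The code below shows one common way to add Gaussian (additive) and speckle (multiplicative) noise to an image and to score the result with peak signal‑to‑noise ratio, PSNR = 10·log10(MAX² / MSE). The noise levels, the random stand‑in image, and the function names are assumptions for illustration; the article does not give the paper's exact noise settings.

```python
import numpy as np

def add_gaussian_noise(img, sigma, rng):
    """Additive noise: each pixel gets an independent random offset."""
    noisy = img + rng.normal(0.0, sigma, img.shape)
    return np.clip(noisy, 0.0, 1.0)

def add_speckle_noise(img, sigma, rng):
    """Multiplicative noise: the disturbance scales with pixel brightness."""
    noisy = img * (1.0 + rng.normal(0.0, sigma, img.shape))
    return np.clip(noisy, 0.0, 1.0)

def psnr(reference, test, max_value=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, max_value]."""
    mse = np.mean((reference - test) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)

rng = np.random.default_rng(42)
# Stand-in for a 224x224 grayscale fundus image with values in [0, 1].
clean = rng.uniform(0.2, 0.8, size=(224, 224))

mild = add_gaussian_noise(clean, sigma=0.05, rng=rng)     # assumed noise level
heavy = add_gaussian_noise(clean, sigma=0.20, rng=rng)    # assumed noise level
speckled = add_speckle_noise(clean, sigma=0.10, rng=rng)  # assumed noise level

print("PSNR, mild Gaussian: ", psnr(clean, mild))
print("PSNR, heavy Gaussian:", psnr(clean, heavy))
print("PSNR, speckle:       ", psnr(clean, speckled))
```

Higher PSNR means the test image is closer to the reference. As a sanity check on the scale, a uniform error of 0.1 on a [0, 1] image gives an MSE of 0.01 and therefore a PSNR of 20 dB. The structural similarity score mentioned above is a separate measure that compares local patterns rather than raw pixel error, and it is not computed here.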

What this could mean for patients and clinics

For a person with diabetes, the technical details boil down to a simple benefit: sharper eye photos that make it easier for software and specialists to catch disease early, when treatment can still prevent vision loss. The QDAE acts as a smart pre‑cleaning stage that can plug into existing screening systems, helping downstream tools that segment lesions or grade disease severity. Because the quantum piece is currently simulated, hospitals would not need special quantum hardware to try it, yet the design is ready for future quantum devices as they mature. The study still needs broader clinical testing on images from many clinics and cameras, but it offers an intriguing glimpse of how quantum‑inspired computing could quietly improve routine eye exams and, ultimately, help preserve sight.

Citation: Chilukuri, R., P, P., Gatla, R.K. et al. Quantum denoising autoencoder improves retinal fundus image quality for early diabetic retinopathy screening. Sci Rep 16, 5970 (2026). https://doi.org/10.1038/s41598-026-35540-3

Keywords: diabetic retinopathy, retinal imaging, image denoising, quantum machine learning, medical AI